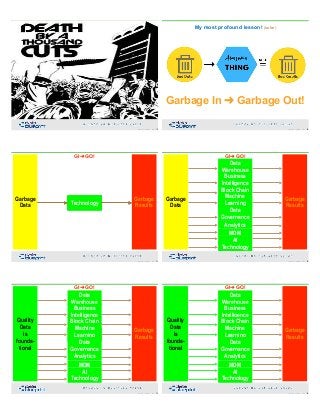

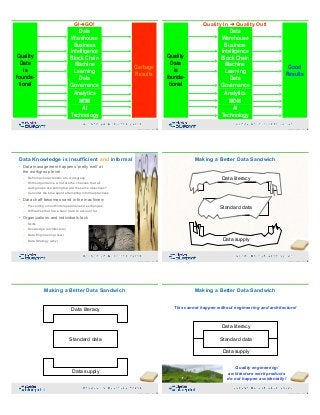

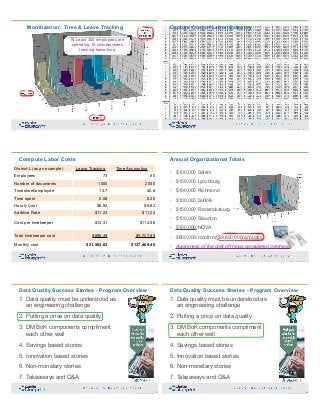

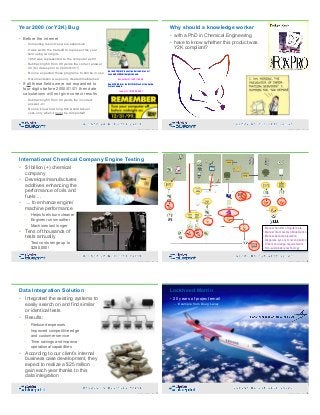

The document presents data quality management as a critical engineering challenge for organizations and links poor data quality to chronic business problems. It outlines strategies for improving data quality, including prioritizing cleaning efforts and aligning data owners with business needs, and uses case studies to illustrate successful implementations. The webinar, hosted by Peter Aiken, aims to teach participants the foundational concepts of data quality and how data quality affects organizational performance.