ContentMine Architecture

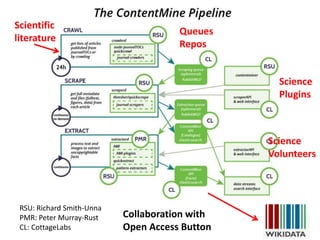

Working diagram slides of key parts of contentmine.org software

Recommended

ContentMine + EPMC: Finding Zika!

Use of ContentMine tools on the Open Access subset of EuropePubMedCentral to discover new knowledge about the Zika virus.

Three slides have embedded movies; these do not show in SlideShare, but a first pass can be seen as a single file at https://vimeo.com/154705161

Can machines understand the scientific literature

With over 5000 scientific articles per day we need machines to help us understand the content. This material is to be used at an interactive session for the Science Society at Trinity College Cambridge UK

Scaling up with Cisco Big Data: Data + Science = Data Science

Discover how IoT data is ingested with real-time technologies such as Apache Spark and analysed using Data Science techniques to glean insights.

Overview of stinger interactive query for hive

Presentation given to the OC Big Data Meetup Group. http://www.meetup.com/OCBigData

Hybrid architecture integrateduserviewdata-peyman_mohajerian

Big Data Camp LA 2014, Hybrid Architecture for Integrated User View of Data of different Temperature and Velocity by Peyman Mohajerian of Teradata

Koalas: How Well Does Koalas Work?

Koalas is an open source project that provides pandas APIs on top of Apache Spark. Pandas is the standard tool for data science and it is typically the first step to explore and manipulate a data set, but pandas does not scale well to big data. Koalas fills the gap by providing pandas equivalent APIs that work on Apache Spark.

Many other libraries also try to scale the pandas APIs, such as Vaex and Modin. Dask is one of them; it is very popular among pandas users and runs on its own cluster, much as Koalas runs on top of a Spark cluster. In this talk, we will introduce Koalas and its current status, and compare Koalas with Dask, including benchmarks.

SF Big Analytics meetup : Hoodie From Uber

Even after a decade, the name "Hadoop" remains synonymous with "big data", even as new options for processing/querying (stream processing, in-memory analytics, interactive SQL) and storage services (S3/Google Cloud/Azure) have emerged and unlocked new possibilities. However, the overall data architecture has become more complex, with more moving parts and specialized systems, leading to duplication of data and strain on usability. In this talk, we argue that by adding some missing blocks to the existing Hadoop stack, we are able to provide similar capabilities right on top of Hadoop, at reduced cost and increased efficiency, greatly simplifying the overall architecture in the process. We will discuss the need for incremental processing primitives on Hadoop, motivating them with some real-world problems from Uber. We will then introduce "Hoodie", an open source Spark library built at Uber, to enable faster data for petabyte-scale data analytics and solve these problems. We will deep dive into the design and implementation of the system and discuss the core concepts around timeline consistency and the tradeoffs between ingest speed and query performance. We contrast Hoodie with similar systems in the space, discuss how it is deployed across the Hadoop ecosystem at Uber, and finally share the technical direction ahead for the project.

Speaker: VINOTH CHANDAR, Staff Software Engineer at Uber

Vinoth is the founding engineer/architect of the data team at Uber, as well as author of many data processing & querying systems at Uber, including "Hoodie". He has keen interest in unified architectures for data analytics and processing.

Previously, Vinoth was the lead on LinkedIn's Voldemort key-value store and has also worked on the Oracle Database replication engine, HPC, and stream processing.

Applied Machine learning using H2O, python and R Workshop

Note: Get all workshop content at - https://github.com/h2oai/h2o-meetups/tree/master/2017_02_22_Seattle_STC_Meetup

Basic knowledge of R/python and general ML concepts

Note: This is a bring-your-own-laptop workshop. Make sure you bring your laptop in order to be able to participate in the workshop.

Level: 200

Time: 2 Hours

Agenda:

- Introduction to ML, H2O and Sparkling Water

- Refresher of data manipulation in R & Python

- Supervised learning

---- Understanding linear regression model with an example

---- Understanding binomial classification with an example

---- Understanding multinomial classification with an example

- Unsupervised learning

---- Understanding k-means clustering with an example

- Using machine learning models in production

- Sparkling Water Introduction & Demo

Large-Scale Data Science in Apache Spark 2.0

Data science is one of the only fields where scalability can lead to fundamentally better results. Scalability allows users to train models on more data or to experiment with more types of models, both of which result in better models. It is no accident that the organizations most successful with AI have been those with huge distributed computing resources. In this talk, Matei Zaharia will describe how Apache Spark is democratizing large-scale data science to make it easier for more organizations to build high-quality data and AI products. Matei Zaharia will talk about the new structured APIs in Spark 2.0 that enable more optimization underneath familiar programming interfaces, as well as libraries to scale up deep learning or traditional machine learning libraries on Apache Spark.

Speaker: Matei Zaharia

Ted Willke, Intel Labs MLconf 2013

Ted Willke, Principal Engineer/GM, Intel Labs: "Avoiding Cluster-Scale Headaches with Better Tools for Data Quality and Feature Engineering"

Drill at the Chicago Hug

This is a project report given to the Chicago Hadoop Users' Group meeting in Chicago on September 19, 2012

Architecture of ContentMine Components contentmine.org

This is the evolving architecture of ContentMine (contentmine.org). It includes an overview (slide #2) showing getpapers, quickscrape, norma and ami.

The key container is the CTree, and the architecture shows where components are added to it or transformed within it.
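The idea of a per-paper container that successive tools add to can be sketched roughly as follows. This is only an illustrative sketch, not ContentMine's actual code: the stage functions, the `run_pipeline` helper, the sample text, and the `results/hits.txt` file are all hypothetical, and the file names `fulltext.xml` and `scholarly.html` are assumed here for illustration. Each stage stands in for the role of a tool (download, normalize, search) and only reads from or writes into the container directory.

```python
import tempfile
from pathlib import Path


def download_stage(ctree: Path) -> None:
    """Hypothetical stand-in for the download role: store raw full text."""
    raw = "<article><body>Zika virus and Aedes aegypti</body></article>"
    (ctree / "fulltext.xml").write_text(raw)


def normalize_stage(ctree: Path) -> None:
    """Hypothetical stand-in for the normalization role: derive HTML from the raw file."""
    raw = (ctree / "fulltext.xml").read_text()
    body = raw.replace("<article><body>", "").replace("</body></article>", "")
    (ctree / "scholarly.html").write_text(f"<html><body><p>{body}</p></body></html>")


def search_stage(ctree: Path, terms: list) -> None:
    """Hypothetical stand-in for the search role: record which terms occur."""
    html = (ctree / "scholarly.html").read_text()
    results = ctree / "results"
    results.mkdir(exist_ok=True)
    hits = [term for term in terms if term in html]
    (results / "hits.txt").write_text("\n".join(hits))


def run_pipeline(ctree: Path, terms: list) -> list:
    """Run the stages in order and list the files the container now holds."""
    ctree.mkdir(parents=True, exist_ok=True)
    download_stage(ctree)
    normalize_stage(ctree)
    search_stage(ctree, terms)
    return sorted(p.relative_to(ctree).as_posix() for p in ctree.rglob("*") if p.is_file())


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(run_pipeline(Path(tmp) / "demo_paper", ["Zika", "Ebola"]))
```

The design point is that stages never pass data to each other directly; every intermediate product lives in the container, so a stage can be rerun or replaced without touching the others.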

These slides are dated and may be out-of-date wrt code. Some diagrams are autogenerated from *.dot files.

Please use http://discuss.contentmine.org/c/software as the main source of up-to-date info. Feel free to ask questions, offer help, critique, etc.

All s/w is Open (BSD, Apache2)

The power of hadoop in business

What is the future of Hadoop?

What is the new future of Hadoop?

How is that different from the old one?

Here is how Ted Dunning answered these questions at the winter Hadoop Conference of Japan 2013.

HUG France - Apache Drill

Talk at Hug FR on December 4, 2012 about the new Apache Drill project. Notably, this talk includes an introduction to the converging specification for the logical plan in Drill.

Metadata and Provenance for ML Pipelines with Hopsworks

This talk describes the scale-out, consistent metadata architecture of Hopsworks and how we use it to support custom metadata and provenance for ML Pipelines with Hopsworks Feature Store, NDB, and ePipe. The talk is here: https://www.youtube.com/watch?v=oPp8PJ9QBnU&feature=emb_logo

Bitkom Cray presentation - on HPC affecting big data analytics in FS

High value analytics in FS are being enabled by Graph, machine learning and Spark technologies. To make these real at production scale HPC technologies are more appropriate than commodity clusters.

An Introduction to Spark with Scala

An Introduction to Spark with Scala | Accionlabs Training | Data Engineering month.

PySpark Cassandra - Amsterdam Spark Meetup

At an Amsterdam Spark Meetup I gave a talk about how to work with Python Spark and Cassandra.

DataEngConf: Parquet at Datadog: Fast, Efficient, Portable Storage for Big Data

By Doug Daniels (Director of Engineering, Datadog)

At Datadog, we collect hundreds of billions of metric data points per day from hosts, services, and customers all over the world. In addition to charting and monitoring this data in real time, we also run many large-scale offline jobs to apply algorithms and compute aggregations on the data. In the past months, we’ve migrated our largest data sets over to Apache Parquet, an efficient, portable columnar storage format.

Predictive Analytics San Diego

The unification of big and little data processing onto a single platform is an important requirement for Hadoop. How can this be achieved? Ted Dunning explains what is needed for three important use cases.

Python redis talk

This is a talk that I gave on July 20, 2012 at the Southern California Python Interest Group meetup at Cross Campus, with food and drinks provided by Graph Effect.

Emerging technologies/frameworks in Big Data

A short overview presentation on emerging technologies/frameworks in Big Data, covering Apache Parquet, Apache Flink, and Apache Drill, with basic concepts of columnar storage and Dremel.

SolrCloud on Hadoop

An overview of building and serving Lucene indexes on a Hadoop cluster with Solr for text and parametric searching, as presented at Cleveland Hadoop User Group on 13 January 2014.

Apache Calcite: A Foundational Framework for Optimized Query Processing Over ...

A talk given at ACM SIGMOD 2018 in support of the paper "Calcite: A Foundational Framework for Optimized Query Processing Over Heterogeneous Data Sources" (https://arxiv.org/abs/1802.10233).

Apache Calcite is a foundational software framework that provides query processing, optimization, and query language support to many popular open-source data processing systems such as Apache Hive, Apache Storm, Apache Flink, Druid, and MapD. Calcite's architecture consists of a modular and extensible query optimizer with hundreds of built-in optimization rules, a query processor capable of processing a variety of query languages, an adapter architecture designed for extensibility, and support for heterogeneous data models and stores (relational, semi-structured, streaming, and geospatial). This flexible, embeddable, and extensible architecture is what makes Calcite an attractive choice for adoption in big-data frameworks. It is an active project that continues to introduce support for the new types of data sources, query languages, and approaches to query processing and optimization.

More from petermurrayrust

Omdi2021 Ontologies for (Materials) Science in the Digital Age

A review of computable ontologies relevant to science and how they can help Materials Science in particular

Open Science Principles and Practice

Talk to the Indian National Young Academy of Scientists on Open Science. Emphasis on Open Notebook and Data Science, and on mining data from journals.

Can machines understand the scientific literature?

A presentation to Cambridge MPhil Computational Biology, 2020-11-11. Presenters: Peter Murray-Rust, Shweata Hegde and Ambreen Hamadani from https://github.com/petermr/openvirus.

This chunk is PMR with a large break in the middle for SH and AH talks.

I cover Global Challenges, knowledge equity, semantics of scientific articles, Wikidata, Data Extraction from images, and ethics/politics.

Answer: Yes, technically. No, politically as the Publisher-Academic Complex will block it.

OpenVirus at OpenPublishingFest

Semantic content created from Open Access papers to help in the fight against viral epidemics. Includes contributions from NIPGR interns, 5 supported by Indian National Young Academy of Scientists.

Open Virus Indian Presentation

Overview of openVirus project. Interns in India have worked for 2 months to extract scientific knowledge from the literature about viral epidemics. Covers data science, machine learning and virtual collaboration

Automatic mining of data from materials science literature

The literature on materials science (batteries, etc.) contains huge amounts of scientific facts, but not in easily accessible form. Our AMI program has been developed to automatically scrape, clean, annotate and display/publish data for re-use in science.

Examples will be given from electrochemistry, magnetism and other fields. The general principles and (open) tech are applicable to many other disciplines.

Climate Change and Human Migration

A presentation by Open Climate Knowledge for European Forum for Advanced Practices. Showing how the scientific literature can be searched for knowledge on this multidisciplinary topic.

openVirus - tools for discovering literature on viruses

Open tools for scraping scientific articles from the scientific literature on a high-throughput

XML for science; its huge potential; but are publishers preventing it?

XML can represent almost all well-defined scientific objects: chemistry, plants, medicine. But it's not yet widely used. Is this because publishers oppose the re-use of science?

Early Career Researchers in Science. Start Early, Be Open, Be Brave

Highlights the importance of supporting Early Career Researchers to pursue their own ideas, possibly alongside their main research. Illustrated with biology but applies to all fields of science. This was a 14 min presentation and shows narratives of how ECRs develop and reinforce each other.

Early Career Researchers and Open Healthcare

Presentation given at NUI, Galway 2019-04-11 for Open Science Week.

An overview of Early Career Researchers, their innovation and contribution towards Open Infrastructure

Rapid biomedical search

The ContentMine system (Open Source) can search EuropePMC and download hundreds of articles in seconds. These can be indexed by AMI dictionaries, allowing rapid evaluation and refinement of the search.

Scientific search for everyone

The scientific and medical literature is a vast resource of knowledge, but it needs turning into semantic FAIR form. The ContentMine can do this and we presented a rapid overview of the potential

Openplant2018 Poster; Semantic searching

A poster presented at OpenPlant 2018 showing how ContentMine dictionaries can enhance the precision and power of search (in this case, for plants).

Extracting science from the archive

A 10-minute talk to lovers of early science (e.g. 1600-1900) at the Royal Society. Archivists, computer vision, and scientific historical metadata are all relevant.

I chose 4 examples of monochrome diagrams that I can extract something from automatically. Some of the methods would scale to larger volumes, e.g. tables for figures, or maps with points.

WikiFactMine: Ontology for Everybody and Everything

WikiFactMine (https://www.wikidata.org/wiki/Wikidata:WikiFactMine) consists of several hundred dictionaries created from Wikidata. They cover everything from science to medicine to geo to arts. Every item has a unique identifier (Q) and normally has several properties (P), creating a series of triples. Using SPARQL, it's possible to create sophisticated queries and run them in seconds.
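The item/property/triple model described above can be illustrated with a small in-memory sketch. It is not WikiFactMine code and does not contact Wikidata: the `match` and `instances_of` helpers are hypothetical, the handful of triples is illustrative only (the identifiers should be checked against Wikidata before being relied on), and the SPARQL string is included purely for comparison and is never executed.

```python
from typing import List, Optional, Tuple

Triple = Tuple[str, str, str]  # (subject item Q, property P, object item Q)

# Illustrative triples only; assumed meanings are noted per line.
TRIPLES: List[Triple] = [
    ("Q42", "P31", "Q5"),       # assumed: Douglas Adams, instance of, human
    ("Q937", "P31", "Q5"),      # assumed: Albert Einstein, instance of, human
    ("Q42", "P106", "Q36180"),  # assumed: Douglas Adams, occupation, writer
]

# Roughly the same question phrased as SPARQL (shown for comparison, not run here).
SPARQL_EQUIVALENT = "SELECT ?item WHERE { ?item wdt:P31 wd:Q5 }"


def match(
    triples: List[Triple],
    subject: Optional[str] = None,
    predicate: Optional[str] = None,
    obj: Optional[str] = None,
) -> List[Triple]:
    """Return triples matching the pattern; None acts as a wildcard."""
    return [
        t
        for t in triples
        if (subject is None or t[0] == subject)
        and (predicate is None or t[1] == predicate)
        and (obj is None or t[2] == obj)
    ]


def instances_of(triples: List[Triple], class_id: str) -> List[str]:
    """List subjects linked to class_id through the 'instance of' property (P31)."""
    return sorted(s for s, _, _ in match(triples, predicate="P31", obj=class_id))


if __name__ == "__main__":
    print(instances_of(TRIPLES, "Q5"))
```

A dictionary in this picture is simply the set of items returned by one such pattern, which is why a single query can produce a reusable term list.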

Disrupting the Publisher-Academic Complex

The Publisher-Academic Complex is a dystopian cycle where academia gives (mega)publishers manuscripts, reviews and money, and the publishers give personal and institutional glory (vanity). This is analysed in its origins, impact and harm. The disruption can come from Advocacy/Activism, Community and Tools. Disruption comes from doing things Better or Novel, not Prices.

AUDIO : https://soundcloud.com/damahub/peter-murray-rust-disturbing-the-publisher-academic-complex-210418-british-library

Thanks to DaMaHub

This has now been edited by Ewan McAndrew (Edinburgh Wikimedian in Residence) many thanks - to synchronize the slides with the soundtrack. https://media.ed.ac.uk/media/1_46h85ltt Brilliant

Paradise Lost and The Right to Read is the Right to Mine

Presented to UIUC CIRSS seminars to a mixed group of Library, CS, domain scientists with a great contingent of Early Career Researchers. Starts by honouring the creation of the wonderful NCSA Mosaic at UIUC in 1993 and the paradise of knowledge and community it opened. Then shows the gradual and tragic decline of the web into a megacorporate neocolonialist empire, where knowledge is sacrificed for money and power.

You have seen many of the slides before but the words are different and have been recorded.

Young people in an Age of Knowledge Neocolonialism

Knowledge is being ruthlessly enclosed by megacorporations.

Recently uploaded

Providing Globus Services to Users of JASMIN for Environmental Data Analysis

JASMIN is the UK’s high-performance data analysis platform for environmental science, operated by STFC on behalf of the UK Natural Environment Research Council (NERC). In addition to its role in hosting the CEDA Archive (NERC’s long-term repository for climate, atmospheric science & Earth observation data in the UK), JASMIN provides a collaborative platform to a community of around 2,000 scientists in the UK and beyond, providing nearly 400 environmental science projects with working space, compute resources and tools to facilitate their work. High-performance data transfer into and out of JASMIN has always been a key feature, with many scientists bringing model outputs from supercomputers elsewhere in the UK, to analyse against observational or other model data in the CEDA Archive. A growing number of JASMIN users are now realising the benefits of using the Globus service to provide reliable and efficient data movement and other tasks in this and other contexts. Further use cases involve long-distance (intercontinental) transfers to and from JASMIN, and collecting results from a mobile atmospheric radar system, pushing data to JASMIN via a lightweight Globus deployment. We provide details of how Globus fits into our current infrastructure, our experience of the recent migration to GCSv5.4, and of our interest in developing use of the wider ecosystem of Globus services for the benefit of our user community.

Globus Compute with IRI Workflows - GlobusWorld 2024

As part of the DOE Integrated Research Infrastructure (IRI) program, NERSC at Lawrence Berkeley National Lab and ALCF at Argonne National Lab are working closely with General Atomics on accelerating the computing requirements of the DIII-D experiment. As part of this work, the team is investigating ways to speed up the time to solution for many different parts of the DIII-D workflow, including how they run jobs on HPC systems. One of these routes is looking at Globus Compute as a way to replace the current method for managing tasks, and we describe a brief proof of concept showing how Globus Compute could help to schedule jobs and be a tool to connect compute at different facilities.

Large Language Models and the End of Programming

Talk by Matt Welsh at Craft Conference 2024 on the impact that Large Language Models will have on the future of software development. In this talk, I discuss the ways in which LLMs will impact the software industry, from replacing human software developers with AI, to replacing conventional software with models that perform reasoning, computation, and problem-solving.

OpenMetadata Community Meeting - 5th June 2024

The OpenMetadata Community Meeting was held on June 5th, 2024. In this meeting, we discussed the data quality capabilities that are integrated with the Incident Manager, providing a complete solution to handle your data observability needs. Watch the end-to-end demo of the data quality features.

* How to run your own data quality framework

* What is the performance impact of running data quality frameworks

* How to run the test cases in your own ETL pipelines

* How the Incident Manager is integrated

* Get notified with alerts when test cases fail

Watch the meeting recording here - https://www.youtube.com/watch?v=UbNOje0kf6E

Introducing Crescat - Event Management Software for Venues, Festivals and Eve...

Crescat is industry-trusted event management software, built by event professionals for event professionals. Founded in 2017, we have three key products tailored for the live event industry.

Crescat Event for concert promoters and event agencies. Crescat Venue for music venues, conference centers, wedding venues, concert halls and more. And Crescat Festival for festivals, conferences and complex events.

With a wide range of popular features such as event scheduling, shift management, volunteer and crew coordination, artist booking and much more, Crescat is designed for customisation and ease-of-use.

Over 125,000 events have been planned in Crescat and with hundreds of customers of all shapes and sizes, from boutique event agencies through to international concert promoters, Crescat is rigged for success. What's more, we highly value feedback from our users and we are constantly improving our software with updates, new features and improvements.

If you plan events, run a venue or produce festivals and you're looking for ways to make your life easier, then we have a solution for you. Try our software for free or schedule a no-obligation demo with one of our product specialists today at crescat.io

Lecture 1 Introduction to games development

Software engineering

Game Development

unity Game Development

Top Features to Include in Your Winzo Clone App for Business Growth (4).pptx

Discover the essential features to incorporate in your Winzo clone app to boost business growth, enhance user engagement, and drive revenue. Learn how to create a compelling gaming experience that stands out in the competitive market.

APIs for Browser Automation (MoT Meetup 2024)

APIs for Browser Automation:

Selenium, Cypress, Puppeteer, and Playwright. Ministry of Testing Athens. Meetup on the Beach. 30 May 2024.

Introduction to Pygame (Lecture 7 Python Game Development)

Introduction to Pygame (Lecture 7 Python Game Development)

Essentials of Automations: The Art of Triggers and Actions in FME

In this second installment of our Essentials of Automations webinar series, we’ll explore the landscape of triggers and actions, guiding you through the nuances of authoring and adapting workspaces for seamless automations. Gain an understanding of the full spectrum of triggers and actions available in FME, empowering you to enhance your workspaces for efficient automation.

We’ll kick things off by showcasing the most commonly used event-based triggers, introducing you to various automation workflows like manual triggers, schedules, directory watchers, and more. Plus, see how these elements play out in real scenarios.

Whether you’re tweaking your current setup or building from the ground up, this session will arm you with the tools and insights needed to transform your FME usage into a powerhouse of productivity. Join us to discover effective strategies that simplify complex processes, enhancing your productivity and transforming your data management practices with FME. Let’s turn complexity into clarity and make your workspaces work wonders!

AI Pilot Review: The World’s First Virtual Assistant Marketing Suite

AI Pilot Review: The World’s First Virtual Assistant Marketing Suite

👉👉 Click Here To Get More Info 👇👇

https://sumonreview.com/ai-pilot-review/

AI Pilot Review: Key Features

✅Deploy AI expert bots in Any Niche With Just A Click

✅With one keyword, generate complete funnels, websites, landing pages, and more.

✅More than 85 AI features are included in the AI pilot.

✅No setup or configuration; use your voice (like Siri) to do whatever you want.

✅You Can Use AI Pilot To Create your version of AI Pilot And Charge People For It…

✅ZERO Manual Work With AI Pilot. Never write, Design, Or Code Again.

✅ZERO Limits On Features Or Usages

✅Use Our AI-powered Traffic To Get Hundreds Of Customers

✅No Complicated Setup: Get Up And Running In 2 Minutes

✅99.99% Up-Time Guaranteed

✅30 Days Money-Back Guarantee

✅ZERO Upfront Cost

See My Other Reviews Article:

(1) TubeTrivia AI Review: https://sumonreview.com/tubetrivia-ai-review

(2) SocioWave Review: https://sumonreview.com/sociowave-review

(3) AI Partner & Profit Review: https://sumonreview.com/ai-partner-profit-review

(4) AI Ebook Suite Review: https://sumonreview.com/ai-ebook-suite-review

Game Development with Unity3D (Game Development lecture 3)

Game Development with Unity3D (Game Development lecture 3)

Text-Summarization-of-Breaking-News-Using-Fine-tuning-BART-Model.pptx

Text summarization of braking news using fine tuning BART model

Innovating Inference - Remote Triggering of Large Language Models on HPC Clus...

Large Language Models (LLMs) are currently the center of attention in the tech world, particularly for their potential to advance research. In this presentation, we'll explore a straightforward and effective method for quickly initiating inference runs on supercomputers using the vLLM tool with Globus Compute, specifically on the Polaris system at ALCF. We'll begin by briefly discussing the popularity and applications of LLMs in various fields. Following this, we will introduce the vLLM tool, and explain how it integrates with Globus Compute to efficiently manage LLM operations on Polaris. Attendees will learn the practical aspects of setting up and remotely triggering LLMs from local machines, focusing on ease of use and efficiency. This talk is ideal for researchers and practitioners looking to leverage the power of LLMs in their work, offering a clear guide to harnessing supercomputing resources for quick and effective LLM inference.

Climate Science Flows: Enabling Petabyte-Scale Climate Analysis with the Eart...

The Earth System Grid Federation (ESGF) is a global network of data servers that archives and distributes the planet’s largest collection of Earth system model output for thousands of climate and environmental scientists worldwide. Many of these petabyte-scale data archives are located in proximity to large high-performance computing (HPC) or cloud computing resources, but the primary workflow for data users consists of transferring data, and applying computations on a different system. As a part of the ESGF 2.0 US project (funded by the United States Department of Energy Office of Science), we developed pre-defined data workflows, which can be run on-demand, capable of applying many data reduction and data analysis to the large ESGF data archives, transferring only the resultant analysis (ex. visualizations, smaller data files). In this talk, we will showcase a few of these workflows, highlighting how Globus Flows can be used for petabyte-scale climate analysis.

Enterprise Software Development with No Code Solutions.pptx

In the ever-evolving landscape of technology, enterprise software development is undergoing a significant transformation. Traditional coding methods are being challenged by innovative no-code solutions, which promise to streamline and democratize the software development process.

This shift is particularly impactful for enterprises, which require robust, scalable, and efficient software to manage their operations. In this article, we will explore the various facets of enterprise software development with no-code solutions, examining their benefits, challenges, and the future potential they hold.

Quarkus Hidden and Forbidden Extensions

Quarkus has a vast extension ecosystem and is known for its subsonic and subatomic feature set. Some of these features are not as well known, and some extensions are less talked about, but that does not make them less interesting - quite the opposite.

Come join this talk to see some tips and tricks for using Quarkus and some of the lesser known features, extensions and development techniques.

AI Fusion Buddy Review: Brand New, Groundbreaking Gemini-Powered AI App

AI Fusion Buddy Review: Brand New, Groundbreaking Gemini-Powered AI App

👉👉 Click Here To Get More Info 👇👇

https://sumonreview.com/ai-fusion-buddy-review

AI Fusion Buddy Review: Key Features

✅Create Stunning AI App Suite Fully Powered By Google's Latest AI technology, Gemini

✅Use Gemini to Build high-converting Converting Sales Video Scripts, ad copies, Trending Articles, blogs, etc.100% unique!

✅Create Ultra-HD graphics with a single keyword or phrase that commands 10x eyeballs!

✅Fully automated AI articles bulk generation!

✅Auto-post or schedule stunning AI content across all your accounts at once—WordPress, Facebook, LinkedIn, Blogger, and more.

✅With one keyword or URL, generate complete websites, landing pages, and more…

✅Automatically create & sell AI content, graphics, websites, landing pages, & all that gets you paid non-stop 24*7.

✅Pre-built High-Converting 100+ website Templates and 2000+ graphic templates logos, banners, and thumbnail images in Trending Niches.

✅Say goodbye to wasting time logging into multiple Chat GPT & AI Apps once & for all!

✅Save over $5000 per year and kick out dependency on third parties completely!

✅Brand New App: Not available anywhere else!

✅ Beginner-friendly!

✅ZERO upfront cost or any extra expenses

✅Risk-Free: 30-Day Money-Back Guarantee!

✅Commercial License included!

See My Other Reviews Article:

(1) AI Genie Review: https://sumonreview.com/ai-genie-review

(2) SocioWave Review: https://sumonreview.com/sociowave-review

(3) AI Partner & Profit Review: https://sumonreview.com/ai-partner-profit-review

(4) AI Ebook Suite Review: https://sumonreview.com/ai-ebook-suite-review

#AIFusionBuddyReview,

#AIFusionBuddyFeatures,

#AIFusionBuddyPricing,

#AIFusionBuddyProsandCons,

#AIFusionBuddyTutorial,

#AIFusionBuddyUserExperience

#AIFusionBuddyforBeginners,

#AIFusionBuddyBenefits,

#AIFusionBuddyComparison,

#AIFusionBuddyInstallation,

#AIFusionBuddyRefundPolicy,

#AIFusionBuddyDemo,

#AIFusionBuddyMaintenanceFees,

#AIFusionBuddyNewbieFriendly,

#WhatIsAIFusionBuddy?,

#HowDoesAIFusionBuddyWorks

openEuler Case Study - The Journey to Supply Chain Security

openEuler Case Study - The Journey to Supply Chain Security

Mobile App Development Company In Noida | Drona Infotech

Looking for a reliable mobile app development company in Noida? Look no further than Drona Infotech. We specialize in creating customized apps for your business needs.

Visit Us For : https://www.dronainfotech.com/mobile-application-development/

ContentMine Architecture

- 1. RSU: Richard Smith-Unna PMR: Peter Murray-Rust CL: CottageLabs Queues Repos Scientific literature Science Plugins Science Volunteers Collaboration with Open Access Button

- 2. quickscrape Crawl Feed Norma Index & Transform PDF XML URL DOI Scientific literature Repositories DOC CSV sHTML Plugins Regex Sequences Species Bespoke Scrapers XPath Per-Journal Taggers Per-Journal Metadata Chemistry Phylogenetics Farming AMI BadHTML OCR Diagrams Open NORMA-lized Scientific Literature + Facts CANARY pipeline CAT-alogue index

- 4. Starting points
  - Search/Crawl/Feed -> PMCID, DOI, URL -> quickscrape -> CMDir(PDF, HTML, XML, images/, meta) -> Norma -> CMDir(sHTML|TXT|SVG) (good)
  - PDF, XML, HTML -> Norma -> CMDir(PDF, rawHTML, TXT, images/, meta?) -> NormaOCR -> CMDir(sHTML, TXT, SVG) (variable)
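
The two starting routes on slide 4 can be written down as plain data. This is a minimal Python sketch, not ContentMine code: `Stage`, `Route` and `describe` are hypothetical names introduced here, and the tool names, CMDir contents and quality labels are copied from the slide.

```python
from typing import NamedTuple


class Stage(NamedTuple):
    tool: str      # tool named on the slide
    cmdir: tuple   # what the slide lists inside CMDir(...) after this tool


class Route(NamedTuple):
    start: str     # where the route begins
    stages: tuple  # ordered Stage entries
    quality: str   # the slide's own label: "good" or "variable"


ROUTES = (
    Route(
        "Search/Crawl/Feed -> PMCID, DOI, URL",
        (
            Stage("quickscrape", ("PDF", "HTML", "XML", "images/", "meta")),
            Stage("Norma", ("sHTML", "TXT", "SVG")),
        ),
        "good",
    ),
    Route(
        "PDF, XML, HTML",
        (
            Stage("Norma", ("PDF", "rawHTML", "TXT", "images/", "meta?")),
            Stage("NormaOCR", ("sHTML", "TXT", "SVG")),
        ),
        "variable",
    ),
)


def describe(route):
    """Render a route as one arrow-separated line, mirroring the slide."""
    steps = " -> ".join(
        f"{s.tool} -> CMDir({', '.join(s.cmdir)})" for s in route.stages
    )
    return f"{route.start} -> {steps} [{route.quality}]"


for route in ROUTES:
    print(describe(route))
```

Both routes end with the same CMDir contents (sHTML, TXT, SVG); per the slide, they differ in where they start and in the expected quality of the result.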

- 5. Conversions
  - Paper -> Scanned -> TIFF (avoid)
  - PDF, TIFF, PNG -> Tesseract-N -> HTML, SVG (fast, variable)
  - PDF -> PDF2SVG-N -> sHTML, SVG, images/ (slow, accurate-ish)
  - PDF -> PDF2TXT-N -> TXT (fast, variable)
  - PDF -> PDF2Image-N -> PNG (fast, accurate)
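
The conversion routes on slide 5 read naturally as a lookup table. The following is a minimal Python sketch under that reading, not part of the ContentMine tools: `Conversion` and `routes_for` are hypothetical names, while the tool names, formats and speed/accuracy notes are taken from the slide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Conversion:
    tool: str
    inputs: tuple
    outputs: tuple
    note: str  # speed/accuracy remark from the slide


CONVERSIONS = (
    Conversion("Tesseract-N", ("PDF", "TIFF", "PNG"), ("HTML", "SVG"), "fast, variable"),
    Conversion("PDF2SVG-N", ("PDF",), ("sHTML", "SVG", "images/"), "slow, accurate-ish"),
    Conversion("PDF2TXT-N", ("PDF",), ("TXT",), "fast, variable"),
    Conversion("PDF2Image-N", ("PDF",), ("PNG",), "fast, accurate"),
)


def routes_for(source, target):
    """Return every listed conversion that accepts `source` and emits `target`."""
    return [c for c in CONVERSIONS if source in c.inputs and target in c.outputs]


for c in routes_for("PDF", "SVG"):
    print(f"{c.tool}: {c.note}")
```

Asking for PDF to SVG returns both Tesseract-N and PDF2SVG-N, which makes the slide's trade-off explicit: one is fast but variable, the other slow but more accurate.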

- 6. Raw HTML Not wellformed Bad character semantics ScholarlyHTML Well-formed XHTML PNG Tagged Sections Captioned Figures Tables Captioned Tables XML HtmlTidy Jsoup HtmlUnit XSLT1/2 XSLT1/2 NORMA Per-journal Stylesheets

- 7. End points
  - Norma -> CMDir(OpenSHTML-SVG)
  - Norma -> CMDir(sHTML. sections) -> AMI -> (all text + species, chemistry, sequences)
  - Norma -> CMDir(TXT (unsectioned)) -> AMI -> bagOfWords, regex,
  - Norma -> CMDir(PNG) -> AMI -> phylo, bar/xy-plots,
  - Norma -> CMDir(SVG) -> AMI -> phylo, bar/xy-plots, chemistry
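
The end points on slide 7 pair each normalized format with the extractions AMI can run on it. A minimal Python sketch of that pairing follows; `AMI_ENDPOINTS` and `formats_supporting` are hypothetical names, and the entries are copied from the slide, whose trailing commas suggest that some lists may be incomplete. The first end point, CMDir(OpenSHTML-SVG), has no AMI step on the slide and is left out.

```python
# Normalized format (Norma output) -> extractions the slide lists for AMI.
AMI_ENDPOINTS = {
    "sHTML": ["all text", "species", "chemistry", "sequences"],
    "TXT": ["bagOfWords", "regex"],
    "PNG": ["phylo", "bar/xy-plots"],
    "SVG": ["phylo", "bar/xy-plots", "chemistry"],
}


def formats_supporting(extraction):
    """List the normalized formats from which `extraction` is available."""
    return sorted(
        fmt for fmt, extractions in AMI_ENDPOINTS.items() if extraction in extractions
    )


print(formats_supporting("chemistry"))
print(formats_supporting("phylo"))
```

Inverting the table this way shows which inputs are worth producing for a given kind of fact: on this reading, chemistry can come from sectioned sHTML or from SVG, while phylogenetic trees come from the image formats.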

- 8. PDF Non-Unicode Pixel glyphs No words No structures ScholarlyHTML SVG High-level graphics PDF2SVG characters Sentences Paras tables PNG OCR Tagged Sections SVGBuilder Captioned Figures NORMA XSLT1/2

- 9. NORMALIZE Norma Convert PDF,XML To sHTML Tag sections Normalized Scientific Literature AMI Index Transform Extract Search PDF2SVG XSL stylesheets Taggers normalization Parameters “Permanent” Filestore Temporary Filestore Extracted facts indexes Plugins Regex