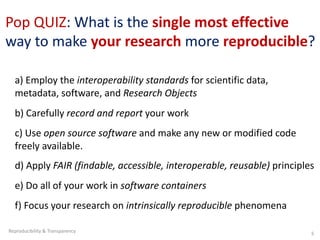

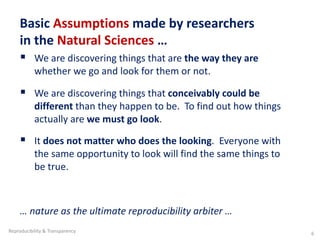

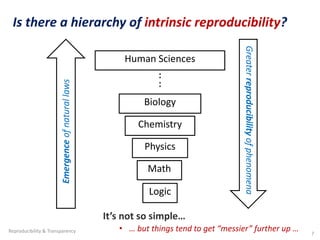

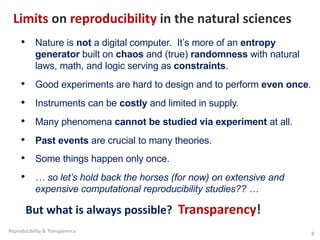

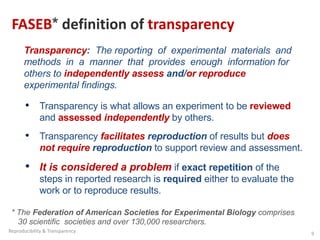

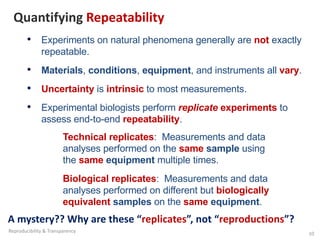

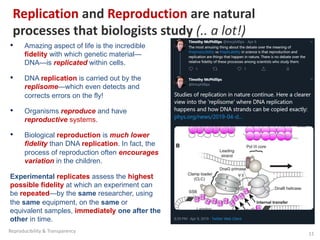

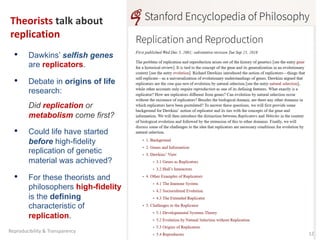

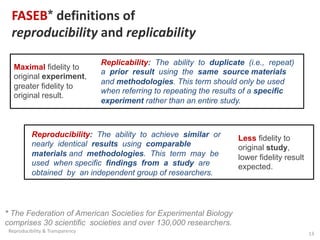

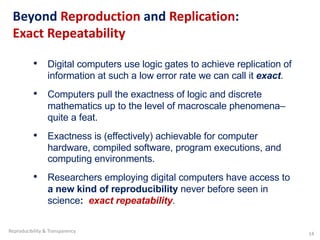

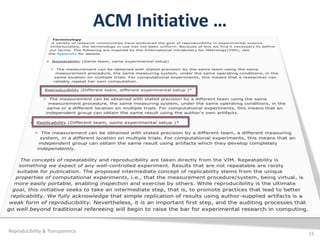

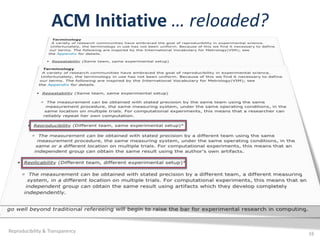

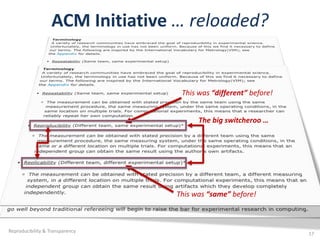

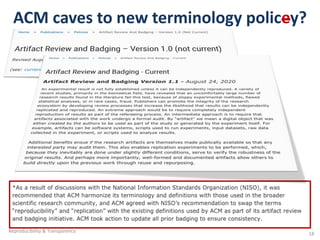

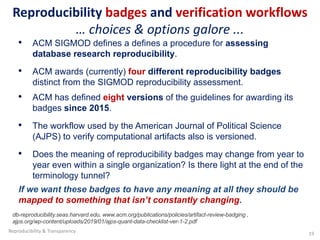

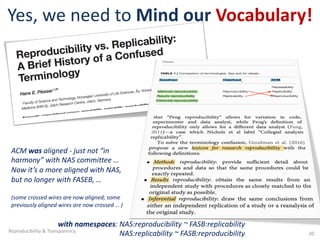

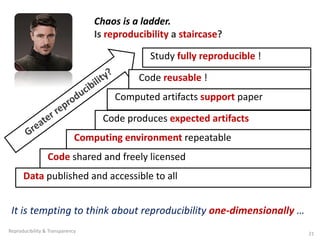

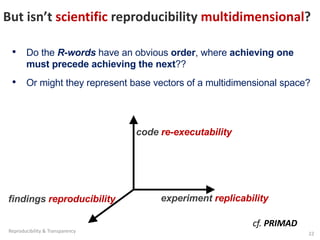

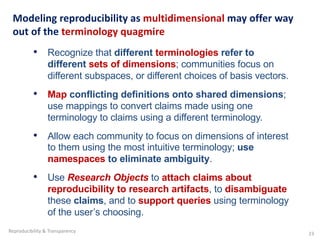

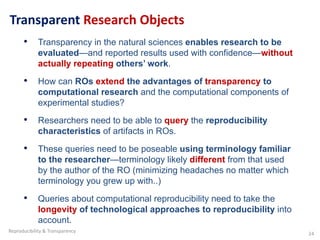

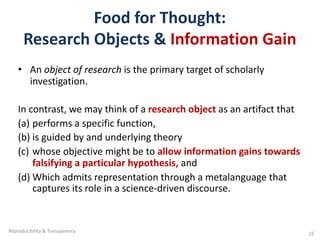

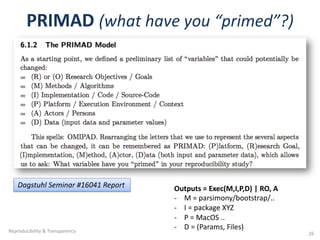

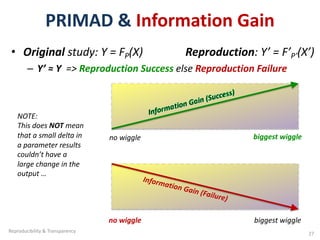

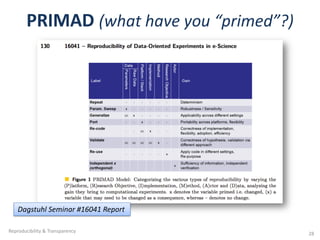

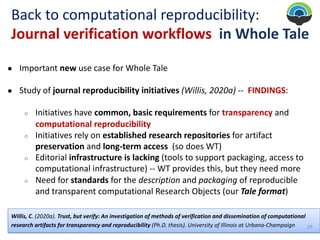

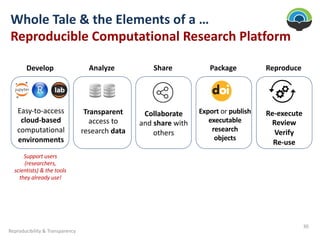

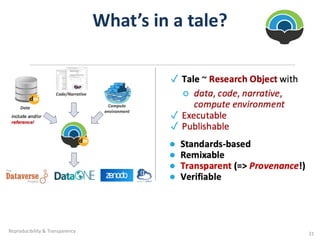

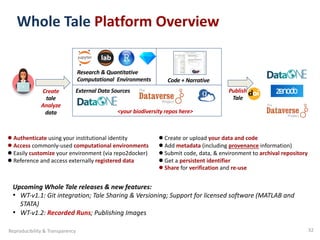

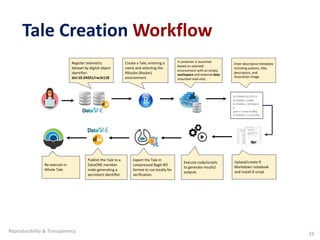

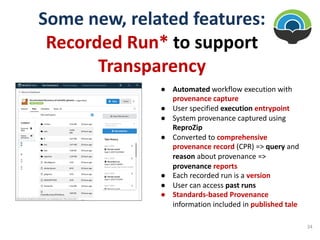

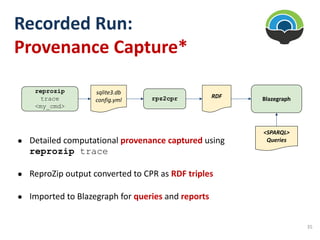

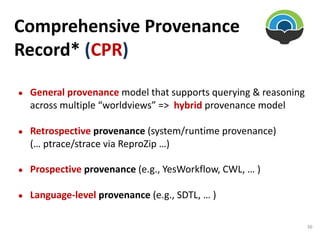

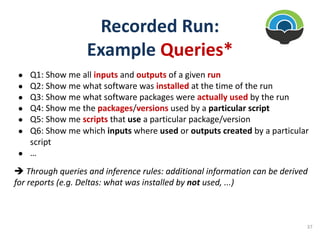

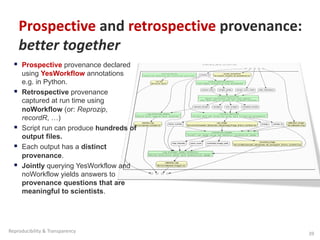

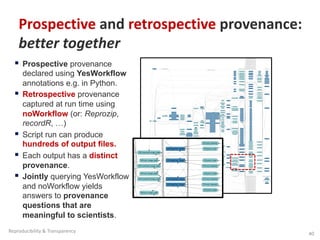

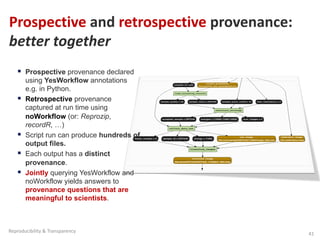

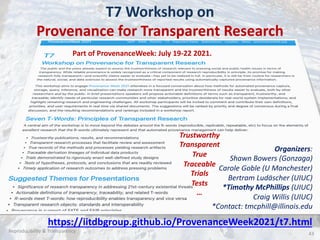

The document discusses computational reproducibility and transparency in research, emphasizing the FAIR (Findable, Accessible, Interoperable, Reusable) data principles and proper reporting as responses to the reproducibility crisis in science. It distinguishes among reproducibility, replicability, and exact repeatability, and advocates the use of transparent research objects to facilitate reproducibility and improve access to research data. It also proposes a multidimensional approach to understanding reproducibility and argues that provenance in computational research needs to be captured and made queryable.