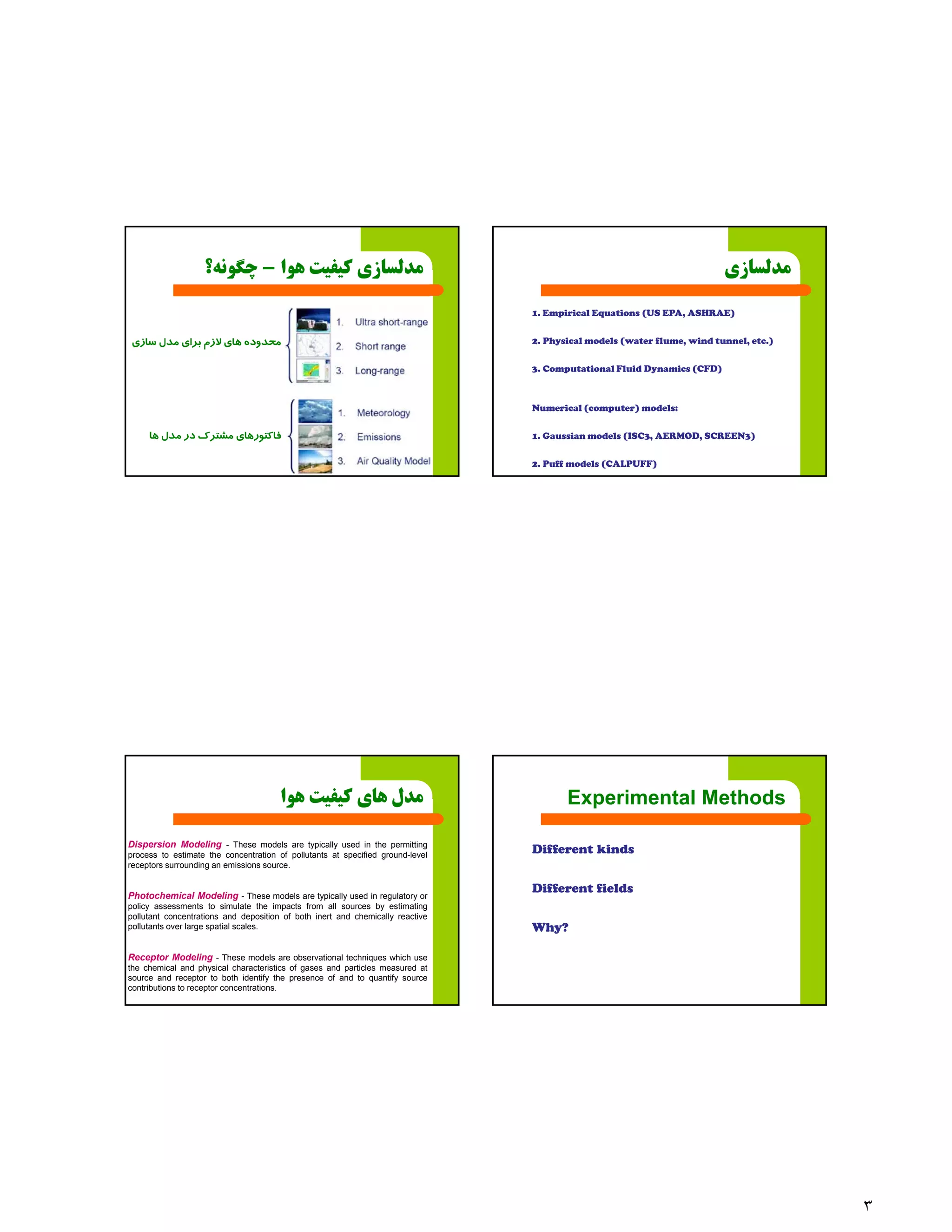

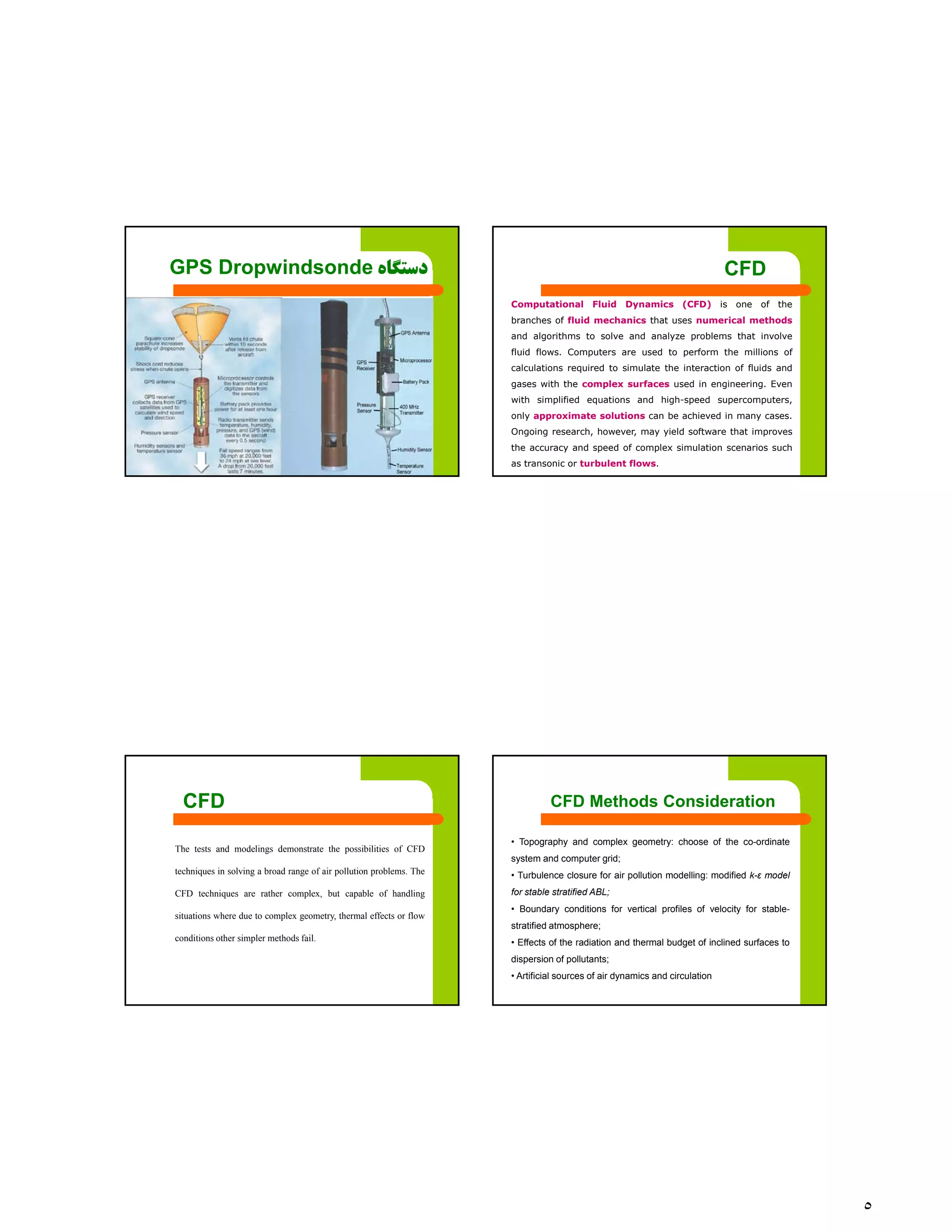

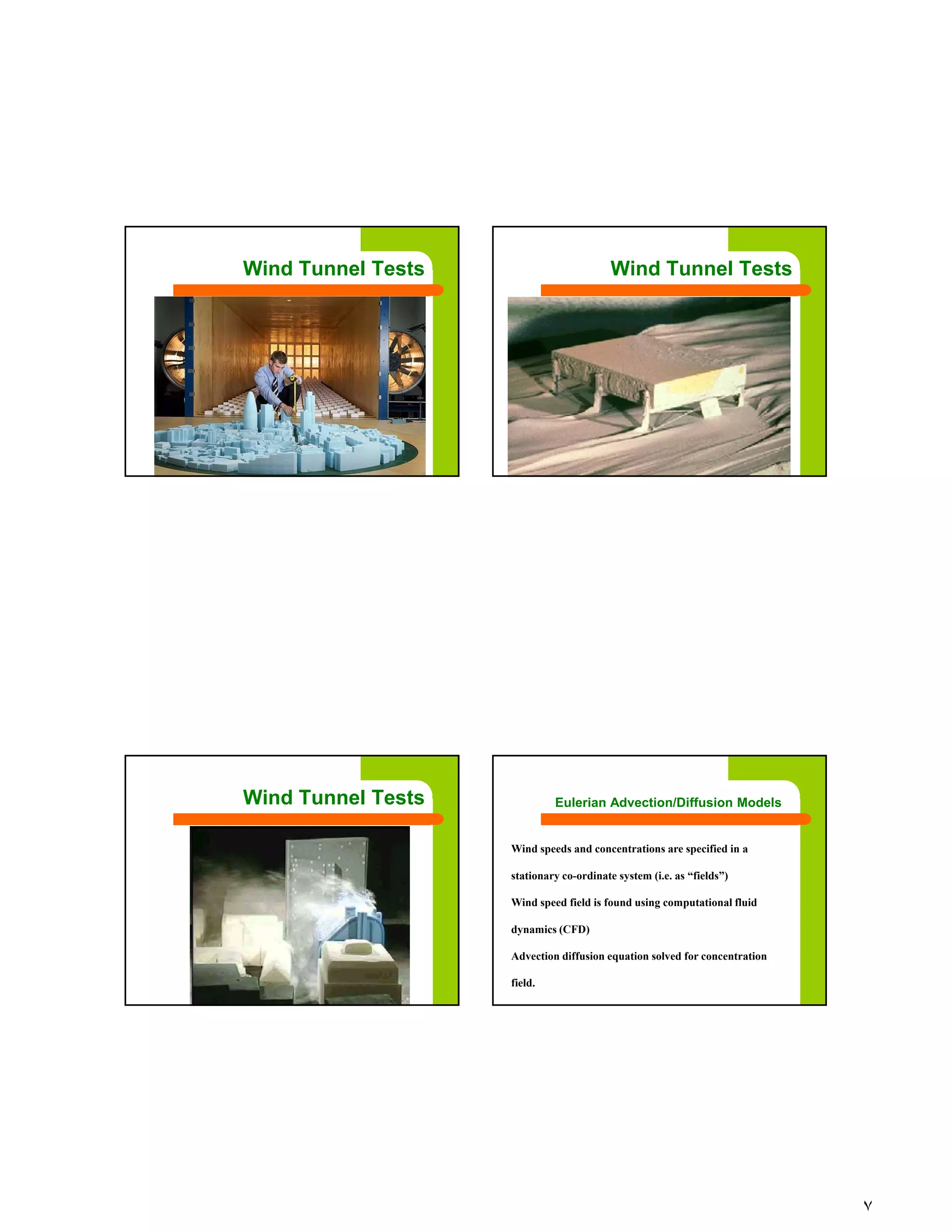

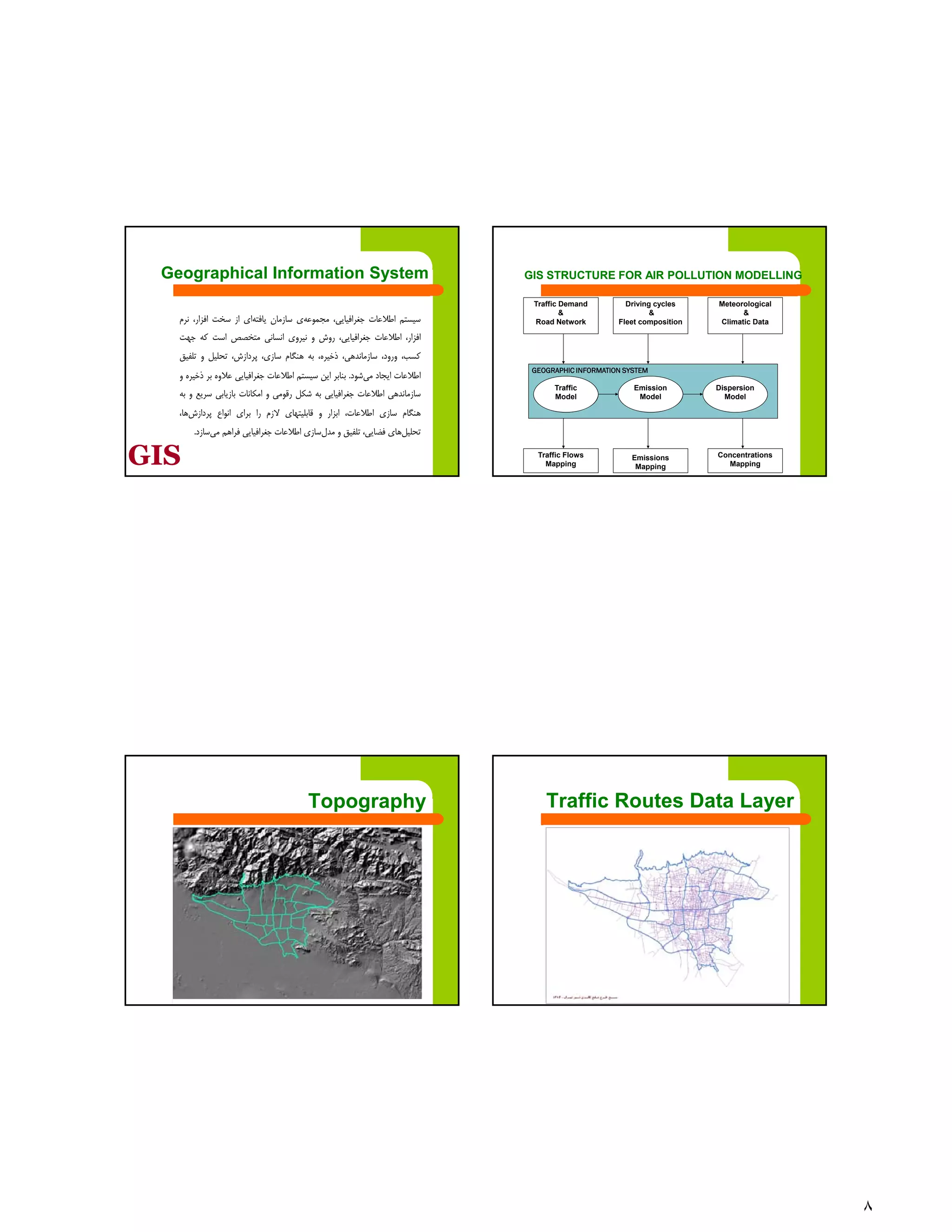

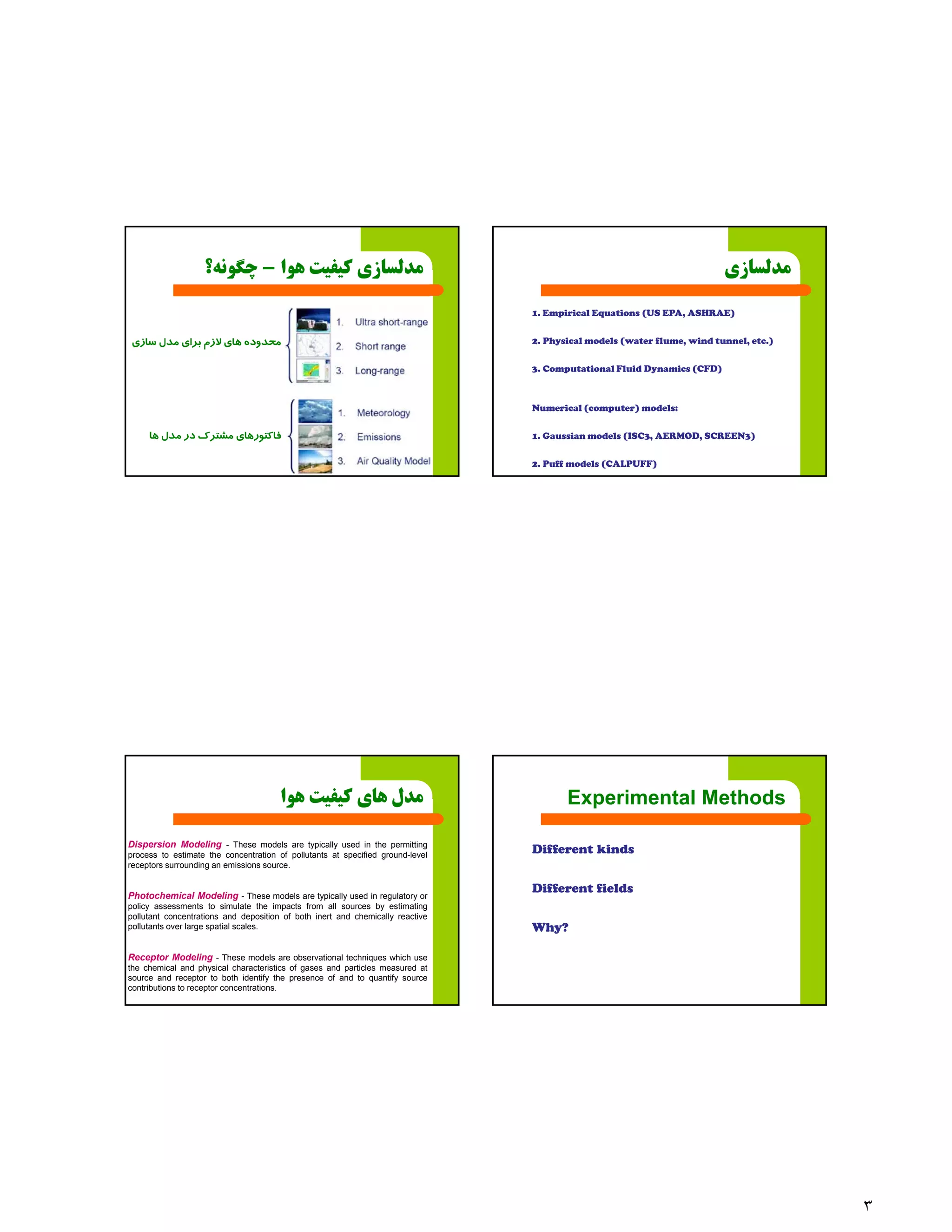

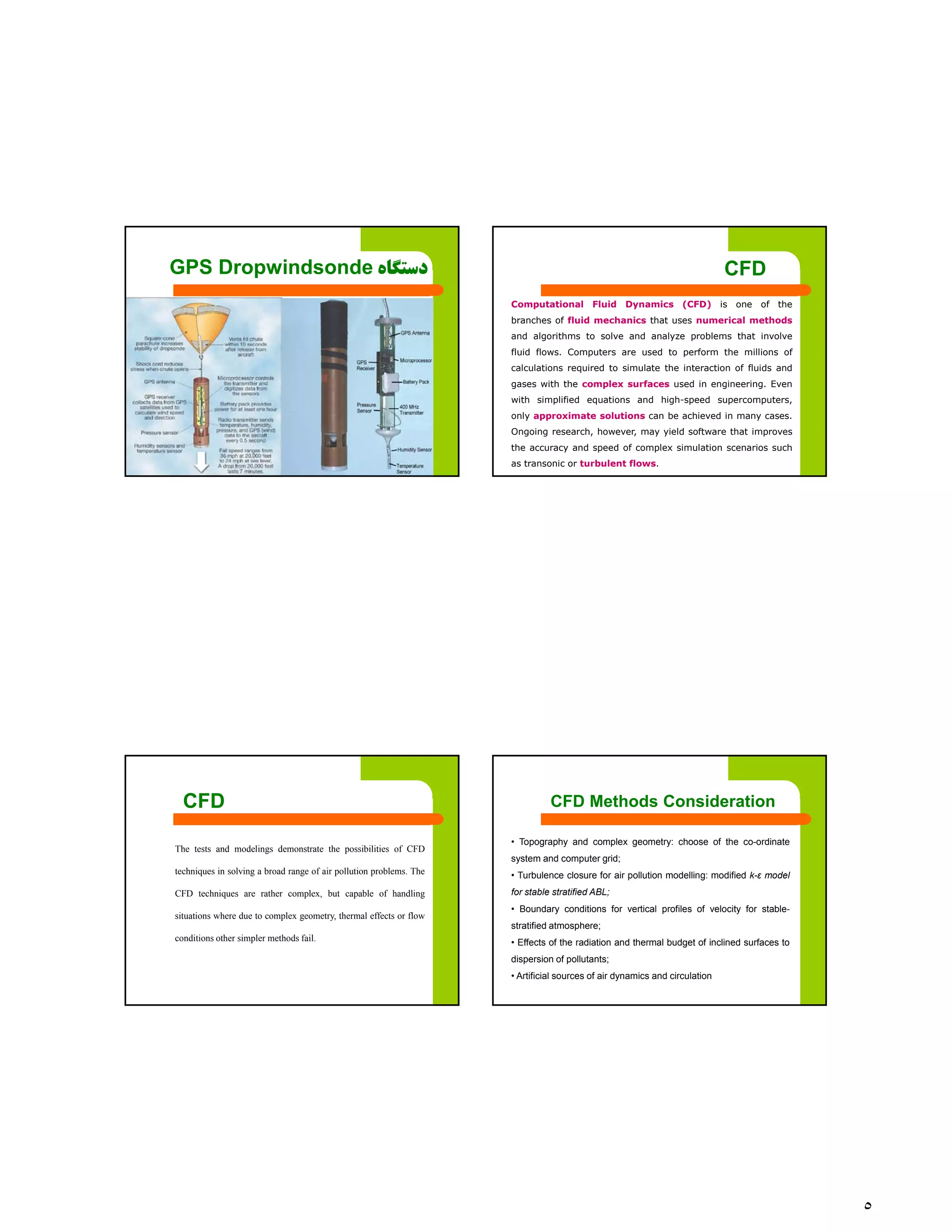

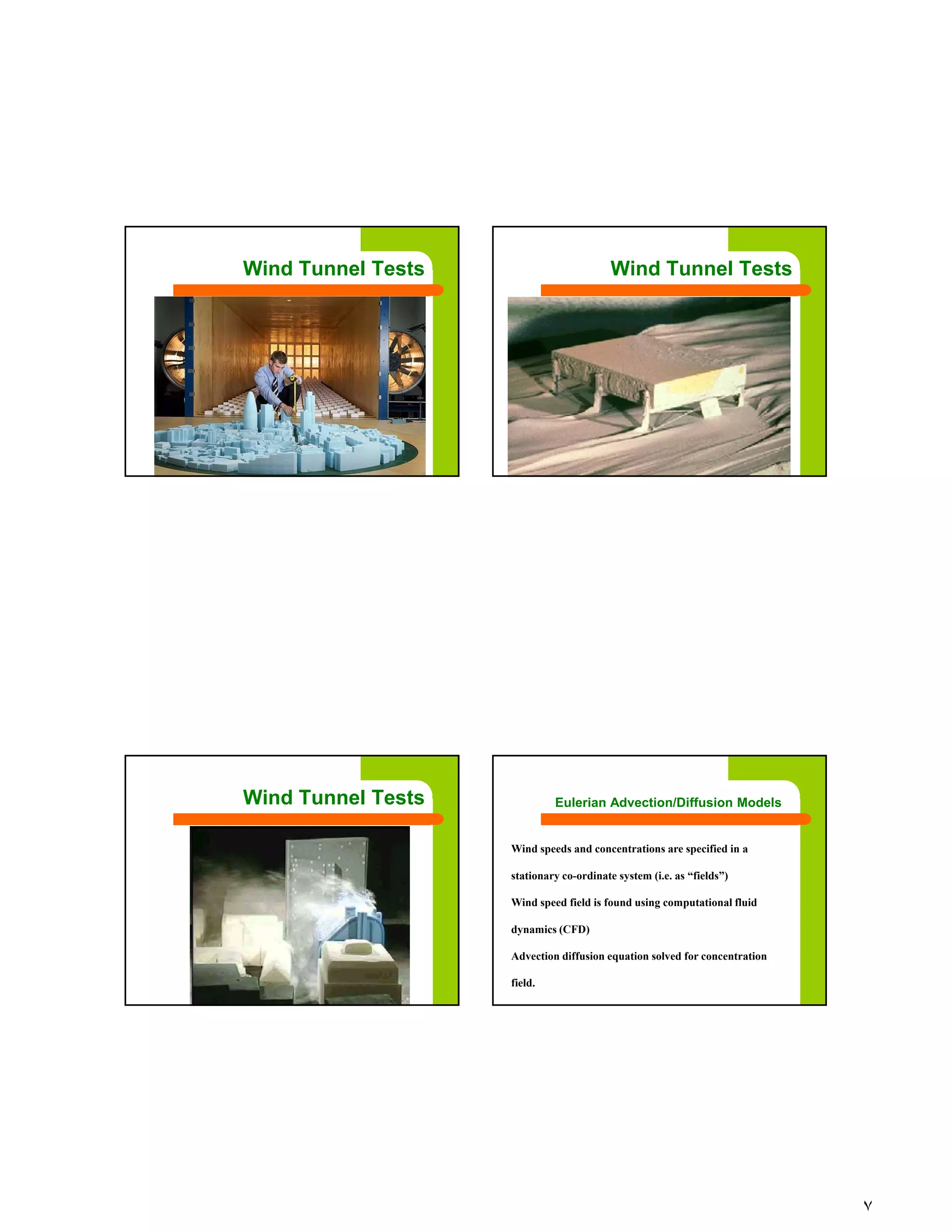

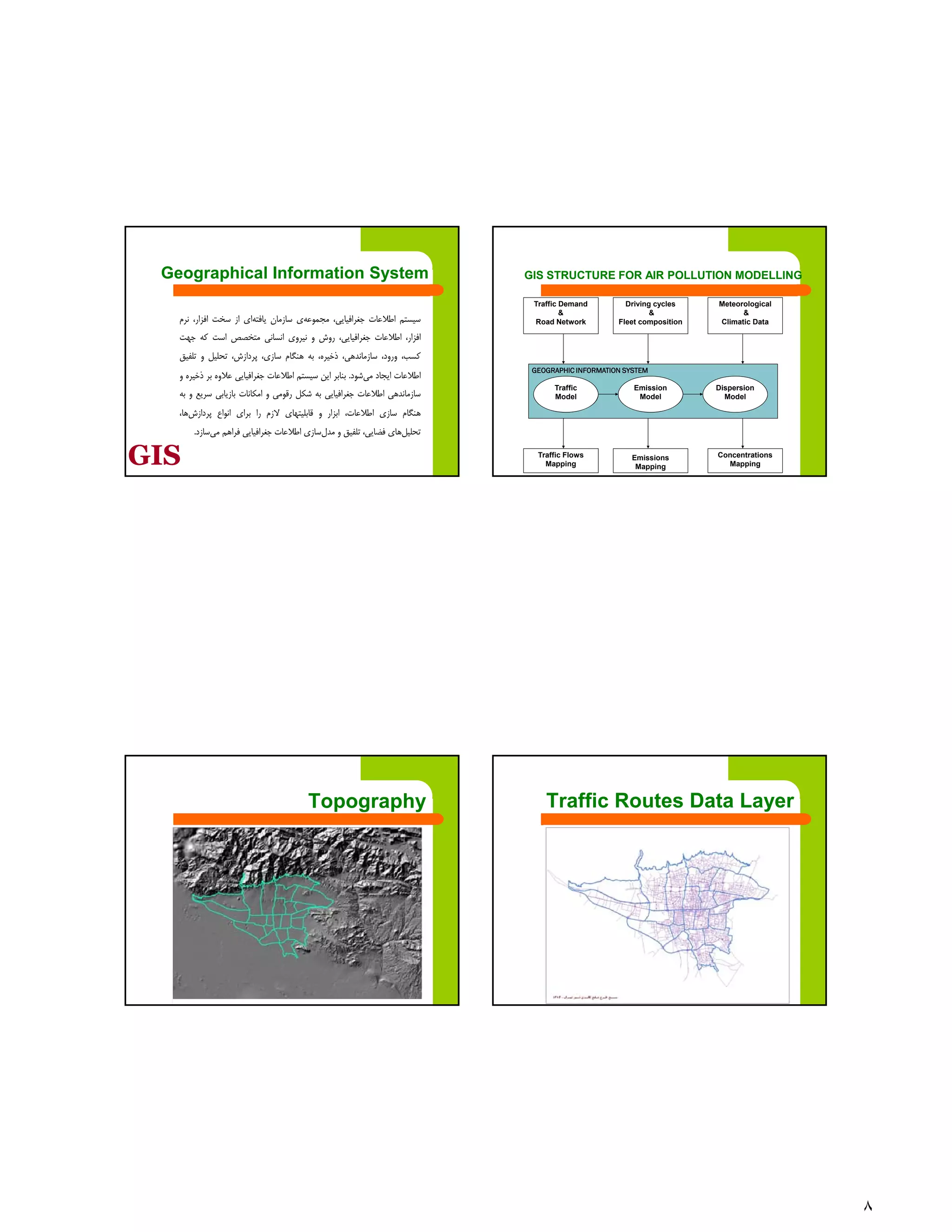

The document discusses integrated GIS-based methods for urban air pollution modeling, focusing on experimental and computational approaches. It outlines various modeling techniques such as empirical equations, physical models, and computational fluid dynamics (CFD) for predicting air pollution dispersion and impacts. The text also emphasizes the importance of advanced tools like wind tunnels and GIS in analyzing urban air quality and pollutant concentrations.