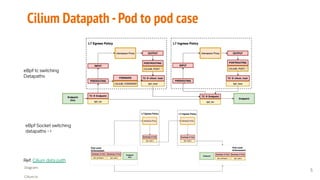

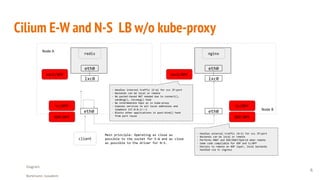

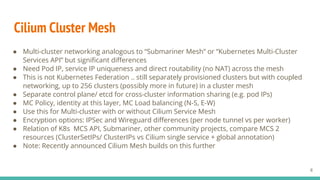

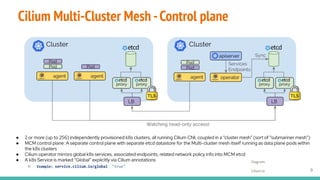

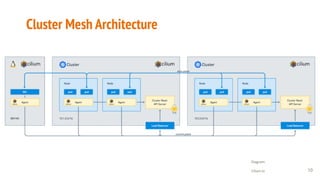

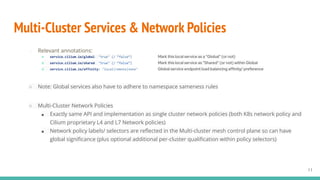

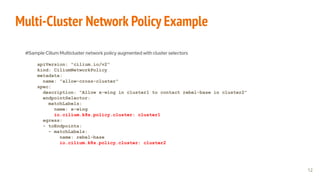

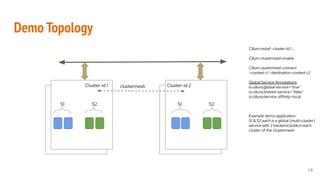

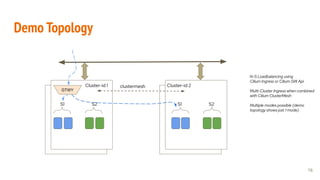

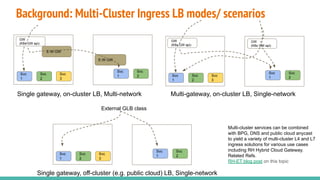

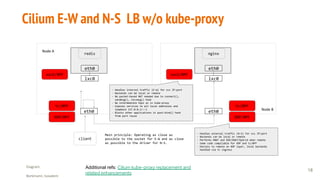

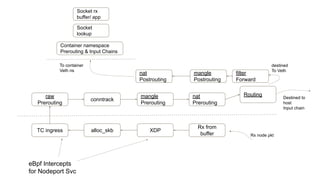

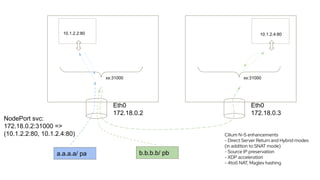

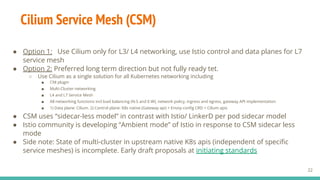

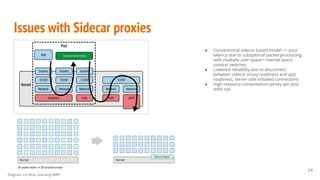

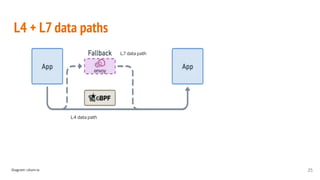

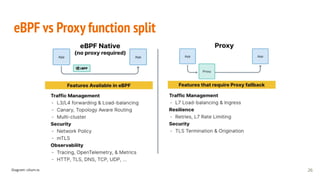

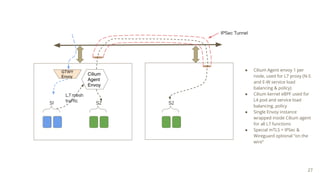

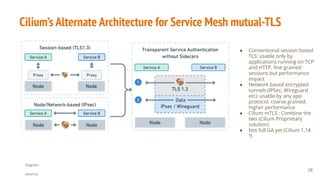

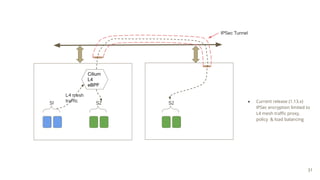

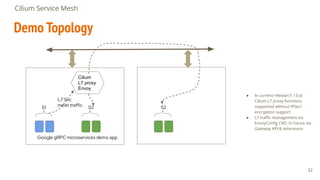

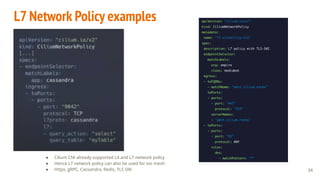

Cilium is open source software that provides networking and security for Kubernetes. It uses eBPF to implement Kubernetes networking, network security policies, load balancing, and service mesh capabilities. For multi-cluster networking, Cilium joins several Kubernetes clusters into a Cluster Mesh. Each cluster keeps its own control plane, but service, endpoint, and identity information is shared, so workloads can reach, load-balance across, and enforce policies against pods in other clusters. Cilium also offers a sidecar-less service mesh that handles L4 traffic in eBPF and L7 traffic in a per-node Envoy proxy, rather than injecting a proxy into every pod. The demos showed Cilium's multi-cluster load balancing and policies as well as its service mesh capabilities.
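As a rough illustration of the kind of configuration behind these demos, the sketch below builds two Kubernetes manifests in Python and prints them as a JSON `List` that `kubectl apply -f -` can consume. The first is a Service marked global for Cluster Mesh with the `service.cilium.io/global` annotation, plus the optional `service.cilium.io/affinity: "local"` annotation to prefer backends in the local cluster. The second is a `CiliumNetworkPolicy` with an L7 HTTP rule, which Cilium enforces through Envoy. The app names (`rebel-base`, `x-wing`), port, and path are hypothetical placeholders, the helper functions are illustrative rather than part of any Cilium tooling, and the annotations and fields should be checked against the Cilium documentation for the version in use. This is not the configuration used in the talk.

```python
import json


def global_service(name: str, namespace: str, port: int, local_affinity: bool = False) -> dict:
    """Build a Service manifest that Cilium Cluster Mesh treats as a global service."""
    # Marks the service as global so backends from all clusters are merged.
    annotations = {"service.cilium.io/global": "true"}
    if local_affinity:
        # Prefer local-cluster backends; remote backends act as a fallback.
        annotations["service.cilium.io/affinity"] = "local"
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace, "annotations": annotations},
        "spec": {
            "type": "ClusterIP",
            "selector": {"app": name},
            "ports": [{"port": port, "protocol": "TCP"}],
        },
    }


def l7_http_policy(name: str, target_app: str, client_app: str,
                   port: int, method: str, path: str) -> dict:
    """Build a CiliumNetworkPolicy allowing only one HTTP method/path from one client."""
    return {
        "apiVersion": "cilium.io/v2",
        "kind": "CiliumNetworkPolicy",
        "metadata": {"name": name},
        "spec": {
            # Pods this policy applies to (the server side).
            "endpointSelector": {"matchLabels": {"app": target_app}},
            "ingress": [{
                # Only pods labeled with the client app may connect.
                "fromEndpoints": [{"matchLabels": {"app": client_app}}],
                "toPorts": [{
                    # Cilium expects the port as a string.
                    "ports": [{"port": str(port), "protocol": "TCP"}],
                    # L7 rule: requests not matching method/path are rejected.
                    "rules": {"http": [{"method": method, "path": path}]},
                }],
            }],
        },
    }


if __name__ == "__main__":
    manifests = [
        global_service("rebel-base", "default", 80, local_affinity=True),
        l7_http_policy("rebel-base-get-only", "rebel-base", "x-wing", 80, "GET", "/public"),
    ]
    # A v1 List lets kubectl apply both objects from a single JSON document.
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": manifests}, indent=2))
```

For the load-balancing part to take effect, a Service with the same name and namespace (and the same global annotation) would need to exist in each cluster of the mesh, so that Cilium can merge the backends from every cluster.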