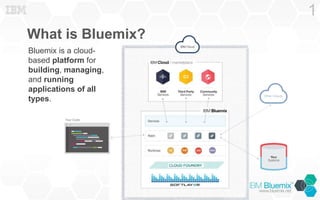

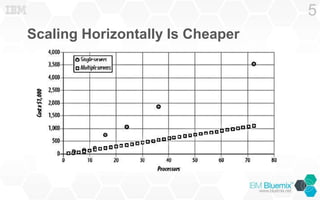

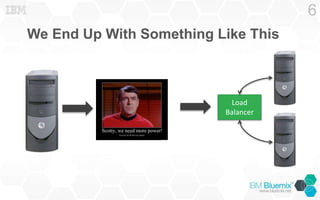

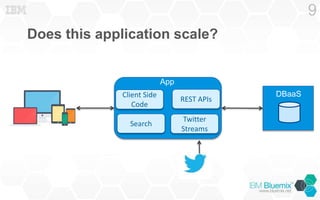

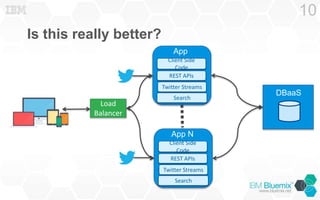

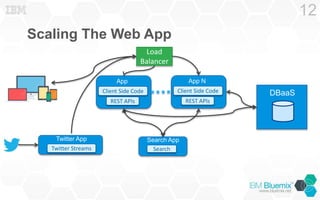

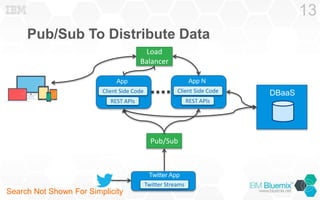

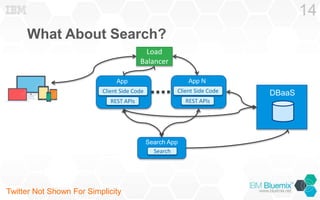

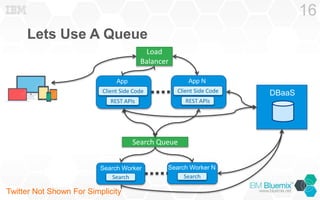

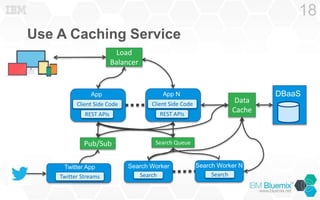

Bluemix is a cloud-based platform for building, managing, and running scalable applications. Scaling applications on it centers on horizontal scaling and load balancing, supported by content delivery networks and caching services that improve application performance. Bluemix also offers auto-scaling for several programming runtimes, which simplifies resource management.
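To make the ideas of load balancing and auto-scaling concrete, here is a minimal sketch in Python. It is not Bluemix's API or its actual scaling policy: the function names (`round_robin`, `desired_instances`), the CPU thresholds, and the instance limits are all hypothetical and chosen only for illustration. The first function spreads requests evenly across instances, and the second decides whether to add or remove one instance based on a utilization reading.

```python
from itertools import cycle


def round_robin(instances, requests):
    """Assign each request to the next instance in turn (simple load balancing)."""
    pool = cycle(instances)
    return [(request, next(pool)) for request in requests]


def desired_instances(current, cpu_percent, upper=80.0, lower=30.0,
                      minimum=1, maximum=10):
    """Return the instance count after one threshold-based scaling step.

    All thresholds and limits are assumed values for this sketch,
    not defaults taken from any real auto-scaling service.
    """
    if cpu_percent > upper:
        # Scale out by one instance, but never beyond the maximum.
        return min(current + 1, maximum)
    if cpu_percent < lower:
        # Scale in by one instance, but never below the minimum.
        return max(current - 1, minimum)
    # Utilization is within the target band: keep the current count.
    return current
```

For example, `desired_instances(2, 90.0)` would scale out from two instances to three, while a reading between the two thresholds leaves the count unchanged. A real policy would typically also apply a cooldown period between scaling steps to avoid oscillation.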