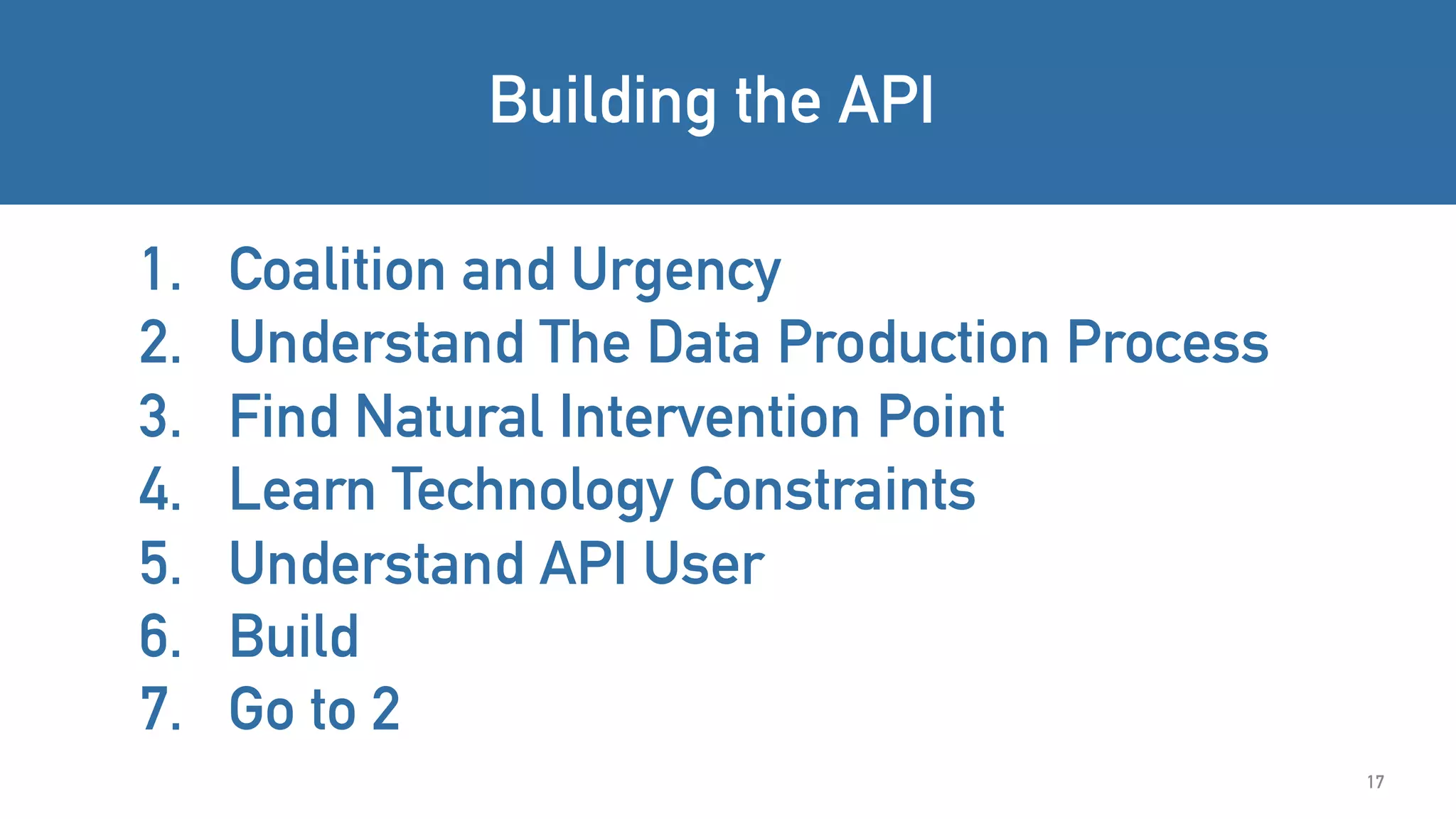

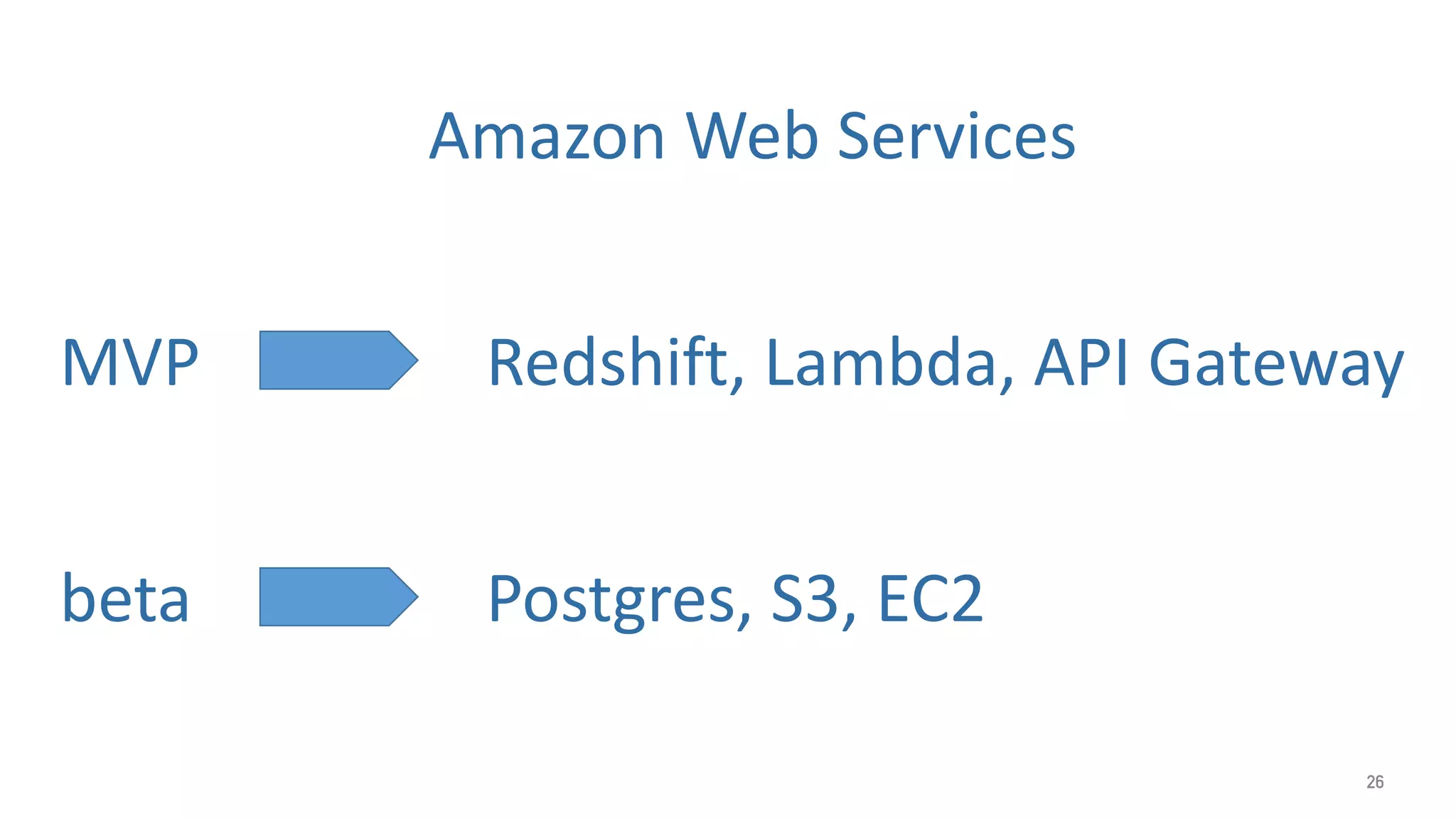

The document discusses API development in government, focusing on projects such as Sweat and Toil, CitySDK, and Midaas that address social issues. It outlines the steps for building effective APIs, including understanding user needs and technology constraints. The success of these initiatives, and the recognition they have received, highlights their contribution to social good.