Branch and bound

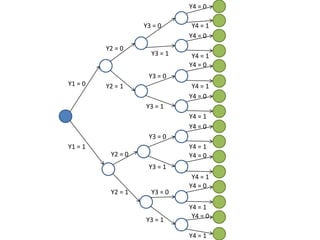

Branch and bound with 4 binary variables
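With four binary variables Y1 to Y4 there are 2^4 = 16 possible 0/1 assignments, one per leaf of a complete branching tree. As a baseline, here is a minimal brute-force sketch that evaluates all 16. The objective and constraint (a small 0-1 knapsack) are hypothetical example data, not taken from the slides.

```python
from itertools import product

# Hypothetical example data (not from the slides): a 0-1 knapsack in four
# binary variables Y1..Y4. Maximize sum(VALUES[i] * Yi) subject to
# sum(WEIGHTS[i] * Yi) <= CAPACITY.
VALUES = [40, 42, 25, 12]
WEIGHTS = [4, 7, 5, 3]
CAPACITY = 10


def brute_force(values, weights, capacity):
    """Evaluate every 0/1 assignment; return (best_value, best_assignment)."""
    best_value, best_assignment = -1, None
    for assignment in product((1, 0), repeat=len(values)):
        weight = sum(w * y for w, y in zip(weights, assignment))
        if weight > capacity:
            continue  # infeasible assignment: skip it
        value = sum(v * y for v, y in zip(values, assignment))
        if value > best_value:
            best_value, best_assignment = value, assignment
    return best_value, best_assignment


if __name__ == "__main__":
    print(brute_force(VALUES, WEIGHTS, CAPACITY))  # (65, (1, 0, 1, 0))
```

Enumeration always visits all 2^n leaves, which is what branch and bound tries to avoid by pruning whole subtrees.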

Slide 1: [Figure: binary branching tree over Y1 to Y4. The root branches on Y1 = 1 and Y1 = 0, and each node below branches on the next variable (Y2, then Y3, then Y4), giving 2, 4, 8 and 16 branches per level and 16 leaves in total.]
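The tree branches on Y1, Y2, Y3 and Y4 in turn. Below is a minimal depth-first branch-and-bound sketch over such a tree, assuming a hypothetical 0-1 knapsack objective (the values, weights and capacity are illustrative, not from the slides). It tries the Y = 1 branch first, bounds each node with the LP relaxation (the fractional knapsack, one common choice of bound), and prunes a node when it is infeasible or its bound cannot beat the best solution found so far.

```python
# Hypothetical example data (not from the slides): 0-1 knapsack in Y1..Y4.
VALUES = [40, 42, 25, 12]
WEIGHTS = [4, 7, 5, 3]
CAPACITY = 10


def lp_bound(fixed, values, weights, capacity):
    """Upper bound from the LP relaxation: the first len(fixed) variables keep
    their 0/1 values; the remaining ones may take fractional values in [0, 1]."""
    used = sum(w for w, y in zip(weights, fixed) if y)
    if used > capacity:
        return float("-inf")  # fixed part already violates the capacity
    bound = float(sum(v for v, y in zip(values, fixed) if y))
    room = capacity - used
    # Filling greedily by value/weight ratio solves the fractional knapsack exactly.
    free = sorted(range(len(fixed), len(values)),
                  key=lambda i: values[i] / weights[i], reverse=True)
    for i in free:
        if weights[i] <= room:
            room -= weights[i]
            bound += values[i]
        else:
            bound += values[i] * room / weights[i]  # take a fraction of item i
            break
    return bound


def branch_and_bound(values, weights, capacity):
    """Return (best_value, best_assignment, nodes_created_below_root)."""
    n = len(values)
    best_value, best_assignment, nodes = -1, None, 0

    def explore(fixed):
        nonlocal best_value, best_assignment, nodes
        bound = lp_bound(fixed, values, weights, capacity)
        if bound <= best_value:
            return  # prune: infeasible (-inf) or cannot beat the incumbent
        if len(fixed) == n:
            # All variables fixed and feasible: the bound is the exact value.
            best_value, best_assignment = int(bound), tuple(fixed)
            return
        for y in (1, 0):  # branch on the next variable, Y = 1 first
            nodes += 1
            explore(fixed + [y])

    explore([])
    return best_value, best_assignment, nodes


if __name__ == "__main__":
    print(branch_and_bound(VALUES, WEIGHTS, CAPACITY))  # (65, (1, 0, 1, 0), 8)
```

On this data the search creates 8 nodes below the root instead of the 30 branches of the complete tree and returns the same optimum as full enumeration. How much gets pruned depends heavily on the data, the bound and the branching order.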