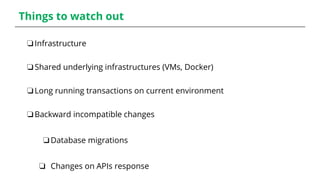

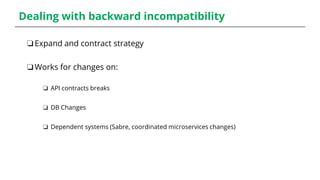

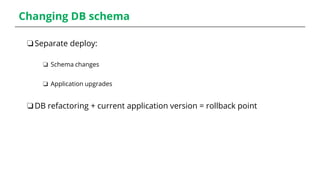

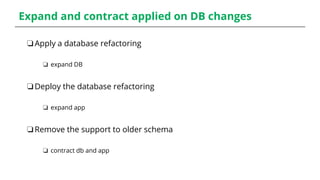

The document covers blue-green deployment in a DevOps context: what it is, how to implement it, its benefits and costs, and the precautions it requires. It stresses minimizing downtime during production releases and describes strategies for rapid rollback, particularly when a release includes backward-incompatible changes or database migrations. It also includes a live demo and warns about pitfalls involving shared infrastructure and long-running transactions.
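The cut-over and rollback idea can be illustrated with a minimal Python sketch. It is not taken from the document's demo: the `Router` class, its method names, and the health-check callables are hypothetical stand-ins for a real load balancer or DNS switch. It also leaves out the harder cases the document warns about, such as database migrations, shared infrastructure, and long-running transactions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Router:
    """Hypothetical stand-in for a load balancer that serves one environment at a time."""

    # Maps an environment name ("blue" or "green") to its health check (assumed interface).
    environments: Dict[str, Callable[[], bool]]
    live: str = "blue"
    previous: Optional[str] = None

    def idle(self) -> str:
        """Return the environment that is not currently serving traffic."""
        return "green" if self.live == "blue" else "blue"

    def cut_over(self) -> bool:
        """Switch traffic to the idle environment, but only if it reports healthy."""
        target = self.idle()
        if not self.environments[target]():
            return False  # Leave traffic where it is; nothing has changed.
        self.previous, self.live = self.live, target
        return True

    def roll_back(self) -> bool:
        """Point traffic back at the environment that was live before the last cut-over."""
        if self.previous is None:
            return False  # No cut-over has happened, so there is nothing to undo.
        self.live, self.previous = self.previous, None
        return True


if __name__ == "__main__":
    router = Router({"blue": lambda: True, "green": lambda: True})
    router.cut_over()
    print("live after cut-over:", router.live)
    router.roll_back()
    print("live after rollback:", router.live)
```

In this sketch the rollback is fast because the old environment keeps running untouched after the switch. That is also where the cost comes from: two full environments have to exist at the same time.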