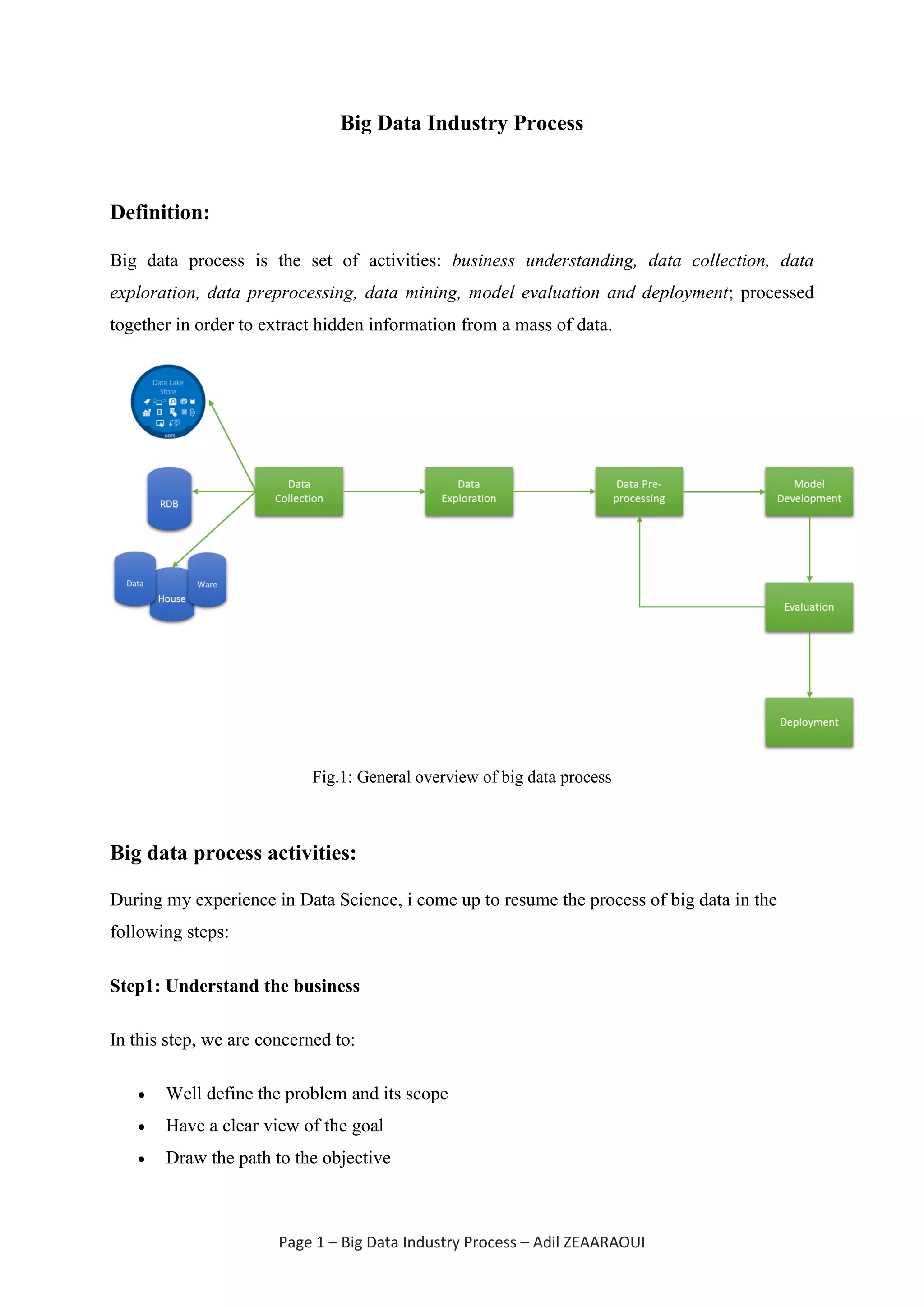

The document outlines the big data process as practiced in industry, which consists of six steps: business understanding, data collection, data exploration, data preprocessing, data mining, and model evaluation and deployment. It stresses that every step matters, data preprocessing in particular, which the document says can account for up to 90% of the overall process. The ultimate goal is to extract valuable insights from large data sets through a systematic approach.
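As a rough illustration of how these steps chain together, the sketch below walks a tiny in-memory data set through collection, exploration, preprocessing, mining, and evaluation. Everything in it is an assumption made for illustration: the function names, the toy `age`/`income` records, mean imputation as the preprocessing step, and a least-squares line as a stand-in for data mining. None of it comes from the document. Business understanding and deployment are left out because they are not naturally expressed as code.

```python
from statistics import mean


def collect():
    """Data collection: hypothetical toy records, not real data."""
    return [
        {"age": 34, "income": 52000.0},
        {"age": None, "income": 61000.0},  # missing age
        {"age": 45, "income": None},       # missing income
        {"age": 29, "income": 48000.0},
    ]


def explore(rows):
    """Data exploration: count missing values per field."""
    fields = list(rows[0].keys())
    return {f: sum(r[f] is None for r in rows) for f in fields}


def preprocess(rows):
    """Data preprocessing: fill missing values with the field mean (one assumed strategy)."""
    fields = list(rows[0].keys())
    means = {f: mean(r[f] for r in rows if r[f] is not None) for f in fields}
    return [
        {f: (r[f] if r[f] is not None else means[f]) for f in fields}
        for r in rows
    ]


def mine(rows):
    """Data mining (stand-in): fit income = slope * age + intercept by least squares."""
    xs = [r["age"] for r in rows]
    ys = [r["income"] for r in rows]
    mx, my = mean(xs), mean(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum(
        (x - mx) ** 2 for x in xs
    )
    return slope, my - slope * mx


def evaluate(model, rows):
    """Model evaluation: mean absolute error of the fitted line on the given rows."""
    slope, intercept = model
    return mean(abs(r["income"] - (slope * r["age"] + intercept)) for r in rows)


if __name__ == "__main__":
    raw = collect()
    print("missing per field:", explore(raw))
    clean = preprocess(raw)
    model = mine(clean)
    print("mean absolute error:", evaluate(model, clean))
```

Even in this toy version, the preprocessing function has to handle every field and every missing value before the later steps can run at all, which hints at why the document singles that step out.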