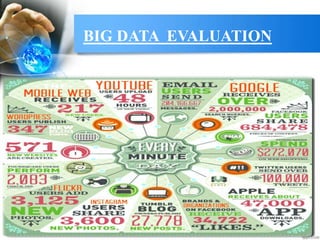

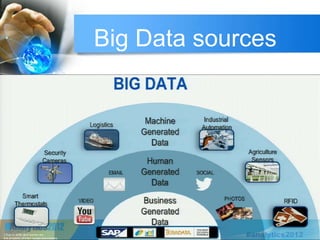

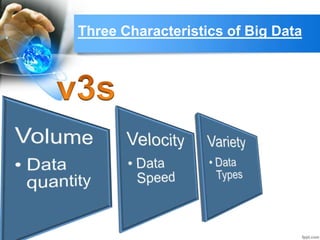

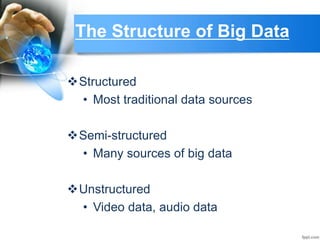

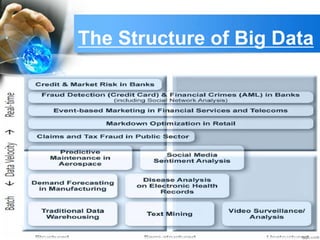

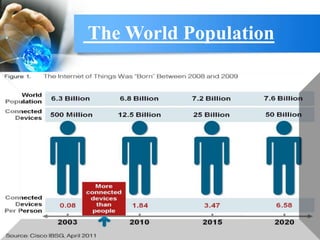

This document provides an overview of big data. It begins by defining big data and its key characteristics, including volume, velocity, and variety. It then discusses how big data is stored, selected, and processed, with examples of big data sources and tools. It outlines applications of big data across industries such as healthcare, manufacturing, and retail, and covers risks such as privacy issues and cost. It then looks at the future of big data, citing projections that the big data market will grow significantly in the coming years. It closes with references for further information on big data.