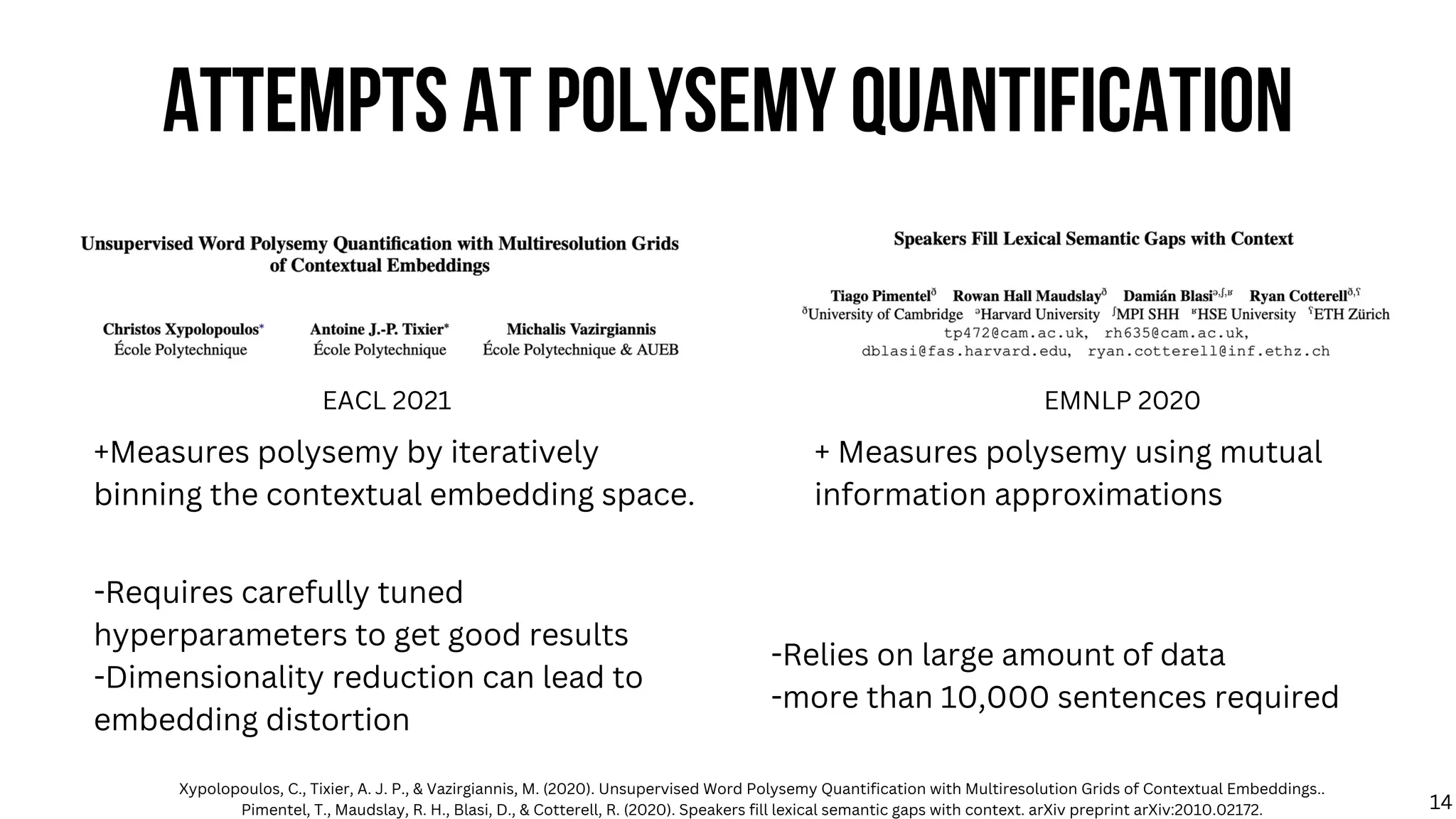

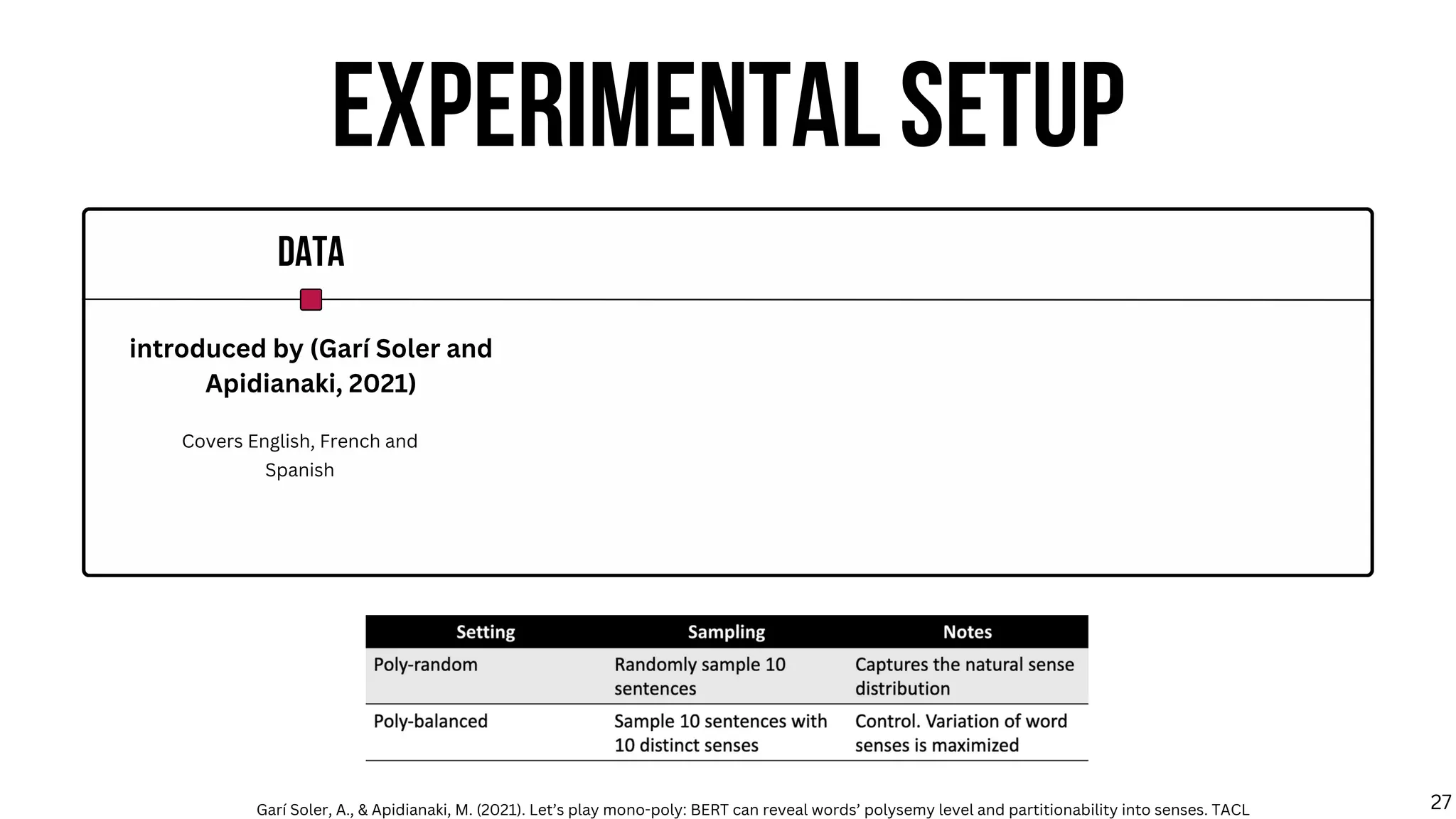

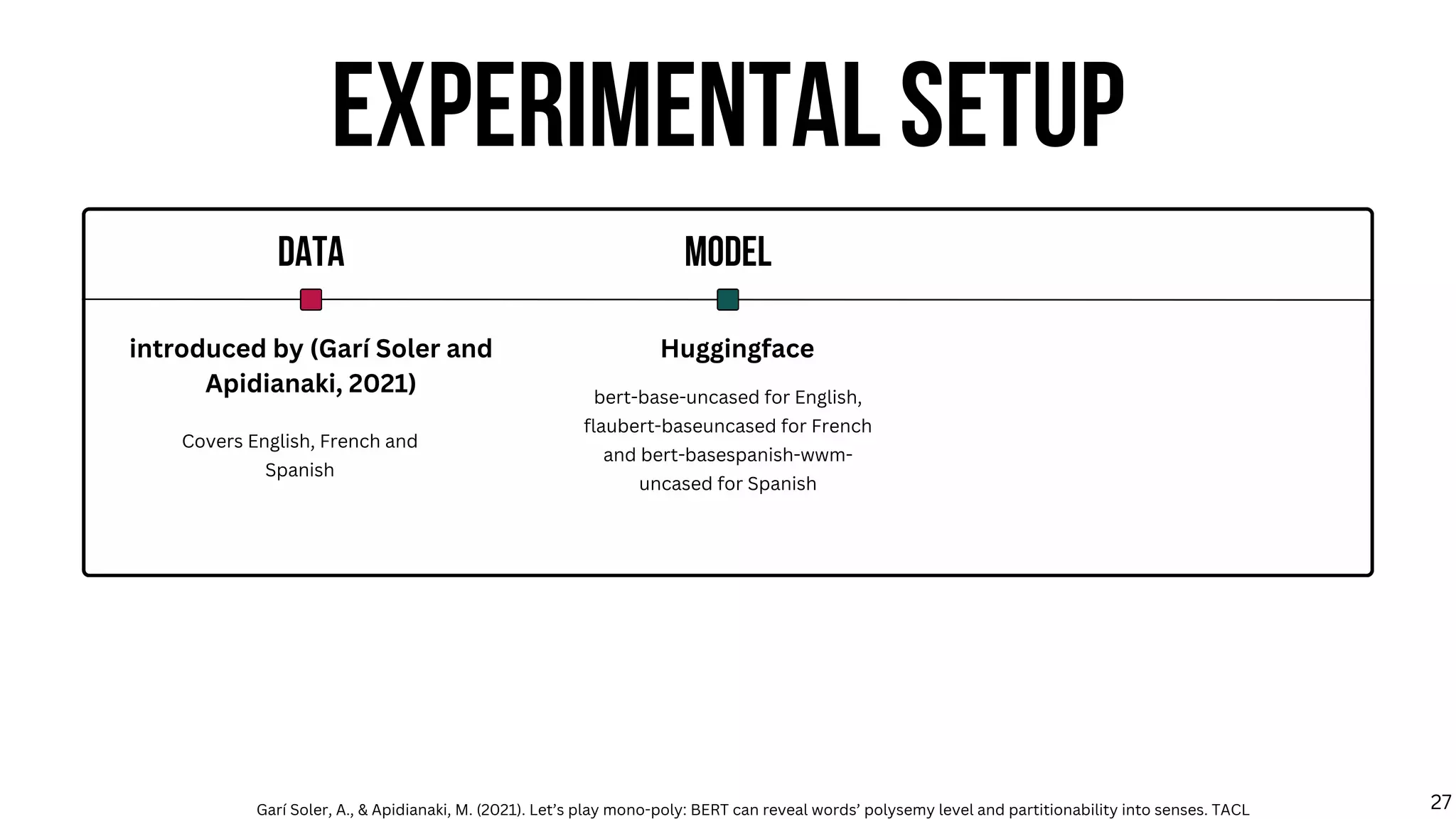

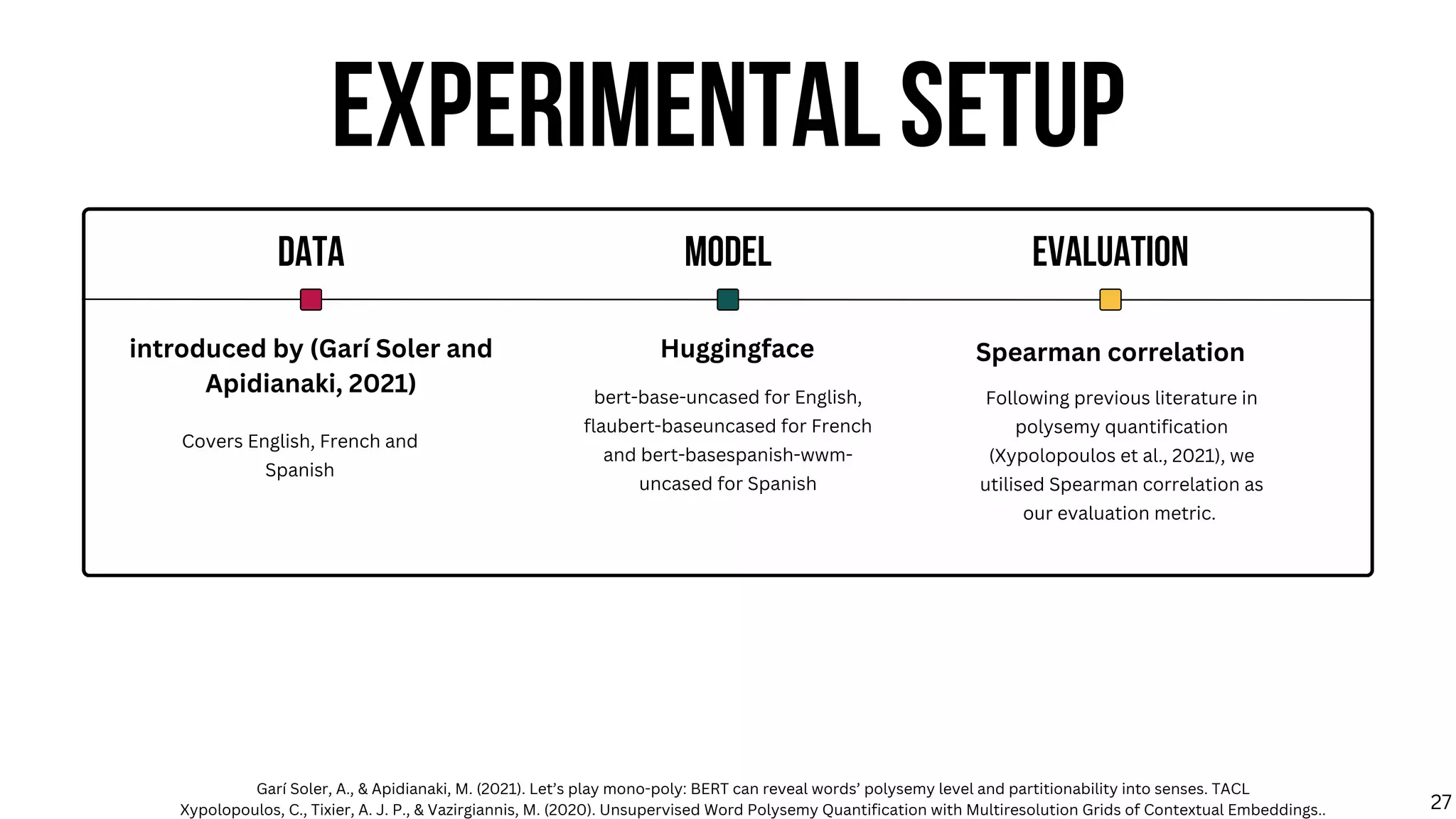

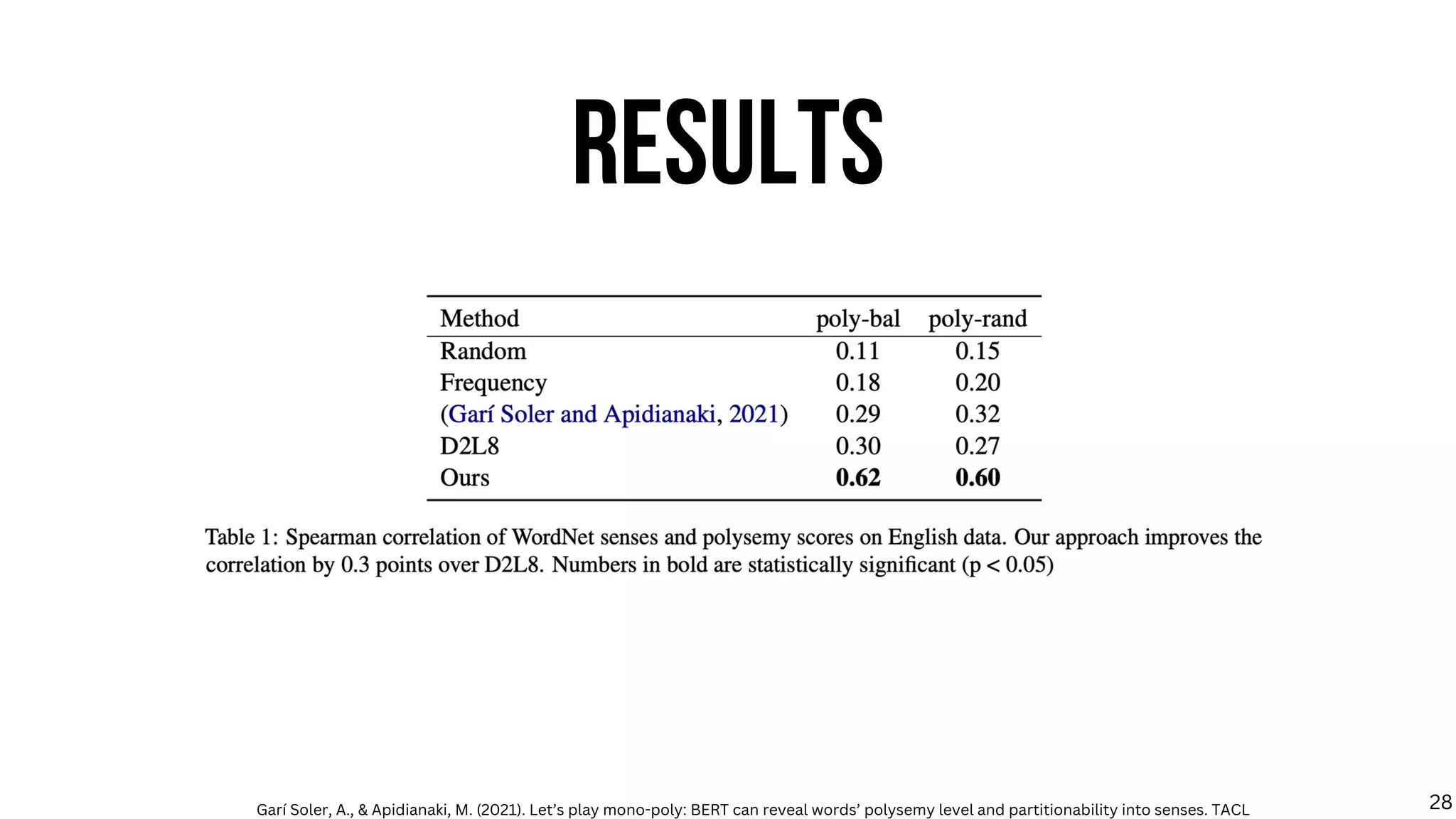

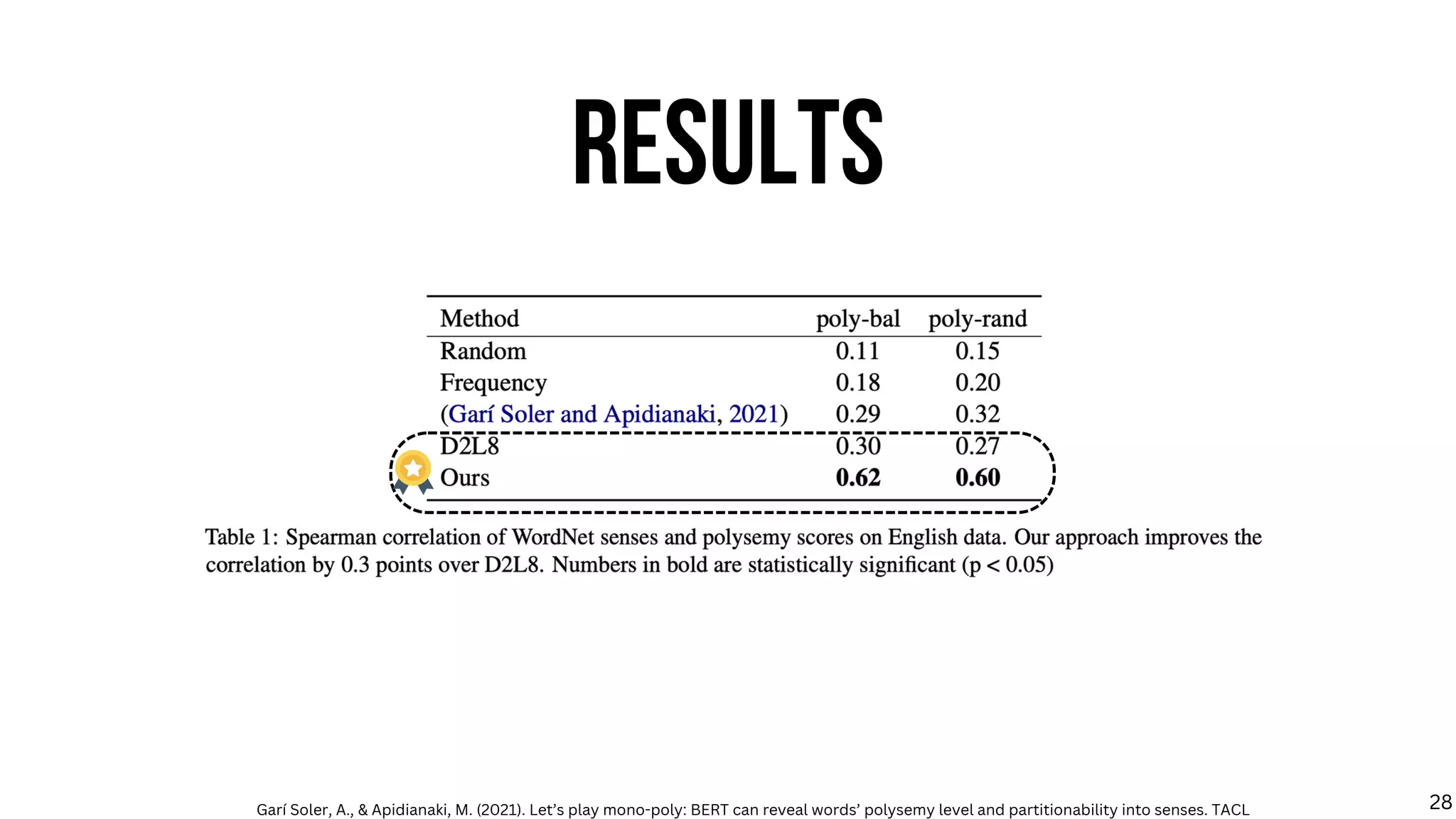

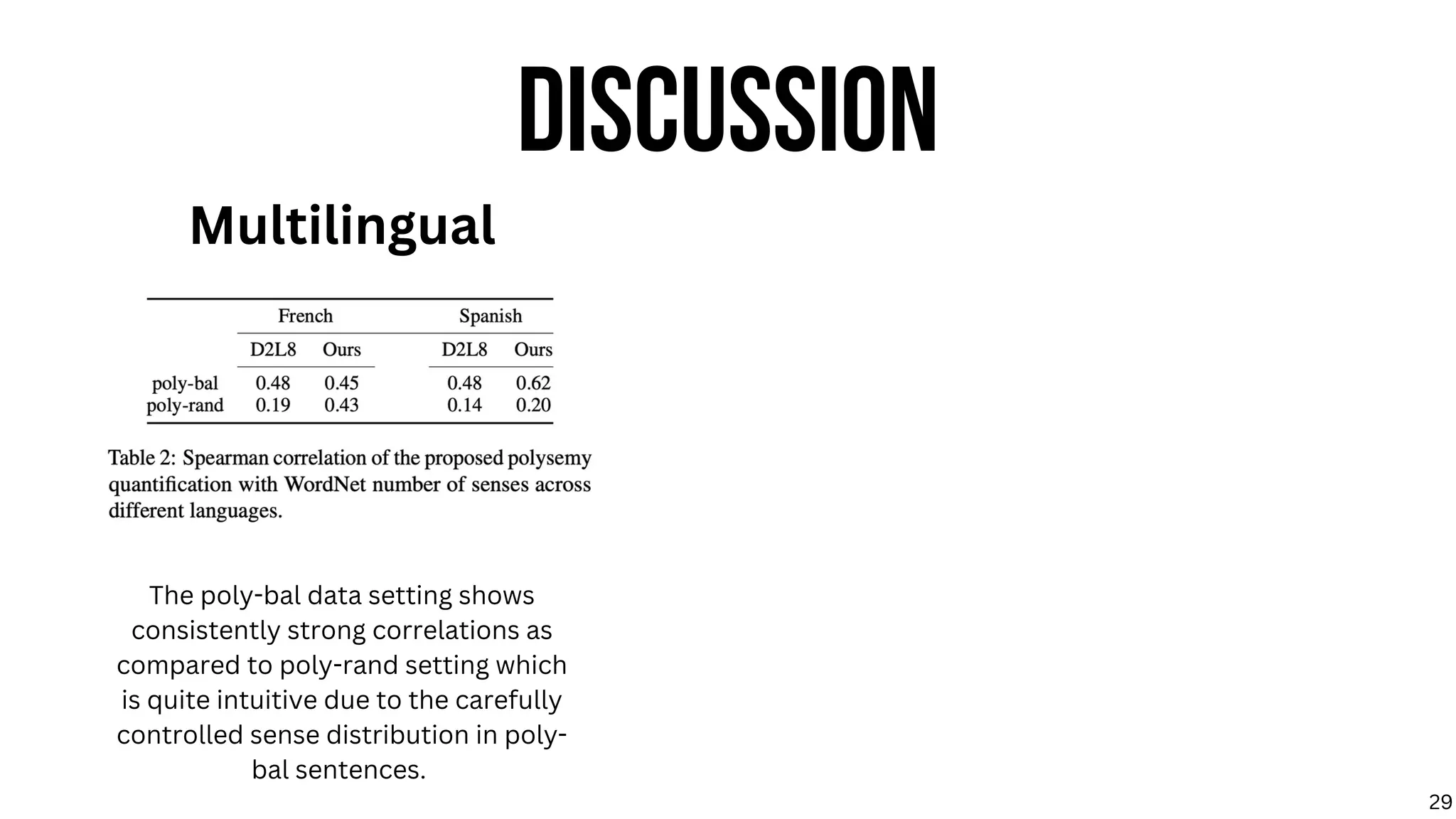

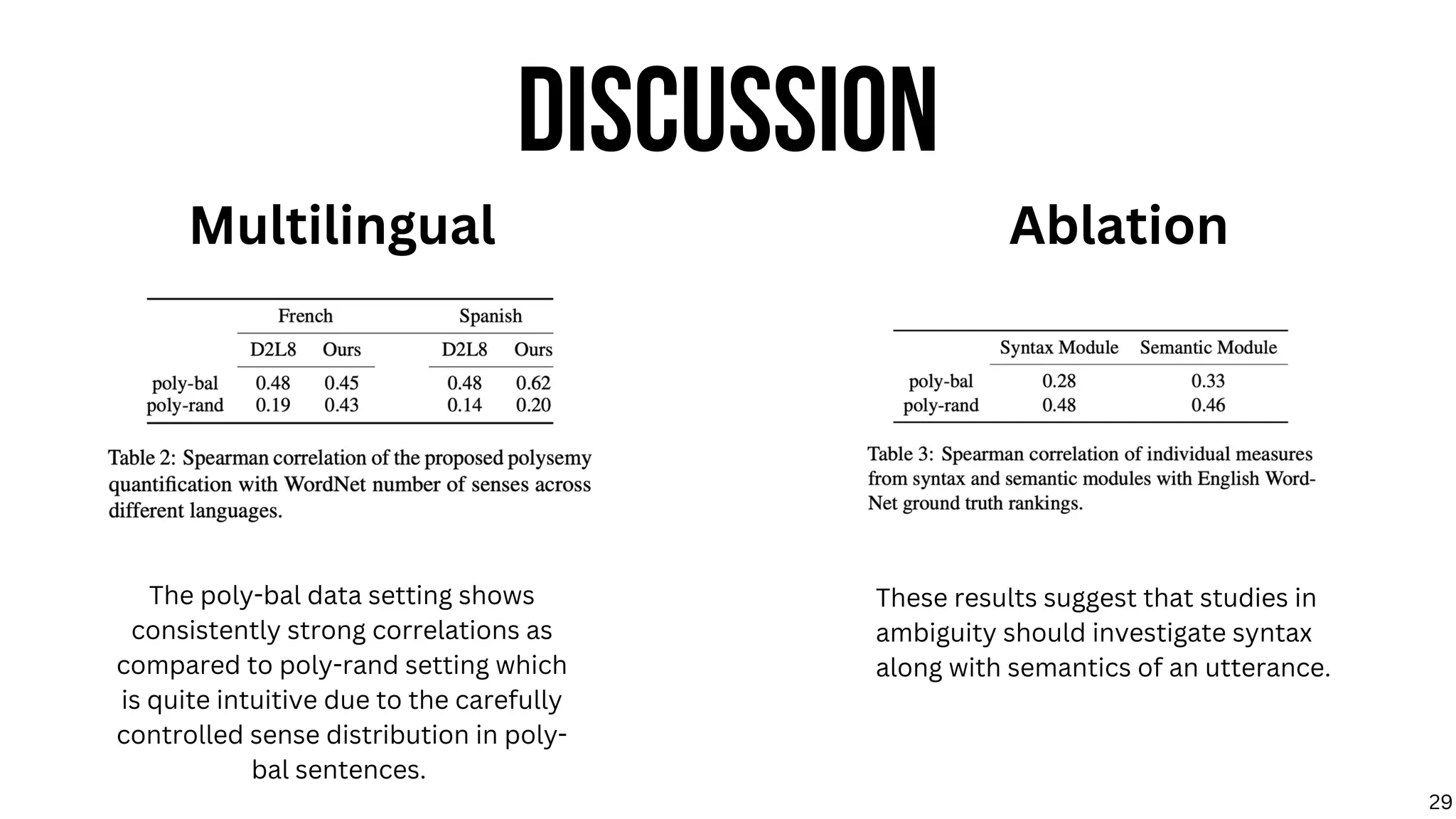

The dissertation explores linguistic ambiguity, focusing on polysemy and tautology, with the aim of improving the understanding and resolution of ambiguities in natural language processing. It investigates how syntax affects the quantification of polysemy and examines the ability of large language models to handle tautological sentences. The research highlights the need for nuanced approaches that integrate linguistic theory with language model behavior.

![TYPES OF AMBIGUITY

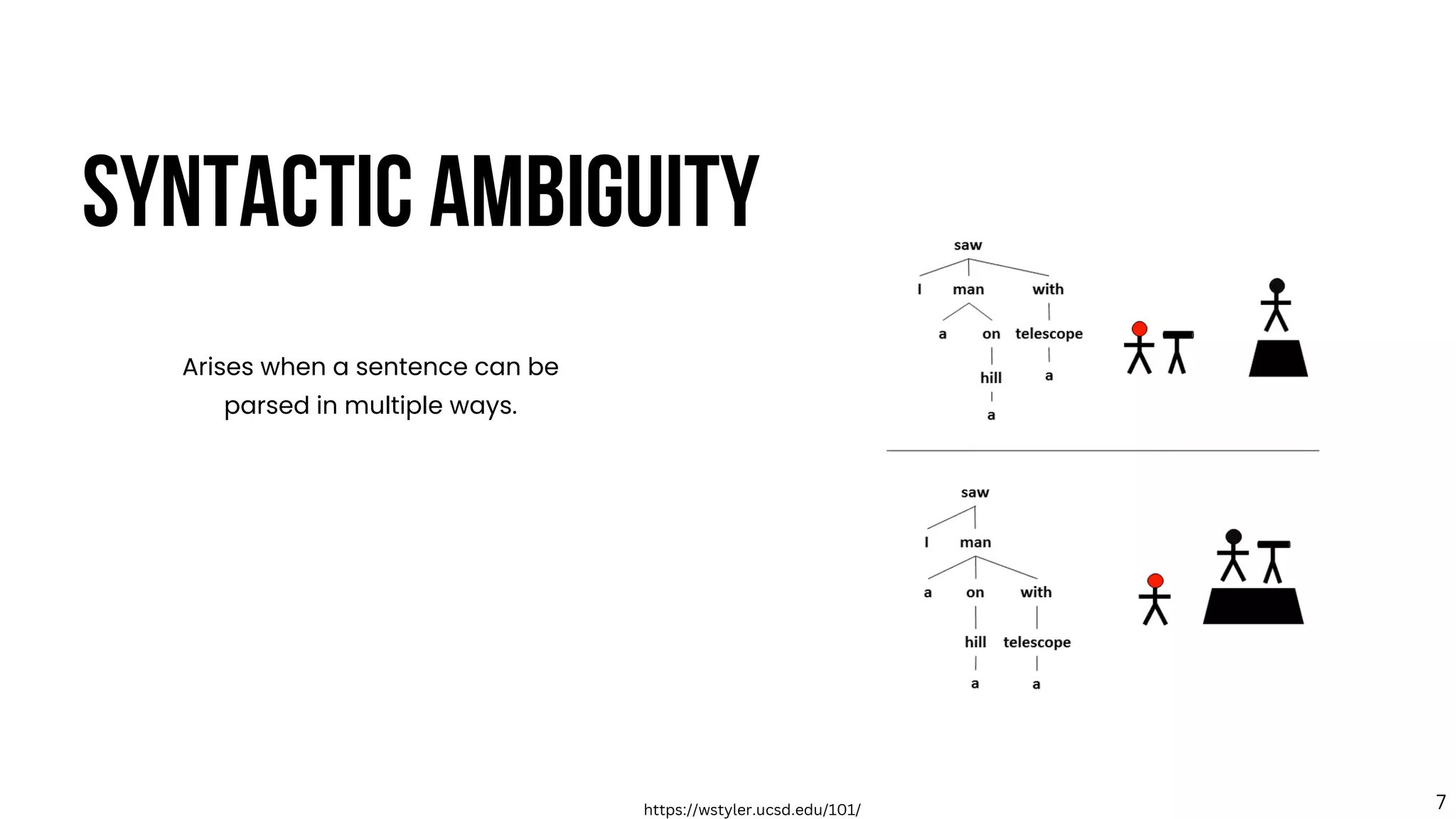

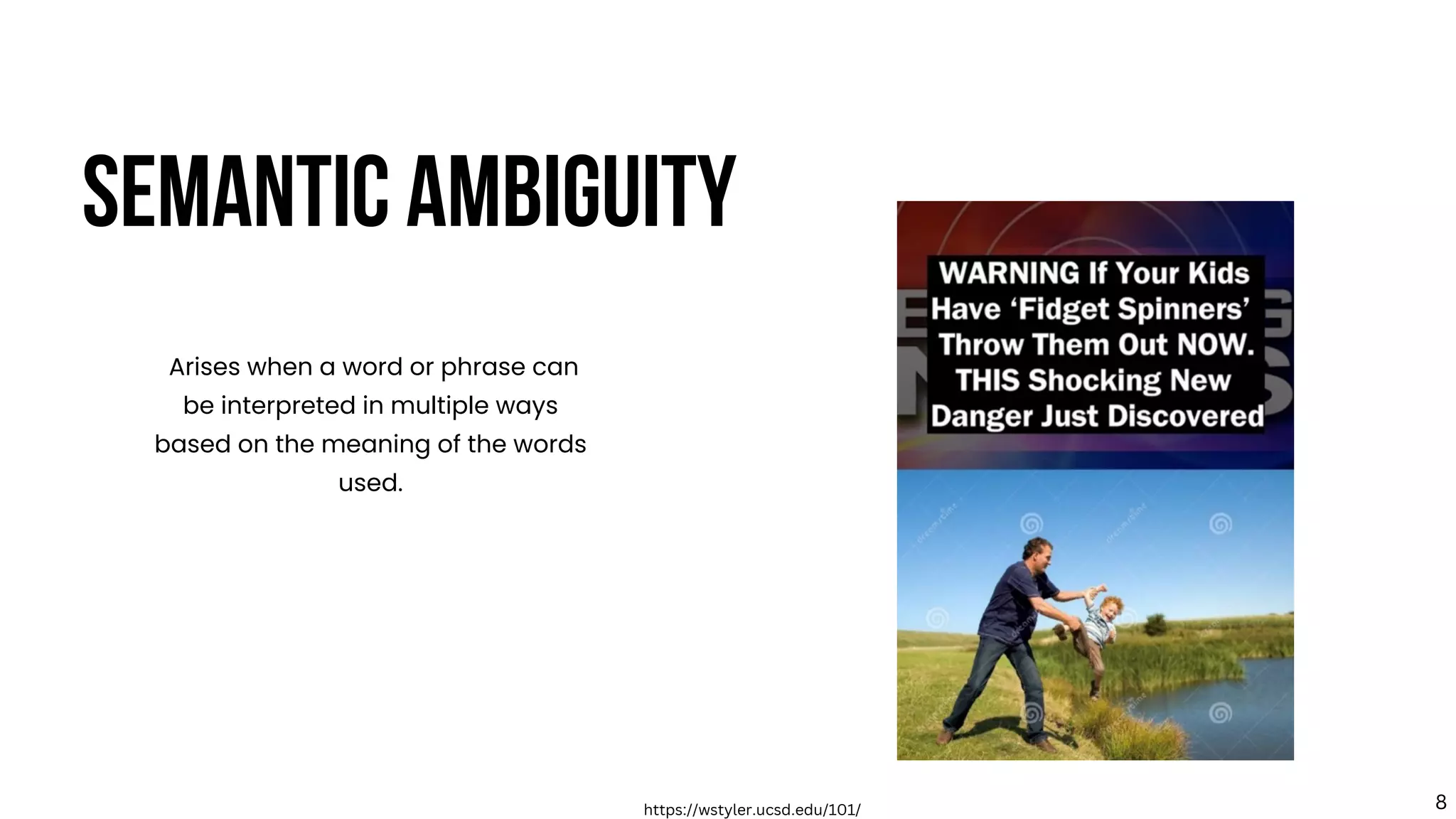

Typically, three main types of ambiguity [Fromkin et al., 2018] in language are considered -

lexical,

syntactic,

and semantic.

Fromkin, V., Rodman, R., & Hyams, N. (2018). An Introduction to Language (w/MLA9E Updates). Cengage Learning. 5](https://image.slidesharecdn.com/anmol-thesis-defense-slides-230629013516-60009ac6/75/Beyond-the-Surface-A-Computational-Exploration-of-Linguistic-Ambiguity-12-2048.jpg)

![TYPES OF AMBIGUITY

Typically, three main types of ambiguity in language are considered [Fromkin et al., 2018]: lexical, syntactic, and semantic.

Foci of this thesis

Fromkin, V., Rodman, R., & Hyams, N. (2018). An Introduction to Language (w/MLA9E Updates). Cengage Learning.](https://image.slidesharecdn.com/anmol-thesis-defense-slides-230629013516-60009ac6/75/Beyond-the-Surface-A-Computational-Exploration-of-Linguistic-Ambiguity-16-2048.jpg)

![PRAGMATIC VIEW

[Grice, 1975] proposed a pragmatic model of how listeners and speakers communicate and cooperate in conversations.

Information is implied rather than asserted.

Proposes the four maxims of conversation: quantity, quality, relation, and manner.

Grice, H. P. (1975). Logic and conversation. In Speech Acts, pages 41–58. Brill.](https://image.slidesharecdn.com/anmol-thesis-defense-slides-230629013516-60009ac6/75/Beyond-the-Surface-A-Computational-Exploration-of-Linguistic-Ambiguity-56-2048.jpg)

![SEMANTIC VIEW

[Wierzbicka, 1987] argues that the interpretation of tautologies depends not only on their pragmatic implications but also on the syntactic patterns and nominal classifications of the phrases.

For example, tautologies of the form "N will be N" generally convey negative aspects of the topic with an indulgent undertone.

The way the words are arranged in a sentence can affect how the tautology is interpreted.

Wierzbicka, A. (1987). Boys will be boys: 'radical semantics' vs. 'radical pragmatics'. Language, pages 95–114.](https://image.slidesharecdn.com/anmol-thesis-defense-slides-230629013516-60009ac6/75/Beyond-the-Surface-A-Computational-Exploration-of-Linguistic-Ambiguity-61-2048.jpg)
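The point that syntactic form shapes the reading of a tautology can be made concrete with a small template sketch. The two patterns below (modal "N will be N" and plural "N are N") are the forms named in these slides; the dictionary `TEMPLATES` and the helper `make_tautology` are hypothetical names introduced only for illustration, not part of the thesis code.

```python
# Hypothetical templates for nominal tautologies.
# Only the modal and plural patterns are named in the slides.
TEMPLATES = {
    "modal": "{n} will be {n}",
    "plural": "{n} are {n}",
}


def make_tautology(plural_noun: str, form: str) -> str:
    """Fill a tautology template and capitalize the first letter."""
    sentence = TEMPLATES[form].format(n=plural_noun.lower())
    return sentence[0].upper() + sentence[1:] + "."


# Same noun, different syntactic arrangement.
for form in TEMPLATES:
    print(form, "->", make_tautology("boys", form))
```

Holding the noun fixed and varying only the template isolates the effect of syntax, which is the contrast the semantic view draws attention to.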



![EXPERIMENTAL SETUP

DATA: 216 sentences, controlling for noun type, syntax, and context. Methodology of [Gibbs and McCarrell, 1990].

MODEL: Pretrained BERT and GPT2 from Huggingface.

EVALUATION: Acceptability scores. Sequence log probability scores are a measure of how likely a sequence of words is according to a transformer-based language model.](https://image.slidesharecdn.com/anmol-thesis-defense-slides-230629013516-60009ac6/75/Beyond-the-Surface-A-Computational-Exploration-of-Linguistic-Ambiguity-65-2048.jpg)
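As a rough illustration of the evaluation metric, a sequence log probability for a left-to-right model is the sum of the log probabilities of each token given the tokens before it. The sketch below shows only that core computation on hand-written toy logits; in practice the logits would come from a pretrained model such as GPT2. The function names and the toy values are assumptions for illustration, and the slides do not state how the score was obtained for BERT, which as a masked model typically needs a different procedure (for example, masking and scoring one token at a time).

```python
import math
from typing import List, Sequence


def log_softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable log-softmax over one vocabulary-sized vector."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def sequence_log_prob(
    step_logits: Sequence[Sequence[float]], token_ids: Sequence[int]
) -> float:
    """Sum log P(token_t | previous tokens) over the sequence.

    step_logits[t] holds the model's vocabulary logits for position t,
    and token_ids[t] is the token actually observed at that position.
    """
    if len(step_logits) != len(token_ids):
        raise ValueError("need one logit vector per token")
    return sum(
        log_softmax(logits)[tok] for logits, tok in zip(step_logits, token_ids)
    )


# Toy example: vocabulary of 3 tokens, sequence of 2 tokens (made-up values).
toy_logits = [[0.0, 0.0, 0.0], [2.0, 0.0, 0.0]]
toy_tokens = [1, 0]
score = sequence_log_prob(toy_logits, toy_tokens)
print(round(score, 4))
```

Scores are always at most zero, and a higher (less negative) score means the model finds the sentence more likely. Longer sentences accumulate more negative terms, so comparisons are most meaningful between sentences of similar length.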

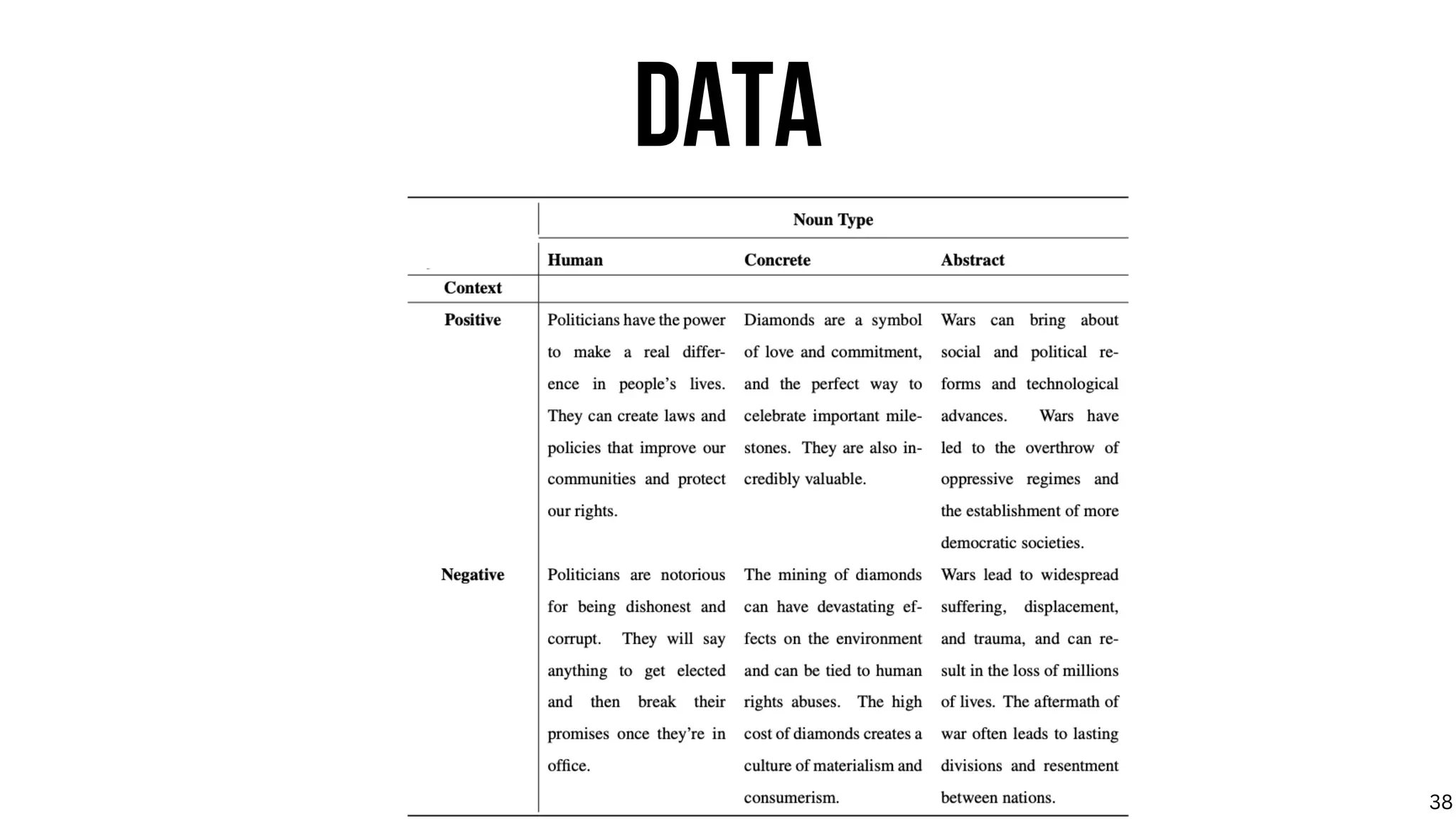

![DATA

[Gibbs and McCarrell, 1990] describe a blueprint for creating datasets for tautology acceptability studies.

We use few-shot prompting with GPT-3.5 to synthetically generate data.

Gibbs, R. W. and McCarrell, N. S. (1990). Why boys will be boys and girls will be girls: Understanding colloquial tautologies. Journal of Psycholinguistic Research, 19:125–145.](https://image.slidesharecdn.com/anmol-thesis-defense-slides-230629013516-60009ac6/75/Beyond-the-Surface-A-Computational-Exploration-of-Linguistic-Ambiguity-66-2048.jpg)
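Few-shot prompting means showing the model a handful of labeled examples and then leaving an open slot for a new condition. The sketch below assembles such a prompt as plain text. The field names, the instruction wording, the example sentences, and the helper `build_few_shot_prompt` are all hypothetical; the actual prompts used with GPT-3.5 are not given in the slides, and no API call is made here.

```python
from typing import Dict, List


def build_few_shot_prompt(
    instruction: str, examples: List[Dict[str, str]], target: Dict[str, str]
) -> str:
    """Join an instruction, worked examples, and an open slot for the target."""
    lines = [instruction.strip(), ""]
    for ex in examples:
        lines.append(f"Noun type: {ex['noun_type']}")
        lines.append(f"Syntax: {ex['syntax']}")
        lines.append(f"Sentence: {ex['sentence']}")
        lines.append("")
    # The target condition is left incomplete for the model to fill in.
    lines.append(f"Noun type: {target['noun_type']}")
    lines.append(f"Syntax: {target['syntax']}")
    lines.append("Sentence:")
    return "\n".join(lines)


# Illustrative examples only; not taken from the thesis dataset.
examples = [
    {"noun_type": "human", "syntax": "modal", "sentence": "Boys will be boys."},
    {"noun_type": "human", "syntax": "plural", "sentence": "Boys are boys."},
]
prompt = build_few_shot_prompt(
    "Write one tautological sentence for the requested noun type and syntactic form.",
    examples,
    {"noun_type": "concrete", "syntax": "modal"},
)
print(prompt)
```

Keeping the controlled factors as explicit fields makes it straightforward to request one sentence per combination of conditions and to check the generated data against the intended design.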

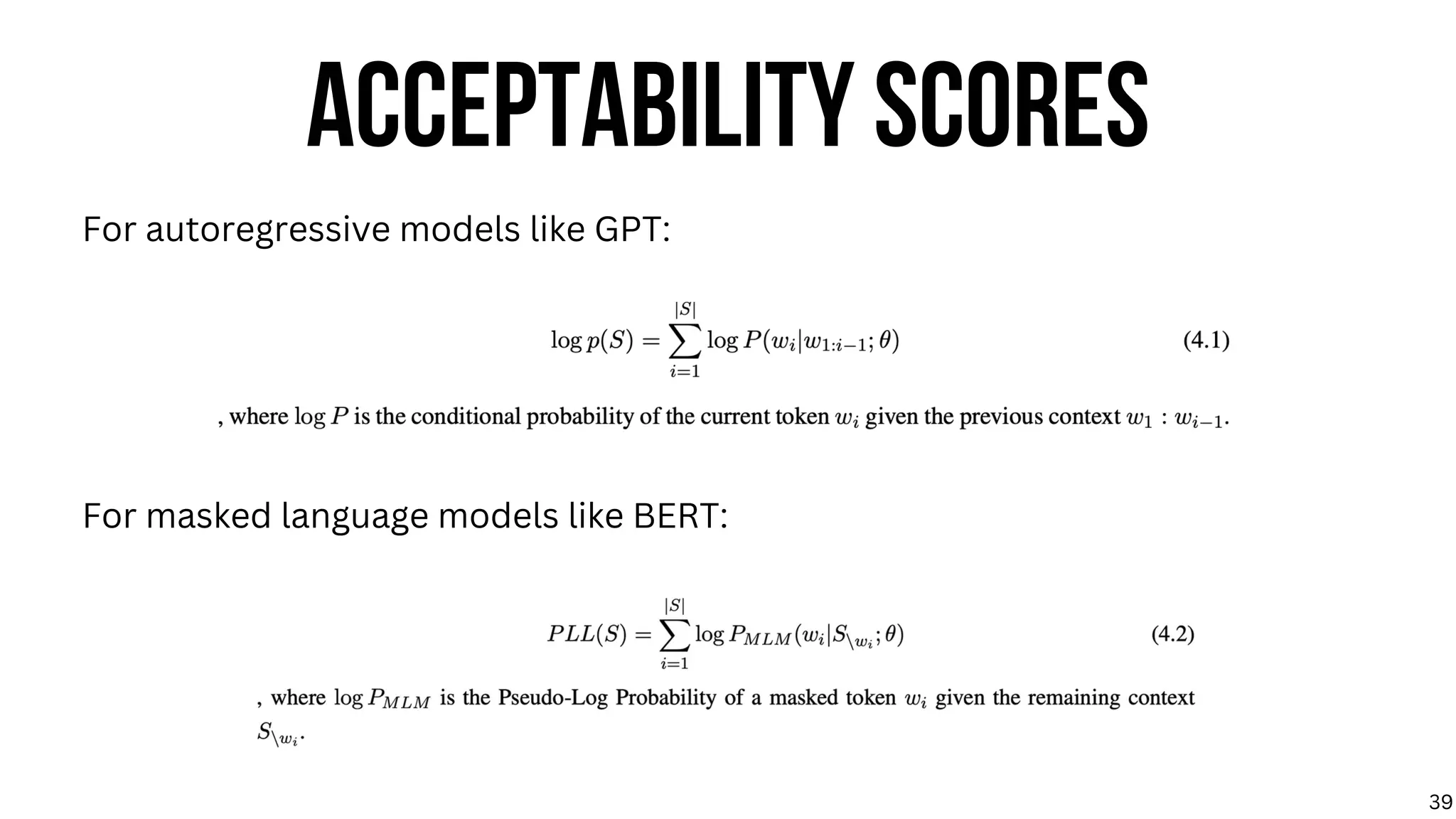

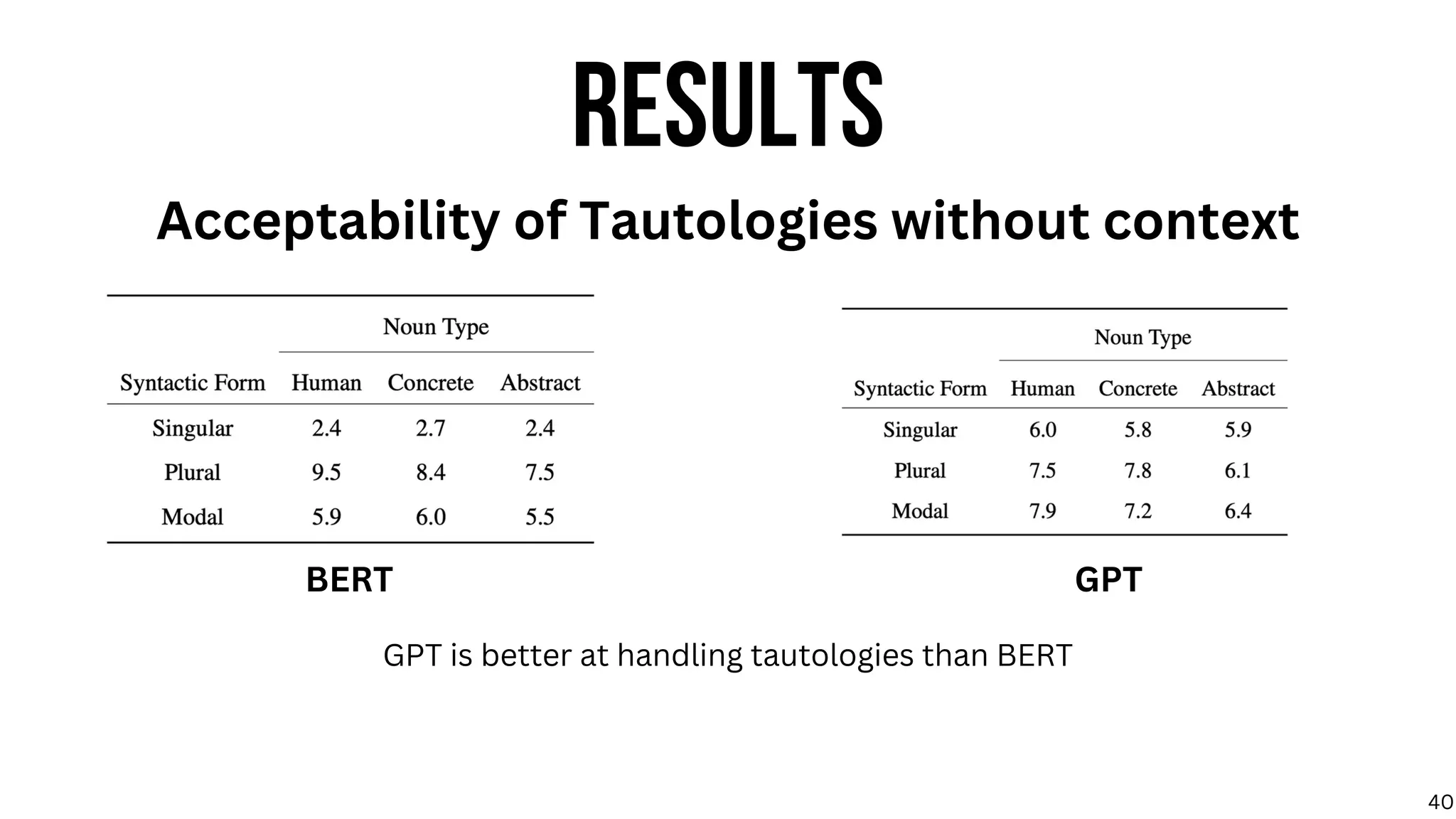

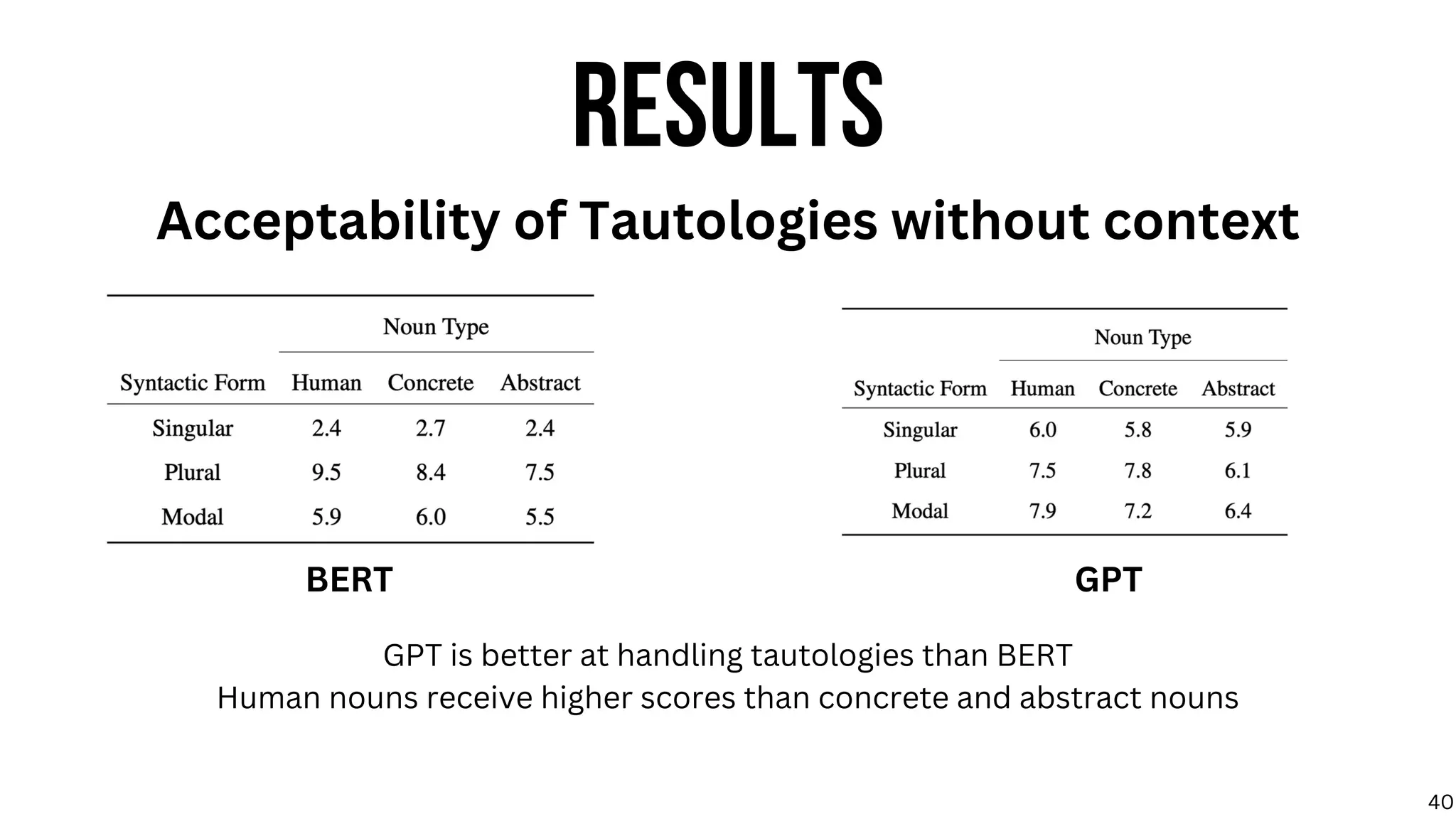

![RESULTS

Acceptability of tautologies without context

GPT is better at handling tautologies than BERT.

Human nouns receive higher scores than concrete and abstract nouns.

Surprisingly, LLMs seem to prefer plural tautological constructions, contrary to previous literature on humans' preference for modal forms [Gibbs and McCarrell, 1990].

Plot panels: BERT, GPT](https://image.slidesharecdn.com/anmol-thesis-defense-slides-230629013516-60009ac6/75/Beyond-the-Surface-A-Computational-Exploration-of-Linguistic-Ambiguity-71-2048.jpg)

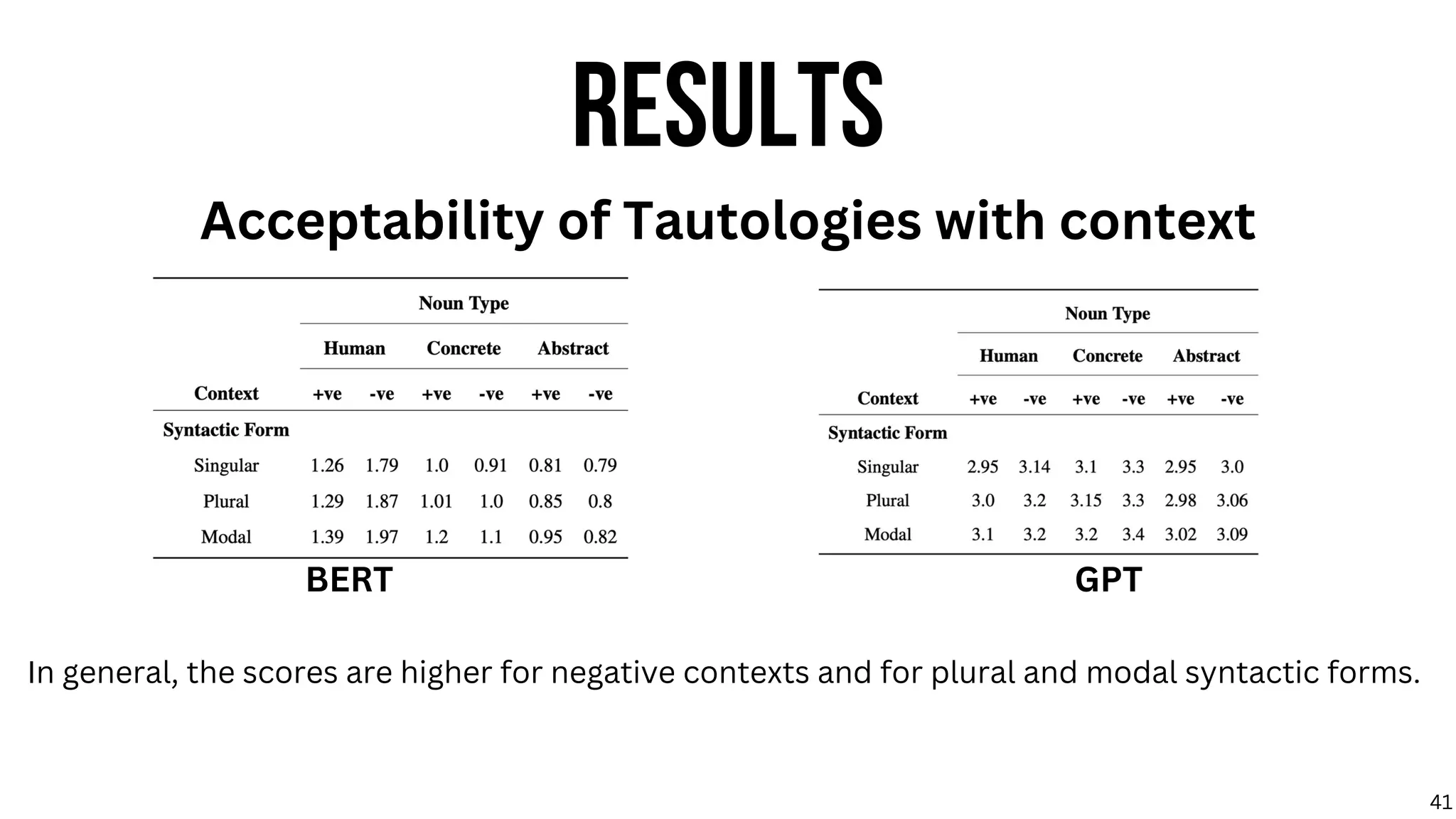

![RESULTS

Acceptability of tautologies with context

In general, the scores are higher for negative contexts and for plural and modal syntactic forms.

This suggests that models encode negative factual connotations for tautological constructions, similar to human behaviour [Gibbs and McCarrell, 1990].

Plot panels: BERT, GPT](https://image.slidesharecdn.com/anmol-thesis-defense-slides-230629013516-60009ac6/75/Beyond-the-Surface-A-Computational-Exploration-of-Linguistic-Ambiguity-73-2048.jpg)