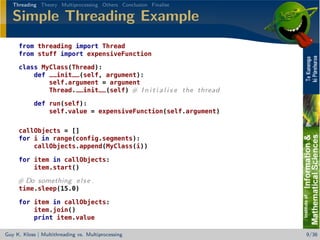

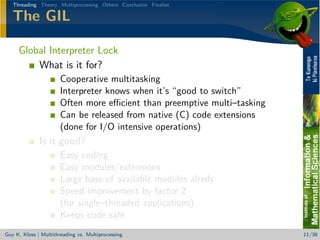

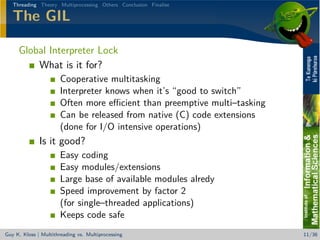

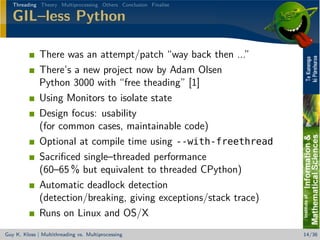

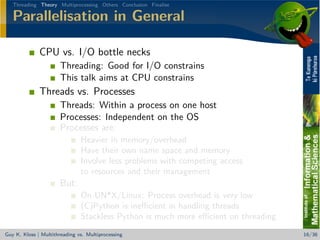

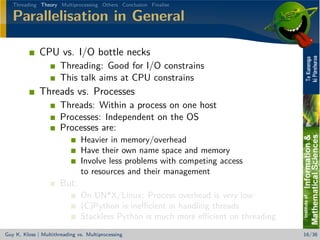

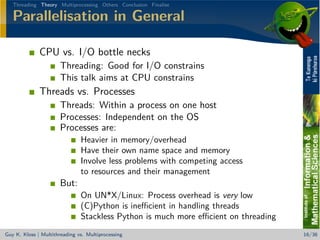

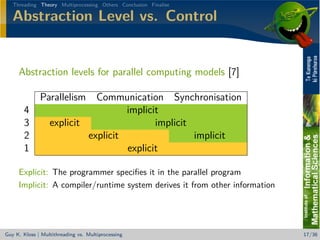

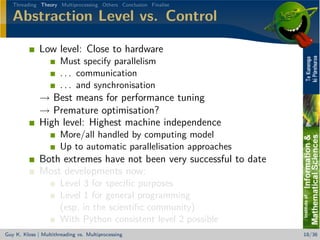

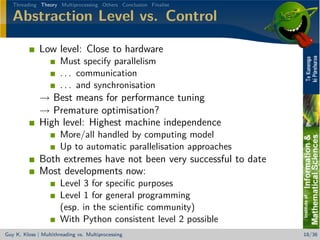

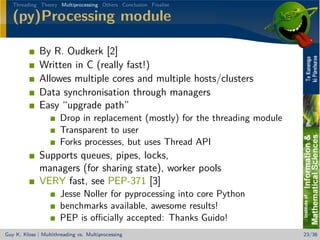

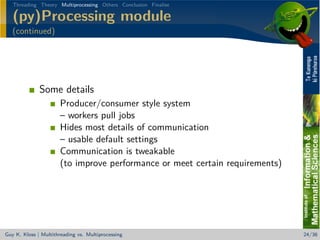

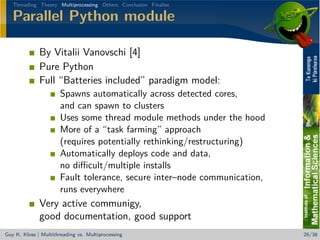

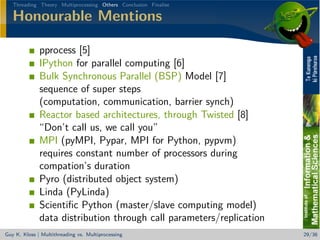

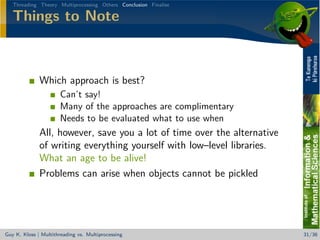

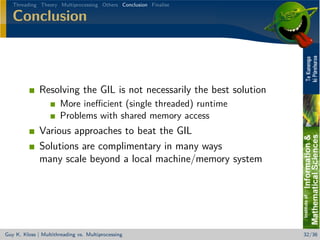

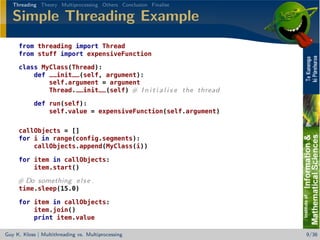

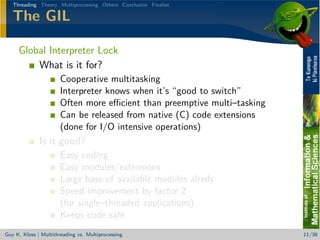

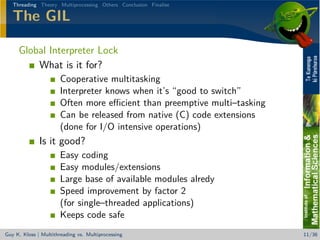

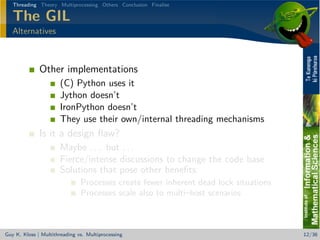

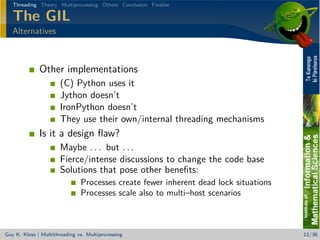

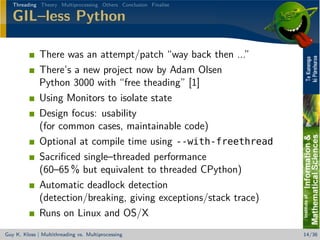

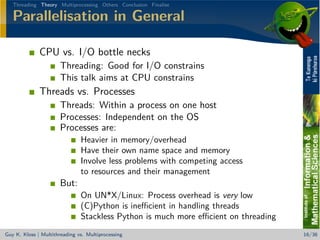

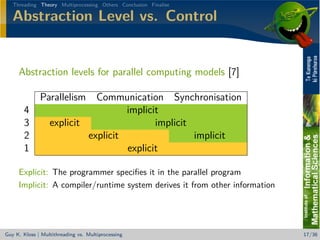

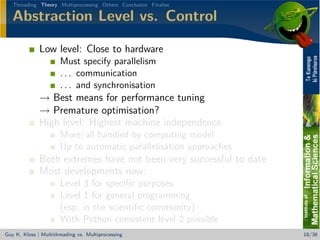

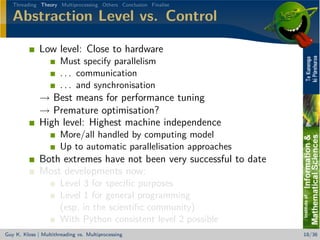

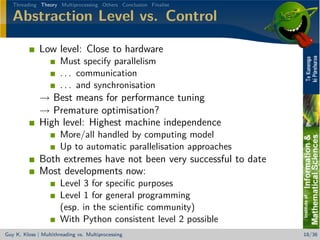

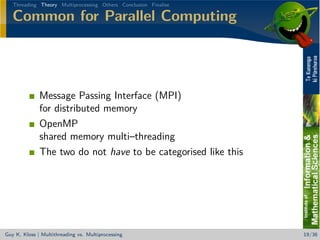

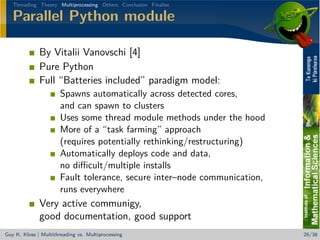

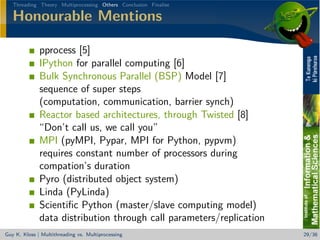

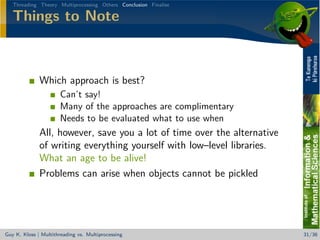

This presentation covers multithreading and multiprocessing in Python, weighing the challenges and benefits of each approach, particularly with respect to the Global Interpreter Lock (GIL). It also surveys alternatives and solutions for managing parallelism and the implications of choosing threads versus processes. The speaker emphasizes that understanding these concepts is important for handling CPU-bound and I/O-bound work efficiently.
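
To make the threads-versus-processes distinction concrete, here is a minimal sketch that runs the same CPU-bound function under a thread pool and a process pool using the standard library's `concurrent.futures`. The function `count_primes`, the helper `run_with`, and the workload sizes are illustrative assumptions and are not taken from the slides.

```python
import time
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor


def count_primes(limit):
    """CPU-bound work (hypothetical example): count primes below `limit`."""
    count = 0
    for n in range(2, limit):
        # Trial division up to the integer square root of n.
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def run_with(executor_cls, limits, workers=4):
    """Map count_primes over `limits` with the given executor class.

    Returns (results, elapsed_seconds).
    """
    start = time.perf_counter()
    with executor_cls(max_workers=workers) as pool:
        results = list(pool.map(count_primes, limits))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    # The guard matters for process pools on platforms that spawn workers
    # by re-importing the main module.
    limits = [30_000] * 4  # assumed workload; adjust for your machine
    for cls in (ThreadPoolExecutor, ProcessPoolExecutor):
        results, seconds = run_with(cls, limits)
        print(f"{cls.__name__}: {seconds:.2f}s")
```

On a standard CPython build with the GIL, the thread pool usually gives little or no speedup for work like this, while the process pool can use several cores at the cost of process startup and pickling overhead. Actual timings depend on the machine and the Python build, so treat the printed numbers as a rough comparison only. For I/O-bound work the balance typically shifts back toward threads, since the GIL is released while a thread waits on I/O.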