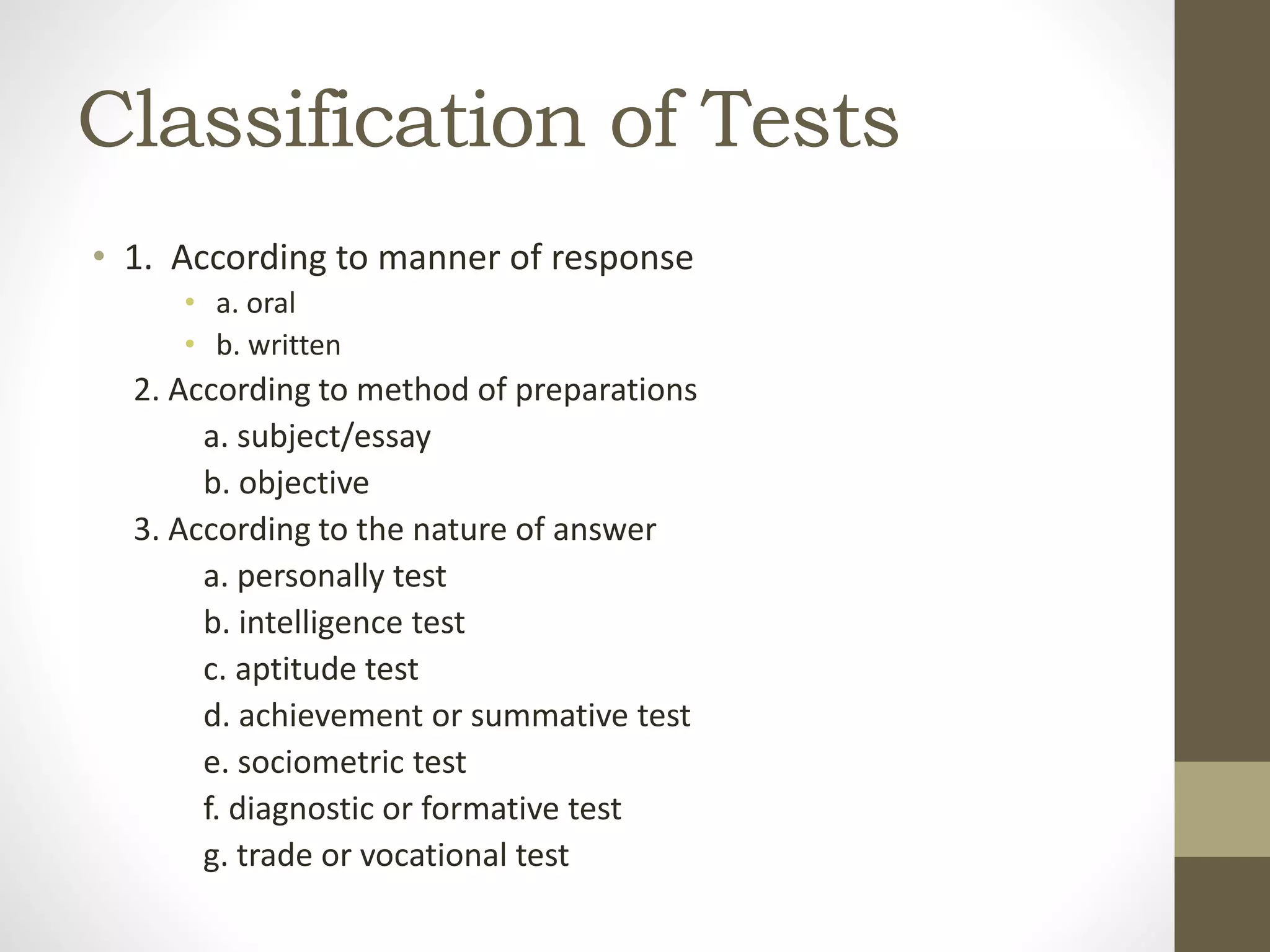

The document covers assessment of learning and educational technology. It describes the assessment tools used to evaluate the teaching and learning process, including tests, measurements, and evaluations. It also outlines audiovisual aids that support instruction, such as still photography, motion pictures, and multimedia equipment, and defines common classroom devices and their purposes.