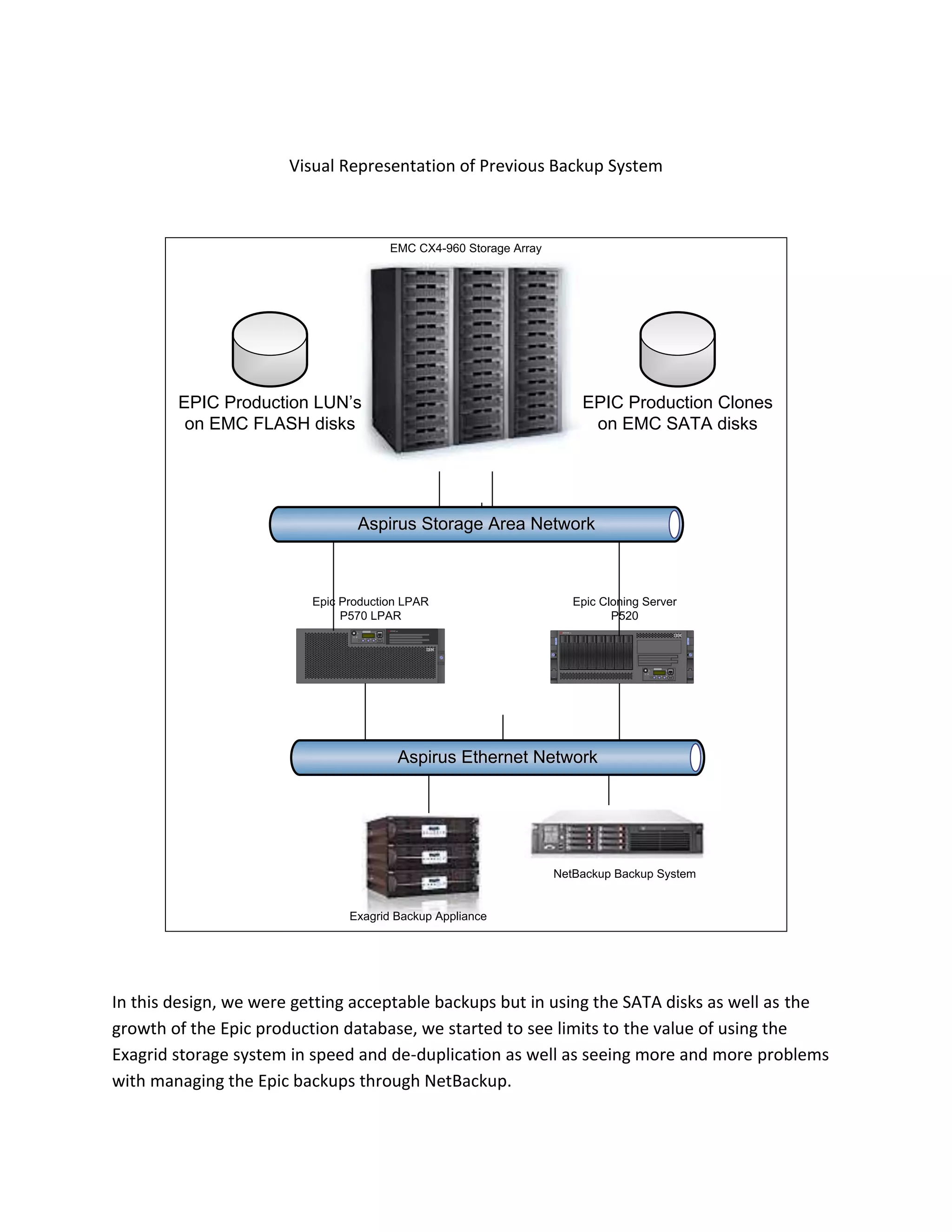

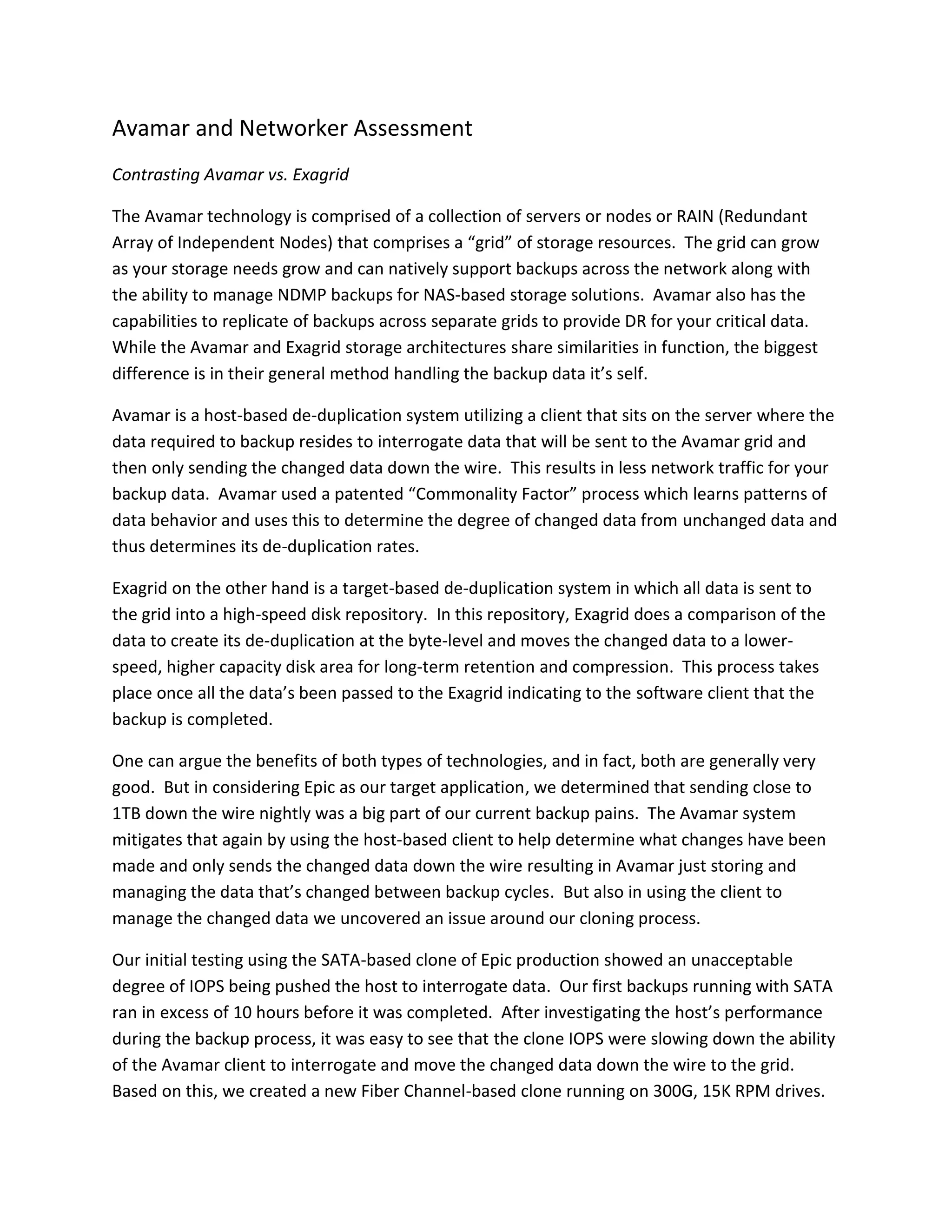

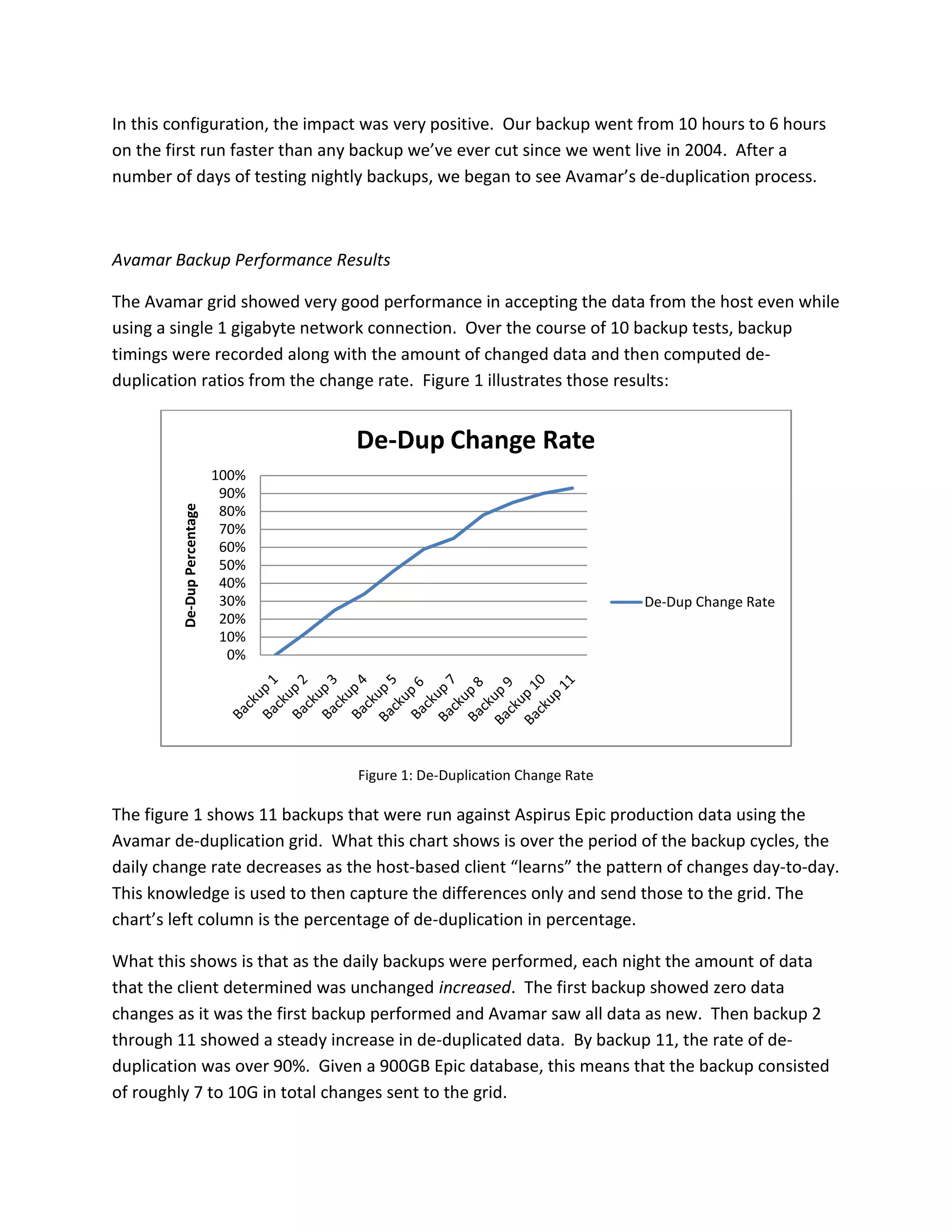

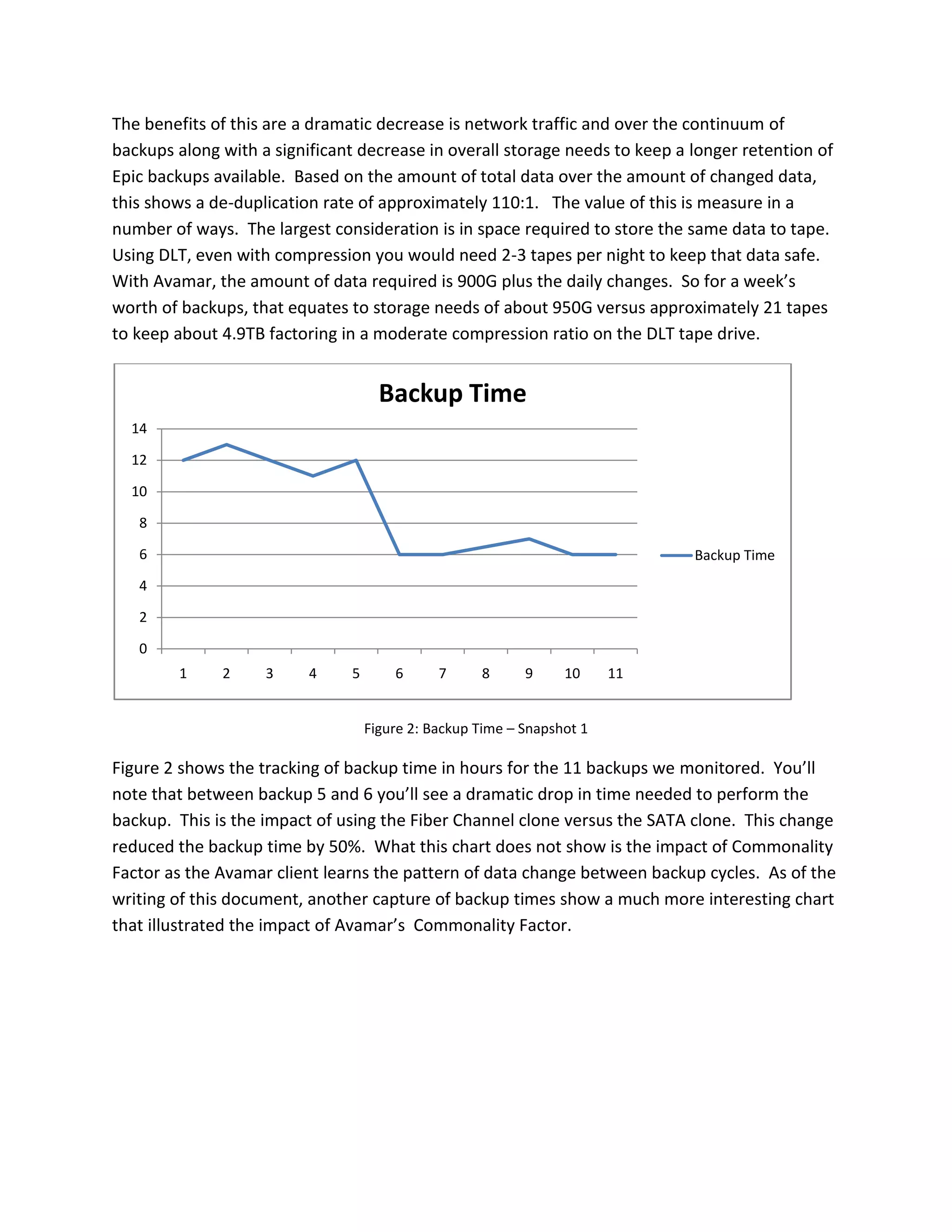

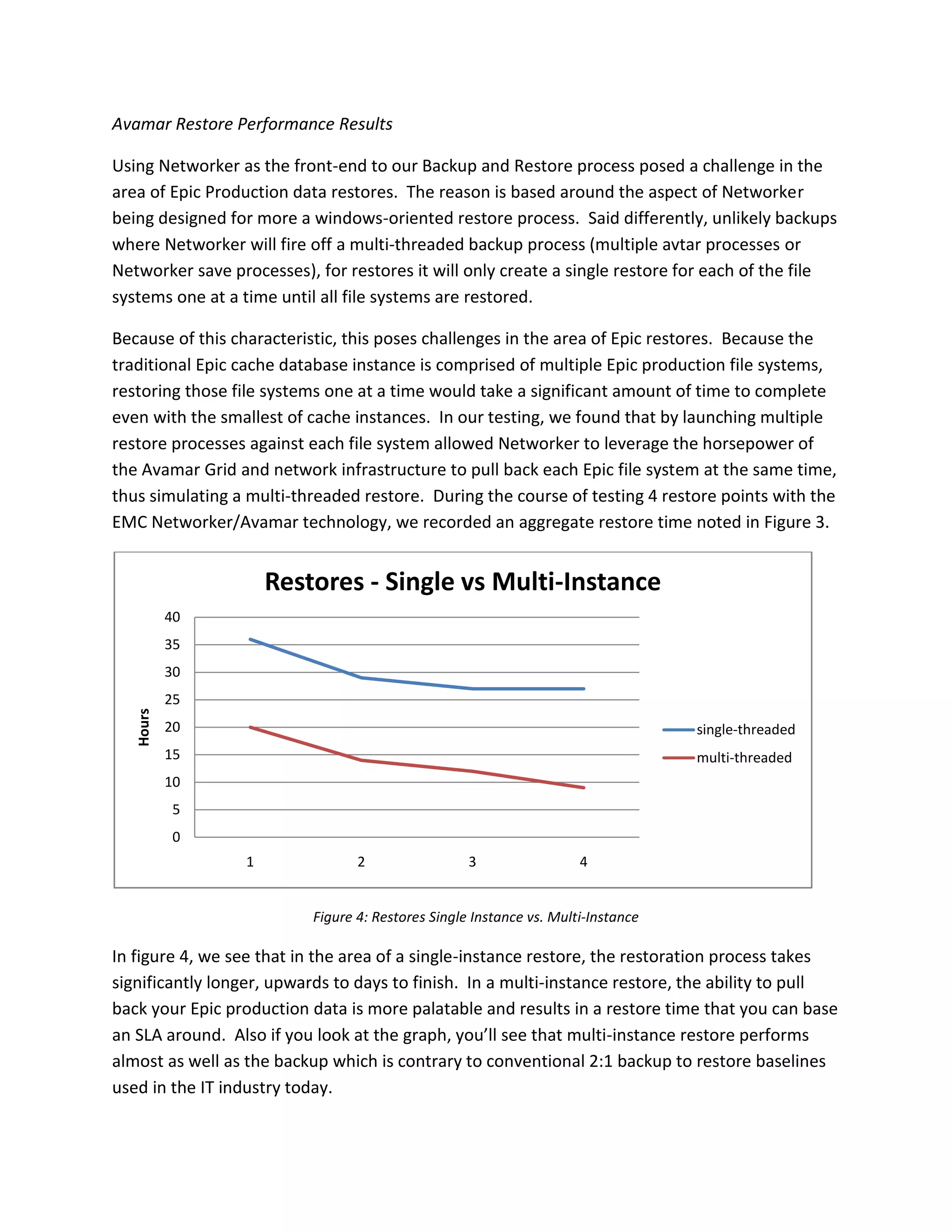

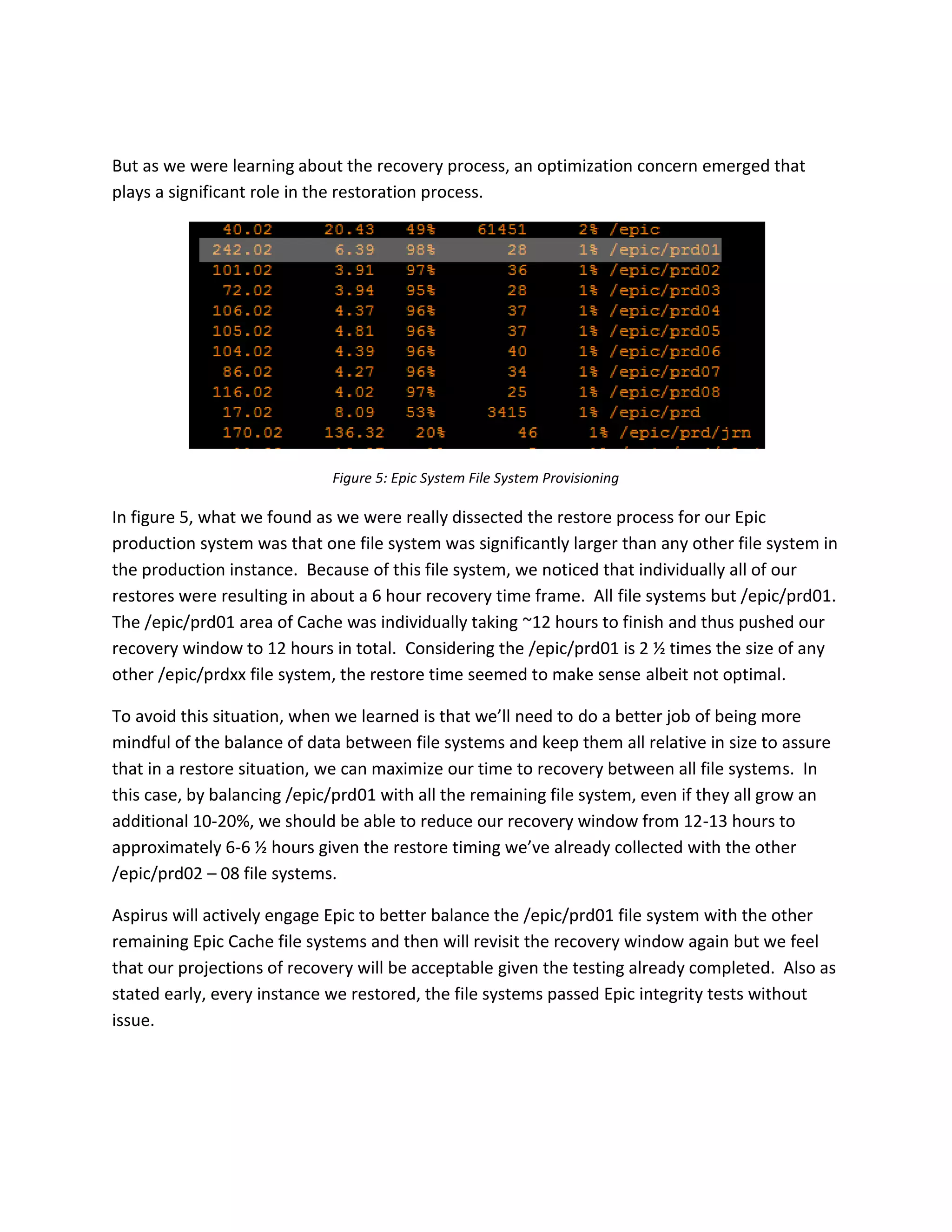

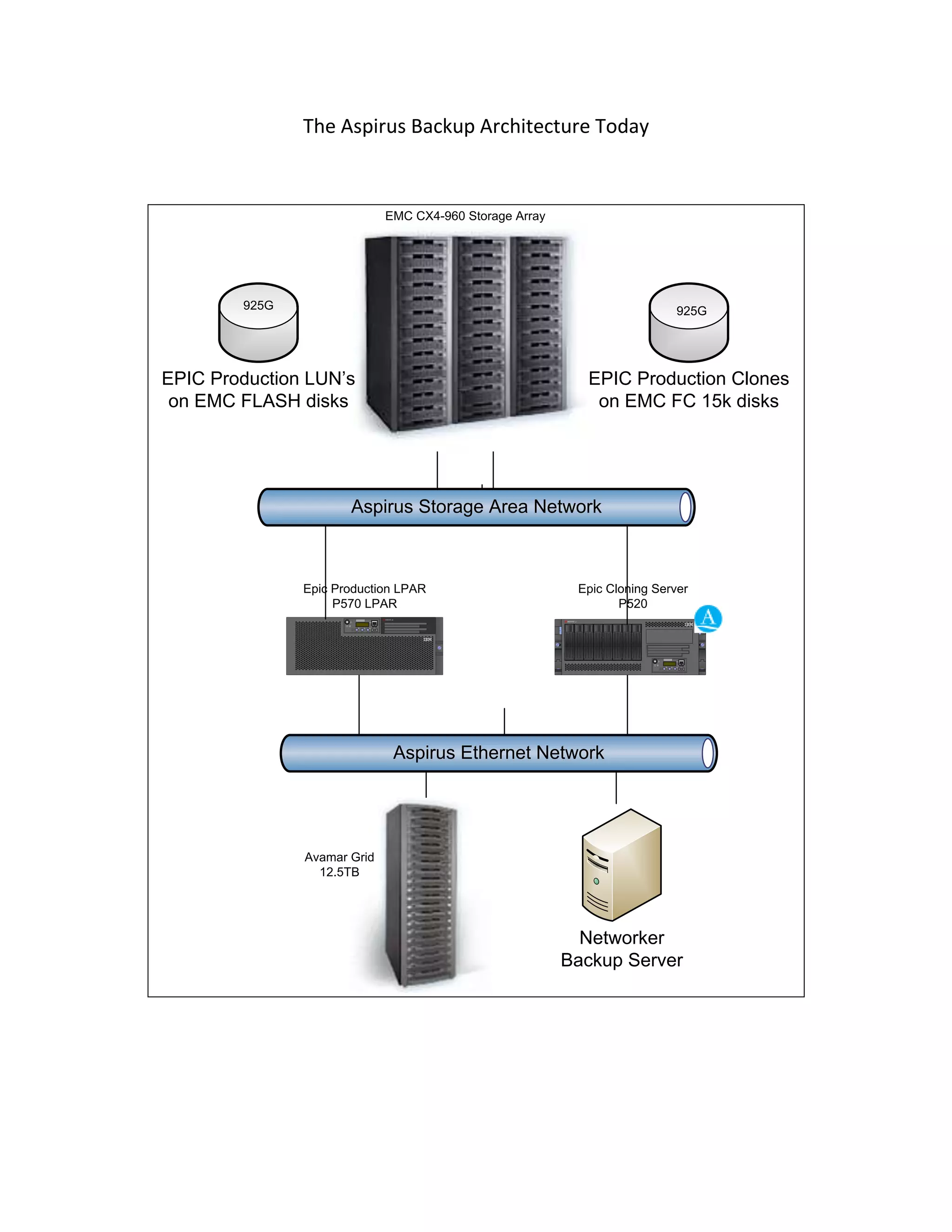

Aspirus implemented EMC's Avamar backup solution to address long backup times and inconsistent backups with its previous ExaGrid and NetBackup system. In testing, Avamar achieved significantly higher deduplication rates than the previous system, exceeding 110:1, and cut backup times from more than 10 hours to under 6 hours. Avamar's improved performance and reliability, together with its lower storage requirements, reduced costs and freed IT staff to focus on more strategic work.