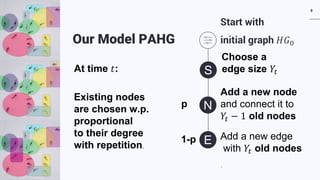

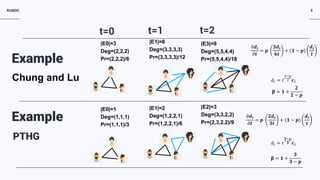

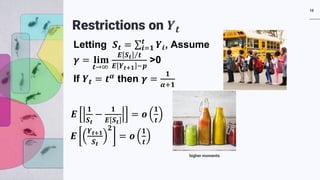

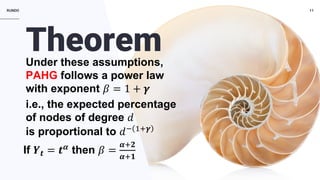

The document discusses PAHG, a preferential attachment model for hypergraphs. Starting from an initial hypergraph, PAHG grows over time by adding new nodes and new hyperedges. New nodes attach preferentially to existing nodes, with probability proportional to their degree, while new hyperedges connect existing nodes. The model yields power-law degree distributions whose exponent depends on the rate of growth of hyperedge sizes. Because many real-world networks are best modeled as hypergraphs, PAHG provides a way to analyze hypergraph growth and properties directly. The document also lists open problems about PAHG, such as its core size, expansion, influence, and diameter.
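The growth process summarized above can be illustrated with a minimal Python sketch. It is not the authors' exact definition of PAHG. It assumes a nonempty initial hypergraph over integer node ids 0..n-1, a hypothetical vertex-event probability `p`, and a hypothetical caller-supplied `size_sampler` that draws each new hyperedge's size. Members are drawn with replacement, so a hyperedge may repeat a node, and "degree" here counts hyperedge memberships.

```python
import random
from collections import Counter
from typing import Callable, List


def grow_pa_hypergraph(
    initial_edges: List[List[int]],
    steps: int,
    p: float,
    size_sampler: Callable[[random.Random], int],
    seed: int = 0,
) -> List[List[int]]:
    """Grow a hypergraph by degree-proportional attachment (illustrative sketch).

    Hypothetical parameters: `p` is the probability of a node event and
    `size_sampler` returns the size (an int >= 1) of each new hyperedge.
    Assumes a nonempty initial hypergraph whose node ids are 0..n-1.
    """
    rng = random.Random(seed)
    edges = [list(e) for e in initial_edges]
    # A node appears here once per hyperedge membership, so a uniform draw
    # picks each node with probability proportional to its degree.
    memberships = [v for e in edges for v in e]
    next_node = max(memberships) + 1
    for _ in range(steps):
        size = size_sampler(rng)
        if rng.random() < p:
            # Node event: a new node joins a new hyperedge together with
            # size - 1 existing nodes chosen preferentially.
            new_edge = [next_node] + [rng.choice(memberships) for _ in range(size - 1)]
            next_node += 1
        else:
            # Hyperedge event: a new hyperedge over `size` existing nodes,
            # each chosen preferentially (with replacement).
            new_edge = [rng.choice(memberships) for _ in range(size)]
        edges.append(new_edge)
        memberships.extend(new_edge)
    return edges


# Usage: sizes drawn uniformly from {2, 3, 4}, an arbitrary illustrative choice.
hyperedges = grow_pa_hypergraph([[0, 1]], steps=1000, p=0.5,
                                size_sampler=lambda r: r.randint(2, 4), seed=7)
degree = Counter(v for e in hyperedges for v in e)
```

Keeping a flat membership list makes each preferential draw O(1), at the cost of memory proportional to the sum of all hyperedge sizes.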

![Slide 6: Power Law Distributions](https://image.slidesharecdn.com/asonam-2019-zvi-3-s-190905115103/85/Asonam-2019-zvi-3-s-6-320.jpg)

Power Law Distributions

$$P[x = k] \sim \frac{1}{k^{\beta}}$$

1. Observed in both network and non-network structures
2. “On Power-Law Relationships of the Internet Topology” (Faloutsos, Faloutsos, and Faloutsos, 1999)
3. “Emergence of Scaling in Random Networks” (Barabási and Albert, 1999)
4. “Networks of Scientific Papers” (de Solla Price, 1965)
5. Word frequencies, net worth, city populations, etc.
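The proportionality on this slide can be made concrete with a short Python sketch. It assumes the support is truncated at a hypothetical cutoff `k_max` so the probabilities can be normalized by a finite sum (the slide states only the proportionality). It then checks the defining property of a power law: on log-log axes, $\log P[x = k]$ is a straight line in $\log k$ with slope $-\beta$.

```python
import math
from typing import List


def power_law_pmf(beta: float, k_max: int) -> List[float]:
    """Return P[x = k] for k = 1..k_max, with P[x = k] proportional to 1 / k**beta.

    The cutoff k_max is an assumption that makes the normalizing sum finite.
    """
    weights = [k ** -beta for k in range(1, k_max + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def log_log_slope(pmf: List[float], k1: int, k2: int) -> float:
    """Slope of log P[x = k] against log k between k1 and k2 (both 1-indexed)."""
    return (math.log(pmf[k2 - 1]) - math.log(pmf[k1 - 1])) / (math.log(k2) - math.log(k1))


pmf = power_law_pmf(beta=2.5, k_max=1000)
print(round(sum(pmf), 6))                    # 1.0
print(round(log_log_slope(pmf, 2, 50), 6))   # -2.5
```

The normalizing constant cancels in the slope, which is why the exponent can be read off a log-log plot without knowing it.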

![Slide 7: Preferential Attachment Process](https://image.slidesharecdn.com/asonam-2019-zvi-3-s-190905115103/85/Asonam-2019-zvi-3-s-7-320.jpg)

Preferential Attachment Process

In step $t$, vertex $v_t$ arrives, and

$$\Pr[v_t \text{ connects to } v_i] = \frac{d_i}{\sum_j d_j}.$$

Alternatively, in step $t$ exactly one of two events occurs:

1. Vertex event $v_t$, with probability $p$: $\Pr[v_t \text{ connects to } v_i] = \frac{d_i}{\sum_j d_j}$
2. Edge event $e_t$, with probability $1 - p$: $\Pr[v_k \text{ connects to } v_i] = \frac{d_i}{\sum_j d_j} \cdot \frac{d_k}{\sum_j d_j}$

History: Udny Yule (1925), Price (1976), Barabási and Albert (1999); Chung and Lu (2006).
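The vertex-event/edge-event process on this slide can be simulated with a minimal Python sketch. Assumptions not stated on the slide: the graph starts from a single edge between vertices 0 and 1, each vertex event adds exactly one edge, and self-loops and multi-edges are allowed. The names `pa_graph`, `steps`, and `seed` are hypothetical. With `p = 1.0` only vertex events occur, which recovers the first formula above.

```python
import random
from collections import Counter
from typing import List, Tuple


def pa_graph(steps: int, p: float, seed: int = 0) -> List[Tuple[int, int]]:
    """Simulate preferential attachment with vertex events and edge events.

    With probability p a new vertex v_t connects to an existing v_i chosen with
    probability d_i / sum_j d_j; with probability 1 - p a new edge joins two
    existing vertices v_k and v_i, each chosen independently in proportion to degree.
    """
    rng = random.Random(seed)
    # Assumed starting graph: a single edge between vertices 0 and 1.
    edges = [(0, 1)]
    # Each vertex appears once per edge endpoint, so a uniform draw from this
    # list selects v_i with probability d_i / sum_j d_j.
    endpoints = [0, 1]
    next_vertex = 2
    for _ in range(steps):
        if rng.random() < p:
            # Vertex event: the new vertex attaches preferentially.
            u, v = next_vertex, rng.choice(endpoints)
            next_vertex += 1
        else:
            # Edge event: both endpoints are chosen preferentially and independently.
            u, v = rng.choice(endpoints), rng.choice(endpoints)
        edges.append((u, v))
        endpoints.extend((u, v))
    return edges


# Usage: degree counts after 10,000 steps of the classic process (p = 1).
edges = pa_graph(steps=10_000, p=1.0, seed=42)
degrees = Counter(v for e in edges for v in e)
```

Both endpoints of an edge event are drawn before the endpoint list is updated, so the two choices follow the product formula on the slide.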