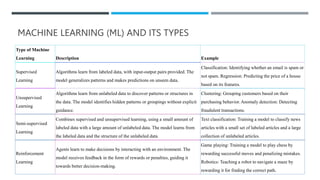

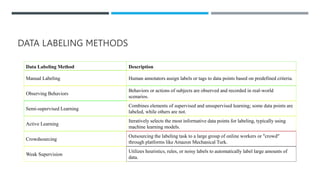

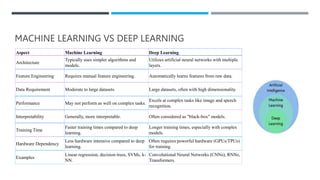

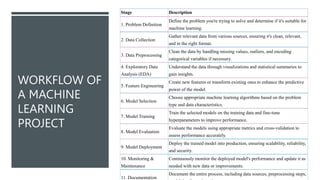

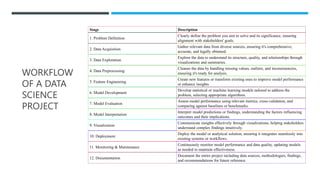

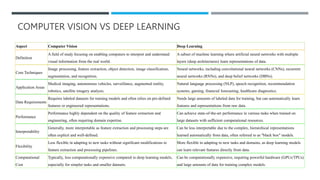

Artificial Intelligence (AI) is the development of systems that perform tasks normally requiring human intelligence. It is commonly divided into three types: Artificial Narrow Intelligence (ANI), Artificial General Intelligence (AGI), and Artificial Super Intelligence (ASI). Machine Learning (ML) is a core subfield of AI whose main paradigms are supervised, unsupervised, and reinforcement learning; its results depend on high-quality data, and its use raises ethical concerns. Advances in deep learning, computer vision, and Generative Adversarial Networks (GANs) support applications across many industries, although challenges such as interpretability and data bias persist.
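
To make the difference between supervised and unsupervised learning concrete, here is a minimal Python sketch on invented toy data. Everything in it is an assumption made for illustration: the data values, the label names, and the helper functions `fit_supervised`, `predict`, and `fit_unsupervised` are hypothetical and not taken from the source. The supervised learner is a nearest-centroid classifier and the unsupervised one is a two-cluster k-means; reinforcement learning is not covered here.

```python
from statistics import mean

# Toy 1-D data (made up for illustration): two loose groups of values.
points = [1.0, 1.2, 0.8, 5.0, 5.3, 4.7]
# Labels are only available to the supervised learner.
labels = ["low", "low", "low", "high", "high", "high"]


def fit_supervised(xs, ys):
    """Supervised: learn one centroid per known label."""
    return {
        label: mean(x for x, y in zip(xs, ys) if y == label)
        for label in set(ys)
    }


def predict(centroids, x):
    """Assign x to the label whose centroid is nearest."""
    return min(centroids, key=lambda label: abs(centroids[label] - x))


def fit_unsupervised(xs, iterations=10):
    """Unsupervised: 2-means clustering, with no labels available."""
    c0, c1 = min(xs), max(xs)  # simple deterministic initialisation
    for _ in range(iterations):
        # Assign each point to the nearer of the two current centroids.
        g0 = [x for x in xs if abs(x - c0) <= abs(x - c1)]
        g1 = [x for x in xs if abs(x - c0) > abs(x - c1)]
        # Move each centroid to the mean of its group (skip empty groups).
        c0 = mean(g0) if g0 else c0
        c1 = mean(g1) if g1 else c1
    return c0, c1


centroids = fit_supervised(points, labels)
print(predict(centroids, 1.1))   # classified using the learned labels
print(fit_unsupervised(points))  # two cluster centres found without labels
```

The supervised model can name its output ("low" or "high") because it was trained on labelled examples. The unsupervised model finds the same two groups but can only report their centres, which is why labelled, high-quality data matters so much in practice.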