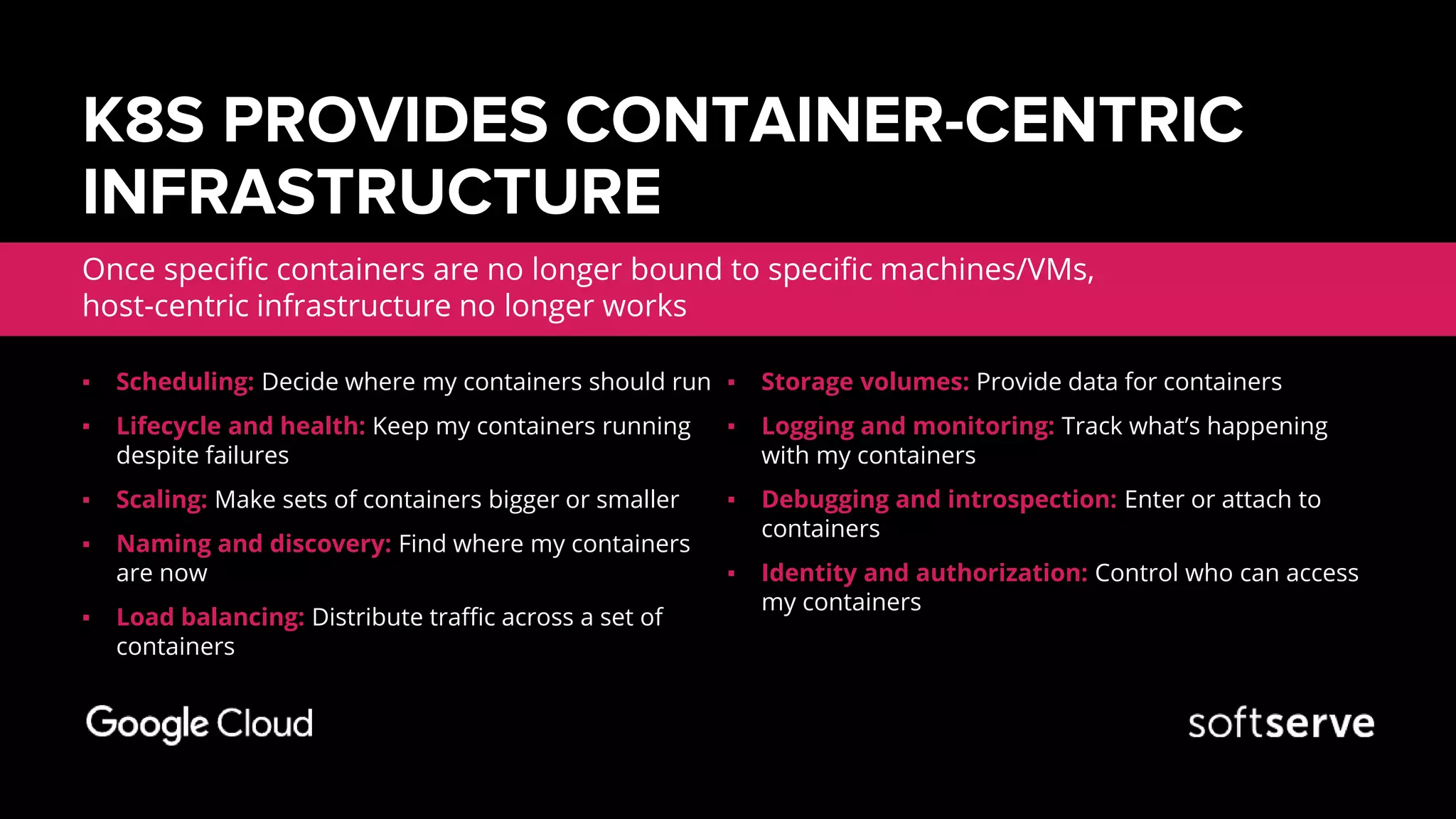

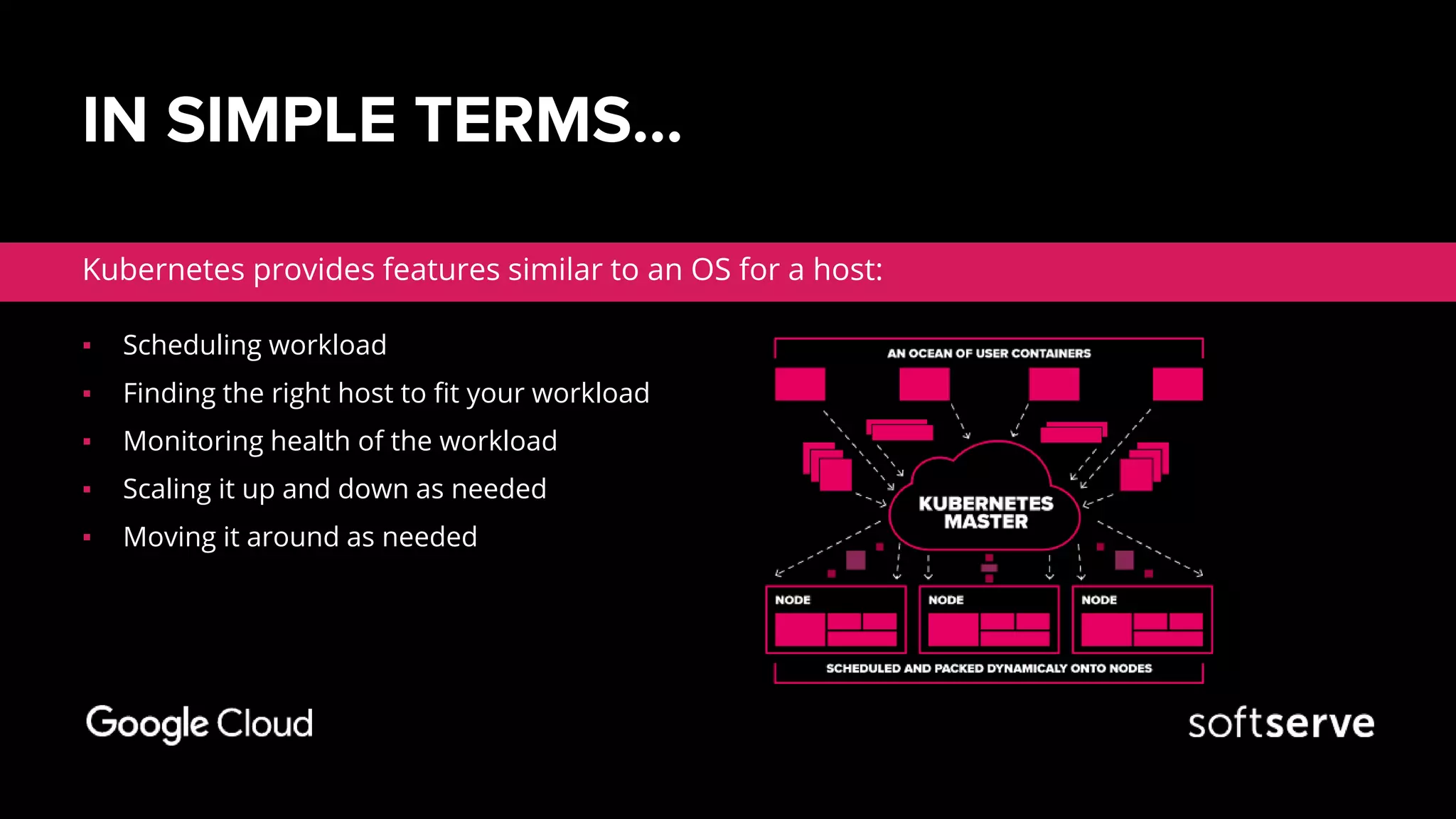

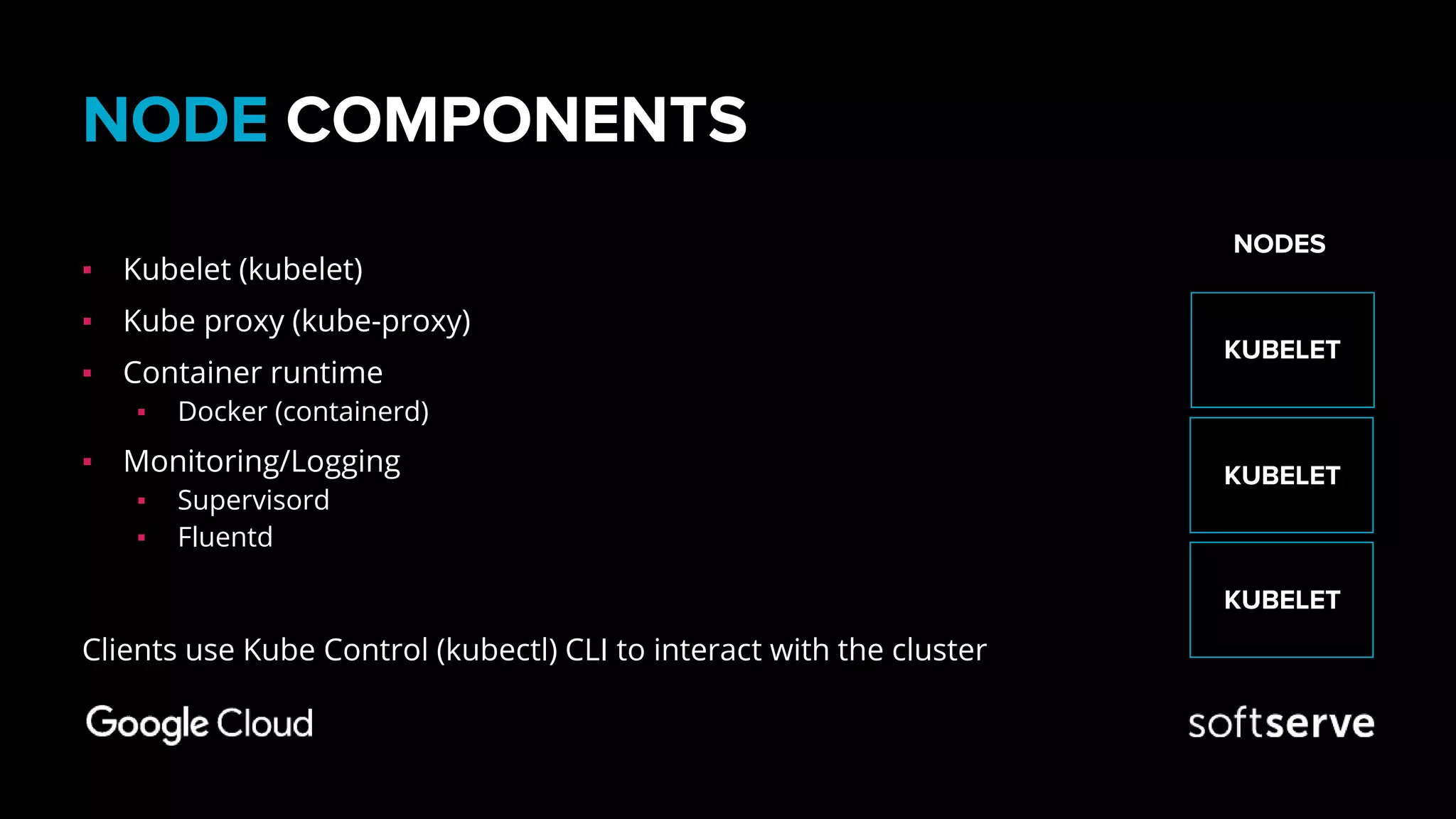

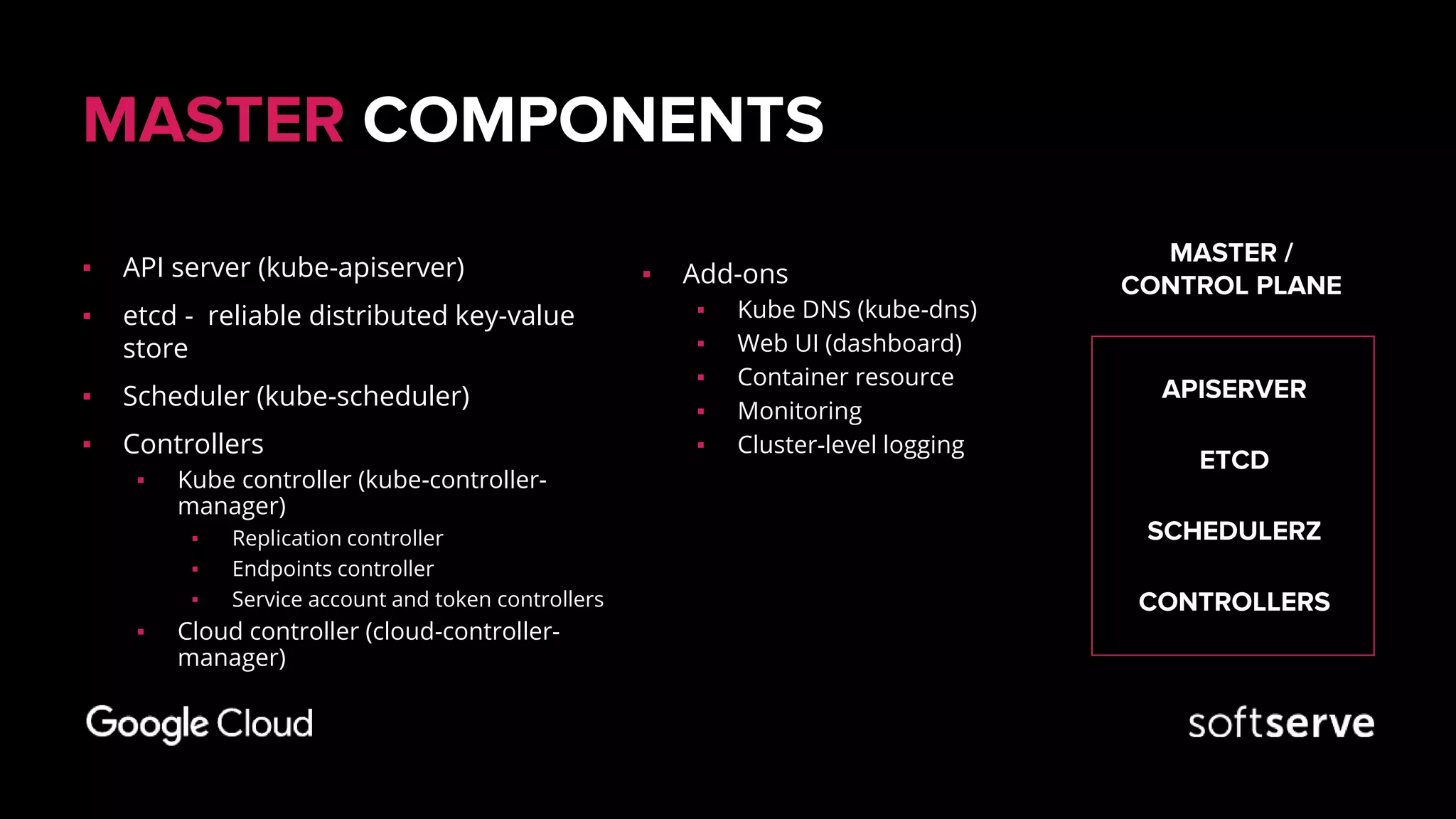

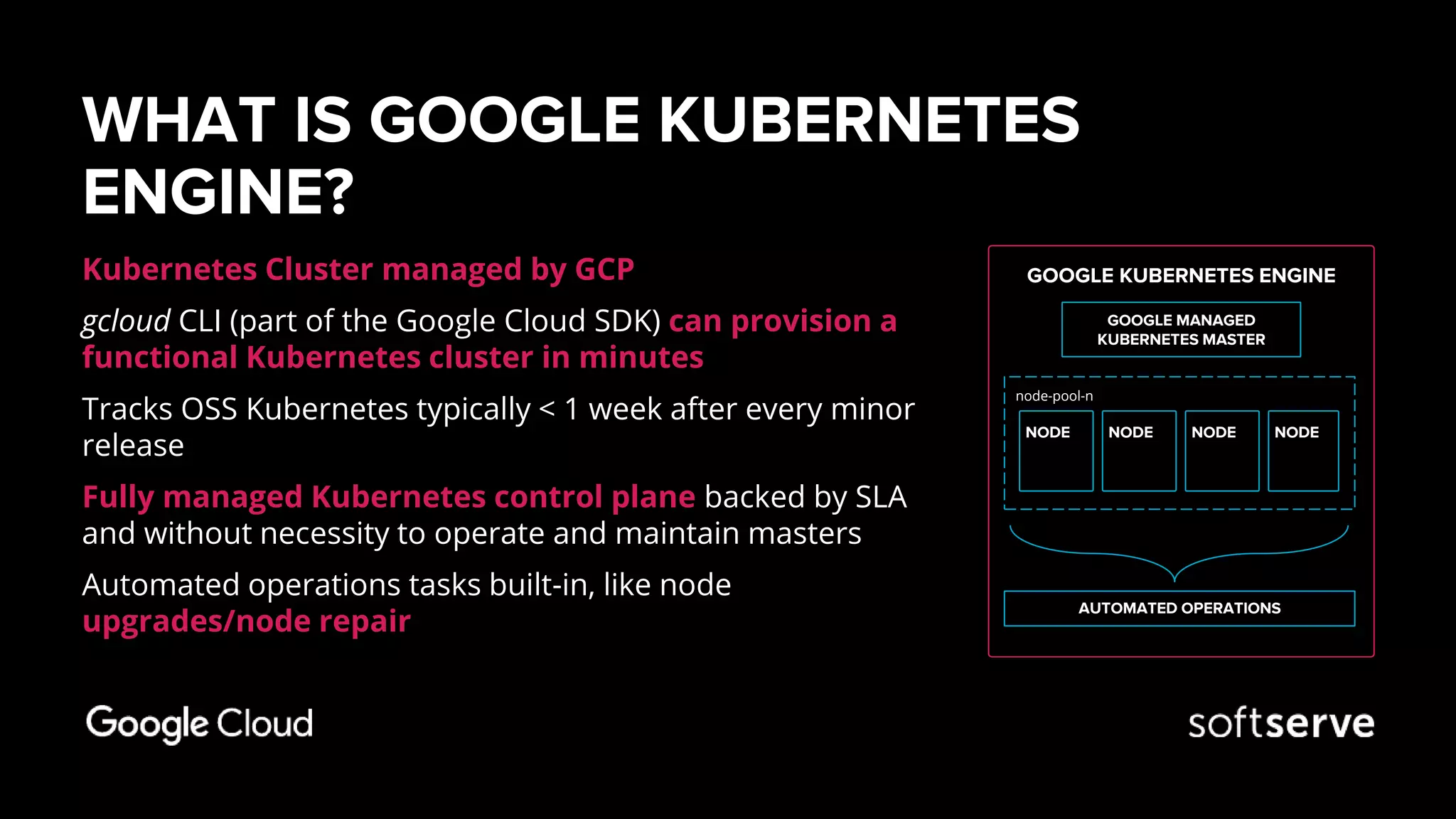

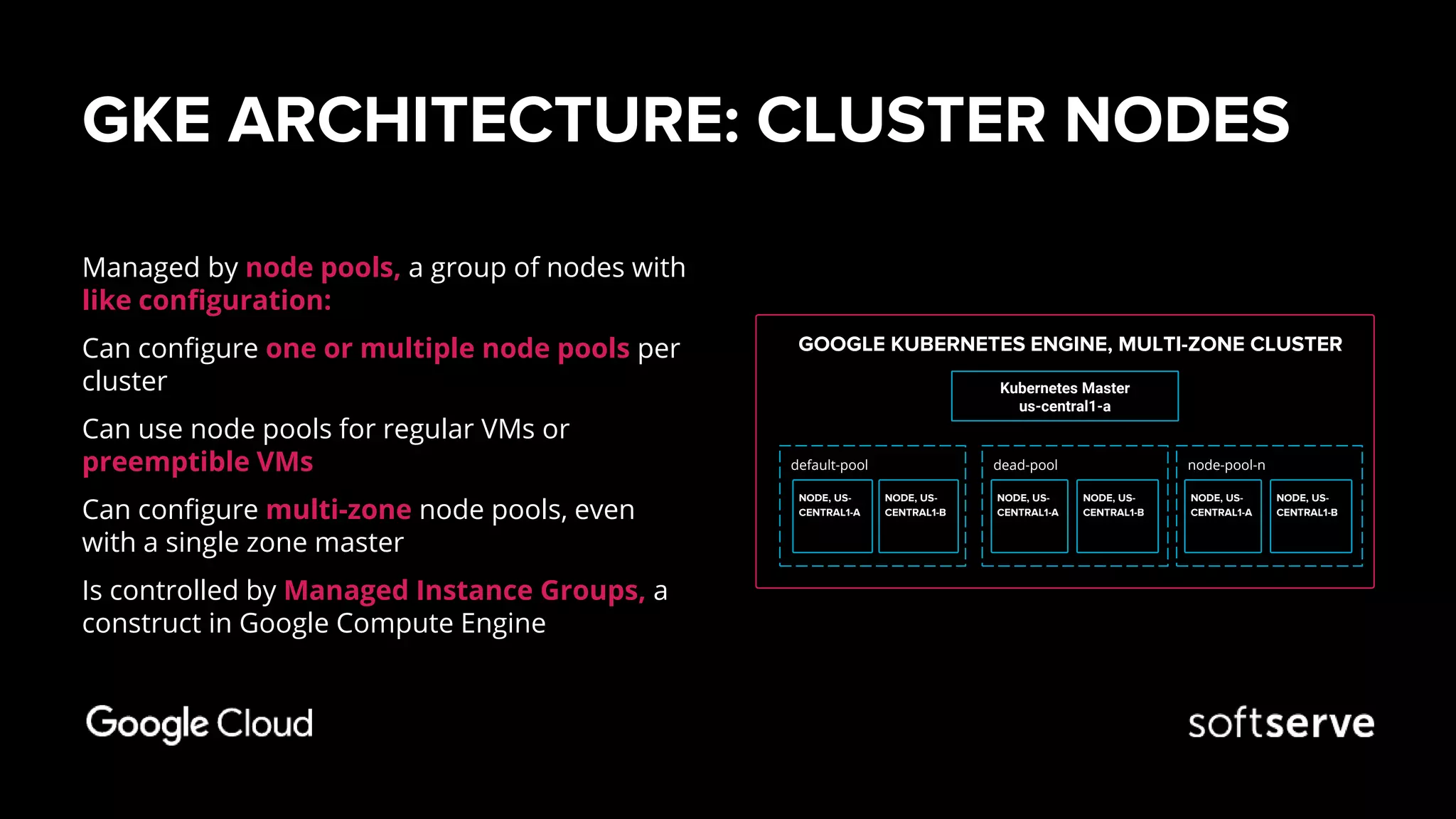

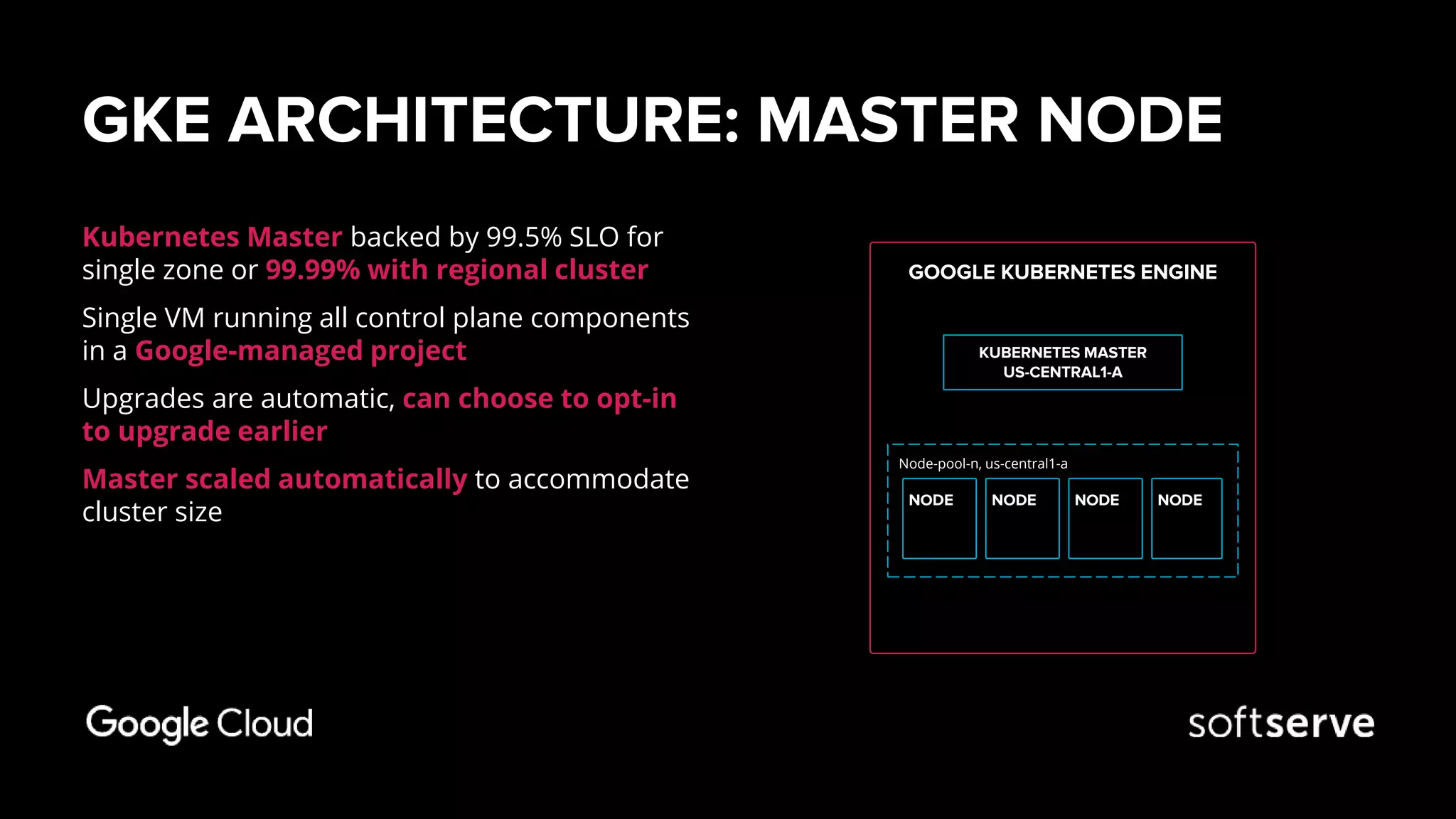

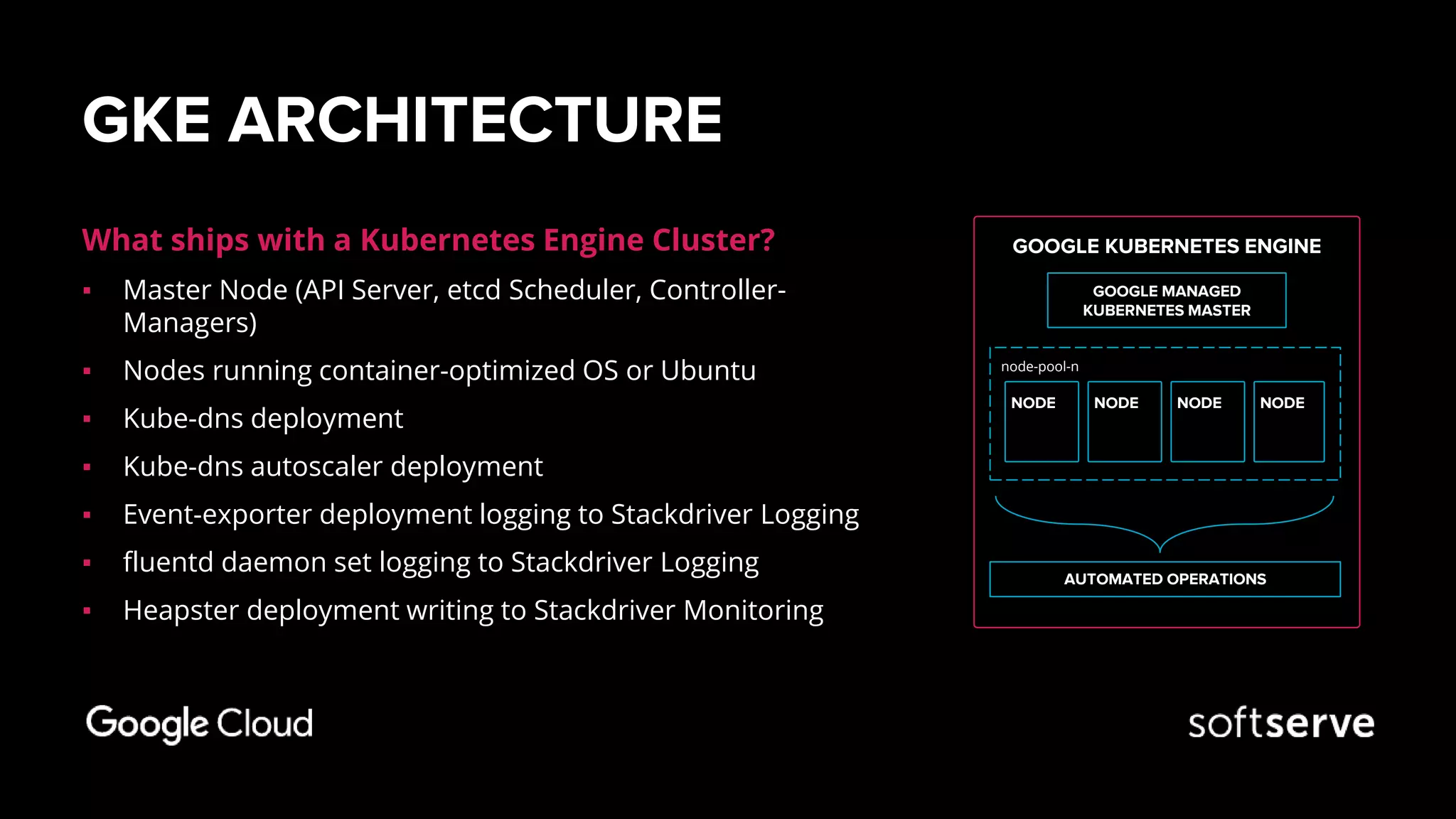

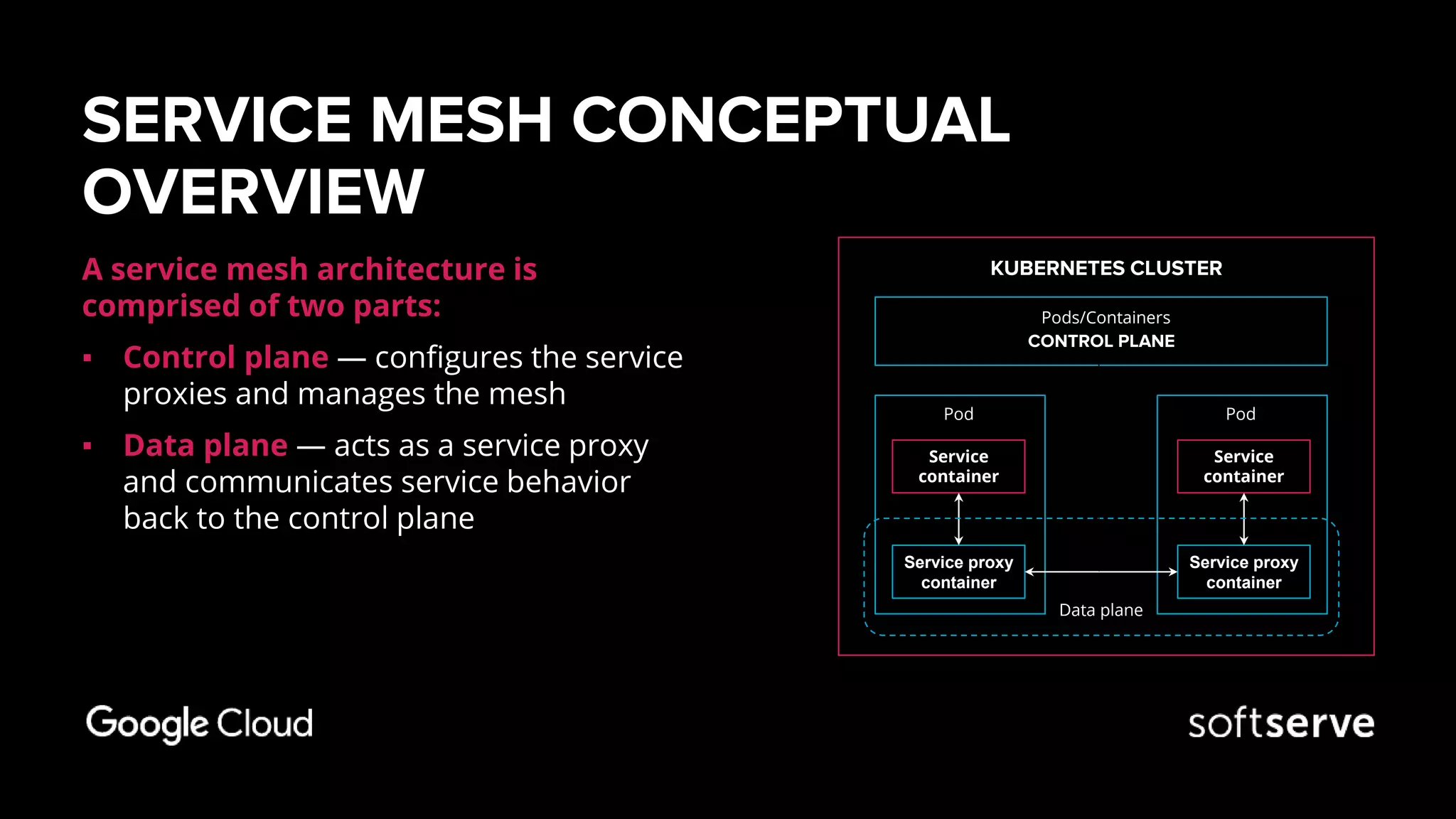

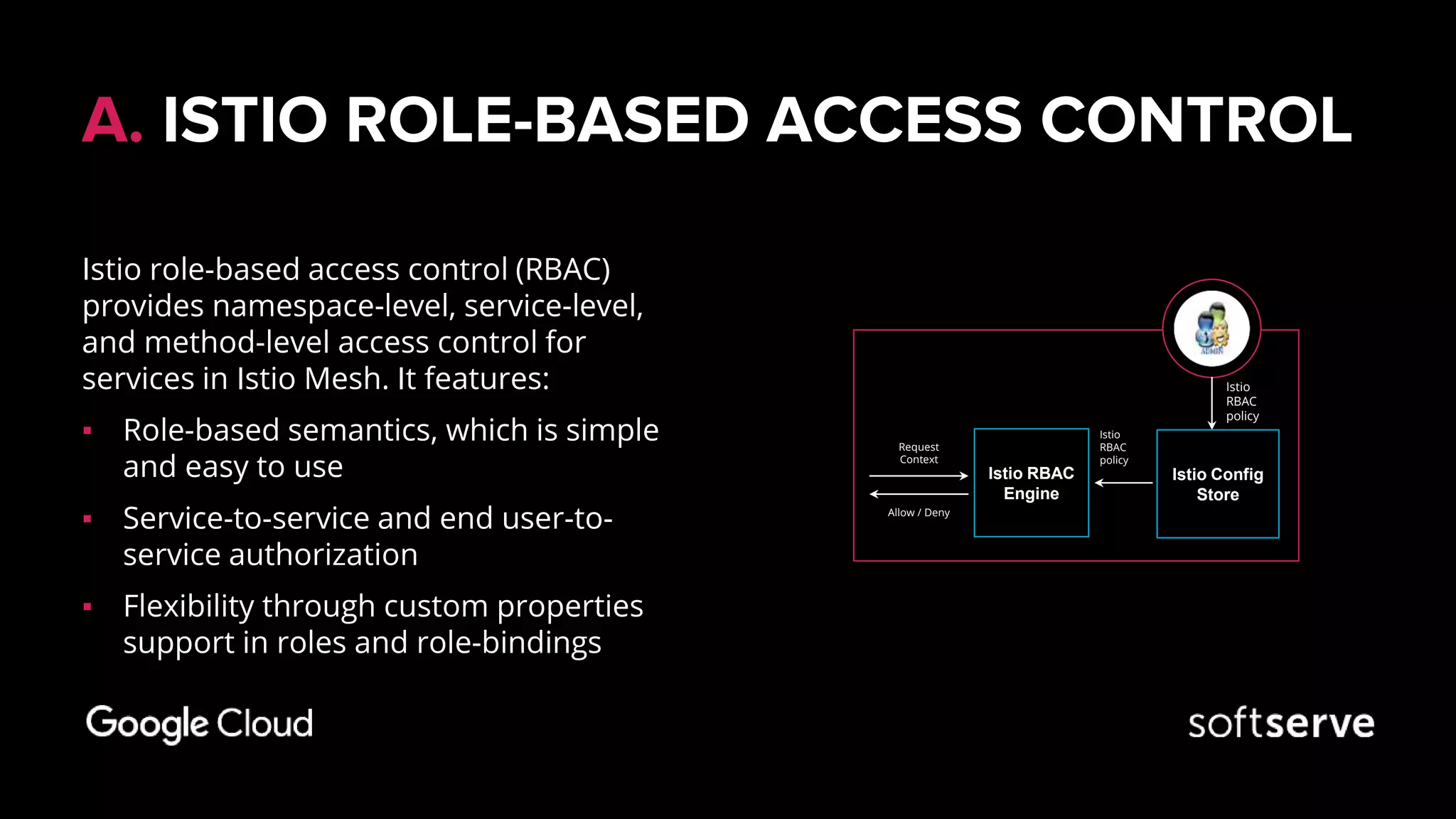

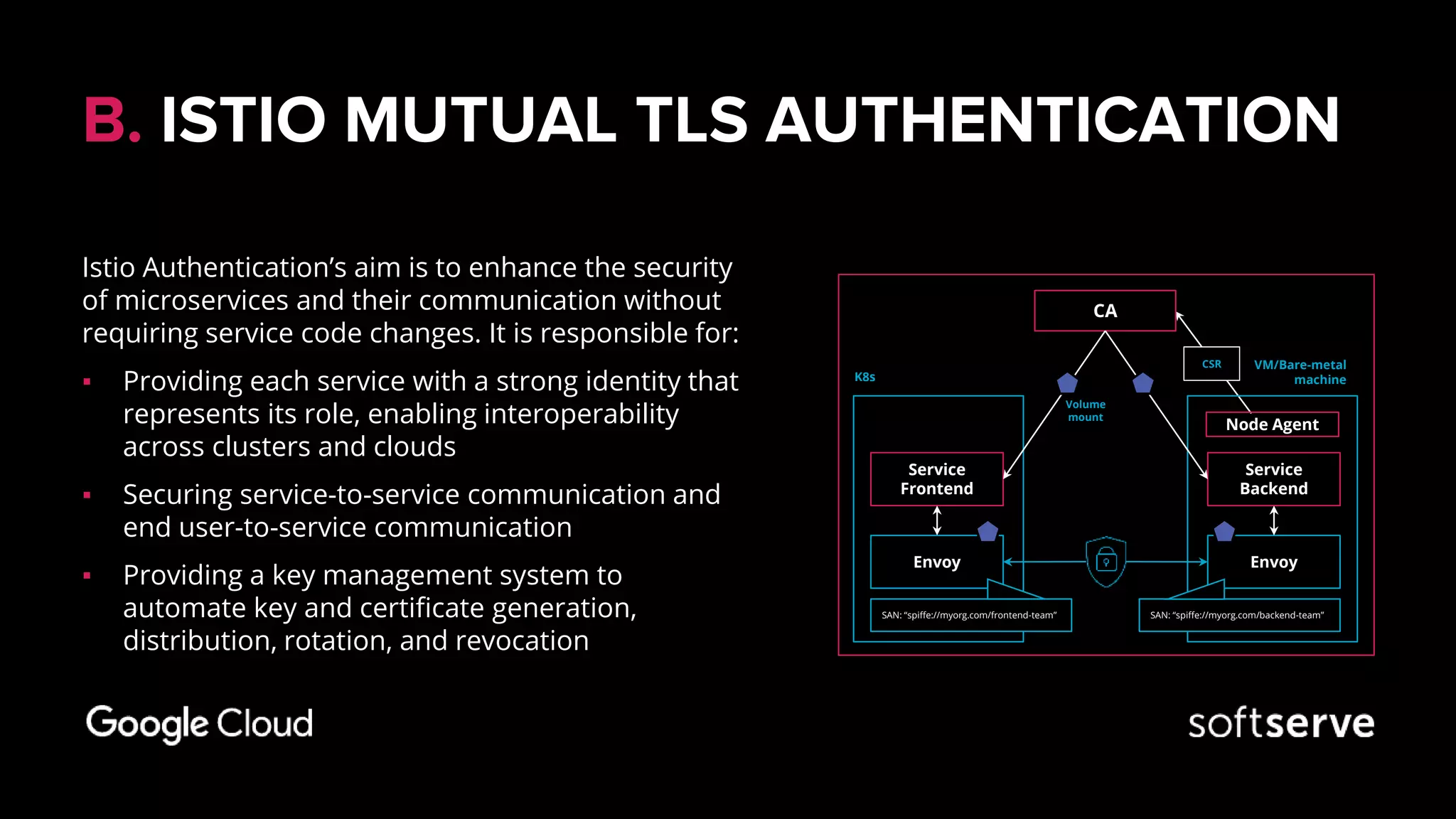

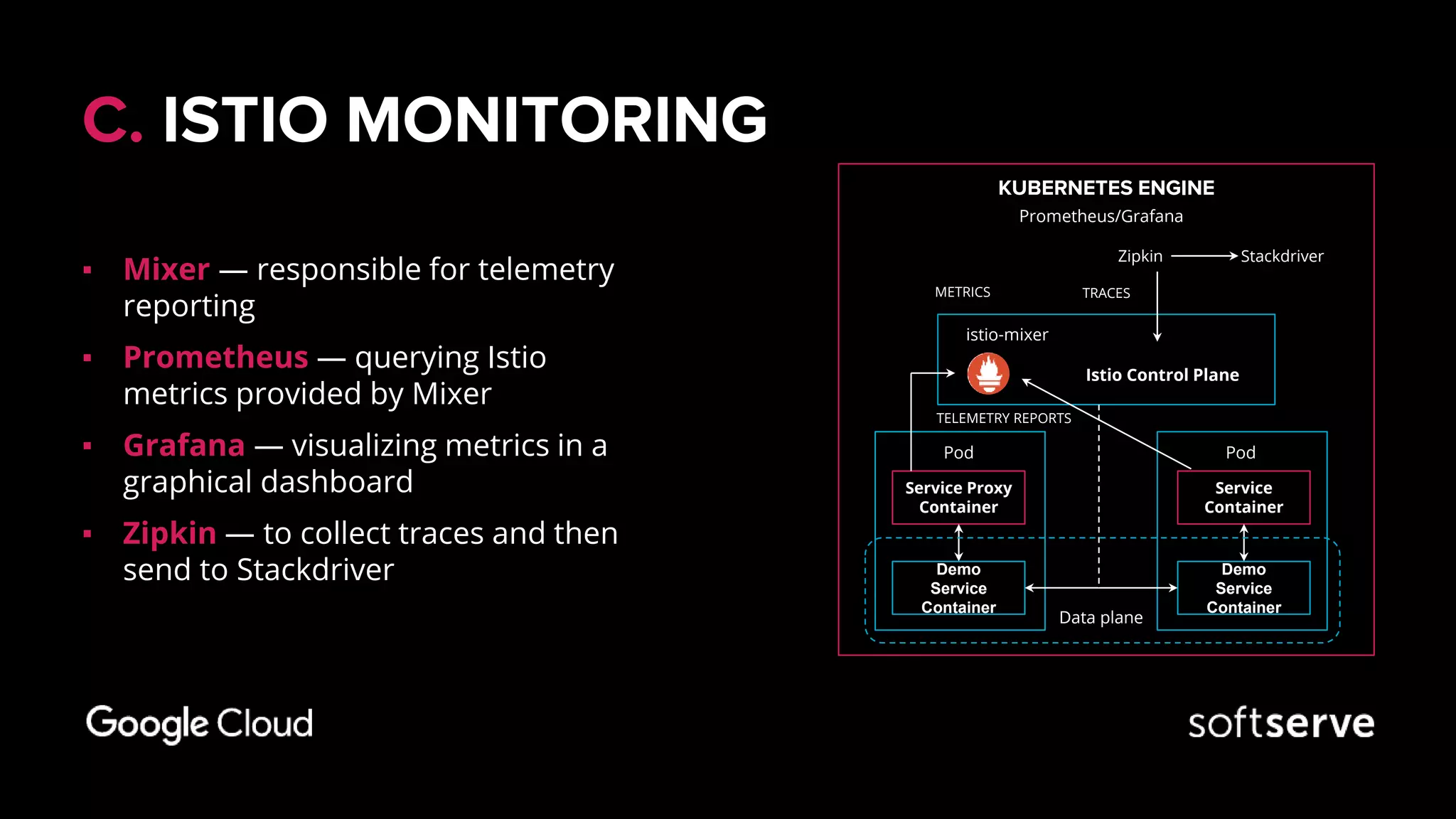

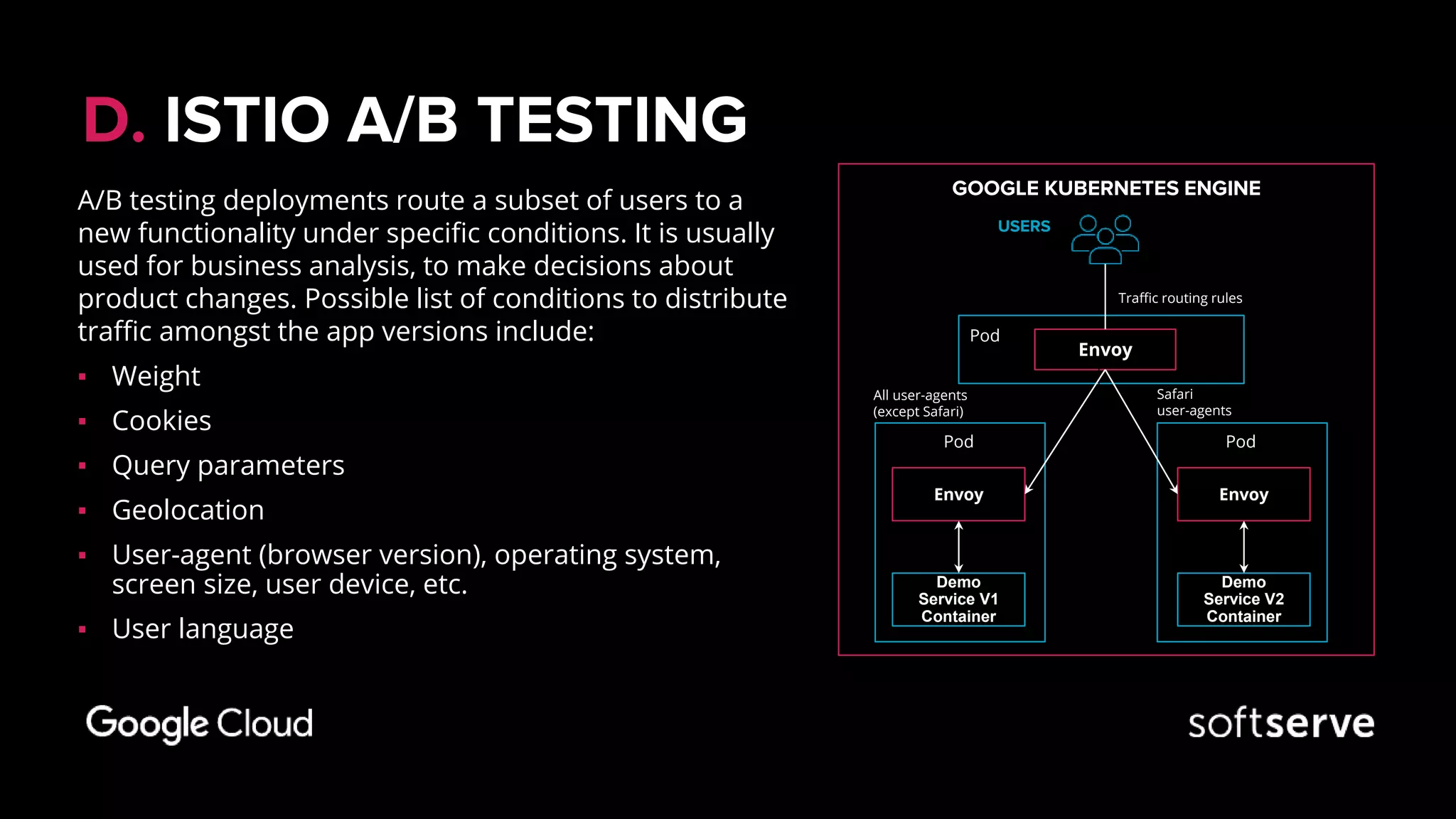

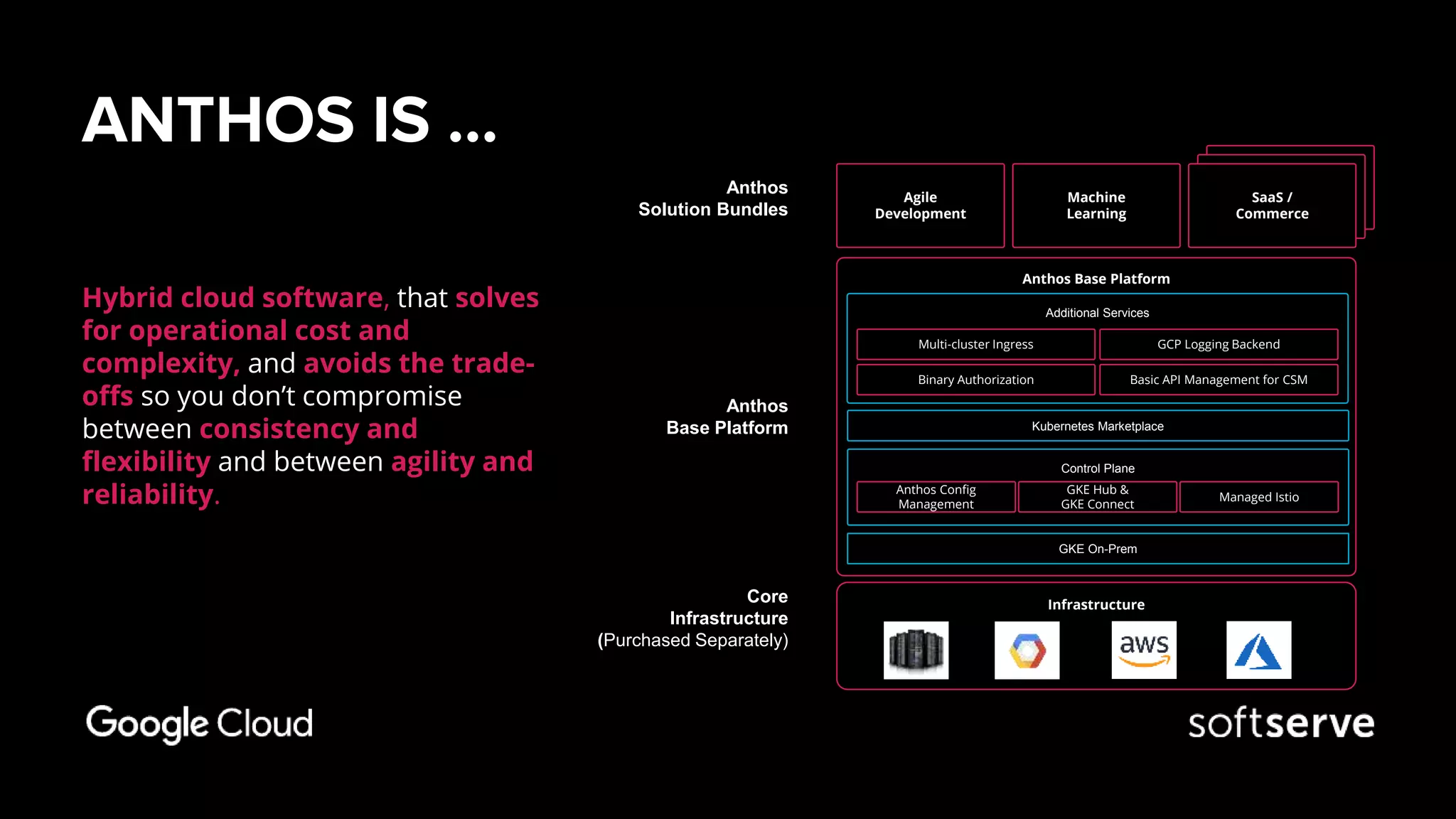

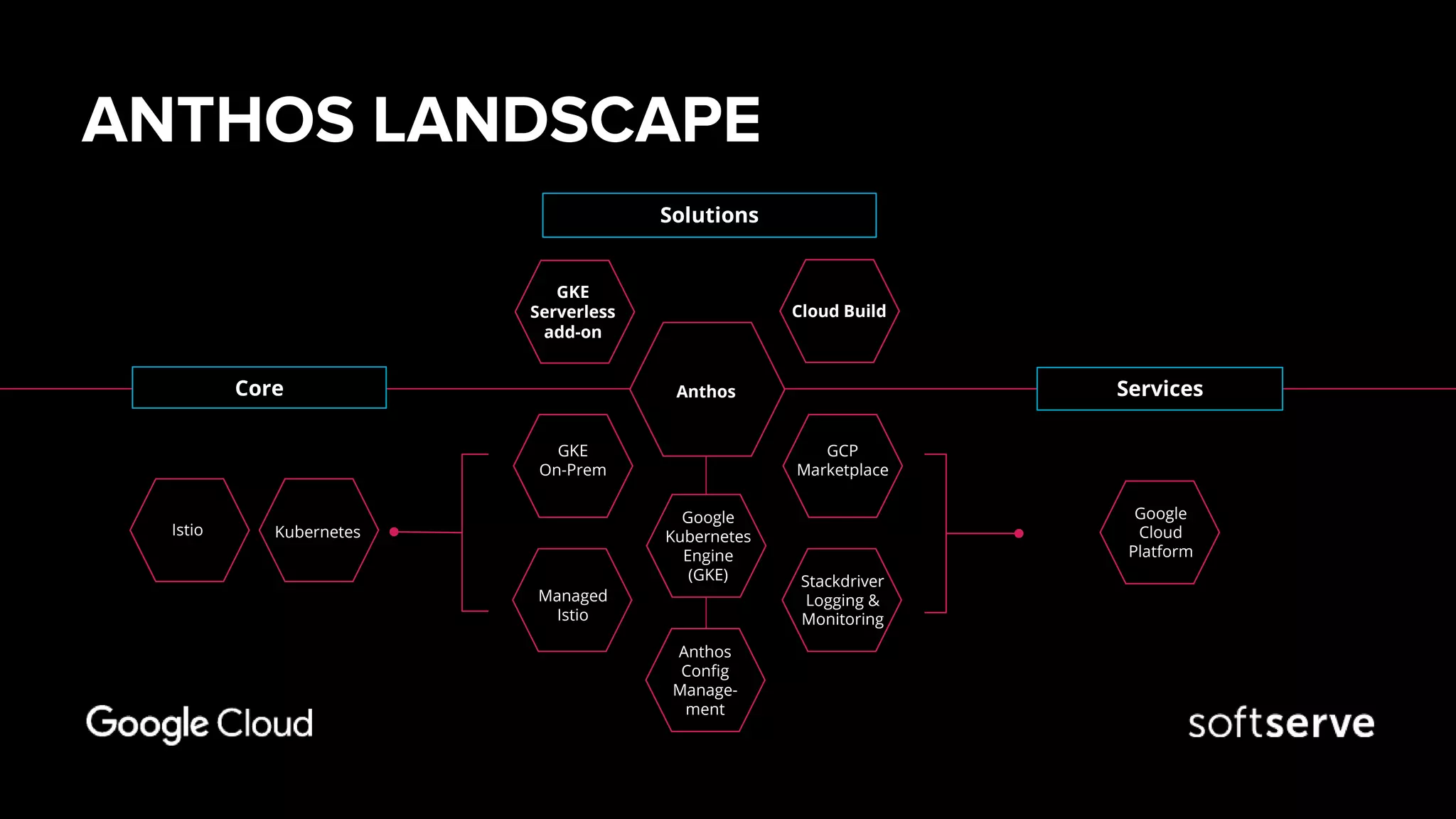

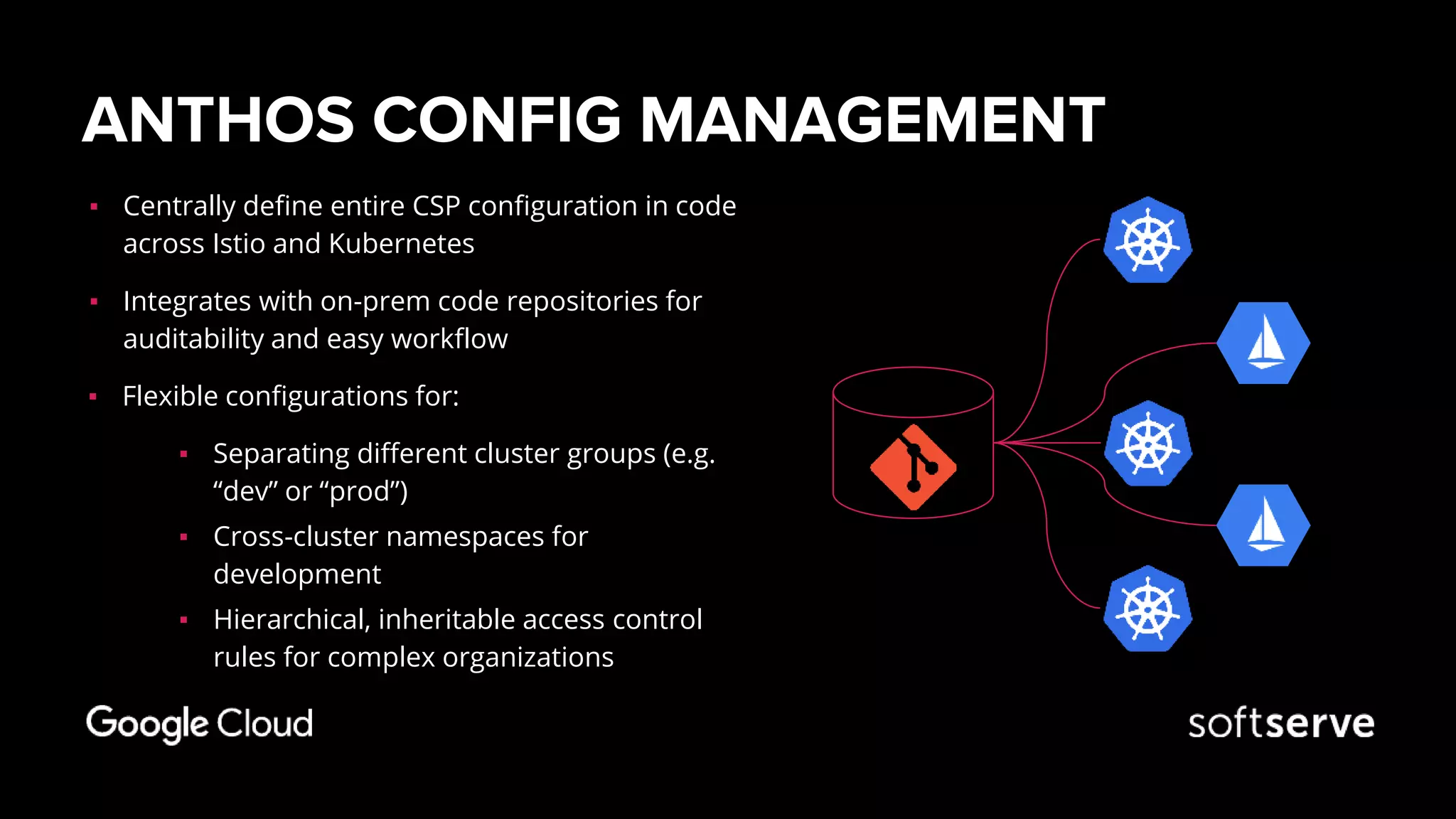

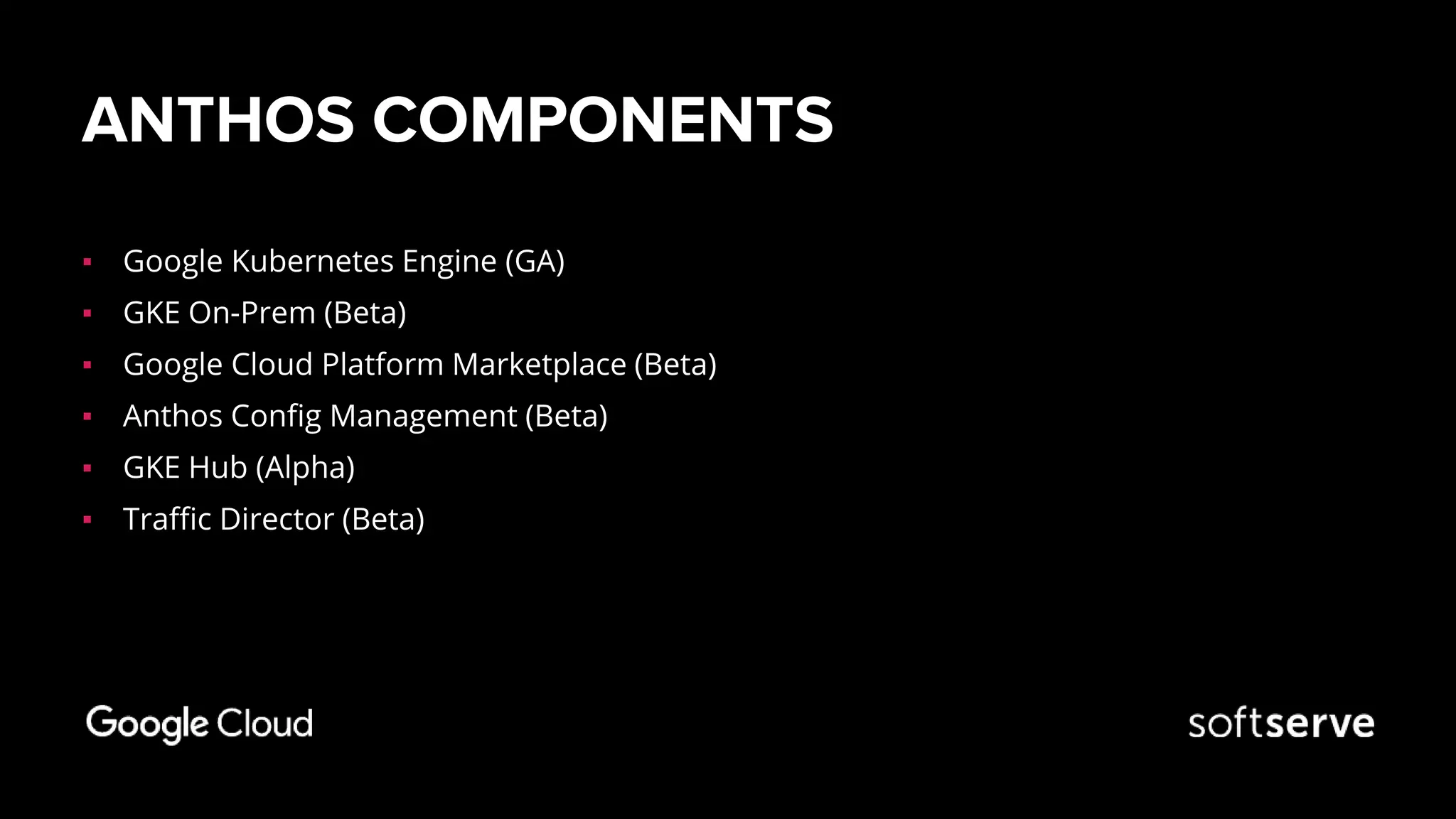

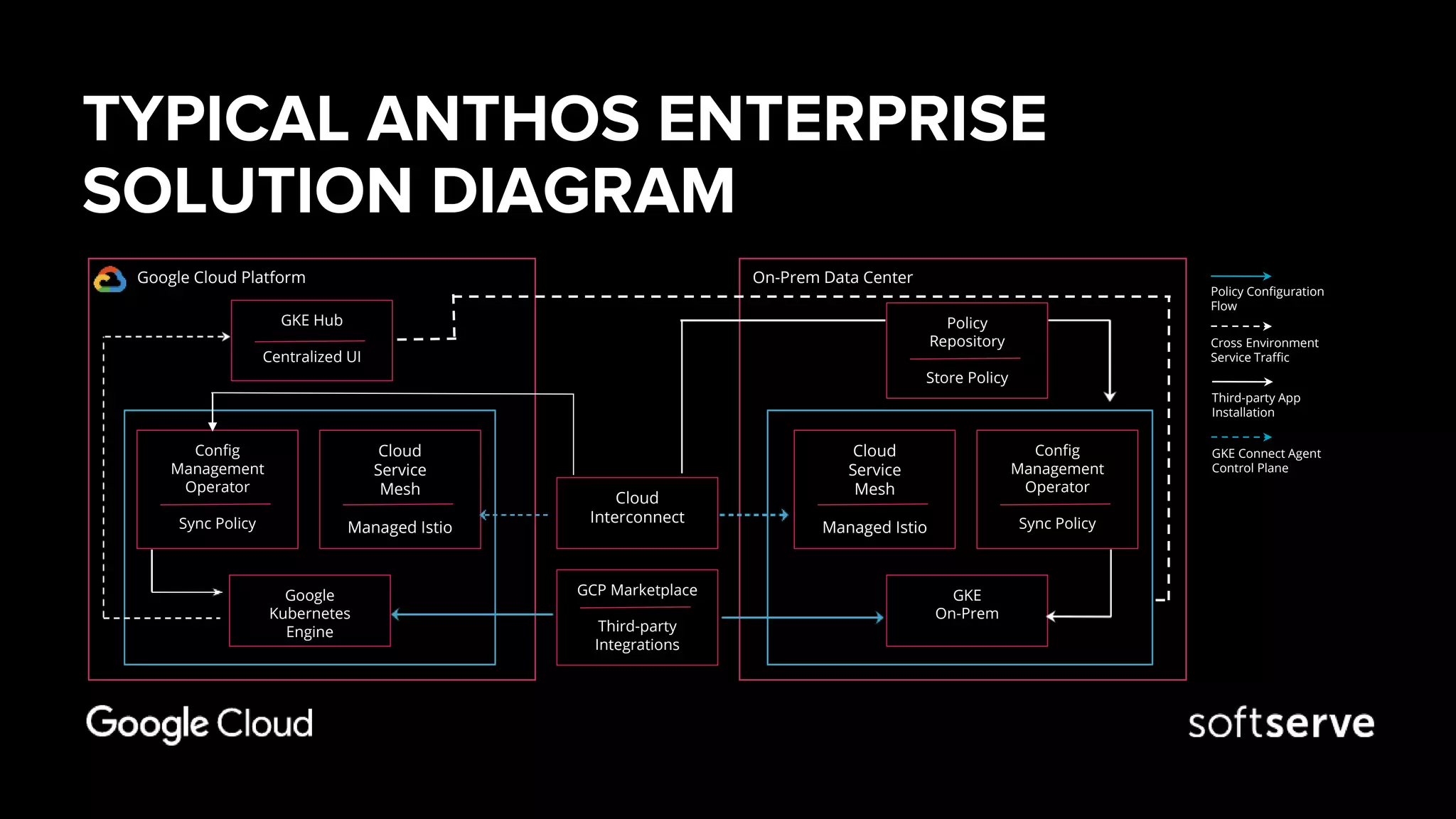

The document discusses multi-cloud strategies built on Google Anthos, Kubernetes, and Istio, emphasizing the components, architecture, and benefits of these technologies for enterprises. It covers Istio's service mesh capabilities for traffic management, security, and observability, and how Google Kubernetes Engine (GKE) simplifies deploying Kubernetes clusters. It also highlights the challenges of managing microservices and the advantages of centralizing configuration and operations across different environments.