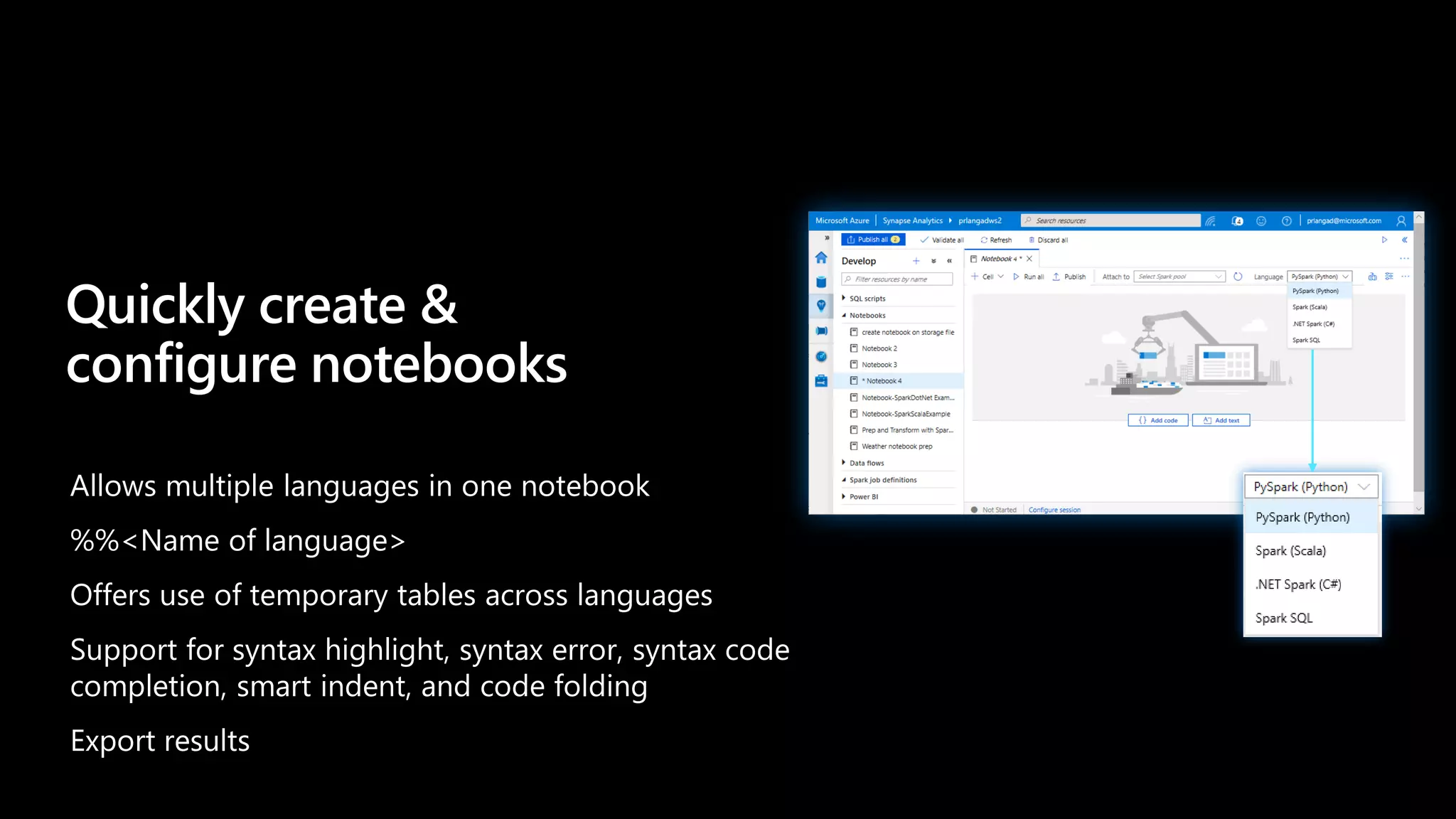

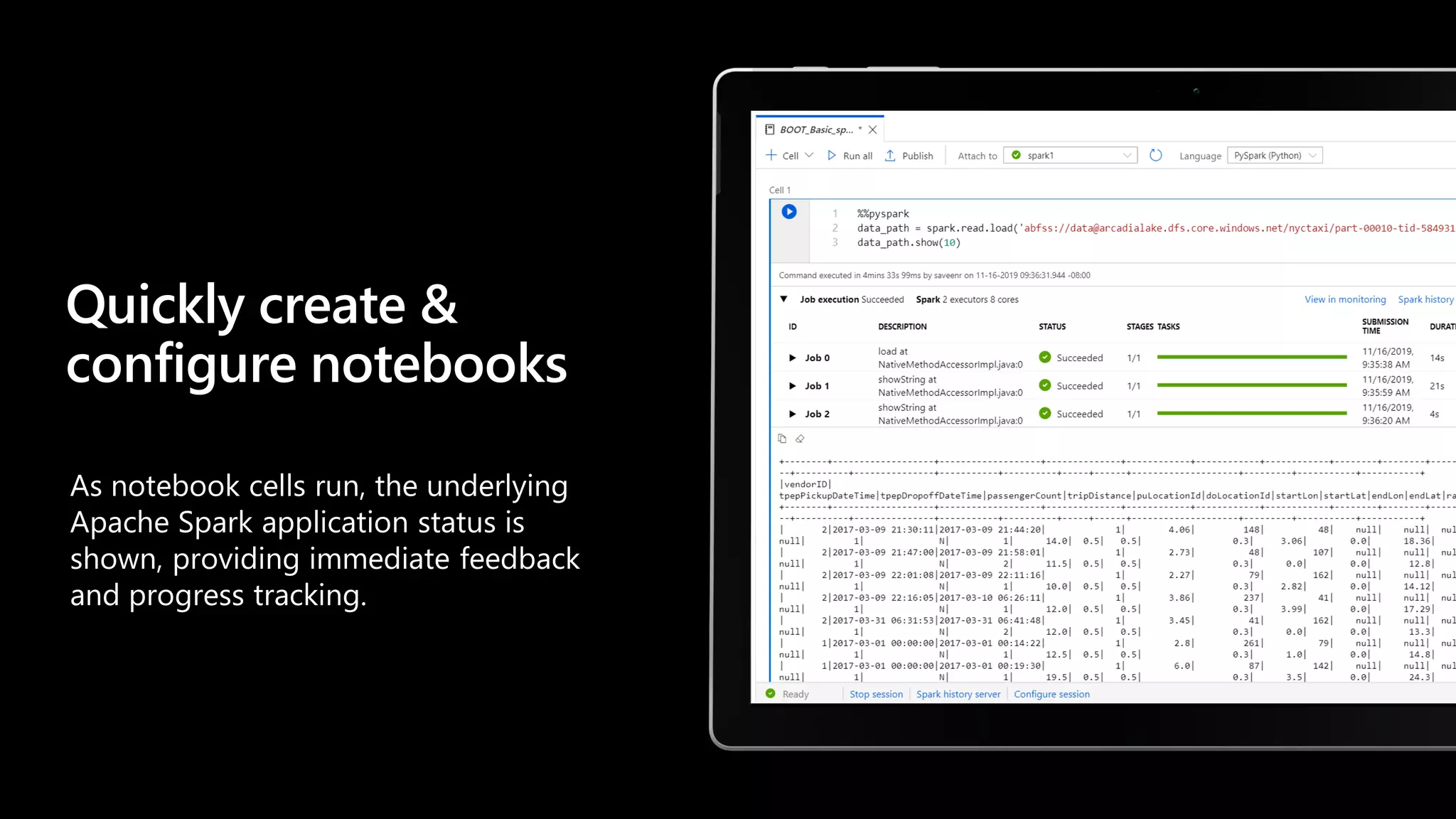

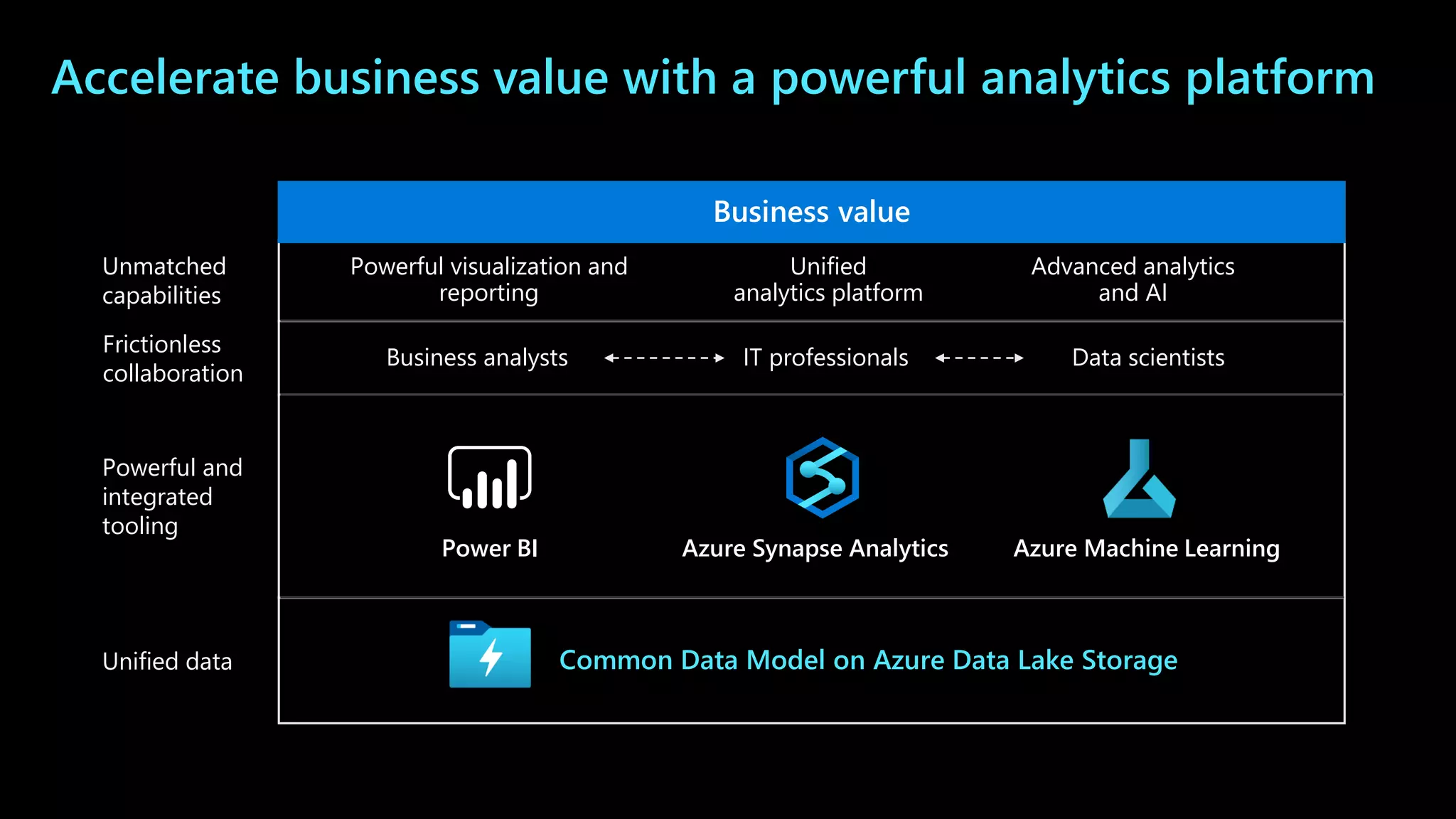

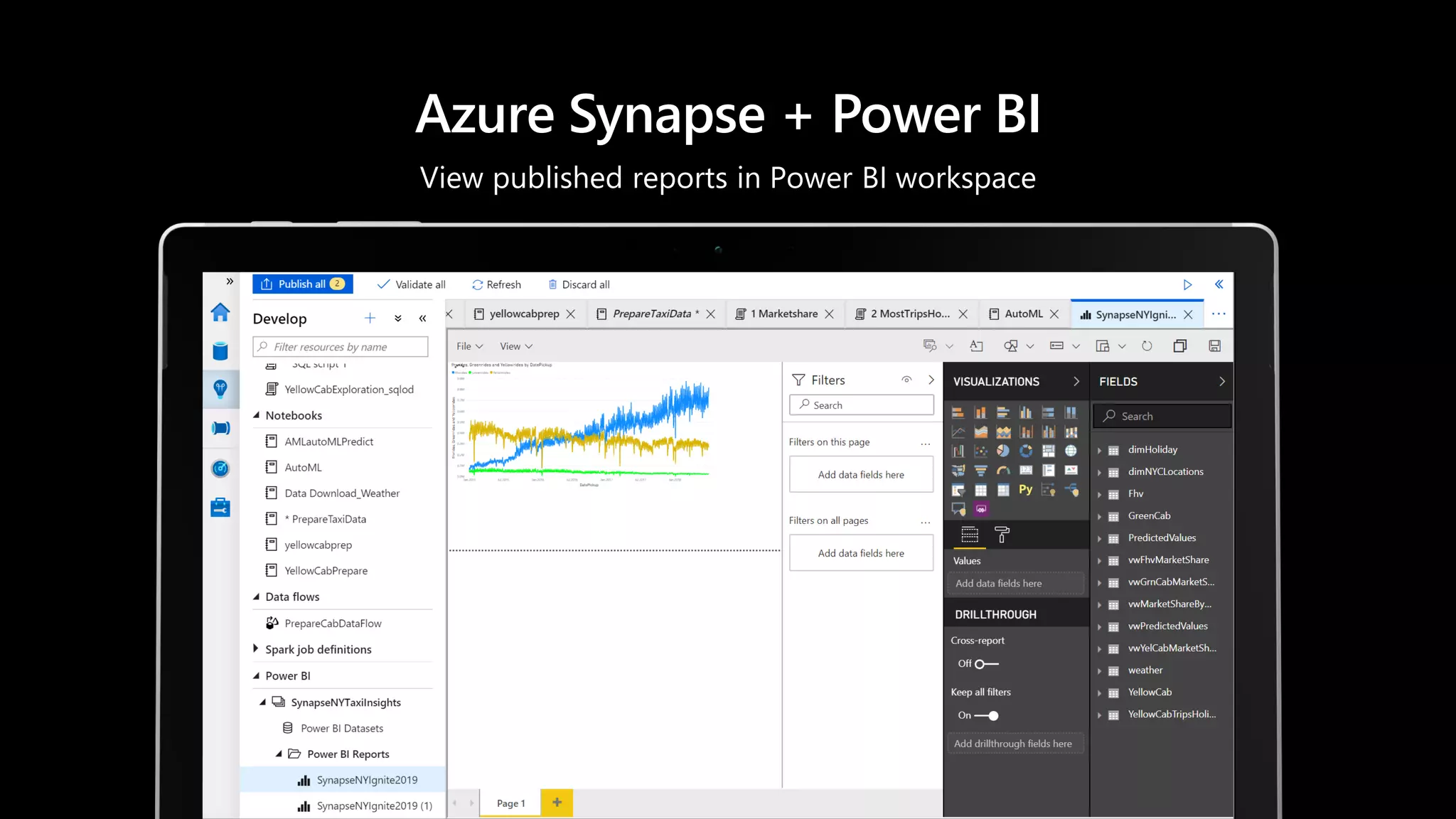

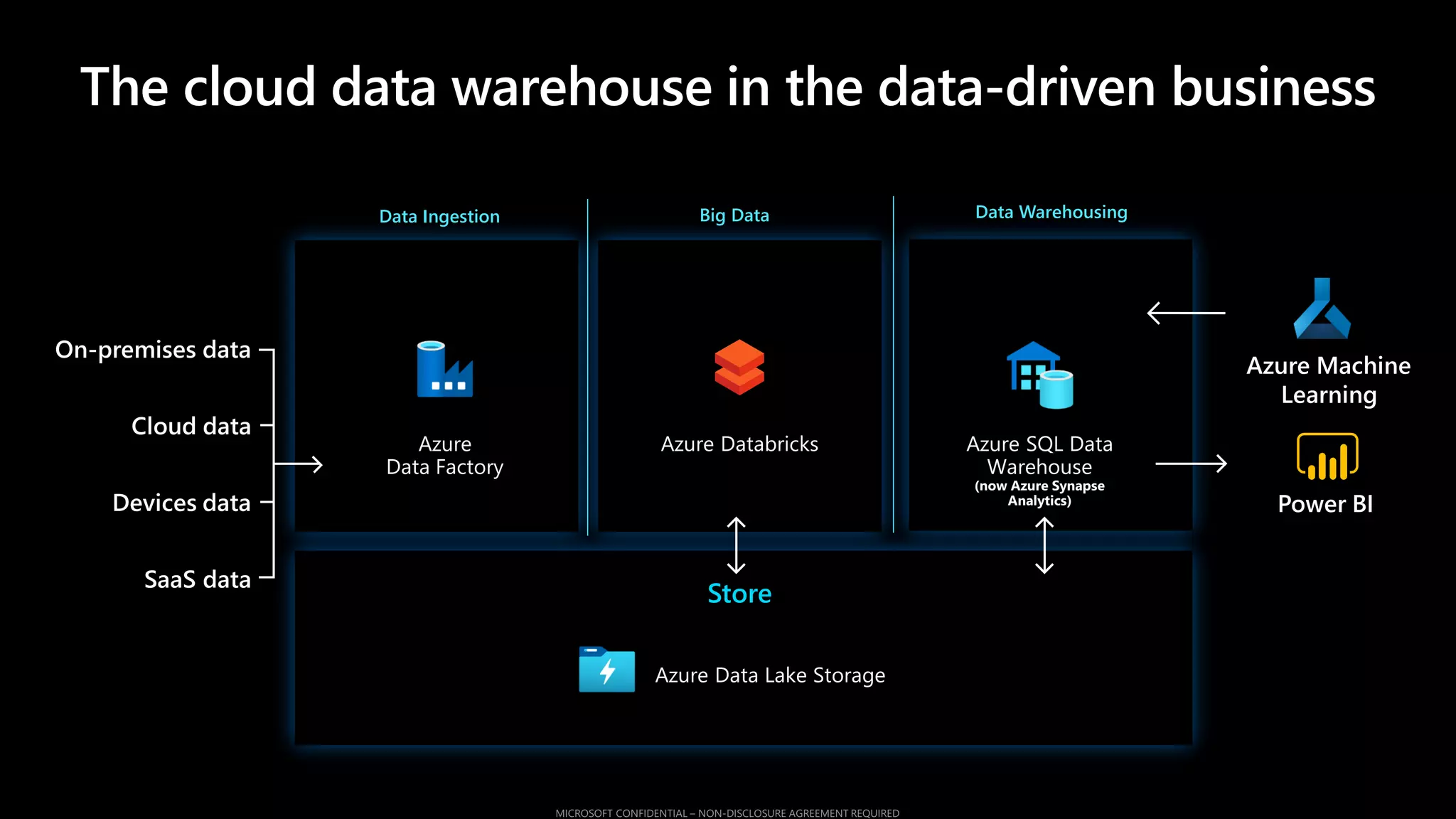

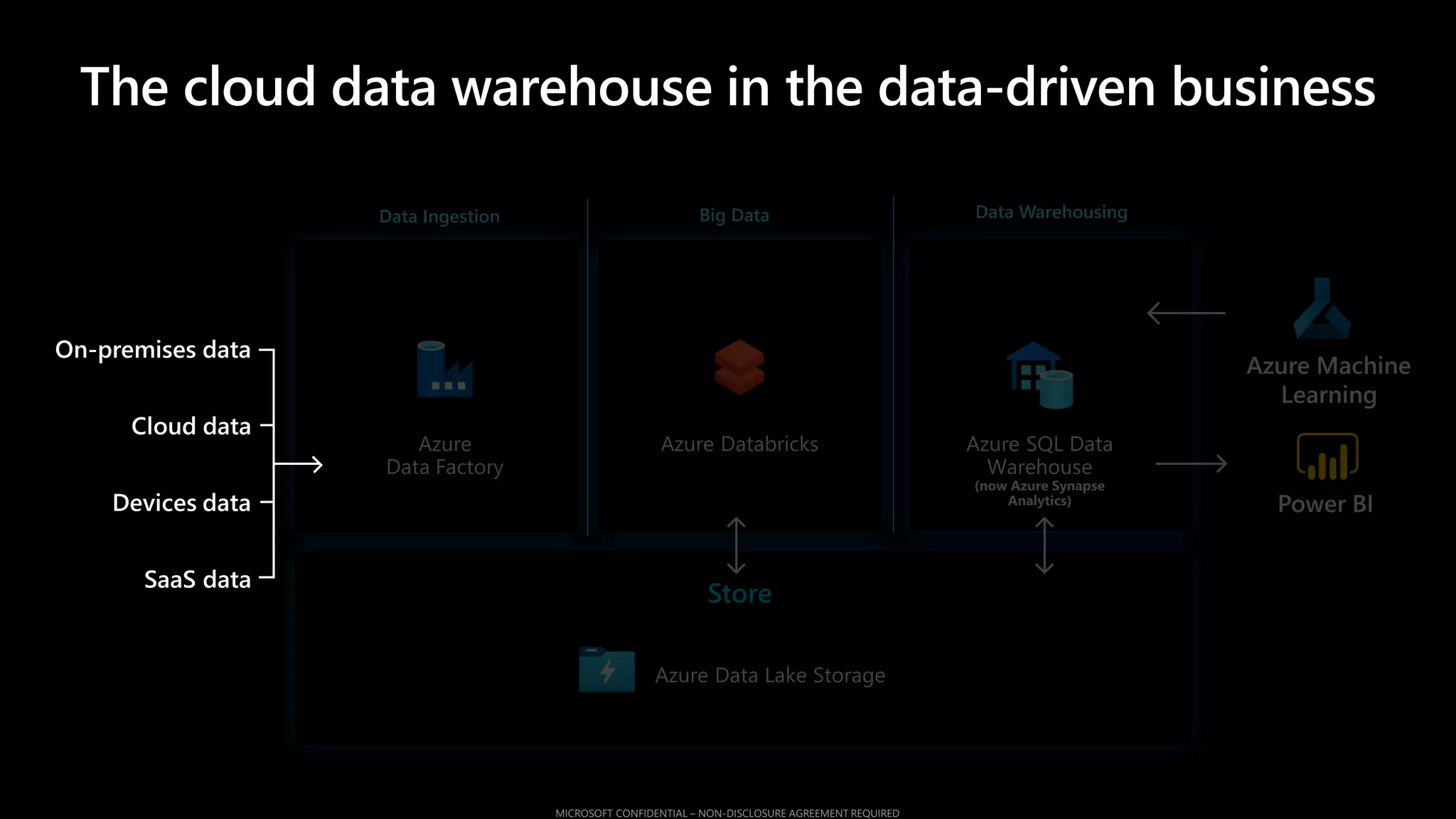

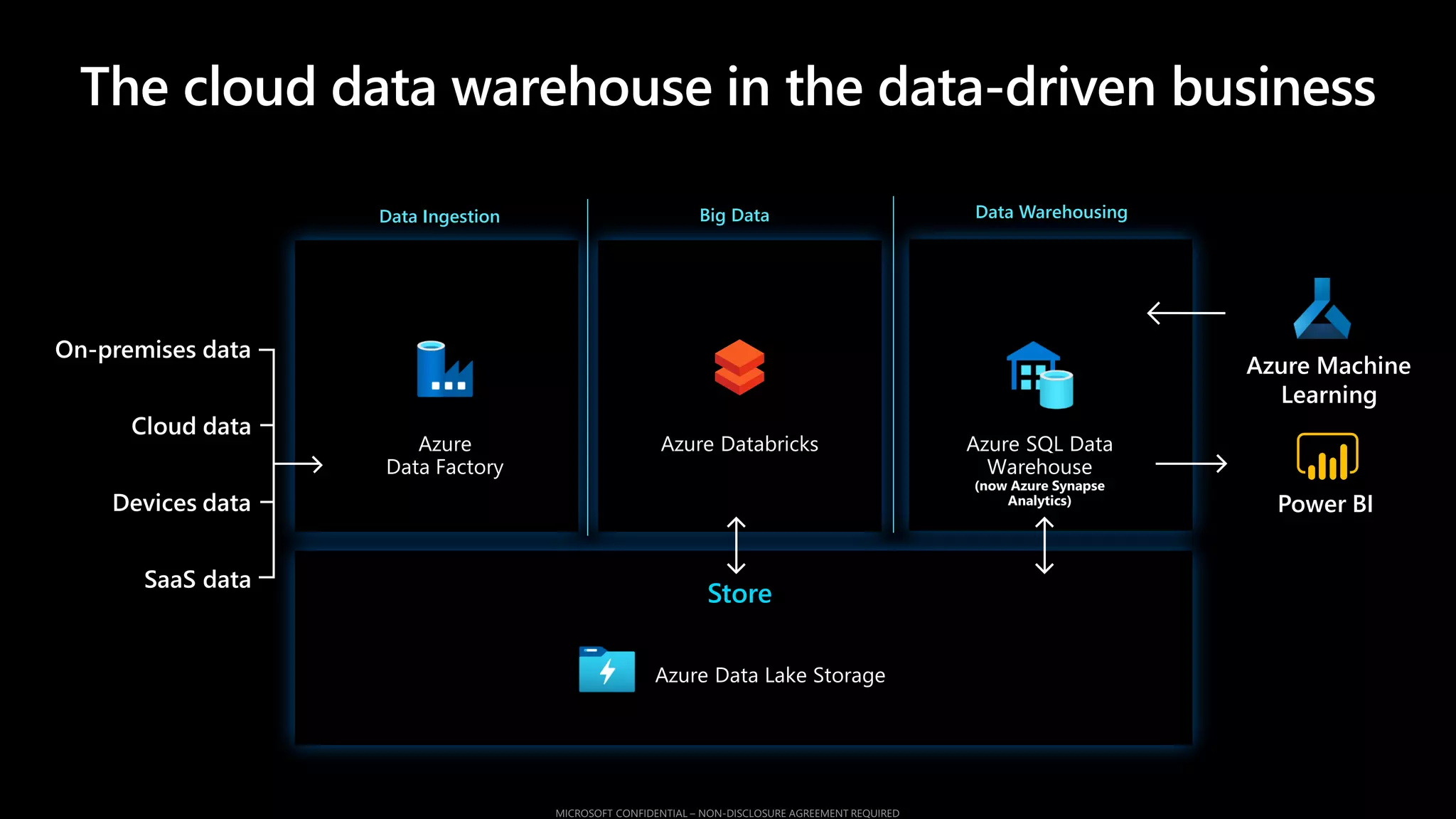

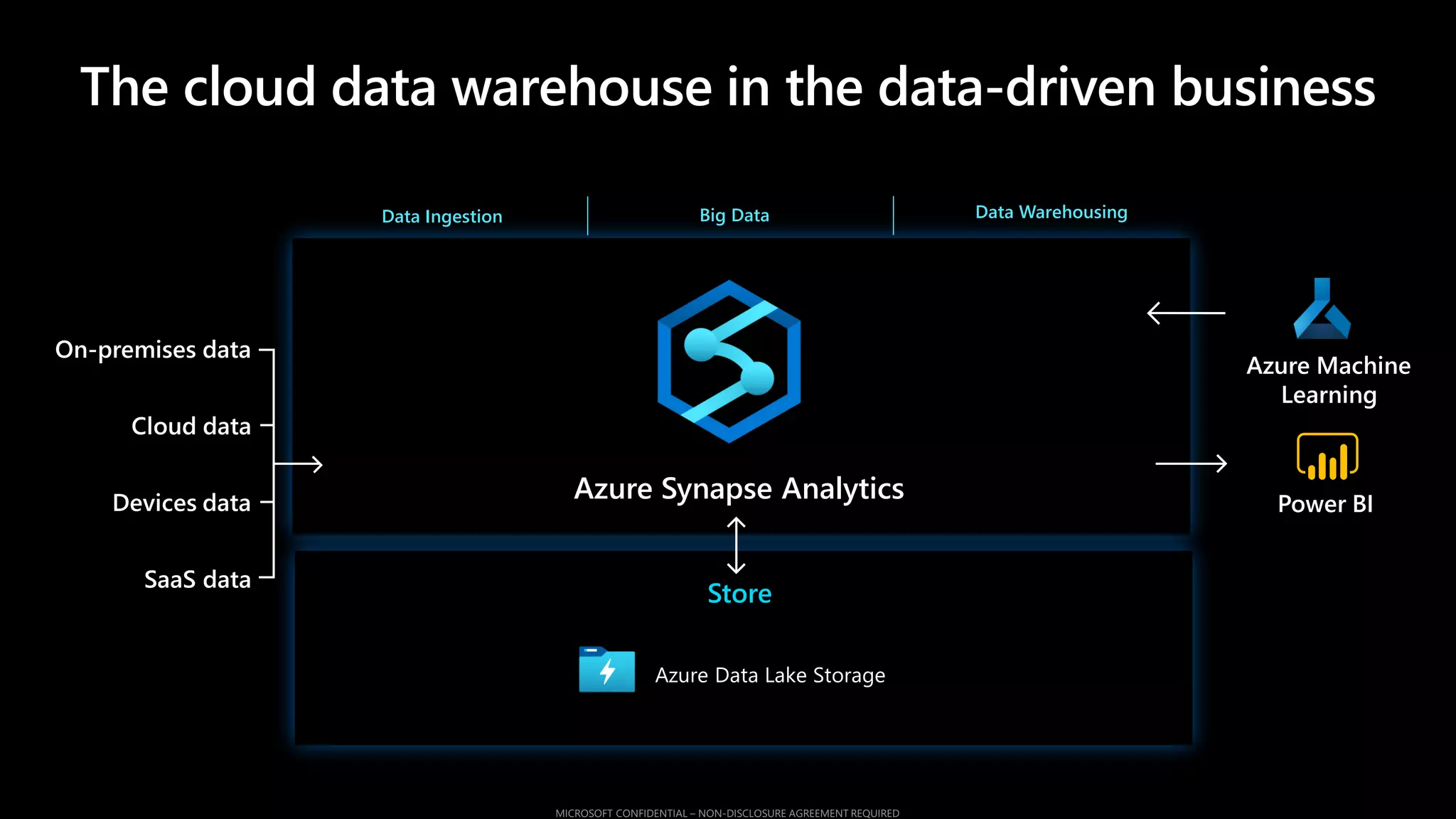

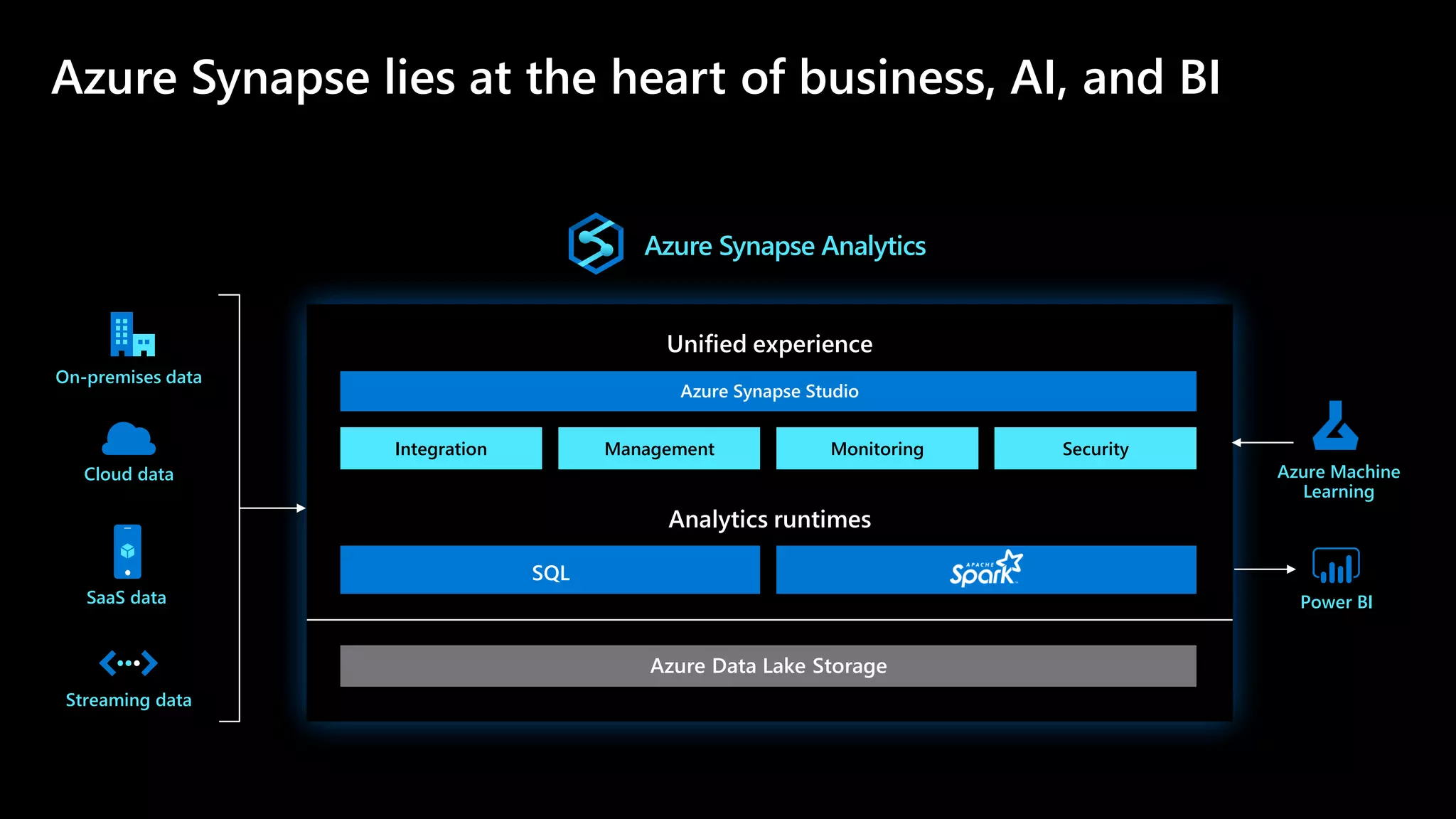

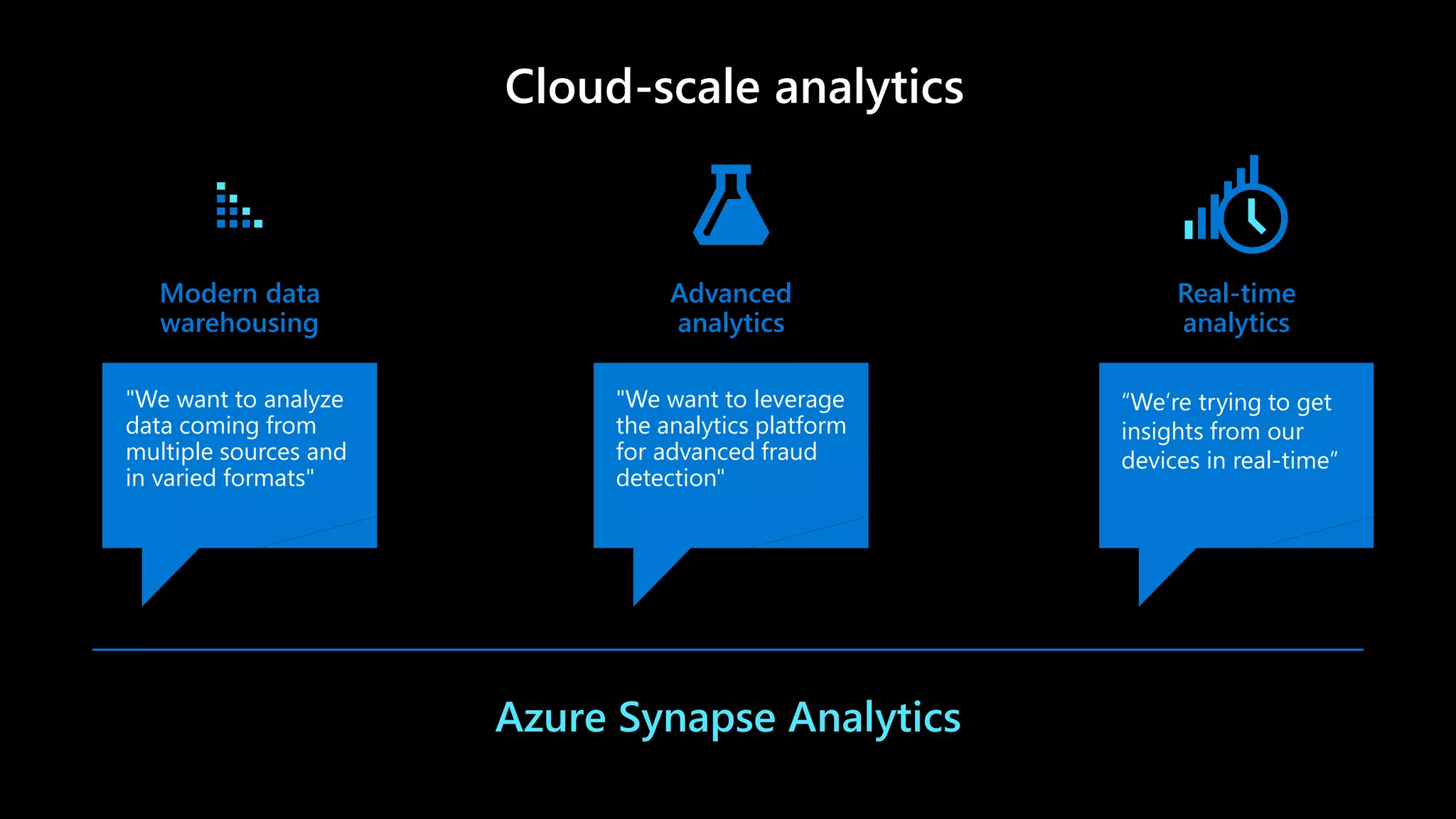

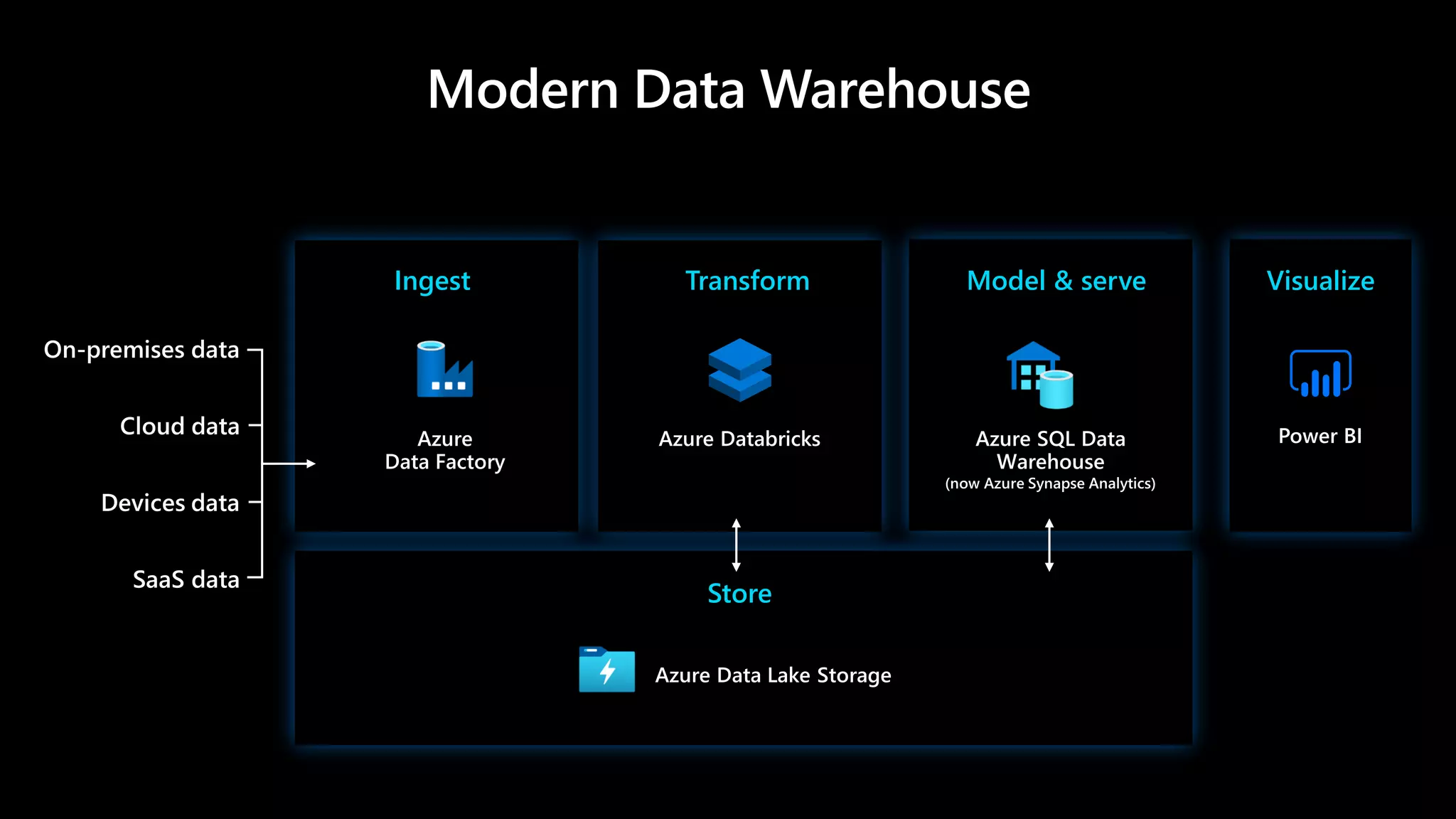

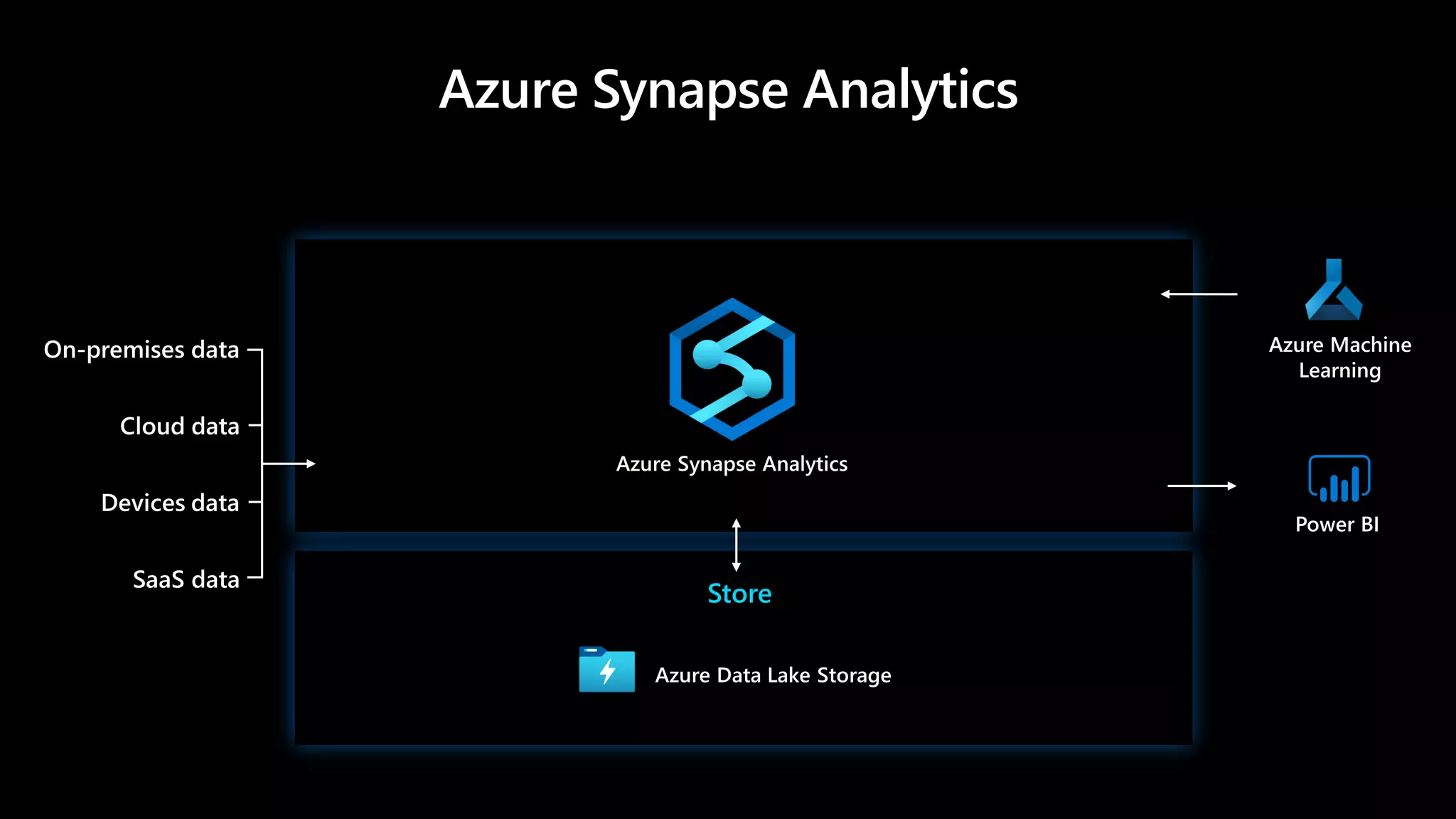

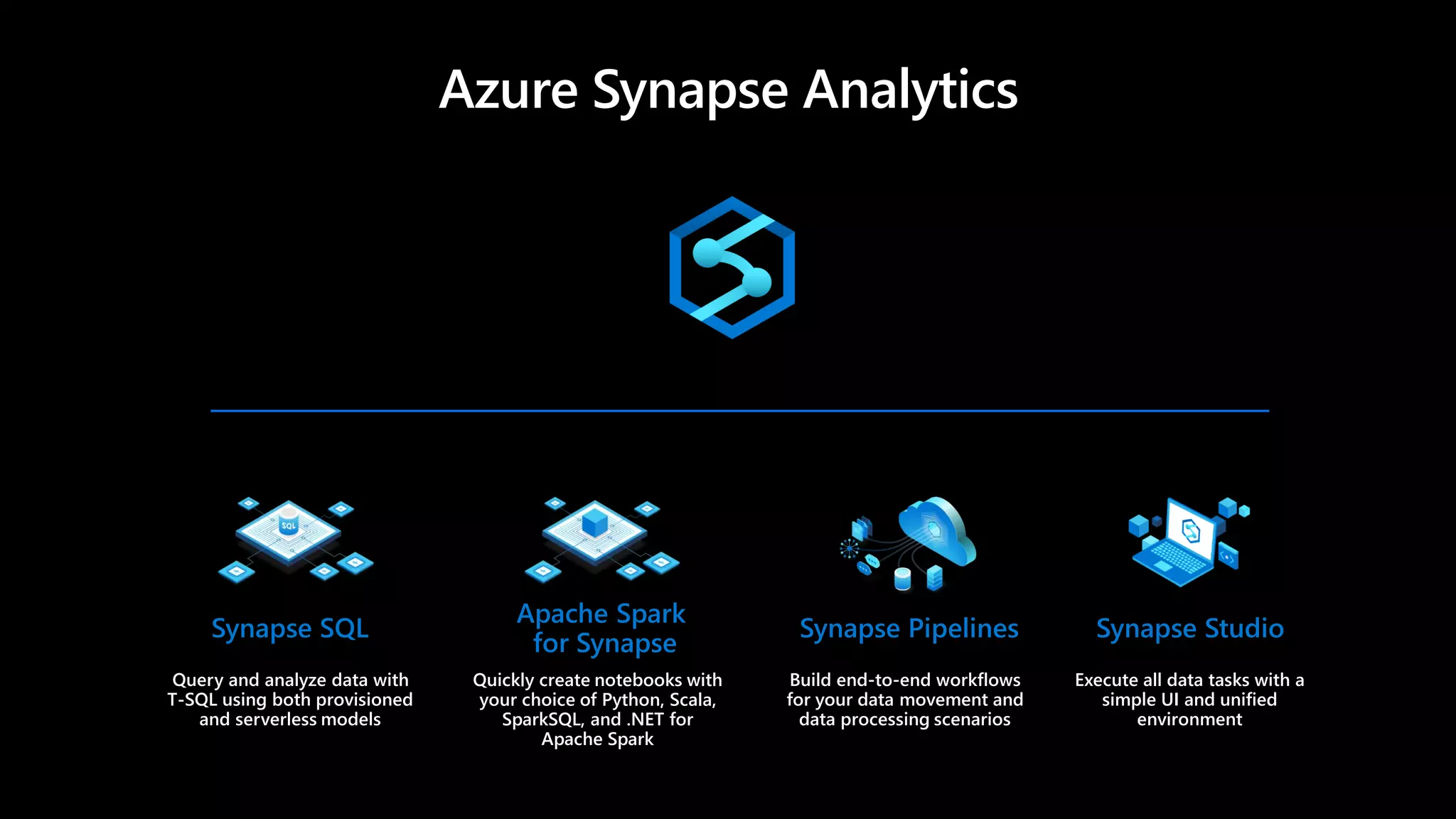

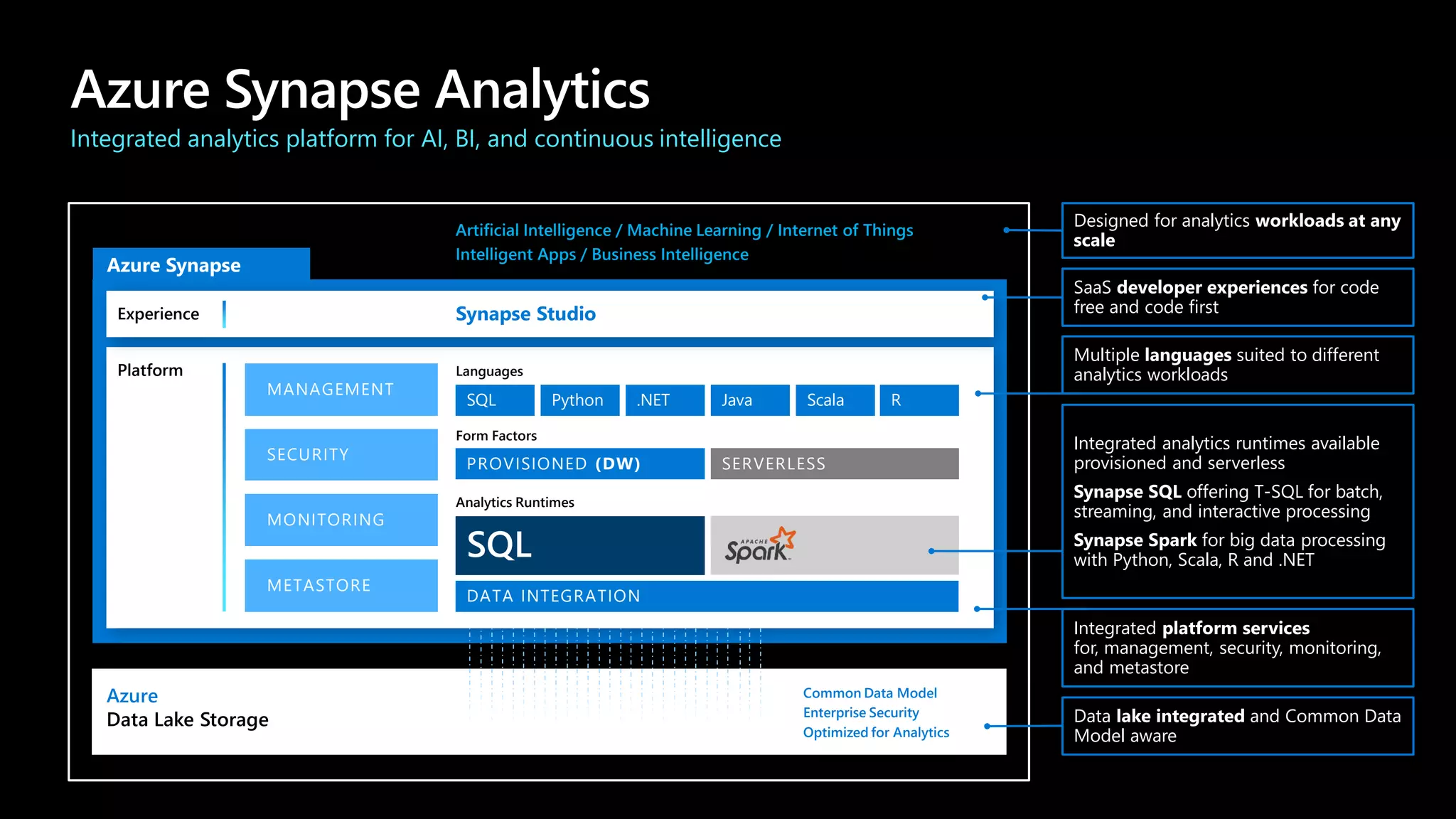

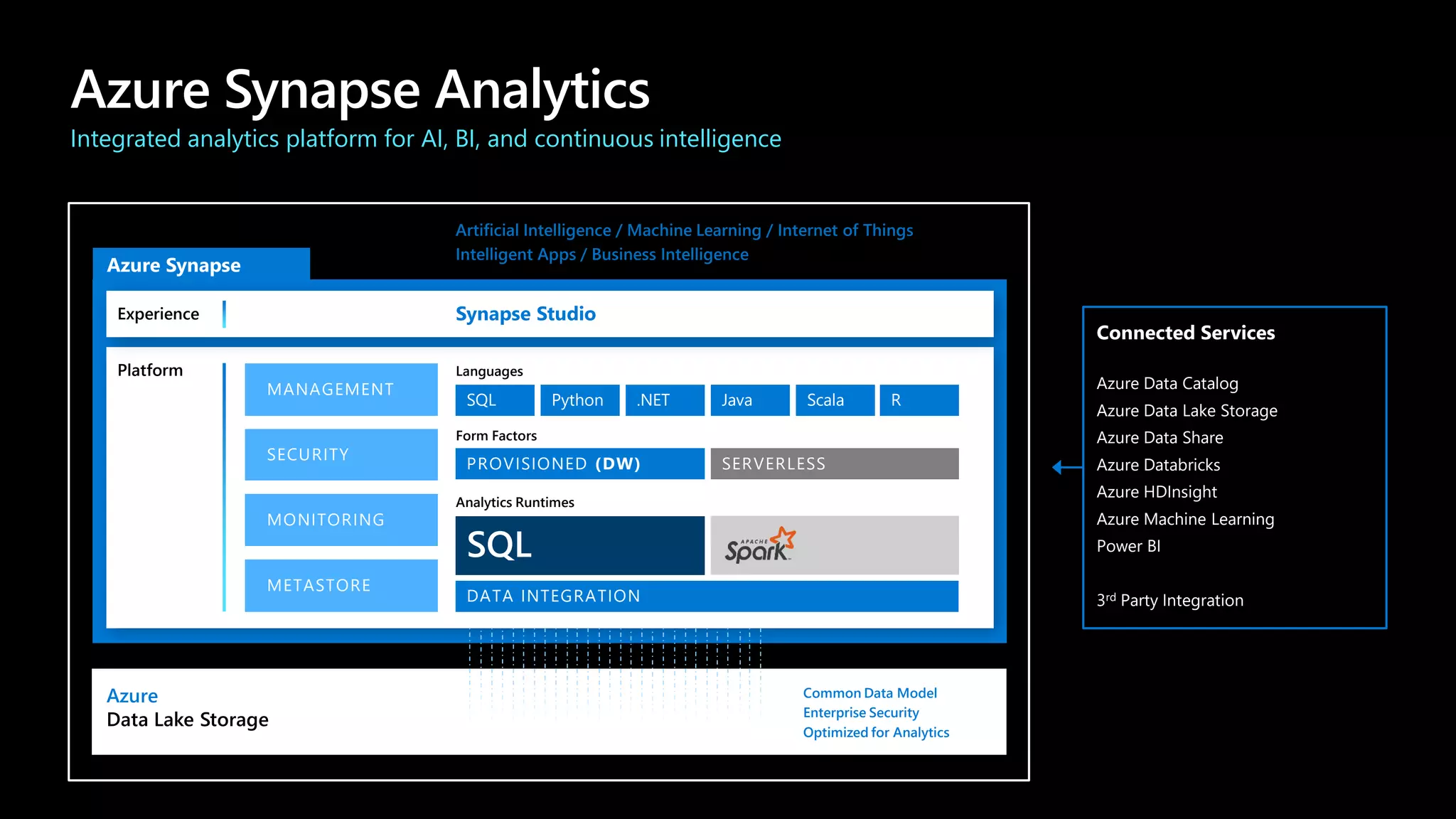

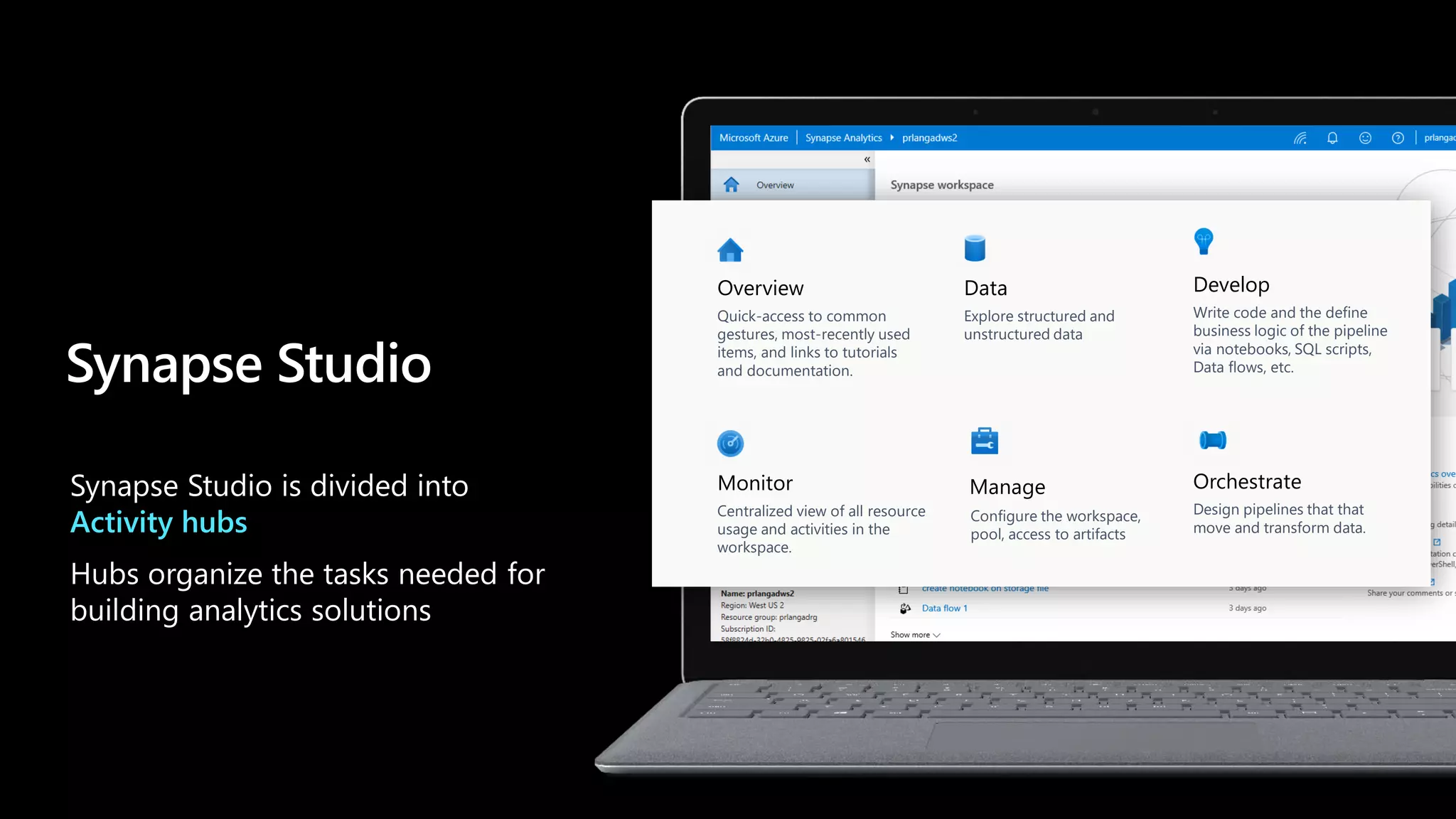

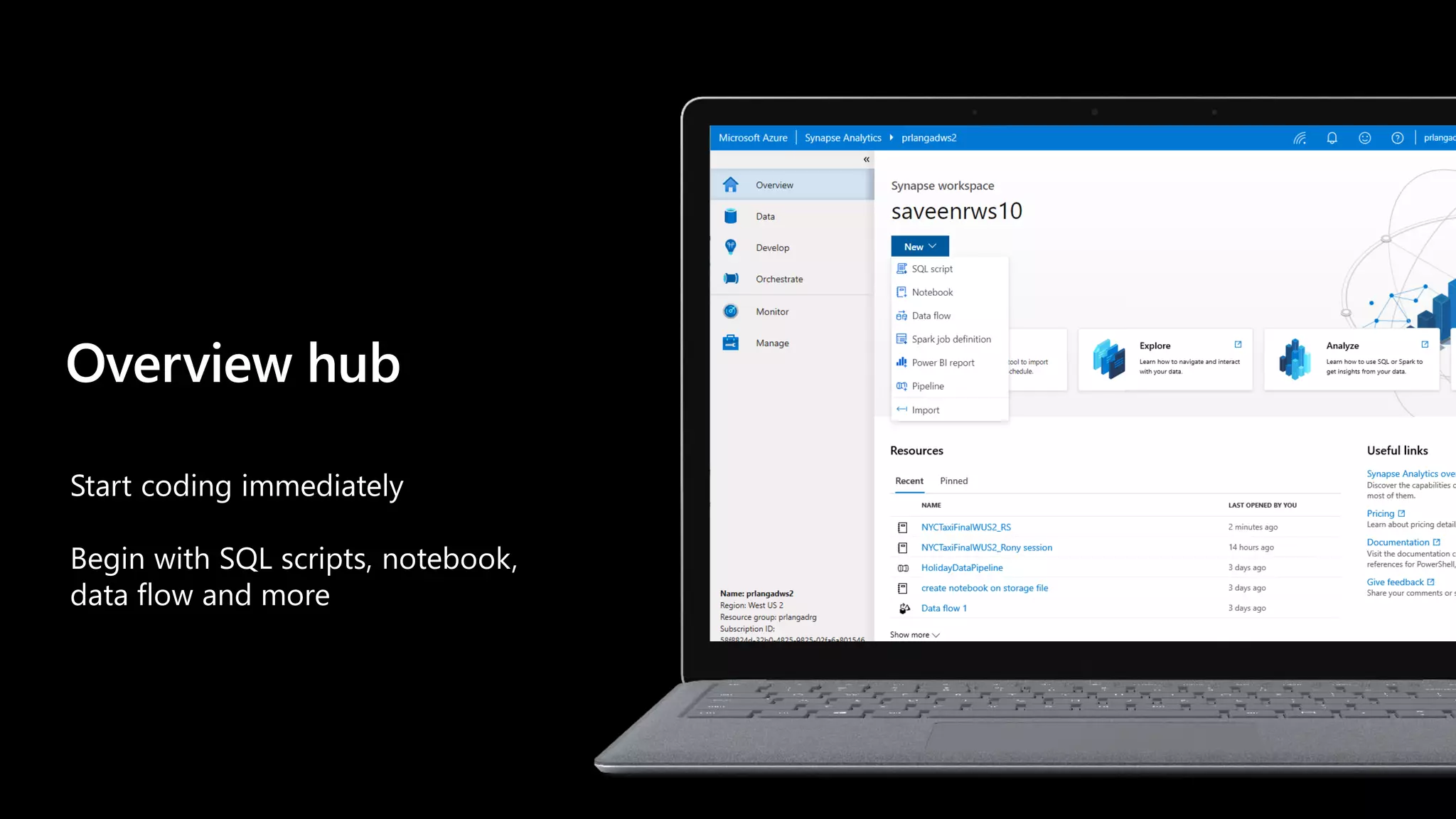

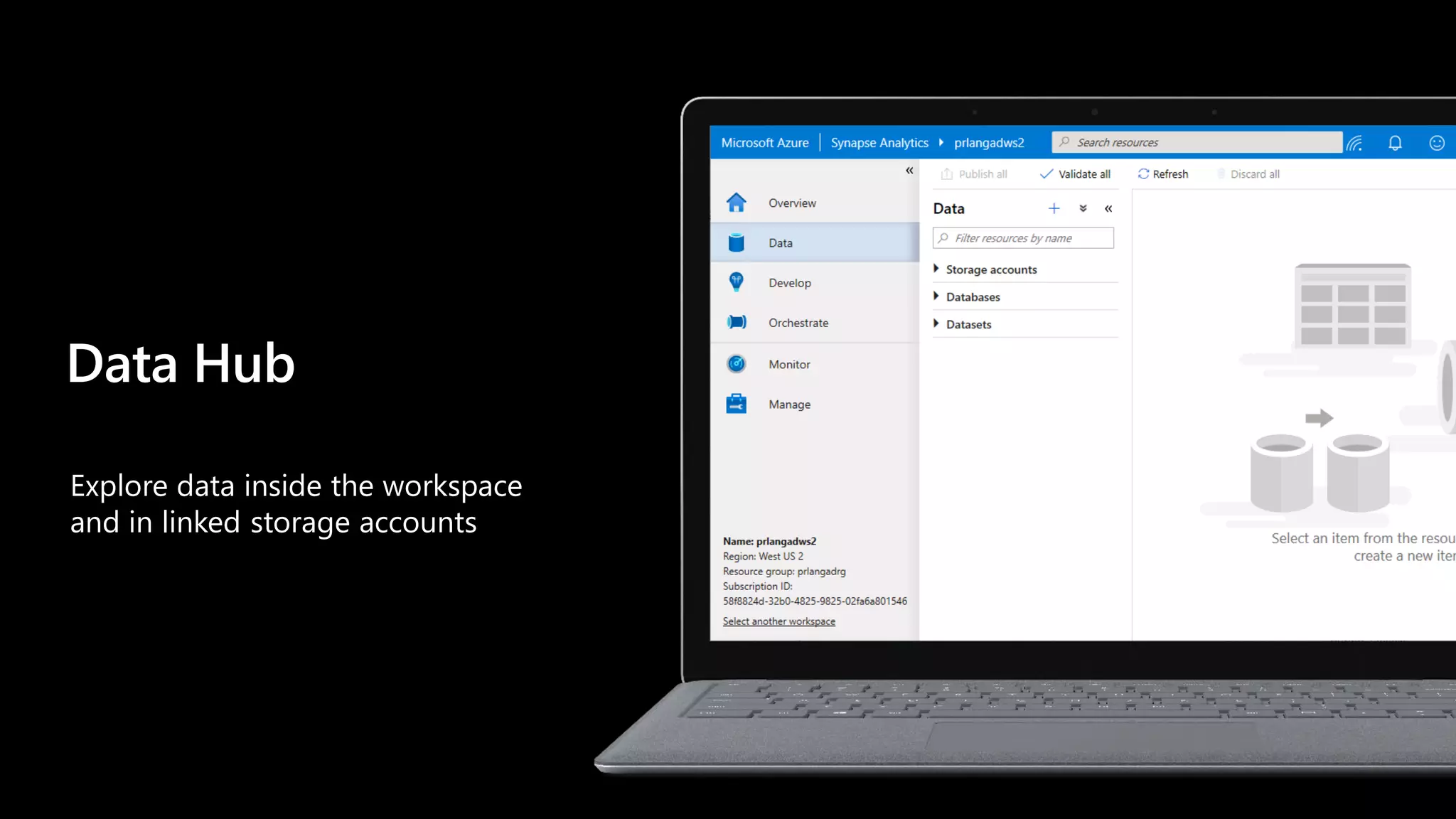

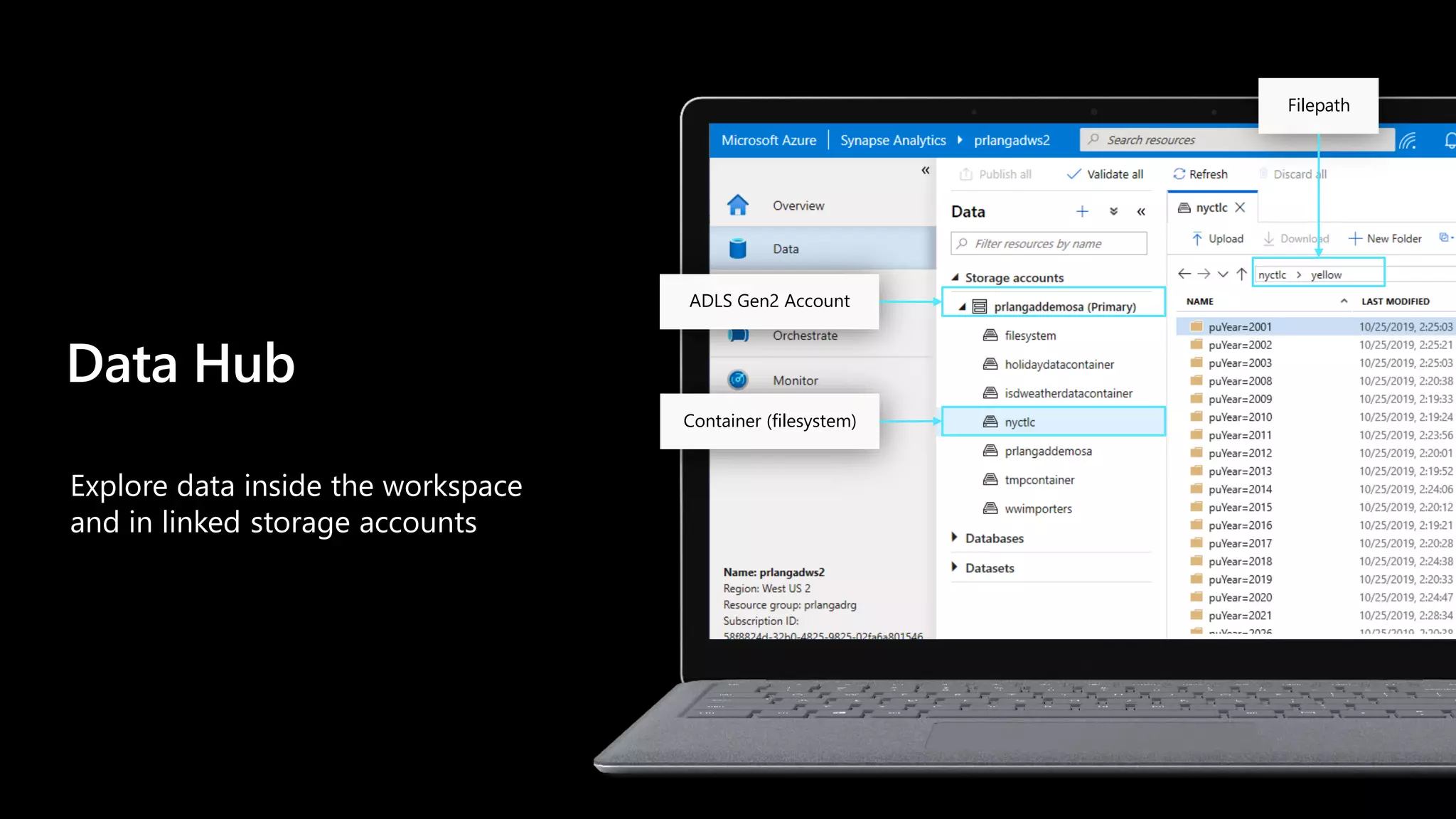

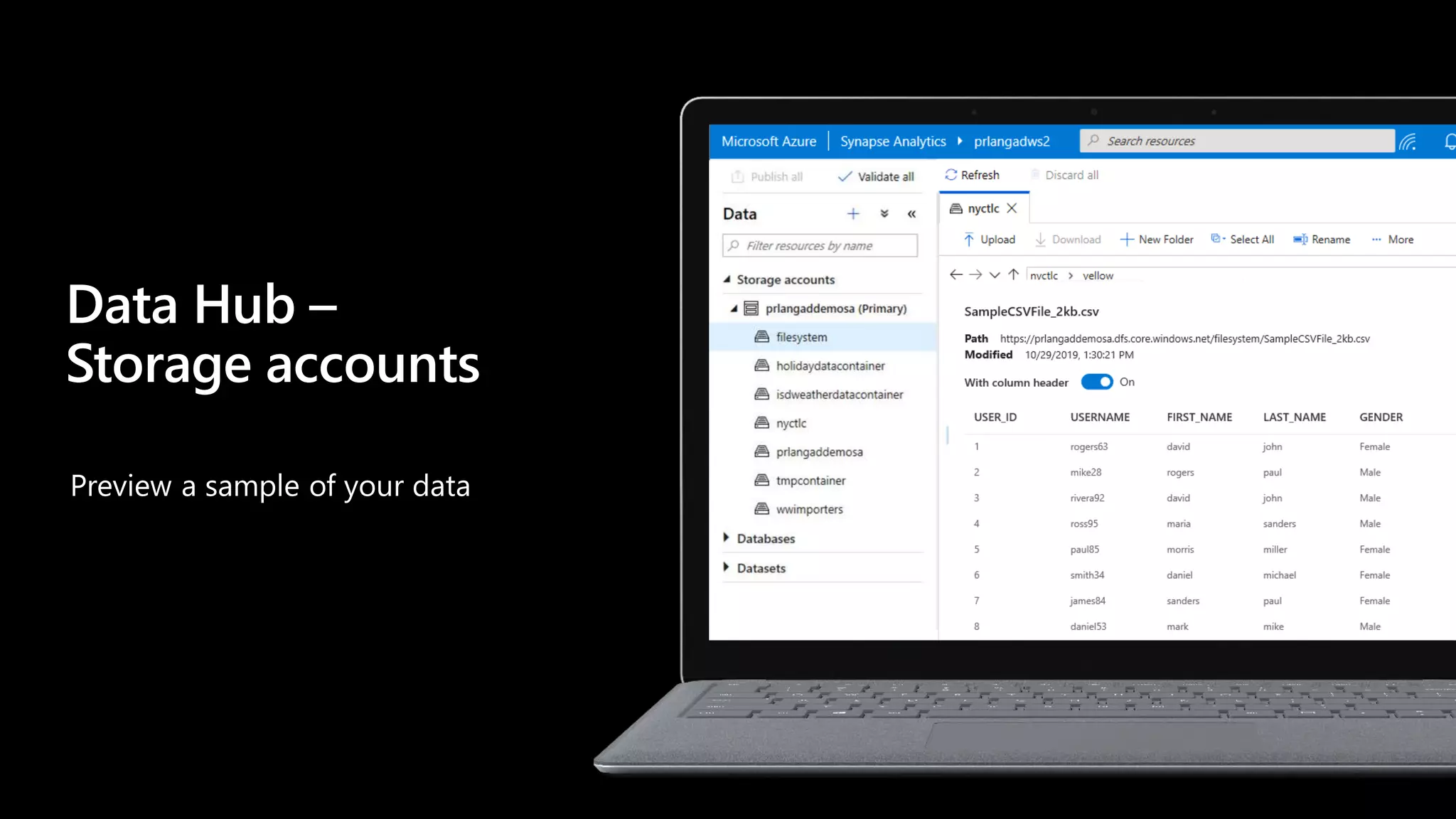

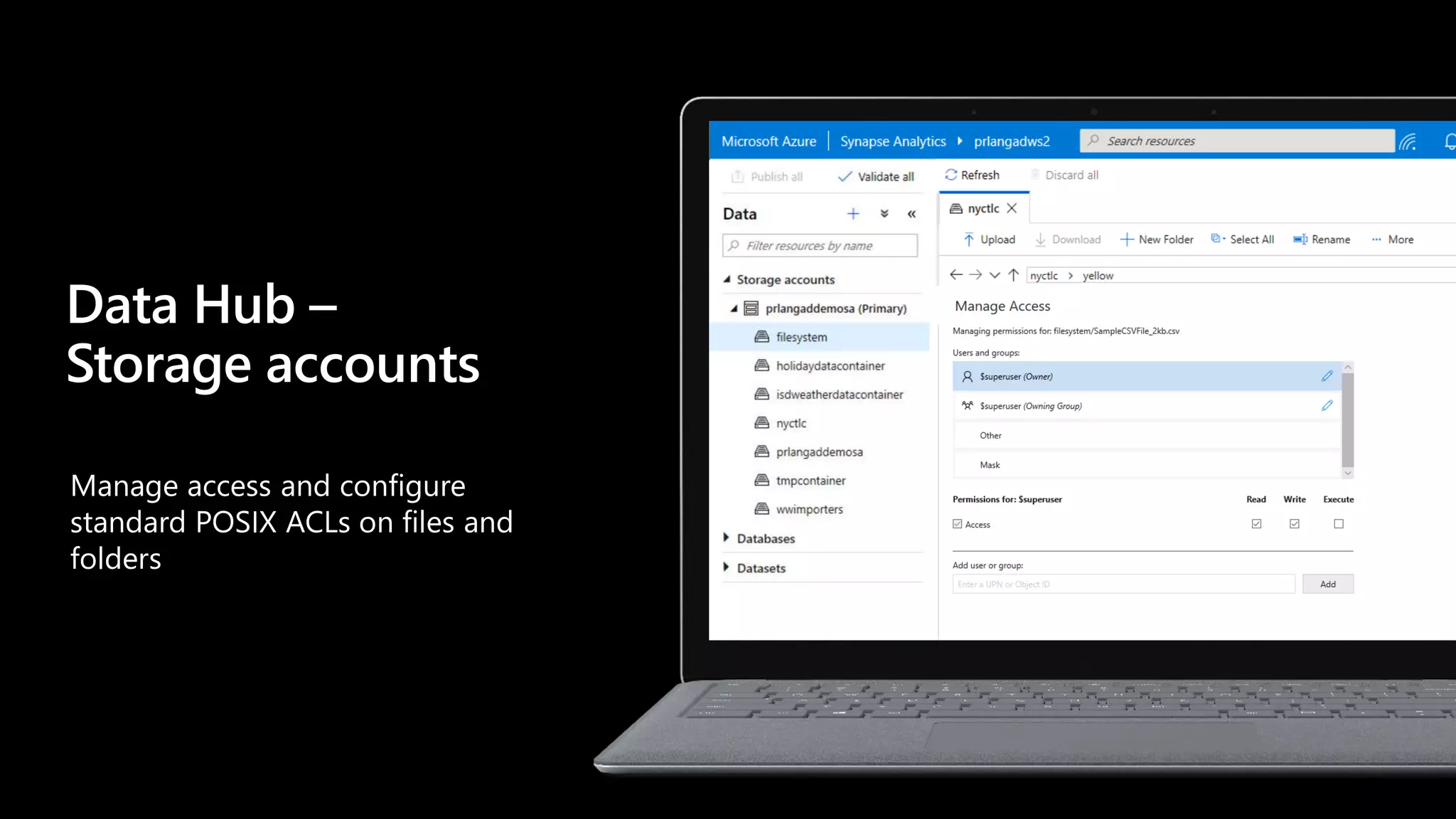

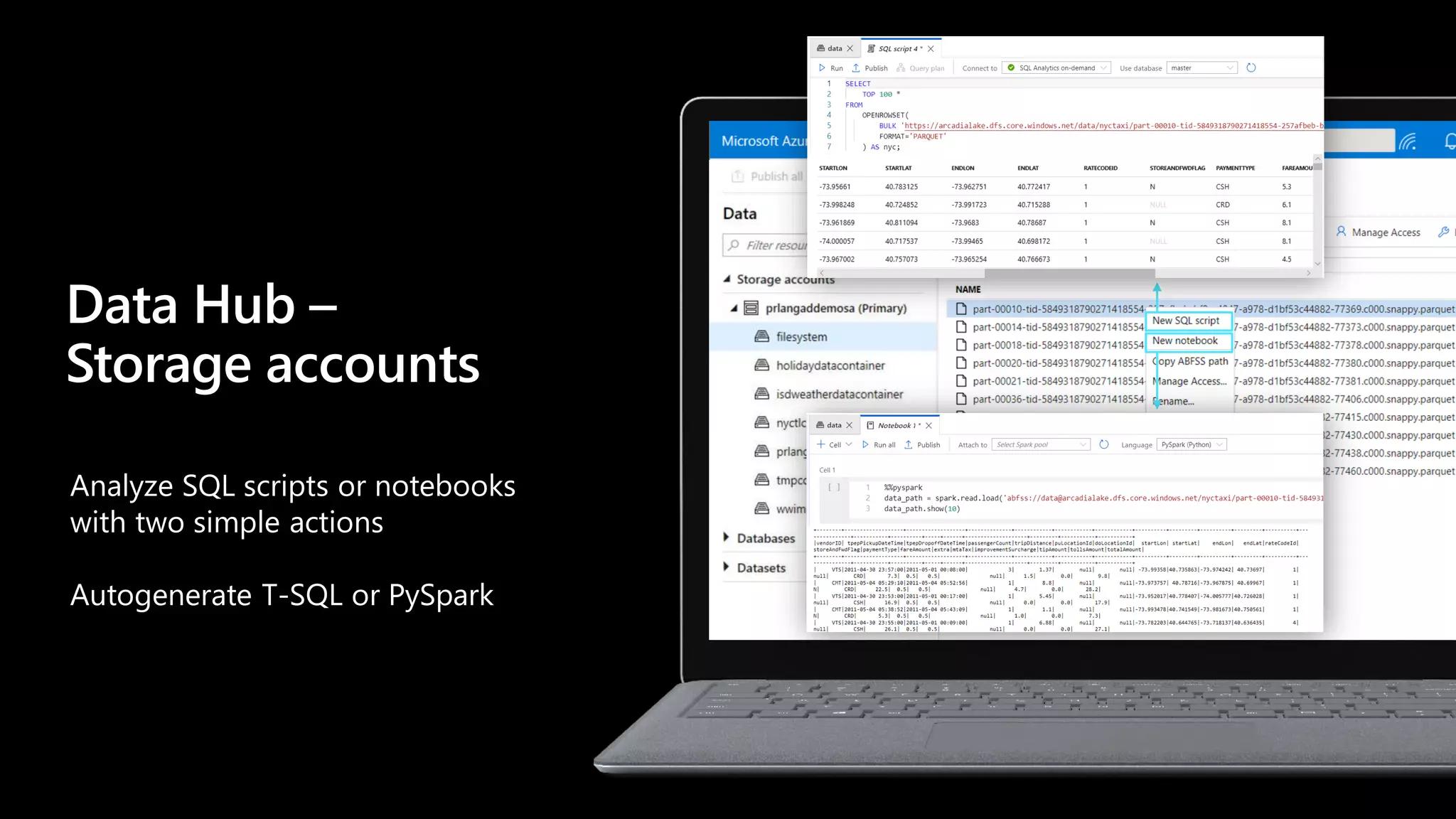

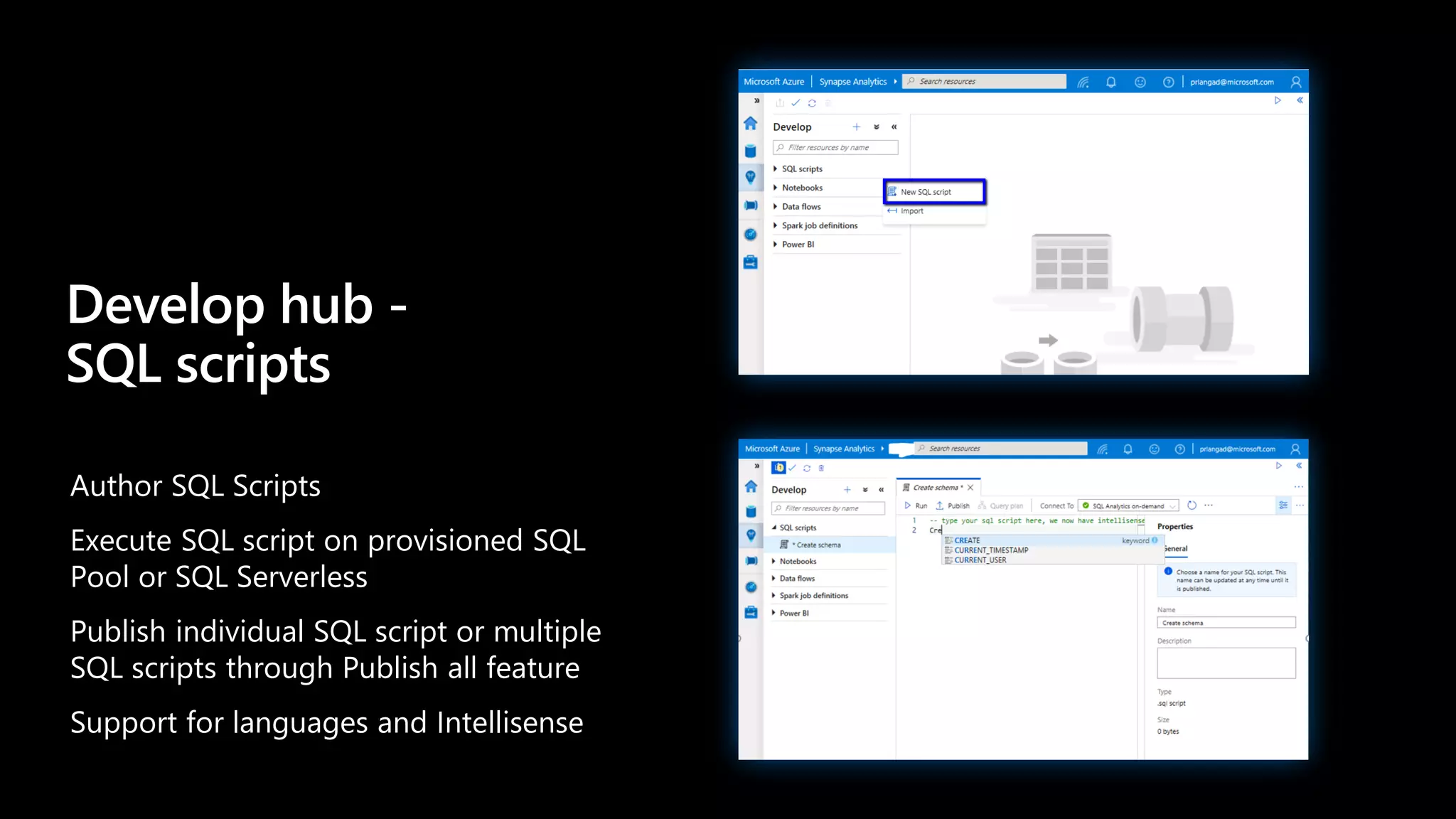

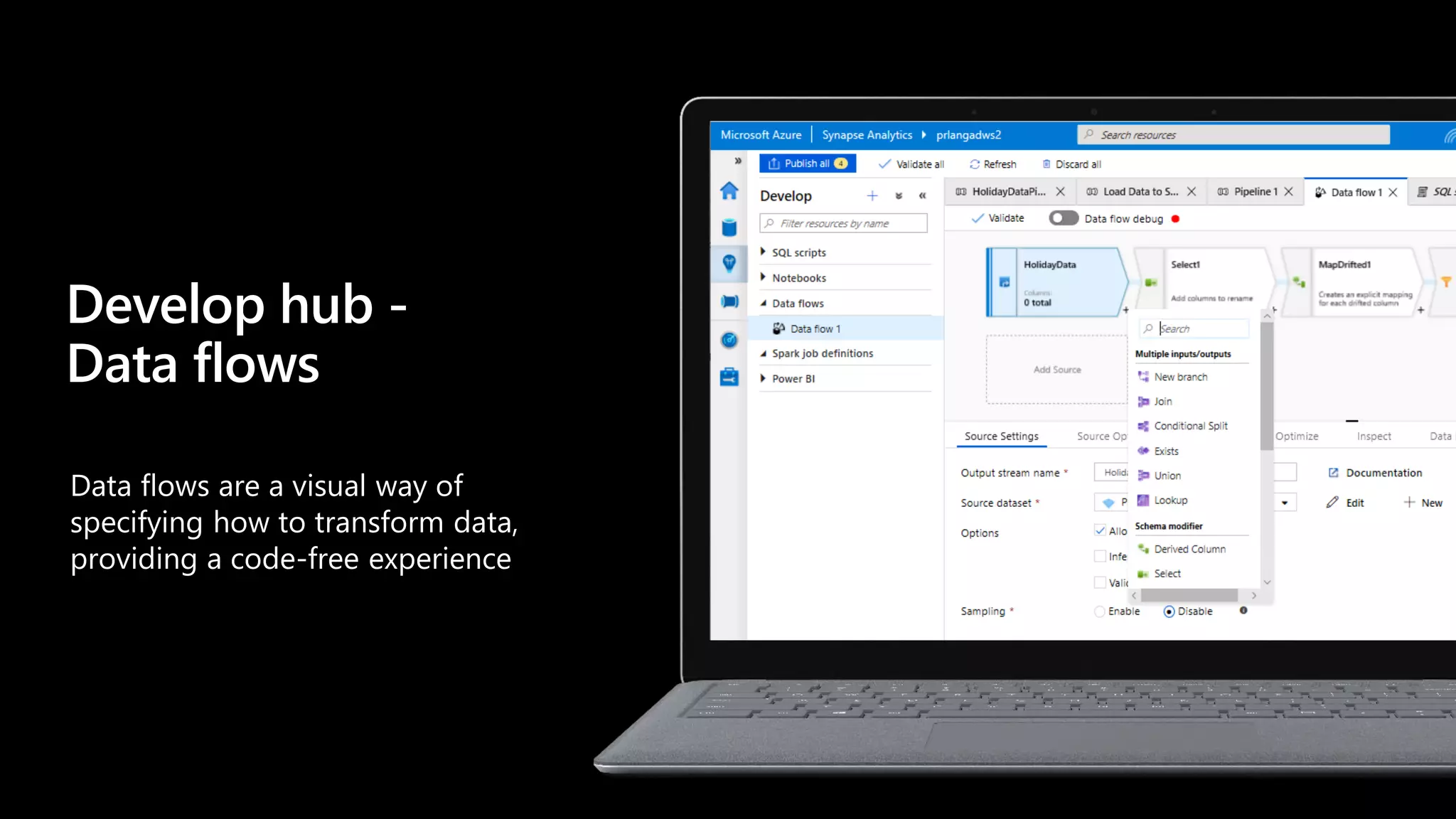

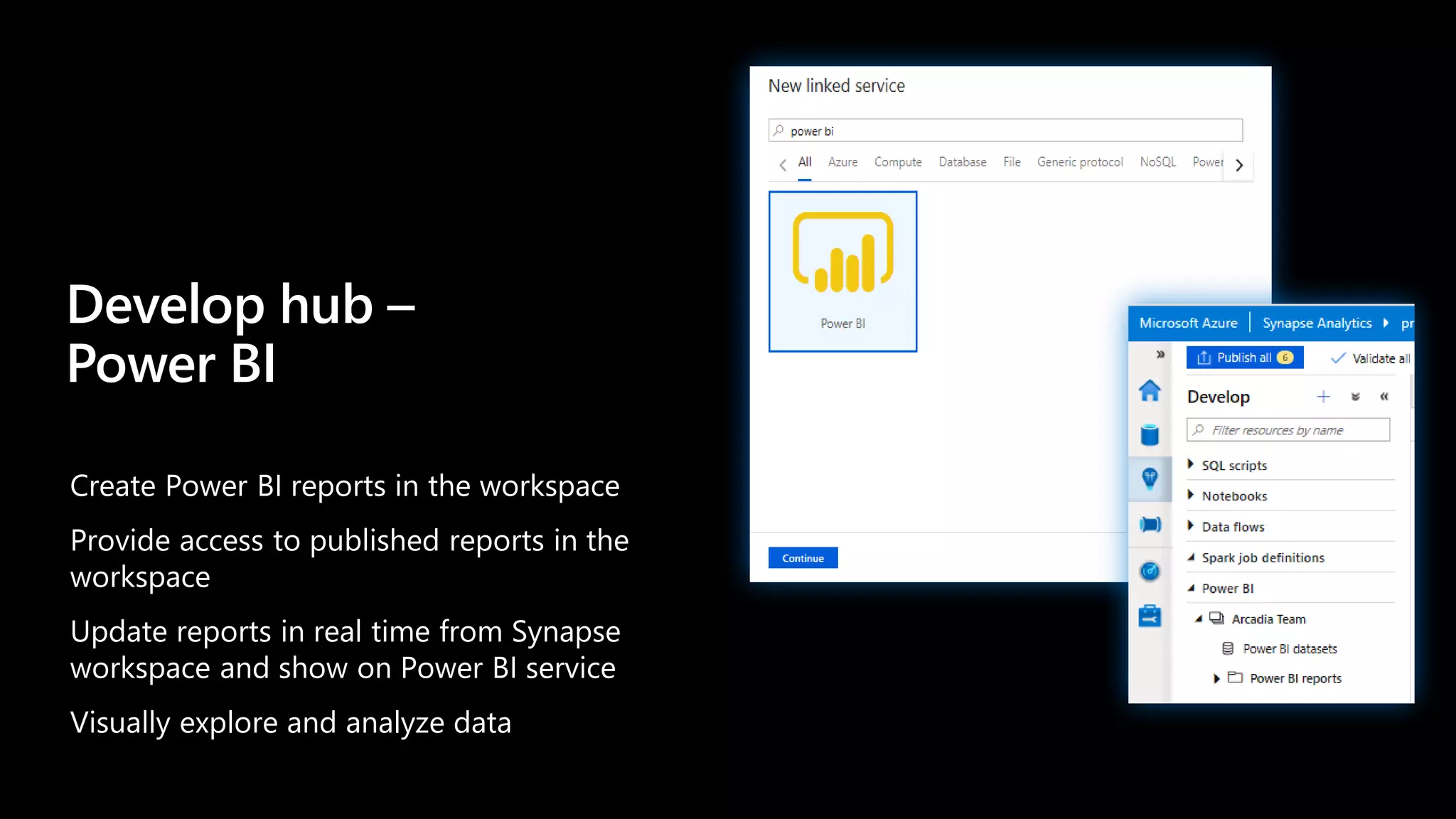

The document discusses Azure Synapse Analytics, which Microsoft markets as a "limitless analytics service" for drawing insights from all of an organization's data sources. It provides a unified experience in Azure Synapse Studio for SQL, Apache Spark, pipelines, and BI/AI integration. Key capabilities include cloud-scale analytics, a modern data warehouse with both SQL and Spark runtimes, and an integrated platform for AI, BI, and continuous intelligence. Synapse Studio is the main interface, with hubs for overview (Home), data exploration (Data), development (Develop), orchestration (Integrate), and management (Manage).

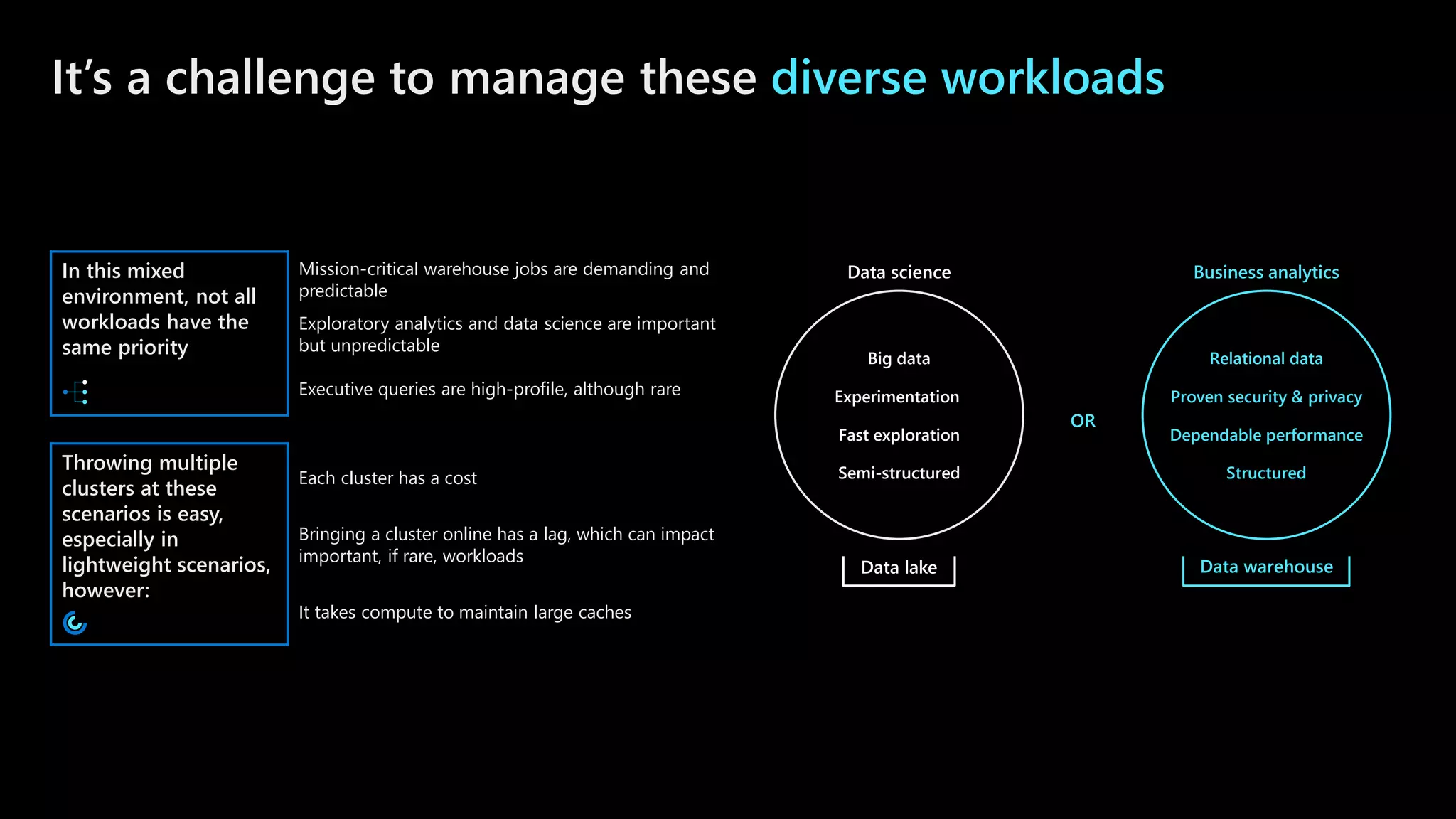

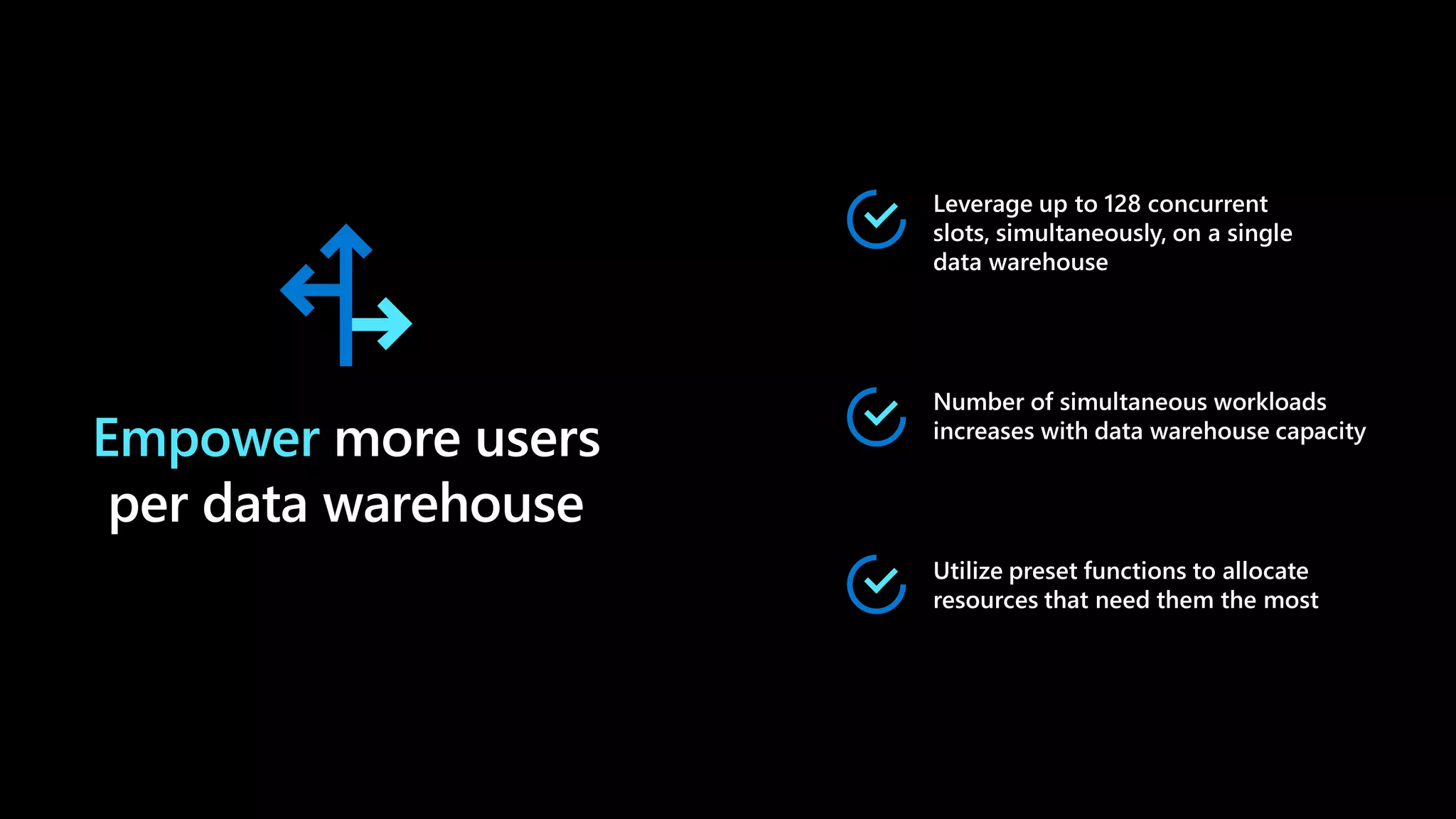

![Intra-cluster workload isolation (scale in): Marketing (40%) and Sales (60% reserved, 100% cap) workloads sharing the compute of one 1000c DWU data warehouse with a local in-memory + SSD cache](https://image.slidesharecdn.com/analyticsinaday09-201007042652/75/Analytics-in-a-day-80-2048.jpg)

Intra-cluster workload isolation (scale in):

- Workload-aware query execution
- Workload isolation
  - Multiple workloads share deployed resources
  - Reservation or shared resource configuration
  - Online changes to workload policies

The slide's example reserves 60% of the warehouse's resources for Sales, lets it use up to 100%, and limits it to six concurrent requests: `CREATE WORKLOAD GROUP Sales WITH ( MIN_PERCENTAGE_RESOURCE = 60, CAP_PERCENTAGE_RESOURCE = 100, MAX_CONCURRENCY = 6 )`
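The slide's concurrency figure can be related to its resource percentages. In the generally available `CREATE WORKLOAD GROUP` syntax, concurrency is not set directly. It follows from `REQUEST_MIN_RESOURCE_GRANT_PERCENT`, the share of resources each request receives: roughly `MIN_PERCENTAGE_RESOURCE / REQUEST_MIN_RESOURCE_GRANT_PERCENT` requests are guaranteed, and up to the effective cap divided by the same grant can run. Here the effective cap is `CAP_PERCENTAGE_RESOURCE` lowered by whatever other groups have reserved. The Python below is a minimal sketch of that arithmetic under stated assumptions, not Synapse's implementation. The `WorkloadGroup` class and the `validate`, `effective_cap`, and `concurrency` helpers are hypothetical. Per-service-level minimums are ignored. The 10% per-request grant and the Marketing settings (no reservation, 40% cap) are assumptions chosen to mirror the slide's 60/40 split and six-request figure.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class WorkloadGroup:
    """Hypothetical model of a workload group's resource settings (percentages)."""
    name: str
    min_percentage_resource: float             # reserved; not shared with other groups
    cap_percentage_resource: float             # upper limit for all requests in the group
    request_min_resource_grant_percent: float  # resources granted to each request


def validate(groups: list[WorkloadGroup]) -> None:
    """Check basic constraints; per-service-level minimums are ignored (assumption)."""
    total_reserved = sum(g.min_percentage_resource for g in groups)
    if total_reserved > 100:
        raise ValueError(f"reservations total {total_reserved}%, more than 100%")
    for g in groups:
        if g.request_min_resource_grant_percent <= 0:
            raise ValueError(f"{g.name}: per-request grant must be positive")
        if g.min_percentage_resource > g.cap_percentage_resource:
            raise ValueError(f"{g.name}: reservation exceeds cap")


def effective_cap(group: WorkloadGroup, groups: list[WorkloadGroup]) -> float:
    """The group's cap, lowered by whatever the other groups have reserved."""
    reserved_by_others = sum(
        g.min_percentage_resource for g in groups if g.name != group.name
    )
    return min(group.cap_percentage_resource, 100 - reserved_by_others)


def concurrency(group: WorkloadGroup, groups: list[WorkloadGroup]) -> tuple[int, int]:
    """Return (guaranteed, maximum) concurrent requests for the group."""
    grant = group.request_min_resource_grant_percent
    guaranteed = int(group.min_percentage_resource // grant)
    maximum = int(effective_cap(group, groups) // grant)
    return guaranteed, maximum


if __name__ == "__main__":
    # Sales mirrors the slide; the 10% grant and the Marketing group are hypothetical.
    sales = WorkloadGroup("Sales", 60, 100, 10)
    marketing = WorkloadGroup("Marketing", 0, 40, 10)
    groups = [sales, marketing]
    validate(groups)
    print(concurrency(sales, groups))      # (6, 10)
    print(concurrency(marketing, groups))  # (0, 4)
```

With these assumed settings, Sales is guaranteed six concurrent requests and can burst to ten because Marketing reserves nothing. If Marketing instead reserved 40%, the Sales effective cap would drop to 60%, and Sales could run at most six requests.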