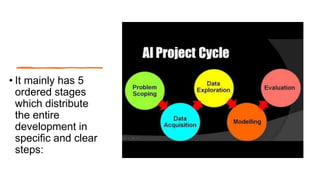

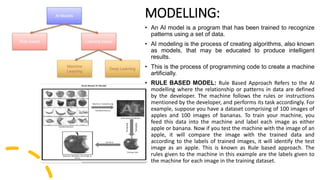

The document describes the five stages of the AI project cycle: problem scoping, data acquisition, data exploration, modelling, and evaluation. It gives an example for each stage: identifying the problem to be solved (problem scoping), collecting reliable data from various sources (data acquisition), arranging the data in tables and charts so it is easier to understand (data exploration), building models from the visualized data (modelling), and testing the model's performance (evaluation).
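
The five stages can be sketched as a small Python pipeline. This is only an illustrative sketch, not taken from the document: the problem statement, the hard-coded records, the threshold rule used as a "model", and all function names (`acquire_data`, `explore`, `build_model`, `evaluate`, `run_cycle`) are assumptions chosen to keep the example short.

```python
from statistics import mean

# 1. Problem scoping: state the question to answer (hypothetical example).
PROBLEM = "Predict whether a student passes from the hours they studied"


# 2. Data acquisition: in practice this would come from surveys, sensors,
#    or databases; here it is made-up (hours_studied, passed) data.
def acquire_data():
    return [(1, 0), (2, 0), (3, 0), (4, 1), (5, 1), (6, 1), (2.5, 0), (4.5, 1)]


# 3. Data exploration: summarise the data so patterns become visible.
def explore(records):
    passed = [hours for hours, label in records if label == 1]
    failed = [hours for hours, label in records if label == 0]
    return {
        "mean_hours_passed": mean(passed),
        "mean_hours_failed": mean(failed),
    }


# 4. Modelling: a deliberately simple rule (assumed for illustration) that
#    places a threshold halfway between the two class means.
def build_model(summary):
    threshold = (summary["mean_hours_passed"] + summary["mean_hours_failed"]) / 2
    return lambda hours: 1 if hours >= threshold else 0


# 5. Evaluation: measure accuracy on records the model has not seen.
def evaluate(model, records):
    correct = sum(1 for hours, label in records if model(hours) == label)
    return correct / len(records)


def run_cycle():
    data = acquire_data()
    train, test = data[:6], data[6:]  # hold out the last two records
    summary = explore(train)
    model = build_model(summary)
    return summary, evaluate(model, test)


if __name__ == "__main__":
    summary, accuracy = run_cycle()
    print(PROBLEM)
    print("Exploration summary:", summary)
    print("Accuracy on held-out data:", accuracy)
```

Keeping each stage in its own function mirrors the cycle: if evaluation shows poor performance, an earlier stage (more data, a different model) can be revisited without rewriting the rest.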