Gaudet - BioDBcore

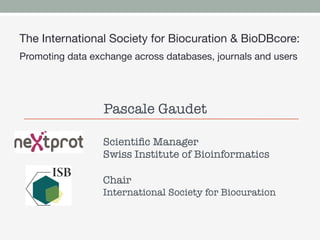

This is a slightly extended version of the BioDBcore presentation I gave at Biocuration 2012, in the workshop "Databases & Journals – How to have a sustainable long-term plan for journals and databases?"

Recommended

2018 04-03-shorthouse

Biodiversity informatics describes integrating biological research, computational science, and software engineering to deal with biotic data. Biodiversity data is used for taxonomy, biogeography, ecology, conservation and more. Data is collected, standardized, digitized and published using Darwin Core and made available through organizations like GBIF. Key challenges include dealing with synonyms and standardizing data across sources.

Cross-linked metadata standards, repositories and the data policies - The Bio...

A 20 minute presentation given in Denver (CO) on the 17th September as part of the Biosharing Registry WG, Metadata Standards Catalog WG, and Publishing Data Workflows WG joint session at the Research Data Alliance 8th Plenary (part of International Data Week).

This presentation covers the explosion of metadata standards and databases in the life, biomedical and environmental sciences and how BioSharing is helping to understand this landscape, both in terms of the relationship between standards and other standards and databases, and the life cycle and evolution of each resource. BioSharing also links these resources to the data policies that recommend them (for example, from funding agencies or journal publishers), enabling an understanding of the entire data cycle, from conception to publishing and storage.

The Diversity of Biomedical Data, Databases and Standards (Research Data Alli...

A 10 minute presentation given in Denver (CO) on the 15th September as part of the IG Elixir Bridging Force, WG Biosharing Registry, WG Data Type Registries, WG Metadata Standards Catalog joint session of the Research Data Alliance 8th Plenary (part of International Data Week).

This presentation covers the proliferation of data, databases, and data standards in biomedicine, and how BioSharing can help inform and educate users on this landscape and relationships between data, databases and data standards.

Data 101 - An Introduction to Research Data Management

An introductory class on research data management for scientists, designed and presented by Lisa Federer, Health and Life Sciences Librarian at UCLA Louise M. Darling Biomedical Library.

COBWEB Project Status

Presented by Chris Higgins, COBWEB project manager, at the Dyfi Biosphere Partnership Annual meeting, 15 May 2013, Machynlleth, Wales.

Data editors meeting at SEFS

The document discusses encouraging open data initiatives and publishing freshwater biodiversity data. It notes that projects like BioFresh aim to build a platform to improve management of freshwater biodiversity by encouraging data sharing. While basic data like species occurrences are well-suited for sharing through GBIF, richer datasets require documentation. The document proposes several options for publishing and archiving richer datasets, including metadata papers and making data available as supplementary materials with documentation in a central metadatabase.

Canadensys Explorer presentation

SiBBr 4th Workshop, Petrópolis, Rio de Janeiro, Brazil

Authors: Christian Gendreau, Anne Bruneau, Peter Desmet and David Shorthouse.

RDM engage poster_v2

This document promotes sharing openly licensed research material via Wikimedia Commons to help research have international impact. It encourages linking research back to a repository with a DOI and using shared data to improve Wikipedia articles. The document advertises an event at the University of Leeds to teach about editing Wikipedia and participate in an editathon to link research data management with open science. It provides a QR code and email to provide help with sharing research data.

OAIS: What is it and Where is it Going? - Don Sawyer (2002)

Open Archival Information System (OAIS) workshop. Presented by Don Sawyer of NASA Goddard and Lou Reich, CSC contractor to NASA. Sponsored by ALA Federal and Armed Forces Libraries Roundtable (FAFLRT). Presented on June 15, 2002 at ALA Annual Conference.

Going for GOLD - Adventures in Open Linked Geospatial Metadata

Presentation by James Reid, given to the AGI GeoCommunity11 conference, in Nottingham UK, September 2011.

Promotion of open access repositories

This document discusses strategies for promoting open access repositories at institutions. It recommends establishing repositories that provide long-term preservation of research outputs, usage statistics, and professional profiles for researchers and research managers. Advocacy options include top-down approaches that require deposit mandates and gain high-level support, as well as bottom-up approaches that locate champions and engage students. Targeted advocacy involves identifying publishers that allow self-archiving and working with departments most likely to benefit. Marketing the repository is also important.

Requirement of research repository for pitad

The document proposes establishing a Research Repository for PITAD to store and distribute research outputs in response to trends in open access publishing. The repository would collect, organize, preserve and disseminate research products from PITAD in digital format to increase visibility and impact. Faculty would deposit works for long-term retention and access. The repository would be searchable on the web and mirror PITAD's organizational structure. It would contain works like articles, reports, and datasets to showcase research and provide access to publicly funded work.

Jim Woolley - Name Registration: One Less Impediment to Taxonomy

Revolutionising taxonomy through an open-access web-register for animal names and descriptions

ESA Program Symposium: December, 2005

Implementing and Institutional Repository for Sharing, Archiving, and Accessi...

University of Michigan Taubman Health Sciences Library

A poster presentation from the Medical Library Association 2017 Annual Meeting by Lynne Frederickson, Marisa Conte, and Amy Neeser.

DataOne - Suzie Allard - RDAP12

DataOne

Suzie Allard, Ph.D.

University of Tennessee

Presentation at Research Data Access & Preservation Summit

21 March 2012

Who is doing what, and how do we know? [PEPRS]

Presented by Peter Burnhill at e-Journals are forever? Preservation and Continuing Access to e-journal Content. A DPC, EDINA and JISC joint initiative, British Library, London, 26 April 2010.

re3data.org – Registry of Research Data Repositories

Heinz Pampel | GFZ German Research Centre for Geosciences, LIS

Maxi Kindling | Humboldt-Universität zu Berlin, Berlin School of Library and Information Science

Frank Scholze | Karlsruhe Institute of Technology, KIT Library

RDA-Deutschland-Treffen 2015 | Potsdam, November 26, 2015

A research passport: library requirements

Presentation from Authentication, Authorisation and Accounting Study Workshop in Brussels on the 12th July 2012

CSIRO National Research Collections

John Morrisseey - CSIRO. CSIRO National research collections Australia (NRCA). Specimen identifiers - possible futures.

2 Nov 2016, Canberra. International Geo-Sampling Number (IGSN) Symposium

David Van Enckevort - FAIR sample and data access

David van Enckevort from the University of Groningen describes FAIR Sample and Data Access in Biobanking and Biorepositories.

This talk was sponsored by the NIH Data Science Special Interest Group and was part of a webinar panel on June 23, 2017 on Global Biobanking and Access to Specimens.

NIH Data Science Special Interest Group

This document discusses making biobank data and samples FAIR (Findable, Accessible, Interoperable, and Reusable).

It explains the four FAIR principles and provides examples of how to apply each one. To make resources findable, they need unique and persistent identifiers, rich metadata, and to be discoverable through other systems. To make them accessible, they need to be retrievable using open standards. To make them interoperable, standards for knowledge representation like ontologies should be used. And to make them reusable, they need to be richly described and released with clear usage terms and provenance.

The document recommends three steps to make samples and data FAIR: include sufficient metadata using

BioSharing - Mapping the landscape of Standards, Database and Data Policies i...

A 20 minute talk on BioSharing, presented at the COST CHARME data standards conference in Warsaw, Poland, on June 21st, 2016.

Using and extending Darwin Core for structured attribute data

Presented at the Biodiversity Information Standards (Taxonomic Databases Working Group) 2013 meeting in Florence, Italy on 29 October 2013. Essentially, an introduction to the new trait repository of Encyclopedia of Life.

Using DAF as a Data Scoping Tool, by Sarah Jones

This presentation describes the Data Asset Framework (DAF) as a tool for scoping data content for institutional repositories. It was given as part of module 1 of a 5-module course on digital preservation tools for repository managers, presented by the JISC KeepIt project. For more on this and other presentations in this course look for the tag 'KeepIt course' in the project blog http://blogs.ecs.soton.ac.uk/keepit/

BaurCHCArchivist

The document discusses innovative approaches to archival processing and making archival collections accessible. It describes three phases: 1) Assessment and processing to reduce backlogs while increasing finding aids, 2) Collaborative processing projects to share standards and training, and 3) Creating access points through descriptive standards, data management systems, digitization, and social media to advertise collections. The key is using new technologies and standards along with collaboration to connect more researchers to archival materials.

WorldCat Local: Global Network, Local Results

Sirsi Midwest Users' Group (SMUG) Annual Conference, July 27, 2007

OCLC is piloting its new WorldCat Local service that will allow your library to customize WorldCat.org as a solution for local discovery and delivery services. WorldCat Local interoperates with locally maintained services like circulation, resource sharing and resolution to full text to present a locally branded interface to your patrons. Attend this session to learn how this new service works and to see the beta being run at the University of Washington Libraries.

Biodiversity Heritage Library in Australia

The Atlas of Living Australia project is a collaboration between the Australian Government and various research institutions to create a biodiversity data management system. It aims to link biological knowledge with scientific collections and make data freely accessible. The project is funded by the Australian Government and involves developing tools and data stores to share biodiversity information and support research. Major milestones include releasing a new interface for an existing site by December 2010 and implementing ingestion workflows by March 2011.

Gsc mibbi-2010-12-01

MIGS/MIMS and MIENS extend standards requested by DDBJ/EMBL/GenBank when submitting genome sequences by providing additional context about samples and environments. The Genomic Standards Consortium coordinates workshops and exchange visits to develop shared standards for describing genomic and metagenomic samples. They also rely on local hosts and sponsors to support workshops on developing standards to support genomic and metagenomic science.

Cloud computing

This document provides an introduction to cloud computing and parallel/distributed processing. It discusses how web-scale problems involving large amounts of data require distributed computing across large data centers. Different models of cloud computing are described, including utility computing, platform as a service (PaaS), and software as a service (SaaS). The document also discusses how web applications are moving to highly-interactive models enabled by technologies like AJAX. Key aspects of cloud computing covered include large data centers, virtualization, MapReduce processing for large datasets, and interactive web applications.

Viewers also liked

Parc national de pingualuit (evan burman)

This document appears to list voice file names without any additional context or information. It includes the voice file names 001.3ga, 002.3ga, 003.3ga, 005.3ga, and 006.3ga.

Rinaldi - ODIN

This document describes a study using the ODIN text mining system to extract relationships between genes, drugs, and diseases from biomedical literature and validate those relationships against the PharmGKB knowledge base. The researchers developed methods to improve relationship ranking and conducted a revalidation experiment with curators from Stanford evaluating a sample of automatically extracted relationships. The curators provided feedback that led to improvements in the interactive curation interface to better suit their needs. Lessons were learned about obtaining user requirements and rapidly implementing and testing prototypes to develop usable curation tools.

Masson - ViralZone

Viralzone: a web resource dedicated to viruses, by Patrick Masson, Chantal Hulo, Edouard De Castro, Lydie Bougueleret, Philippe Le Mercier and Ioannis Xenarios.

Presented at the 5th International Biocuration Conference, hosted by PIR in Washington, DC, April 2-4, 2012.

Bairoch ISB closing-talk: CALIPHO

Plenary talk from Dr Amos Bairoch presented at the 5th International Biocuration Conference, hosted by PIR in Washington, DC, April 2-4, 2012.

BioDBCore: Current Status and Next Developments

The document discusses BioDBCore, a collaborative project aimed at gathering and standardizing metadata about biological databases. It provides an overview of BioDBCore's goals of improving data integration, encouraging standards, and maximizing resources. BioDBCore is led by Pascale Gaudet and Philippe Rocca-Serra and implemented on the BioSharing website. The document outlines the BioDBCore descriptors for databases and provides an example entry for the dictyBase database. It discusses maintaining and expanding BioDBCore records with the help of database providers and journals.

José Cruz Toledo - Aptamer basebc2012

Aptamer Base: A collaborative knowledge base to describe aptamers and SELEX experiments, by Cruz-Toledo, Jose; McKeague, Maureen; Zhang, Xueru; Giamberardino, Amanda; McConnell, Erin; Francis, Tariq; DeRosa, Maria; Dumontier, Michel.

Presented at the 5th International Biocuration Conference, hosted by PIR in Washington, DC, April 2-4, 2012.

Similar to Gaudet - BioDBcore

NIH iDASH meeting on data sharing - BioSharing, ISA and Scientific Data

1) The document discusses Susanna-Assunta Sansone's roles and work related to promoting FAIR data standards and practices.

2) It highlights some of her leadership positions with organizations like BioSharing that work to map and promote standards.

3) The document also discusses Scientific Data, a peer-reviewed journal launched by Nature Publishing Group to publish detailed descriptions of scientifically valuable datasets to facilitate reuse.

HKU Data Curation MLIM7350 Class 9

Chris Hunter's slides from Class 9 of the HKU Data Curation course (MLIM7350), giving a biocurator's perspective on data curation.

Creating a sustainable business model for a digital repository: the Dryad exp...

Creating a sustainable business model for a digital repository: the Dryad experience

Peggy Schaeffer

Datadryad.org

Presentation at Research Data Access & Preservation Summit

22 March 2012

Scientific Data overview of Data Descriptors - WT Data-Literature integration...

Susanna-Assunta Sansone

This document introduces Scientific Data, a new peer-reviewed journal for publishing data descriptors from Nature Publishing Group. It will provide structured metadata and narrative articles to describe datasets for reuse. The journal is now open for submissions and will launch in May 2014, featuring an advisory panel and sections for standardized data descriptor articles and experimental metadata. It aims to give proper credit for data sharing and promote open access, reuse and peer review of curated scientific datasets.

BioMed Central's open data initiatives

BioMed Central is a large open access publisher that is committed to open data initiatives. They have implemented several solutions to promote open data practices, including data journals, an open data award, and enabling data citation. They also work to integrate data hosting and deposition, address data licensing issues, and provide guidance on best practices. Future goals include adding more value to text and data mining applications and building business models around open data.

Scholze liber 2015-06-25_final

Researchers require infrastructures that ensure a maximum of accessibility, stability and reliability to facilitate working with and sharing of research data. Such infrastructures are being increasingly summarised under the term Research Data Repositories (RDR). The project re3data.org – Registry of Research Data Repositories – began to index research data repositories in 2012 and offers researchers, funding organisations, libraries and publishers an overview of the heterogeneous research data repository landscape. In December 2014 re3data.org listed more than 1,030 research data repositories, which are described in detail using the re3data.org schema (http://dx.doi.org/10.2312/re3.003). Information icons help researchers to easily identify an adequate repository for the storage and reuse of their data. This talk describes the heterogeneous RDR landscape and presents a typology of institutional, disciplinary, multidisciplinary and project-specific RDR. Further, it outlines the features of re3data.org and shows current developments for integration into data management planning tools and other services.

By the end of 2015 re3data.org and Databib (Purdue University, USA) will merge their services, which will then be managed under the auspices of DataCite. The aim of this merger is to reduce duplication of effort and to serve the research community better with a single, sustainable registry of research data repositories. The talk will present this organisational development as a best practice example for the development of international research information services.

The blessing and the curse: handshaking between general and specialist data r...

This document discusses the challenges of depositing data in both generalist and specialist repositories. It notes that while specialized repositories are best for standardized data, many datasets fall into the "long tail" of less common types. Generalist repositories can accommodate long-tail data but require redundant metadata. The document explores how to link data and publications between repositories and assess data quality. It concludes that promoting standards for interoperability between repositories and rallying the research community around those standards could help address these issues.

dkNET Office Hours - "Are You Ready for 2023: New NIH Data Management and Sha...

This document summarizes an online meeting about resources available from the National Institute of Diabetes and Digestive and Kidney Diseases (NIDDK) to support researchers in implementing the new 2023 NIH data management and sharing policy. It discusses the NIDDK Central Repository, which acquires, maintains and distributes data and biospecimens from NIDDK-funded clinical studies. Eligibility and requirements for submitting resources to the repository are also covered.

ACRL STS Liaisons Forum - AIBS

Ginny Pannabecker, Life Science & Scholarly Communications Librarian at Virginia Tech, is an ACRL Science and Technology Section (STS) liaison to the American Institute of Biological Sciences (AIBS). This presentation shares key points for librarians and researchers from an AIBS workshop on "Changing Practices in Data Publications," which took place in December 2014 and involved representatives from federal funding agencies; publishers and librarians; scientific societies and journals; and data services / providers.

FAIRsharing and Engineering Research Data Management

A 7 minute presentation that formed the basis of a YouTube video presented at the RDA P12 in Gaborone, Botswana in November 2018.

Overview of standards/stakeholders in life science (RDA Engagement Interest G...

Susanna-Assunta Sansone

The document discusses standards for describing and reporting life sciences experiments. It notes that while there are over 300 such standards, fragmentation is a major issue that hinders data integration. It proposes creating a registry of standards to help stakeholders identify which standards to use or recommend based on criteria like documentation, adoption level, and interoperability. The registry would also track standards' associations with databases and data policies.

Open Access Week - Oxford, 20-24 Oct 2014

This document summarizes Susanna-Assunta Sansone's presentation on open access and open data at Nature Publishing Group. Some key points discussed include:

- The benefits of open data including reducing errors/fraud and increasing return on investment in research. However, barriers also exist such as lack of incentives and standards.

- Recent initiatives at NPG to improve data/reproducibility such as requiring data behind figures and expanding methods sections.

- The role of data journals in increasing credit/visibility for shared data and promoting standards/best practices.

- Market research found researchers want increased visibility, usability, and credit for sharing their data.

Data accessibility and challenges

This document discusses data accessibility and challenges. It covers the data life cycle, including planning, generating data, reliability, ownership, metadata, versioning, and publishing. It discusses expectations for accessing and sharing data. Open access data policies are encouraged by research funders, journals, and initiatives like DataCite to assign identifiers to research data. Data can be shared through repositories, journals, websites, or informally between researchers. Factors that affect sharing and accessing data include size, computing needs, standards, repositories, data nature, governance, and metadata.

ELIXIR Webinar: BioSharing

This document provides an overview of BioSharing.org, a portal that monitors and curates standards, databases, and data policies to help inform and educate users. It summarizes key features including tracking over 225 content standards, 115 databases, and 554 data policies. The portal aims to help users understand how standards are used and make informed decisions on standards selection. It also links standards to training materials and allows custom collections and recommendations to be created.

RDA Presentation to the International Federation of Library Associations

A review of the purpose, status, and activity of RDA and how these all relate to the international library community.

How and Why to Share Your Data

This document discusses sharing research data. It describes the Data Services Center, which provides data services including finding and providing access to datasets. It notes that funders and publishers require data sharing, and that shared data receives more citations. It recommends sharing the minimum data needed to reproduce results, and considering timing, usability and granularity of data sharing. For sharing methods, it recommends using disciplinary or general repositories like UR Research, Dryad and REACTUR, which provide long-term preservation and access. Workshops and help are available for data management and sharing.

Managing Big Data - Berlin, July 9-10, 201.

Susanna-Assunta Sansone is a data consultant and honorary academic editor who works on several projects related to making data FAIR (Findable, Accessible, Interoperable, Reusable). She is the associate director of Scientific Data, a peer-reviewed journal focused on publishing data descriptors to describe and provide access to scientifically valuable datasets. The goal of Scientific Data is to help promote open science and data reuse by publishing structured metadata and narratives about datasets alongside traditional research articles.

Making Repositories FAIR (via metadata in FAIRsharing.org

A 10 minute presentation on how we can make repositories FAIR, primarily through storing their metadata on FAIRsharing.org. Presented at the FAIRsFAIR FAIR Semantics & FAIR Repositories pre-RDA P14 meeting in Helsinki, Finland on the 22nd October 2019. FAIRsharing can be used to edit and store metadata on repositories from across the natural sciences, engineering sciences, social sciences and humanities. This metadata is marked-up in schema.org and bioschemas (where relevant) and is given a citable DOI. This metadata can be used to power DMP tools and wizards and can also be used to perform FAIR assessments, such as through the FAIR evaluator or FAIRshake.

Data sharing as part of the research workflow

Presentation given at the CODATA "Data Perspective beyond Alliances" meeting on 3rd March 2016 in Tokyo.

SciDataCon 2014 Data Papers and their applications workshop - NPG Scientific ...

Susanna-Assunta Sansone

Part of the SciDataCon14 workshop on "Data Papers and their applications" run by myself and Brian Hole to help attendees understand current data-publishing journals and trends and help them understand the editorial processes on NPG's Scientific Data and Ubiquity's Open Health Data.

Gaudet - BioDBcore

- 1. The International Society for Biocuration & BioDBcore: Promoting data exchange across databases, journals and users. Pascale Gaudet, Scientific Manager, Swiss Institute of Bioinformatics; Chair, International Society for Biocuration

- 2. The need
  - Databases: improve data integration from published papers
  - Journals: link to database objects
  - Researchers: identify resources
  - Grant submitters: enforce data sharing plans

- 3. An information specification for biological databases. Goals:
  - Gather information required to provide a general overview of the database landscape
  - Encourage consistency and interoperability
  - Promote the use of standards
  - Provide guidance for users
  - Maximize the collective impact of the resources

- 4. BioDBcore descriptors
  - Database name
  - Main resource URL
  - Contact information (e-mail; postal mail)
  - Date resource established (year)
  - Conditions of use (free, or type of license)
  - Scope: data types captured, curation policy, standards used
  - Standards: MIs, data formats, terminologies
  - Taxonomic coverage

- 5. Descriptors (cont’d)
  - Data accessibility / output options
  - Data release frequency
  - Versioning policy / access to historical files
  - Documentation available
  - User support options
  - Data submission policy
  - Relevant publications
  - Resource’s Wikipedia URL
  - Tools available

- 6. Collaborative philosophy
  - Many groups/resources have been providing registries and lists of databases
  - Often not funded, not maintained
  - BioDBcore seeks to collaborate with all interested parties to work together to provide a more permanent solution to database descriptions

- 7. NAR Database issue 2012 (prototype)
  - More than 300 BioDBcore entries submitted

- 10. BioDBcore implementation plan
  - ✓ Consultation with interested parties
  - ✓ Collaborative development of the guidelines
  - ✓ Provide examples: NAR 2012 and BioDB100
  - ✓ Infrastructure to capture BioDBcore information
  - ☐ Refine guidelines
  - ☐ Identify/develop ontologies/vocabularies
  - ☐ Exchange of data among various groups
  - ☐ Providing support for users

- 11. Identifying or developing semantic support
  - Terminologies (BRO, EDAM, others)
  - Policies and guidelines (BioSharing)
  - Name attribution (identifiers.org)
  - Author (ORCID)
  - Identifying resources is better than developing new ones!

- 12. BioDBcore: Participating groups
  - BioDB100
  - BioSharing
  - BioCatalogue
  - Bioinformatics Links Directory
  - Biositemaps
  - CASIMIR
  - MIBBI
  - MIRIAM/identifiers.org
  - Model Organism Databases
  - NIF registry
  - ORCID
  - … and your group! Contact the ISB

- 13. Acknowledgements
  - Susanna-Assunta Sansone
  - Philippe Rocca-Serra
  - Michael Galperin, Editor, Nucleic Acids Research Database issue
  - BioDBcore collaborators
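
The descriptors listed on slides 4 and 5 amount to a flat record per database, so they can be sketched as a simple data structure. The following is only an illustrative sketch, not an official BioDBcore schema: the class name `BioDBcoreRecord`, the field names, the helper `missing_descriptors` and the example values are all assumptions made for this example.

```python
from dataclasses import dataclass, field, fields
from typing import List, Optional


@dataclass
class BioDBcoreRecord:
    """Hypothetical container for the descriptors on slides 4 and 5.

    Field names are invented for illustration; they are not taken
    from any published BioDBcore schema.
    """
    # Slide 4 descriptors
    database_name: Optional[str] = None
    main_resource_url: Optional[str] = None
    contact_information: Optional[str] = None
    year_established: Optional[int] = None
    conditions_of_use: Optional[str] = None
    scope: Optional[str] = None
    standards: List[str] = field(default_factory=list)
    taxonomic_coverage: Optional[str] = None
    # Slide 5 descriptors
    data_accessibility: Optional[str] = None
    data_release_frequency: Optional[str] = None
    versioning_policy: Optional[str] = None
    documentation: Optional[str] = None
    user_support: Optional[str] = None
    data_submission_policy: Optional[str] = None
    relevant_publications: List[str] = field(default_factory=list)
    wikipedia_url: Optional[str] = None
    tools_available: List[str] = field(default_factory=list)


def missing_descriptors(record: BioDBcoreRecord) -> List[str]:
    """Return the names of descriptors that are still unset (None or an empty list)."""
    return [f.name for f in fields(record) if getattr(record, f.name) in (None, [])]


# Placeholder values only; "ExampleDB" is not a real resource.
example = BioDBcoreRecord(database_name="ExampleDB",
                          main_resource_url="https://example.org")
```

Calling `missing_descriptors(example)` lists the descriptors a submitter would still need to supply, which is one way a registry could check an entry for completeness.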