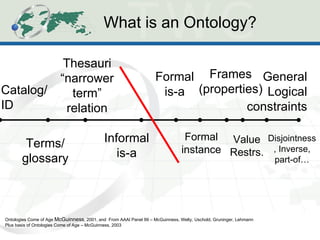

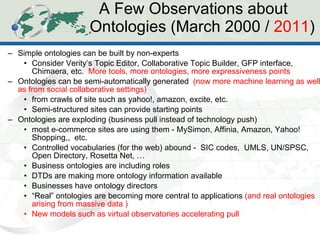

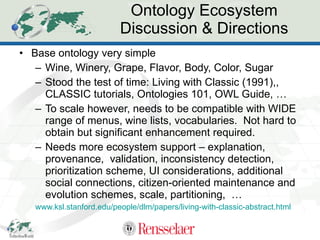

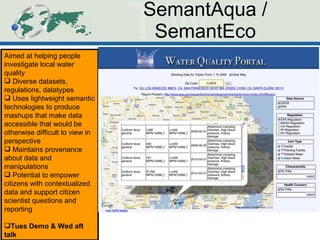

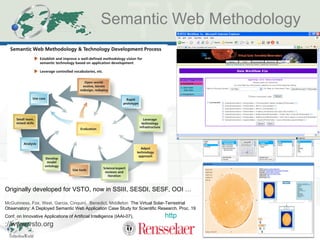

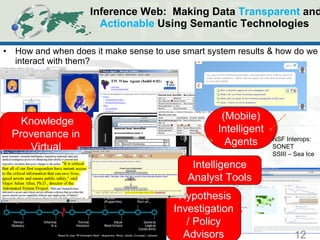

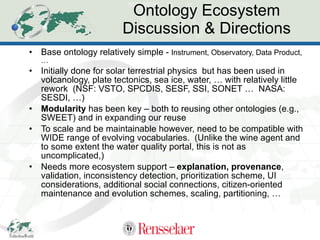

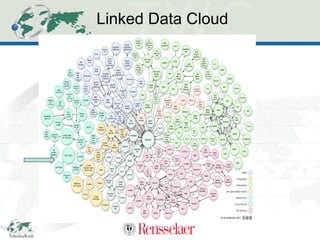

The document discusses the evolution and application of ontologies in various fields since their initial presentation in 2000, emphasizing a shift in focus from expressiveness to ecosystem considerations for ontology-enabled applications. It highlights the growing integration of ontologies in e-commerce and scientific research, and focuses on the challenges of compatibility, provenance, and maintenance. Key observations include the democratization of ontology creation, the rise of semi-automated tools, and the importance of modular design and community engagement.