2007-12-15 AGU SanFrancisco ExceptEvent

Recommended

Multimedia Para Docentes

The document presents a series of questions on key concepts related to the Internet and information and communication technologies (ICT) applied to education. It explores topics such as web browsers, search engines, email, social networks, office tools, databases, presentations, educational applications, teaching methodologies, collaborative learning, Internet research, student assessment, and online educational resources.

17 CCONSEJOS PARA TU VIDA

The document offers 17 tips for having a happy day, including setting achievable goals, smiling, sharing with others, helping others, keeping a youthful spirit, being friendly to everyone, staying calm under pressure, easing tension with friendliness, forgiving others' annoyances, valuing true friends, cooperating to achieve great results, having confidence in oneself, respecting those going through difficult times, going with the flow

P F C

This document describes the development of a routing simulator called "RIP Application" to support the teaching of routing protocols. It explains the existing simulation tools and how complex they are. It then details the development of the simulator, including its design, modular structure, graphical interface, and evaluation. It concludes that the simulator meets the goals of being intuitive, flexible, and useful for teaching, although it notes possible future improvements.

ASAMBLEA TACUAREMBÓ - ASAMBLEA REGIONAL DICIEMBRE 2007

The document is a short notice providing dates and a location. It states that events will take place on December 15th and 16th, 2007 in Tacuarembó, Uruguay.

Aguila

The document describes the renewal process that eagles over 40 years old go through. When their talons and beak become useless for hunting, they must tear off their beak against a wall and shed their old feathers, a painful 150-day process that allows them to regenerate and live 30 more years.

More from Rudolf Husar

100528 satellite obs_china_husar

This document discusses the challenges of characterizing air pollution using remote sensing observations over China. It describes the seven dimensions of data - spatial, height, time, particle size, composition, shape, and mixing - needed to fully characterize air pollution. While each individual observation method or data set has limitations, together they can provide consistent global-scale observations. There remain significant challenges to integrating data from multiple sensors to accurately measure air pollution. International collaboration combining global satellite data with detailed local observations in China may help advance progress in addressing this issue.

2013-04-30 EE DSS Approach and Demo

The document describes the Exceptional Event Decision Support System (EE DSS), a tool to help states and EPA regions implement the EPA's Exceptional Events Rule. The EE DSS uses air quality, meteorological, and other data to screen for exceedances and flag those likely caused by exceptional events like dust storms, wildfires, or July 4th fireworks. It aims to minimize the technical hurdles of the EE rule and provide a uniform, transparent methodology. The document outlines the EE DSS's data sources and modeling, its screening approach, and its tools for visualizing events, and it provides an example demo of the system in action.

Exceptional Event Decision Support System Description

This slideshow is a brief description of the Exceptional Event Decision Support System (EE DSS).

130205 epa exc_event_seminar

This document summarizes Rudolf Husar's presentation on exceptional event analysis and decision support systems. It discusses using diverse data like satellites, models, and real-time monitoring to evaluate exceptional events such as wildfires and dust storms and their impact on air quality measurements. Specific examples are presented of exceptional events in which dust from Asia and Africa affected North America, as well as wildfires in Georgia that affected ozone and PM2.5 levels. Tools like the Navy Aerosol Analysis and Prediction System model and satellite data are highlighted for their ability to analyze the transport and impact of these aerosol plumes to support regulatory decisions. The goal of reconciliation of emissions, observations, and models is discussed to improve the evaluation of exceptional events

130205 epa ee_presentation_subm

Rudolf B. Husar presented at the EPA on exceptional smoke and dust events. He discussed using diverse data like satellites, models, and real-time data in a decision support system to evaluate these events. The NAAPS aerosol model assimilates satellite data to provide the 3D structure of smoke, dust, and other aerosols. Long-term NAAPS data from 2006 to present show the vertical distribution of different aerosols. Satellite data help reduce biases between surface PM measurements and air quality models.

111018 geo sif_aq_interop

The document discusses the Air Quality Community of Practice (AQ CoP), which facilitates interoperability and data networking for air quality and health applications. The AQ CoP has developed an open-source Air Quality Data Network (ADN) consisting of 7 interoperable air quality data servers that provide access to diverse observational and model datasets using international standards. The ADN demonstrates GEO principles and infrastructure but requires further development to support real applications. The main role of the AQ CoP is to connect different initiatives and enable the ADN.

110823 solta11 intro

The workshop will bring together practitioners from Europe and North America to discuss progress and challenges in realizing an interoperable air quality data network. Participants will assess the current state of the pilot network, address key technical issues around data standards, server implementation and maintenance, and catalog design. The goal is to advance the network from a virtual concept to an operational reality, facilitating improved access, integration and reuse of air quality observation and model data.

110823 data fed_solta11

The document describes DataFed, a federated data system that provides non-intrusive integration of diverse environmental datasets using open standards. DataFed allows users to find and access datasets through a catalog and flexible tools for processing and visualizing the data. It facilitates publishing, finding, and accessing geospatial and environmental data through loose coupling of autonomous nodes and OGC web service protocols.

110510 aq co_p_network

This document discusses the emerging pattern in the air quality information ecosystem. It notes that individual data providers, scientists, and decision supporters are being replaced by groups that facilitate access, sharing, and integration. These include data portals, science teams, and decision support systems. The ecosystem involves multiple stages from observations to decisions, with value added at each stage through activities like data aggregation, scientific collaboration, and predictive analysis. This new structure is more efficient and supports the goals of initiatives like GEOSS.

110509 aq co_p_solta

The document discusses a workshop on networking air quality observations and models to support decision making. The workshop aims to (1) introduce participants and identify shared data and applications, (2) exchange best practices for interoperability, and (3) address technical and collaboration issues. The preliminary agenda covers assessing the current state of air quality interoperability and the technical requirements for improved data sharing and integration to support applications and decision support systems.

110421 exploration of_pm_networks_and_data_over_the_us-_aqs_and_views

The document summarizes the exploration of PM networks and data over the US using two datasets: AQS and VIEWS. It presents information on the coverage and frequency of EPA monitoring data, as well as data from the VIEWS network. It also describes the user interface for the Datafed browser and schemes for processing and aggregating raw monitoring data spatially and temporally. Finally, it analyzes the spatial and temporal variation of PM levels and the correlation between continuous and EPA monitoring data in different regions of the US.

110410 aq user_req_methodology_sydney_subm

This document proposes a methodology to determine user requirements for Earth observations related to air quality management. The methodology is a bottom-up approach that (1) defines the major workflow steps of air quality management, (2) identifies the value-adding activities within each step, (3) determines the participants ("users") for each activity, and (4) establishes the Earth observation needs of each user. The methodology is intended to facilitate ongoing feedback to optimize the value of Earth observations for air quality management and reduce gaps. It provides a systematic way to account for user needs based on the specific activities and users involved in the air quality management process.

110408 aq co_p_uic_sydney_husar

This document provides a 2011 progress report for the GEOSS Air Quality Community of Practice (AQ CoP). It summarizes activities undertaken in 2011, including developing air quality data server software to make data more accessible and interoperable, creating a user requirements registry to identify needed observations and models, and matching user needs with available data through a community catalog. It outlines ongoing projects and plans to further expand the air quality data network through coordination and workshops in 2011. The overall goal is to integrate air quality initiatives and make relevant data more findable, accessible, and interoperable to support applications in air quality and health.

110105 htap pilot_aqco_p_esip_dc

The document describes the HTAP Data Network, which demonstrates a service-oriented approach to sharing atmospheric model outputs and air quality observations between various data servers using open standards. The main output is open-source WCS data server software and tools that allow different organizations to publish, find, and access distributed air quality data holdings in an interoperable way as part of the GEO Task DA-09-02d: Atmospheric Model Evaluation Network. The network aims to connect air quality data providers and users to enable effective air quality science and management.

100615 htap network_brussels

The REASoN Project will link NASA's air quality data, modeling, and systems to users in research, education, and applications. It aims to address hurdles users face in finding, accessing, evaluating, and merging relevant data. The project will utilize service orientation and interoperability standards to build an adaptable information infrastructure. This will include becoming a node on the air quality network, implementing standards for sharing data and tools, and participating in the GEOSS Architecture Implementation Pilot.

121117 eedss briefing_nasa_epa

This document summarizes the Exceptional Event Decision Support System (EE DSS) which uses NASA satellite data and the Navy Aerosol Analysis and Prediction System (NAAPS) model to help with air quality management decisions regarding exceptional events like smoke and dust events. The EE DSS has been developed since 2005 with NASA support and is now ready to serve air quality management at the federal, regional, and state levels. It can automatically detect and analyze events, display relevant data through interactive maps and cross-sections, and its tools have helped explain declines in exceptional event flags and PM2.5 concentrations from 2006-2012. Coordination is proposed with NASA and EPA for continued application of the EE DSS to smoke and dust events in

120910 nasa satellite_outline

This document discusses the usefulness of satellite observations for air quality applications and regulatory requirements. It outlines six key air quality requirements that satellites can help address, such as determining compliance with air quality standards and identifying long-range pollution transport events. The document also notes how satellites can help improve emissions estimates, characterize long-range transport of pollution, and increase interaction between air quality and remote sensing scientists. However, it cautions that relating satellite aerosol optical depth measurements directly to ground-level PM concentrations currently has too much uncertainty for regulatory or public health applications.

120612 geia closure_ofeo_ms_soa_subm

The document discusses tools for closing the gap between emissions, observations, and models of air quality. It proposes a service oriented architecture and network to integrate multiple datasets from observations, emissions, and models. This would allow iterative evaluation and improvement of models by comparing them to observations and adjusting emissions estimates to reduce biases. The end goal is to provide the best available composition of the atmosphere by integrating the best observations, emissions estimates, and models.

110414 extreme dustsmokesulfate

This proposal outlines a study on the influence of weather and climate events on air quality issues like dust, smoke, and sulfate events. The study would examine these events at both the continental/hemispherical scale and regional scale. At the continental scale, the analysis would demonstrate the role of global climate and emissions and identify tipping points for air quality regulations. At the regional scale, the study would analyze the effects of regional emissions, climate, and precipitation on air quality. The proposal describes tools and methods for conducting continental and regional air quality-climate analysis, including models, datasets, and satellite data. The goals are to support air quality management and identify implications for policy.

Aq Gci Infrastructure

The document discusses various applications of air quality data including regulatory exceptions, hemispheric transport projects, and atmospheric composition portals. It also describes the Air Quality Community of Practice's contributions to the GEOSS Common Infrastructure through developing an air quality community catalog and data finder to help users discover and access air quality data and metadata registered in the GEOSS clearinghouse and registry.

Recently uploaded

Digital Marketing Trends in 2024 | Guide for Staying Ahead

https://www.wask.co/ebooks/digital-marketing-trends-in-2024

Feeling lost in the digital marketing whirlwind of 2024? Technology is changing, consumer habits are evolving, and staying ahead of the curve feels like a never-ending pursuit. This e-book is your compass. Dive into actionable insights to handle the complexities of modern marketing. From hyper-personalization to the power of user-generated content, learn how to build long-term relationships with your audience and unlock the secrets to success in the ever-shifting digital landscape.

Project Management Semester Long Project - Acuity

Acuity is an innovative learning app designed to transform the way you engage with knowledge. Powered by AI technology, Acuity takes complex topics and distills them into concise, interactive summaries that are easy to read & understand. Whether you're exploring the depths of quantum mechanics or seeking insight into historical events, Acuity provides the key information you need without the burden of lengthy texts.

Cosa hanno in comune un mattoncino Lego e la backdoor XZ?

ABSTRACT: At first glance, a Lego brick and the XZ backdoor might have in common that both are building blocks, or dependencies of creative and software projects. In reality, a Lego brick and the XZ backdoor case have much more than that in common.

Join the presentation to dive into a story of interoperability, standards, and open formats, and then discuss the important role that contributors play in a sustainable open source community.

BIO: Advocate of free software and of standard, open formats. She has been an active member of the Fedora and openSUSE projects and co-founded the LibreItalia Association, where she was involved in various LibreOffice-related events, migrations, and training. She previously worked on LibreOffice migrations and training courses for various public administrations and private clients. Since January 2020 she has worked at SUSE as a Software Release Engineer for Uyuni and SUSE Manager, and when she is not pursuing her passion for computers and Geeko she nurtures her curiosity about astronomy (hence her nickname, deneb_alpha).

Salesforce Integration for Bonterra Impact Management (fka Social Solutions A...

Sidekick Solutions uses Bonterra Impact Management (fka Social Solutions Apricot) and automation solutions to integrate data for business workflows.

We believe integration and automation are essential to user experience and the promise of efficient work through technology. Automation is the critical ingredient to realizing that full vision. We develop integration products and services for Bonterra Case Management software to support the deployment of automations for a variety of use cases.

This video focuses on integration of Salesforce with Bonterra Impact Management.

Interested in deploying an integration with Salesforce for Bonterra Impact Management? Contact us at sales@sidekicksolutionsllc.com to discuss next steps.

Energy Efficient Video Encoding for Cloud and Edge Computing Instances

Energy Efficient Video Encoding for Cloud and Edge Computing Instances

Artificial Intelligence for XMLDevelopment

In the rapidly evolving landscape of technologies, XML continues to play a vital role in structuring, storing, and transporting data across diverse systems. The recent advancements in artificial intelligence (AI) present new methodologies for enhancing XML development workflows, introducing efficiency, automation, and intelligent capabilities. This presentation will outline the scope and perspective of utilizing AI in XML development. The potential benefits and the possible pitfalls will be highlighted, providing a balanced view of the subject.

We will explore the capabilities of AI in understanding XML markup languages and autonomously creating structured XML content. Additionally, we will examine the capacity of AI to enrich plain text with appropriate XML markup. Practical examples and methodological guidelines will be provided to elucidate how AI can be effectively prompted to interpret and generate accurate XML markup.

Further emphasis will be placed on the role of AI in developing XSLT, or schemas such as XSD and Schematron. We will address the techniques and strategies adopted to create prompts for generating code, explaining code, or refactoring the code, and the results achieved.

The discussion will extend to how AI can be used to transform XML content. In particular, the focus will be on the use of AI XPath extension functions in XSLT, Schematron, Schematron Quick Fixes, or for XML content refactoring.

The presentation aims to deliver a comprehensive overview of AI usage in XML development, providing attendees with the necessary knowledge to make informed decisions. Whether you’re at the early stages of adopting AI or considering integrating it in advanced XML development, this presentation will cover all levels of expertise.

By highlighting the potential advantages and challenges of integrating AI with XML development tools and languages, the presentation seeks to inspire thoughtful conversation around the future of XML development. We’ll not only delve into the technical aspects of AI-powered XML development but also discuss practical implications and possible future directions.

Taking AI to the Next Level in Manufacturing.pdf

Read Taking AI to the Next Level in Manufacturing to gain insights on AI adoption in the manufacturing industry, such as:

1. How quickly AI is being implemented in manufacturing.

2. Which barriers stand in the way of AI adoption.

3. How data quality and governance form the backbone of AI.

4. Organizational processes and structures that may inhibit effective AI adoption.

6. Ideas and approaches to help build your organization's AI strategy.

Generating privacy-protected synthetic data using Secludy and Milvus

During this demo, the founders of Secludy will demonstrate how their system utilizes Milvus to store and manipulate embeddings for generating privacy-protected synthetic data. Their approach not only maintains the confidentiality of the original data but also enhances the utility and scalability of LLMs under privacy constraints. Attendees, including machine learning engineers, data scientists, and data managers, will witness first-hand how Secludy's integration with Milvus empowers organizations to harness the power of LLMs securely and efficiently.

Programming Foundation Models with DSPy - Meetup Slides

Prompting language models is hard, while programming language models is easy. In this talk, I will discuss the state-of-the-art framework DSPy for programming foundation models with its powerful optimizers and runtime constraint system.

HCL Notes und Domino Lizenzkostenreduzierung in der Welt von DLAU

Webinar Recording: https://www.panagenda.com/webinars/hcl-notes-und-domino-lizenzkostenreduzierung-in-der-welt-von-dlau/

DLAU und die Lizenzen nach dem CCB- und CCX-Modell sind für viele in der HCL-Community seit letztem Jahr ein heißes Thema. Als Notes- oder Domino-Kunde haben Sie vielleicht mit unerwartet hohen Benutzerzahlen und Lizenzgebühren zu kämpfen. Sie fragen sich vielleicht, wie diese neue Art der Lizenzierung funktioniert und welchen Nutzen sie Ihnen bringt. Vor allem wollen Sie sicherlich Ihr Budget einhalten und Kosten sparen, wo immer möglich. Das verstehen wir und wir möchten Ihnen dabei helfen!

Wir erklären Ihnen, wie Sie häufige Konfigurationsprobleme lösen können, die dazu führen können, dass mehr Benutzer gezählt werden als nötig, und wie Sie überflüssige oder ungenutzte Konten identifizieren und entfernen können, um Geld zu sparen. Es gibt auch einige Ansätze, die zu unnötigen Ausgaben führen können, z. B. wenn ein Personendokument anstelle eines Mail-Ins für geteilte Mailboxen verwendet wird. Wir zeigen Ihnen solche Fälle und deren Lösungen. Und natürlich erklären wir Ihnen das neue Lizenzmodell.

Nehmen Sie an diesem Webinar teil, bei dem HCL-Ambassador Marc Thomas und Gastredner Franz Walder Ihnen diese neue Welt näherbringen. Es vermittelt Ihnen die Tools und das Know-how, um den Überblick zu bewahren. Sie werden in der Lage sein, Ihre Kosten durch eine optimierte Domino-Konfiguration zu reduzieren und auch in Zukunft gering zu halten.

Diese Themen werden behandelt

- Reduzierung der Lizenzkosten durch Auffinden und Beheben von Fehlkonfigurationen und überflüssigen Konten

- Wie funktionieren CCB- und CCX-Lizenzen wirklich?

- Verstehen des DLAU-Tools und wie man es am besten nutzt

- Tipps für häufige Problembereiche, wie z. B. Team-Postfächer, Funktions-/Testbenutzer usw.

- Praxisbeispiele und Best Practices zum sofortigen Umsetzen

Building Production Ready Search Pipelines with Spark and Milvus

Spark is the widely used ETL tool for processing, indexing and ingesting data to serving stack for search. Milvus is the production-ready open-source vector database. In this talk we will show how to use Spark to process unstructured data to extract vector representations, and push the vectors to Milvus vector database for search serving.

Skybuffer SAM4U tool for SAP license adoption

Manage and optimize your license adoption and consumption with SAM4U, an SAP free customer software asset management tool.

SAM4U, an SAP complimentary software asset management tool for customers, delivers a detailed and well-structured overview of license inventory and usage with a user-friendly interface. We offer a hosted, cost-effective, and performance-optimized SAM4U setup in the Skybuffer Cloud environment. You retain ownership of the system and data, while we manage the ABAP 7.58 infrastructure, ensuring fixed Total Cost of Ownership (TCO) and exceptional services through the SAP Fiori interface.

How to Interpret Trends in the Kalyan Rajdhani Mix Chart.pdf

A Mix Chart displays historical data of numbers in a graphical or tabular form. The Kalyan Rajdhani Mix Chart specifically shows the results of a sequence of numbers over different periods.

20240607 QFM018 Elixir Reading List May 2024

Everything I found interesting about the Elixir programming ecosystem in May 2024

HCL Notes and Domino License Cost Reduction in the World of DLAU

Webinar Recording: https://www.panagenda.com/webinars/hcl-notes-and-domino-license-cost-reduction-in-the-world-of-dlau/

The introduction of DLAU and the CCB & CCX licensing model caused quite a stir in the HCL community. As a Notes and Domino customer, you may have faced challenges with unexpected user counts and license costs. You probably have questions on how this new licensing approach works and how to benefit from it. Most importantly, you likely have budget constraints and want to save money where possible. Don’t worry, we can help with all of this!

We’ll show you how to fix common misconfigurations that cause higher-than-expected user counts, and how to identify accounts which you can deactivate to save money. There are also frequent patterns that can cause unnecessary cost, like using a person document instead of a mail-in for shared mailboxes. We’ll provide examples and solutions for those as well. And naturally we’ll explain the new licensing model.

Join HCL Ambassador Marc Thomas in this webinar with a special guest appearance from Franz Walder. It will give you the tools and know-how to stay on top of what is going on with Domino licensing. You will be able lower your cost through an optimized configuration and keep it low going forward.

These topics will be covered

- Reducing license cost by finding and fixing misconfigurations and superfluous accounts

- How do CCB and CCX licenses really work?

- Understanding the DLAU tool and how to best utilize it

- Tips for common problem areas, like team mailboxes, functional/test users, etc

- Practical examples and best practices to implement right away

AI 101: An Introduction to the Basics and Impact of Artificial Intelligence

Imagine a world where machines not only perform tasks but also learn, adapt, and make decisions. This is the promise of Artificial Intelligence (AI), a technology that's not just enhancing our lives but revolutionizing entire industries.

Recently uploaded (20)

Digital Marketing Trends in 2024 | Guide for Staying Ahead

Digital Marketing Trends in 2024 | Guide for Staying Ahead

Nordic Marketo Engage User Group_June 13_ 2024.pptx

Nordic Marketo Engage User Group_June 13_ 2024.pptx

Cosa hanno in comune un mattoncino Lego e la backdoor XZ?

Cosa hanno in comune un mattoncino Lego e la backdoor XZ?

Salesforce Integration for Bonterra Impact Management (fka Social Solutions A...

Salesforce Integration for Bonterra Impact Management (fka Social Solutions A...

Energy Efficient Video Encoding for Cloud and Edge Computing Instances

Energy Efficient Video Encoding for Cloud and Edge Computing Instances

WeTestAthens: Postman's AI & Automation Techniques

WeTestAthens: Postman's AI & Automation Techniques

Generating privacy-protected synthetic data using Secludy and Milvus

Generating privacy-protected synthetic data using Secludy and Milvus

Programming Foundation Models with DSPy - Meetup Slides

Programming Foundation Models with DSPy - Meetup Slides

Deep Dive: AI-Powered Marketing to Get More Leads and Customers with HyperGro...

Deep Dive: AI-Powered Marketing to Get More Leads and Customers with HyperGro...

HCL Notes und Domino Lizenzkostenreduzierung in der Welt von DLAU

HCL Notes und Domino Lizenzkostenreduzierung in der Welt von DLAU

Building Production Ready Search Pipelines with Spark and Milvus

Building Production Ready Search Pipelines with Spark and Milvus

How to Interpret Trends in the Kalyan Rajdhani Mix Chart.pdf

How to Interpret Trends in the Kalyan Rajdhani Mix Chart.pdf

HCL Notes and Domino License Cost Reduction in the World of DLAU

HCL Notes and Domino License Cost Reduction in the World of DLAU

AI 101: An Introduction to the Basics and Impact of Artificial Intelligence

AI 101: An Introduction to the Basics and Impact of Artificial Intelligence

2007-12-15 AGU SanFrancisco ExceptEvent

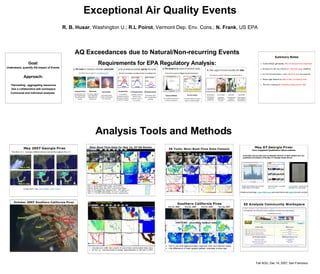

- 1. Exceptional Air Quality Events. R. B. Husar, Washington U.; R. L. Poirot, Vermont Dep. Env. Cons.; N. Frank, US EPA. Fall AGU, Dec 14, 2007, San Francisco. AQ Exceedances due to Natural/Non-recurring Events. Requirements for EPA Regulatory Analysis: Analysis Tools and Methods. Goal: Understand, quantify AQ impact of Events. Approach: ‘Harvesting’, aggregating resources; use a collaborative wiki workspace; communal and individual analyses.