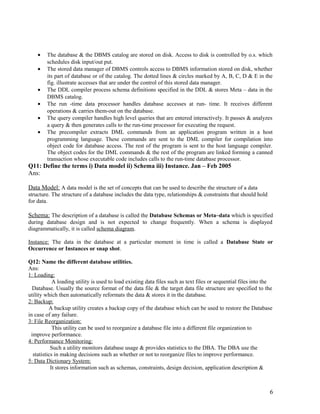

1) Database systems provide several key advantages over file-based systems, including controlling redundancy, restricting unauthorized access, and representing complex relationships among data. They allow data to be stored logically in one place while supporting multiple views.

2) A DBMS lets users define data structures and manipulate and share databases among applications. It also provides facilities for backup and recovery and for enforcing integrity constraints.

3) A database administrator (DBA) is responsible for authorizing access, coordinating and monitoring use, and acquiring software and hardware resources for the database and DBMS. The DBA oversees the primary resource, the database itself, and the secondary resource, the DBMS and related software.
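
The facilities in point 2 (defining structures, manipulating data, enforcing integrity constraints) can be illustrated with a minimal sketch. It assumes Python's built-in `sqlite3` module as the DBMS; the `department` and `employee` tables, their columns, and the function name `demo_ddl_dml` are hypothetical and chosen only for illustration.

```python
import sqlite3


def demo_ddl_dml():
    """Define a tiny schema, load one valid row, and try three invalid ones."""
    conn = sqlite3.connect(":memory:")
    # SQLite only enforces foreign keys when this pragma is switched on.
    conn.execute("PRAGMA foreign_keys = ON")

    # Data definition: describe the structures and their integrity constraints.
    conn.execute(
        "CREATE TABLE department ("
        " dno INTEGER PRIMARY KEY,"
        " dname TEXT NOT NULL UNIQUE)"
    )
    conn.execute(
        "CREATE TABLE employee ("
        " ssn TEXT PRIMARY KEY,"
        " name TEXT NOT NULL,"
        " salary REAL CHECK (salary >= 0),"
        " dno INTEGER REFERENCES department(dno))"
    )

    # Data manipulation: insert rows that satisfy every constraint.
    conn.execute("INSERT INTO department VALUES (1, 'Research')")
    conn.execute("INSERT INTO employee VALUES ('123', 'Smith', 30000, 1)")

    # Each of these rows violates one constraint, so the DBMS rejects it.
    bad_rows = [
        ("negative salary", ("456", "Wong", -1, 1)),
        ("unknown department", ("789", "Zelaya", 25000, 99)),
        ("duplicate key", ("123", "Other", 1000, 1)),
    ]
    rejected = []
    for label, row in bad_rows:
        try:
            conn.execute("INSERT INTO employee VALUES (?, ?, ?, ?)", row)
        except sqlite3.IntegrityError:
            rejected.append(label)

    count = conn.execute("SELECT COUNT(*) FROM employee").fetchone()[0]
    conn.close()
    return count, rejected
```

Calling `demo_ddl_dml()` leaves only the valid employee row in the table. The three rejected inserts show the DBMS, rather than the application program, enforcing the constraints.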

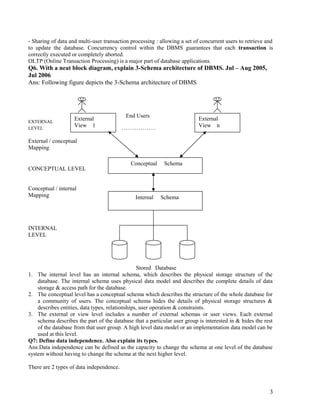

![1. Logical Data Independence:

It’s the capacity to change the conceptual schema without having to change external schemas or

application programs. The conceptual schema may change to expand the database or to reduce

the database. In either case, only the view definition & the mapping need to be changed in a DBMS that

supports logical data independence. Application programs that reference the external schema constructs

work as before after the conceptual schema undergoes a logical reorganization.

2. Physical Data Independence:

It’s the capacity to change the internal schema without having to change the conceptual &

external schemas. Changes to the internal schema may be needed because some physical files had to be

reorganized.

Ex: Creating additional access structures to improve the performance of retrieval or update.

Q8: Define the terms i) DDL ii) DML iii) Host Language iv) Data Sublanguage

Ans:

Data Definition Language (DDL): DDL is used (by the DBA & by database designers) to define both the

conceptual & internal schemas. The DBMS has a DDL compiler, which processes DDL statements in order to identify

descriptions of the schema constructs & to store the schema description in the DBMS catalog.

Data Manipulation Language (DML): There are two main types of DMLs.

• A high-level or non-procedural DML can be used on its own to specify complex

database operations in a concise manner. High-level DML statements can either be entered

interactively from a terminal or be embedded in a general-purpose programming language.

High-level DMLs can specify & retrieve many records in a single statement & are hence

called set-at-a-time or set-oriented DMLs.

• A low-level or procedural DML must be embedded in a general-purpose programming

language. This type of DML retrieves individual records from the database & processes each

record separately. Because of this property, such DMLs are also called record-at-a-time DMLs.

Data Sublanguage & Host Language: Whenever DML commands (whether high-level or low-level) are

embedded in a general-purpose programming language, that language is called the host language & the DML is

called the data sublanguage. [In newer DBMSs, such as object-oriented (OO) systems, the host & data sublanguages typically

form one integrated language, such as C++.]

Q9: List the various interfaces supported by a DBMS. (Jun-Jul 2008)

Ans: Menu-Based Interface:

These interfaces present the user with lists of options called menus, which lead the user through

the formation of a request.

Graphical Interfaces:

A graphical interface displays a schema to the user in diagrammatic form. The user can then

specify a query by manipulating the diagram. In many cases, graphical interfaces are combined with

menus.

Forms-Based Interfaces:

A forms-based interface displays a form to each user. Users can fill out all of the form entries to insert new data, or they can

fill out only certain entries, in which case the DBMS will retrieve matching data for the remaining entries.

Many DBMSs have special languages, called forms specification languages, for specifying such forms.

Natural Language Interfaces:

These interfaces accept requests written in English or some other language & attempt to understand

them. A natural language interface usually has its own schema which is similar to the database conceptual

schema. The interface refers to the words in its schema as well as to a set of standard words in

interpreting the request. If the interpretation is successful, the interface generates a high-level query

corresponding to the natural language request & submits it to the DBMS for processing. Otherwise, the user

will be asked to clarify the request.

](https://image.slidesharecdn.com/1-131201120506-phpapp01/85/1-introduction-qb-4-320.jpg)
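
The ideas on this slide (logical data independence, host language versus data sublanguage, and set-at-a-time versus record-at-a-time DML) can be sketched together in a few lines. This is only an illustration under stated assumptions: Python acts as the host language, SQL run through the built-in `sqlite3` module acts as the data sublanguage, and a SQL view stands in for an external schema. All table, view, and function names are hypothetical.

```python
import sqlite3


def build_db():
    """Create a small conceptual schema plus one external view over it."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE employee (ssn TEXT PRIMARY KEY, name TEXT, salary REAL)"
    )
    conn.executemany(
        "INSERT INTO employee VALUES (?, ?, ?)",
        [("1", "Smith", 30000.0), ("2", "Wong", 40000.0), ("3", "Zelaya", 25000.0)],
    )
    # The view plays the role of an external schema used by applications.
    conn.execute("CREATE VIEW emp_names AS SELECT ssn, name FROM employee")
    return conn


def application_query(conn):
    """Application code: it refers only to the view, never to base tables."""
    return conn.execute("SELECT name FROM emp_names ORDER BY ssn").fetchall()


def reorganize(conn):
    """Logical reorganization: split employee in two and remap the view."""
    conn.execute("CREATE TABLE emp_person (ssn TEXT PRIMARY KEY, name TEXT)")
    conn.execute("CREATE TABLE emp_pay (ssn TEXT PRIMARY KEY, salary REAL)")
    conn.execute("INSERT INTO emp_person SELECT ssn, name FROM employee")
    conn.execute("INSERT INTO emp_pay SELECT ssn, salary FROM employee")
    conn.execute("DROP VIEW emp_names")
    conn.execute("DROP TABLE employee")
    # Only the view definition (the mapping) changes; the application does not.
    conn.execute("CREATE VIEW emp_names AS SELECT ssn, name FROM emp_person")


def raise_set_at_a_time(conn, amount):
    """High-level DML: one statement updates every matching record."""
    conn.execute("UPDATE emp_pay SET salary = salary + ?", (amount,))


def raise_record_at_a_time(conn, amount):
    """Procedural style: the host language loops over individual records."""
    rows = conn.execute("SELECT ssn, salary FROM emp_pay").fetchall()
    for ssn, salary in rows:
        conn.execute(
            "UPDATE emp_pay SET salary = ? WHERE ssn = ?", (salary + amount, ssn)
        )
```

In this sketch, `application_query` returns the same rows before and after `reorganize`, which is the behaviour logical data independence promises. The two `raise_*` functions have the same effect on the data; they differ in whether the DBMS or the host-language loop does the iterating.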