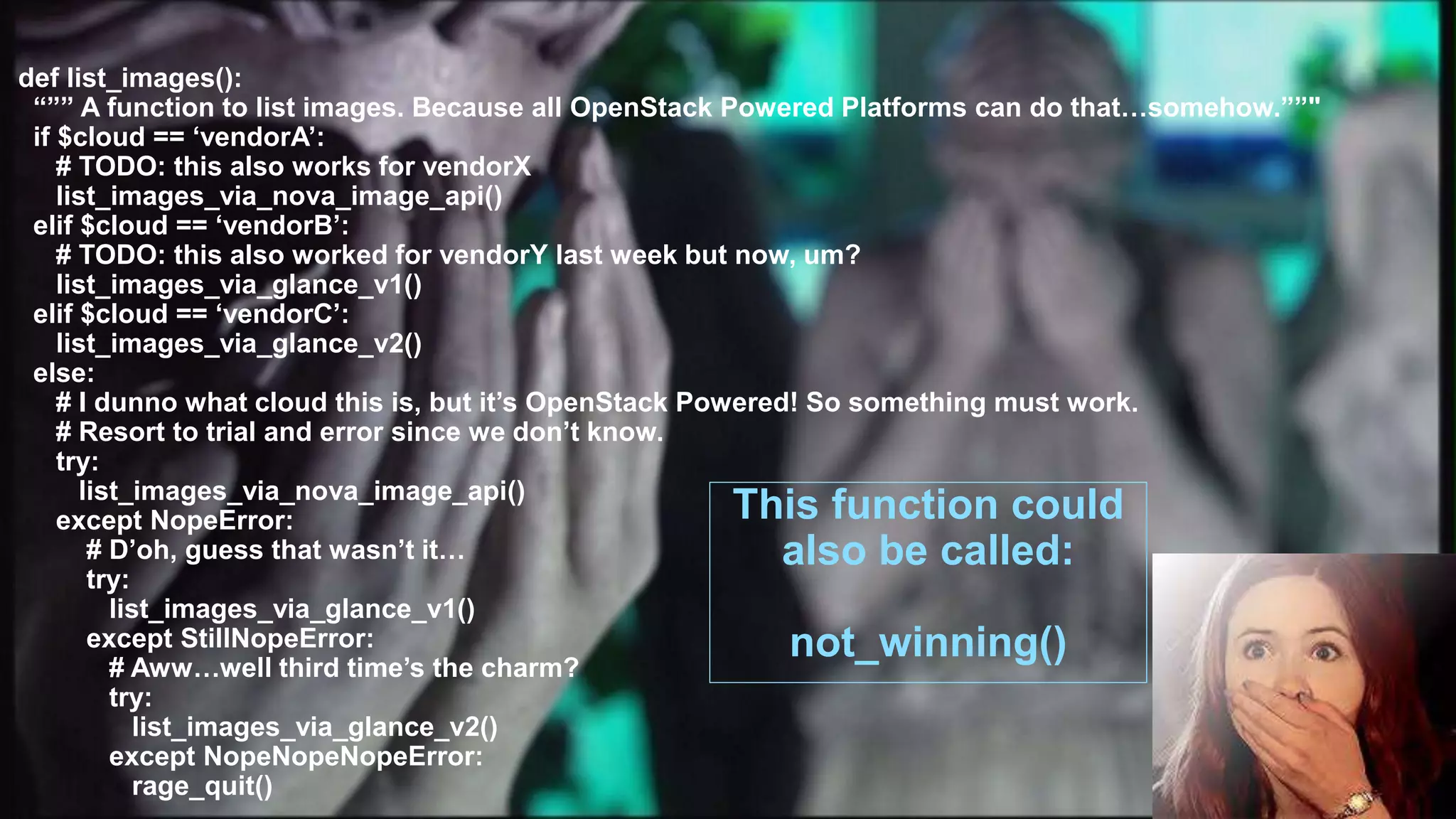

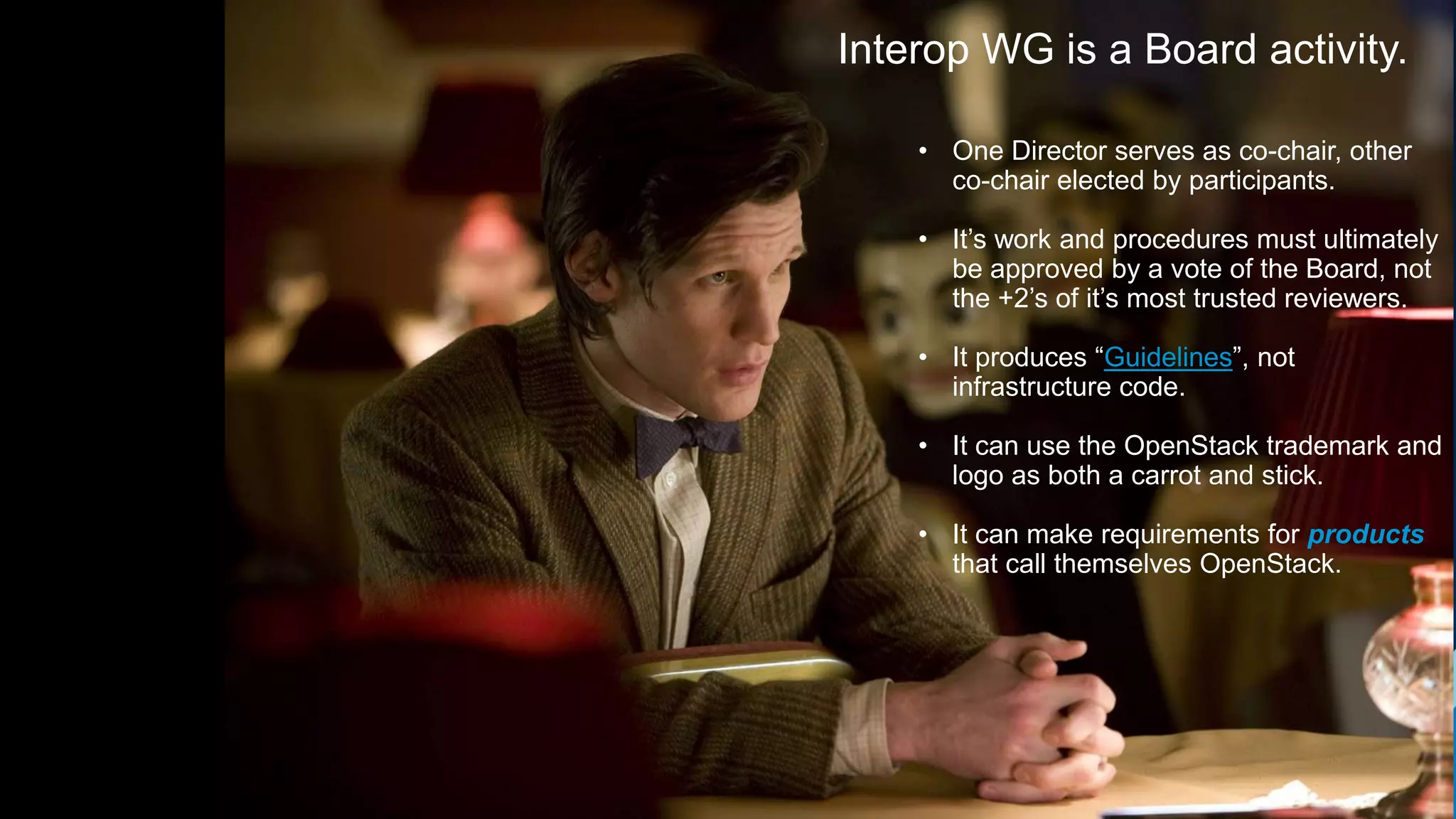

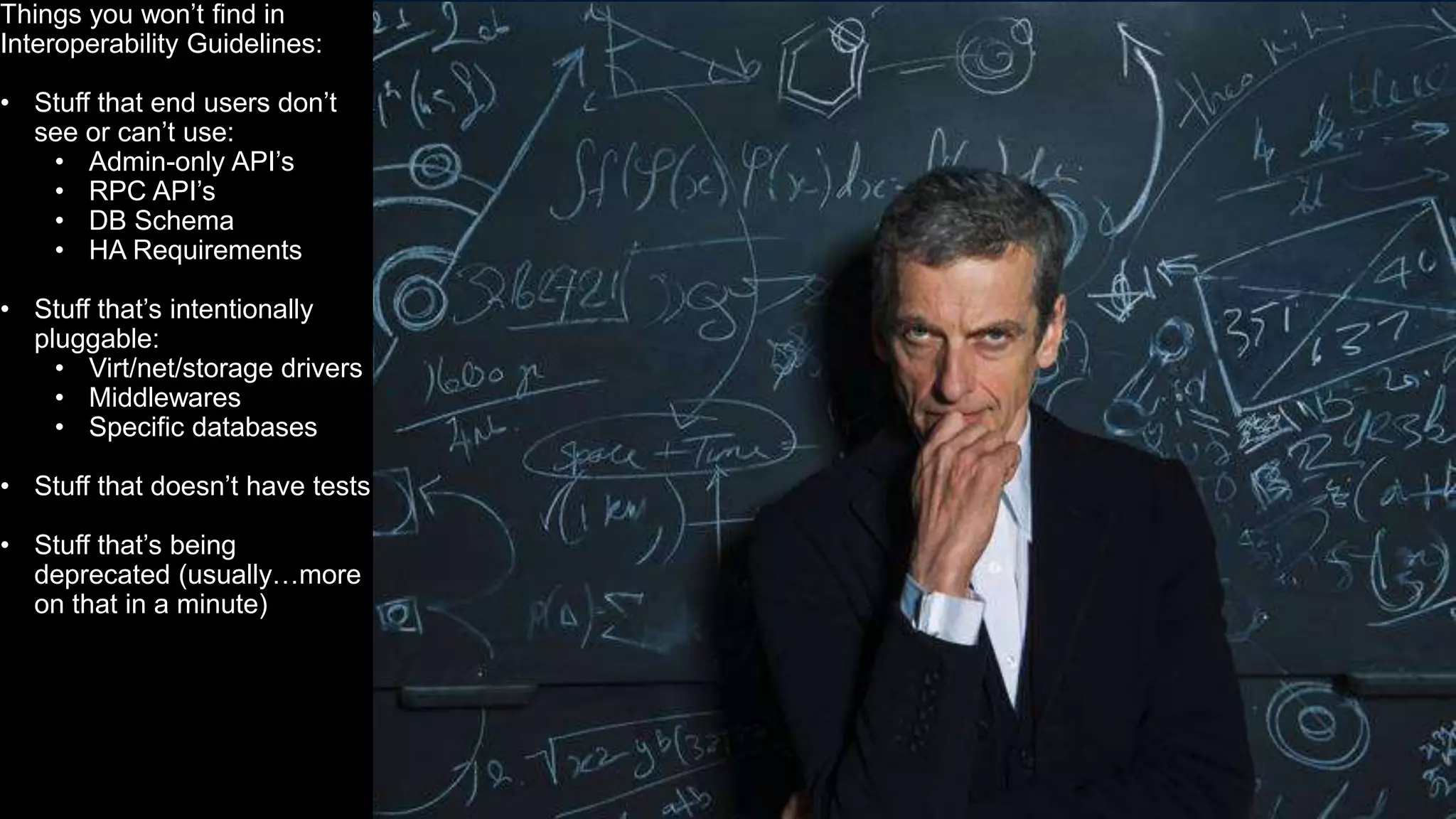

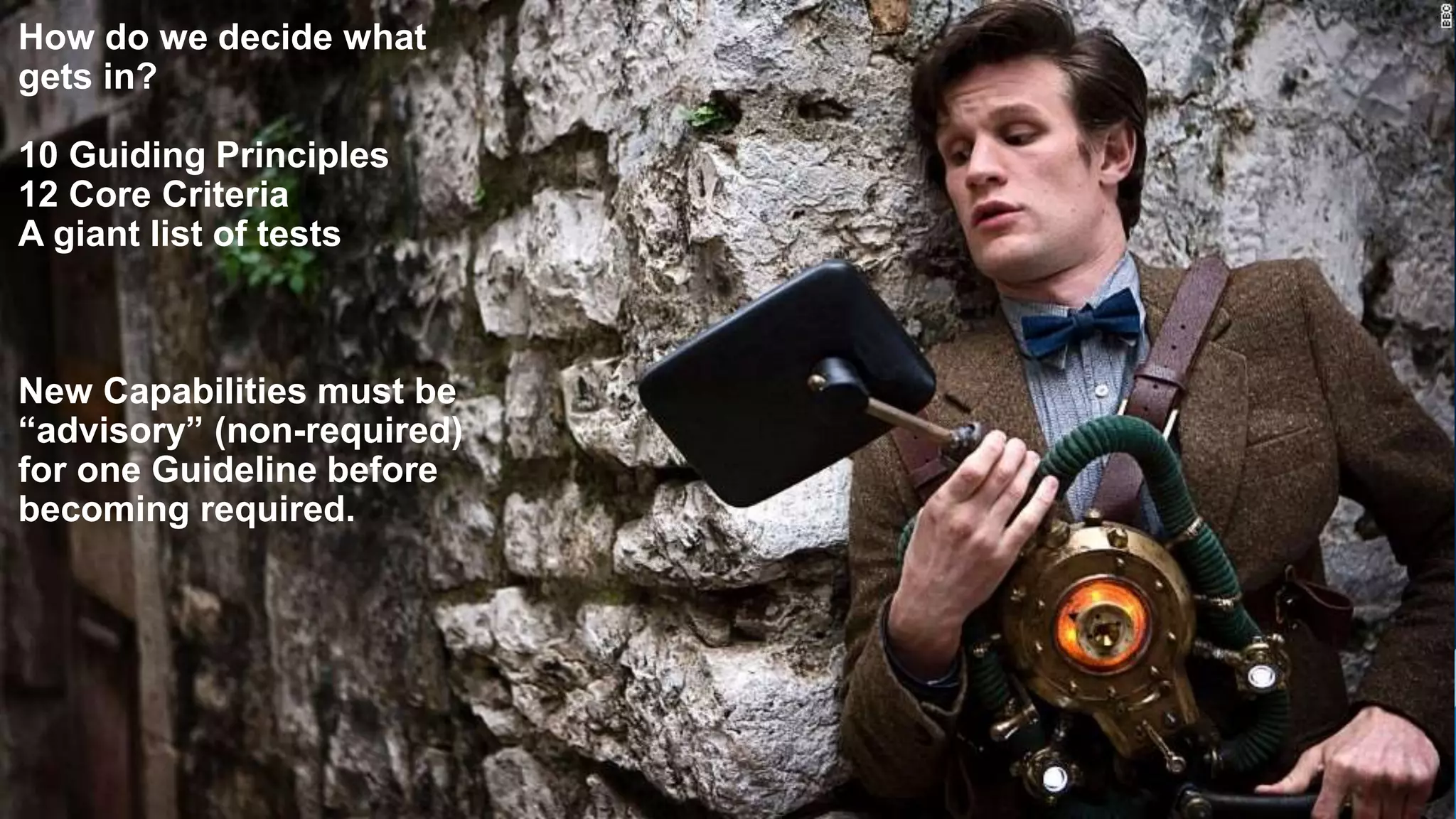

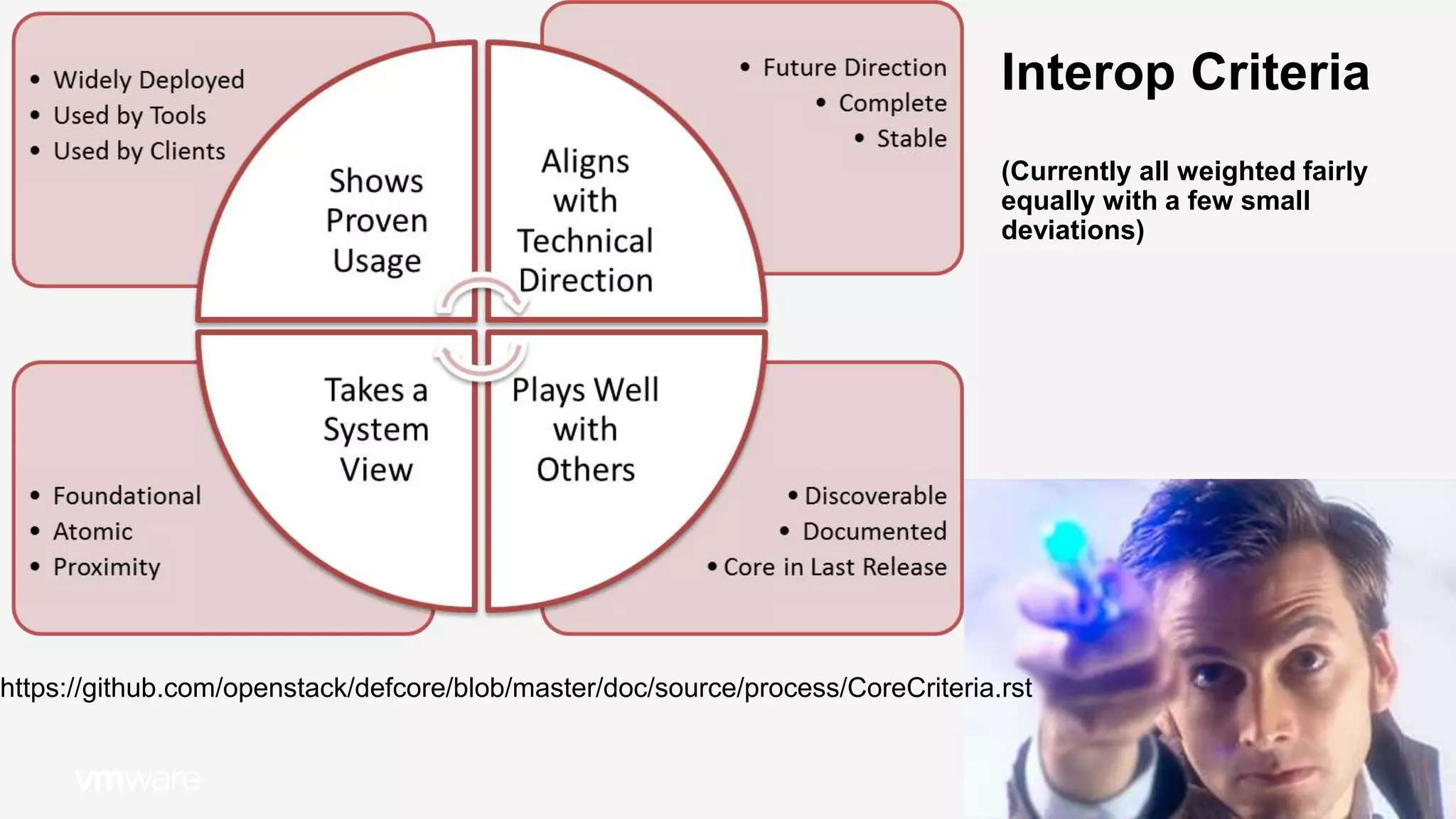

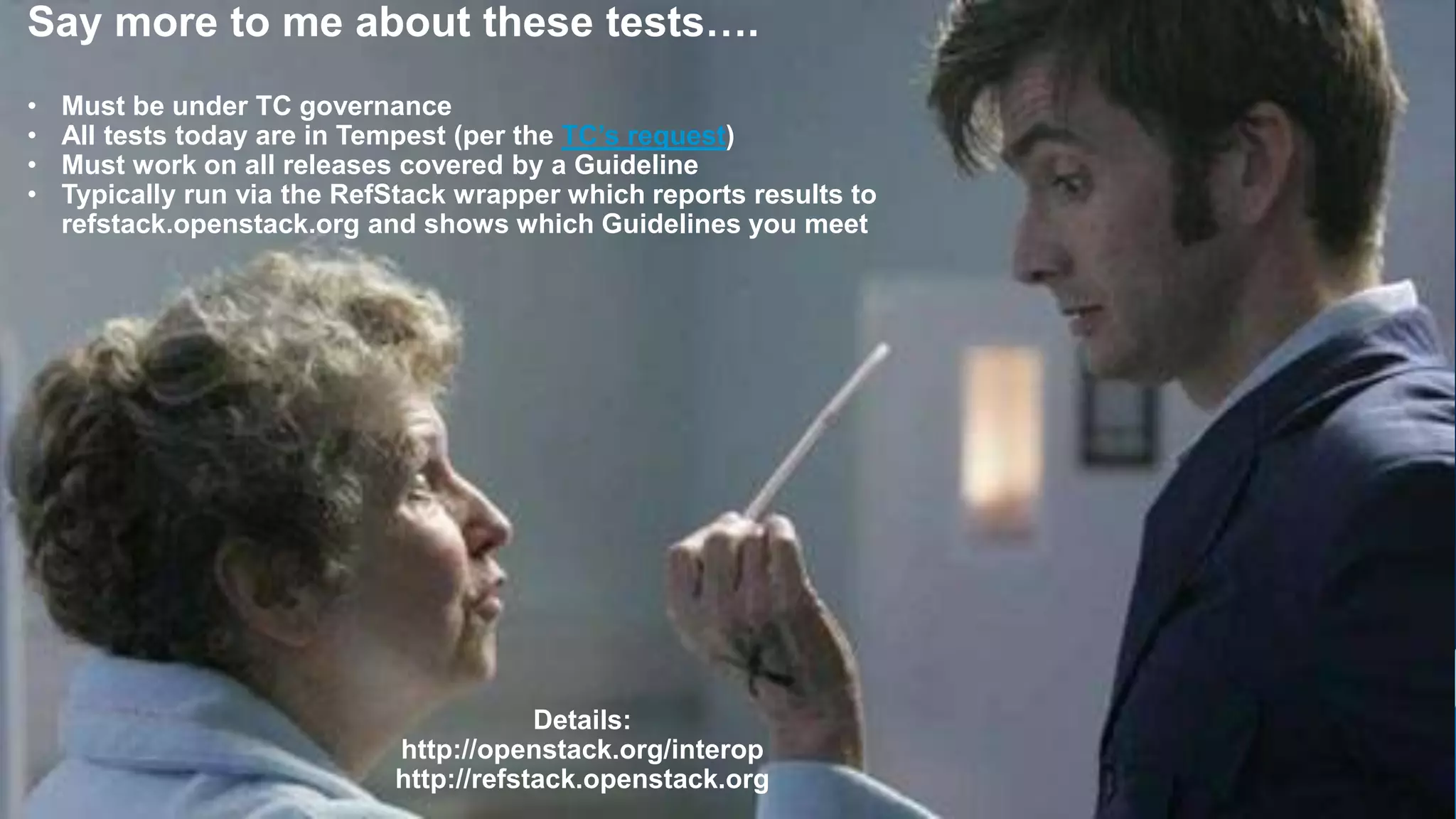

The document summarizes the OpenStack Interoperability Working Group's efforts to promote interoperability across OpenStack distributions and products. It discusses how the group develops guidelines specifying required capabilities and tests. Products must pass these tests to be considered interoperable and qualify for the OpenStack logo program. The guidelines aim to ensure a consistent user experience while allowing flexibility in implementations. The document also outlines the group's governance process and opportunities for participants to provide feedback to help improve interoperability standards over time.

![9

[note: this talk will be slightly more entertaining if you’re a science fiction fan…

…otherwise it will merely be somewhat informative.]](https://image.slidesharecdn.com/osedefcorelongtalk2017-170207044211/75/InteropWG-Intro-Vertical-Programs-May-2017-9-2048.jpg)

![62

Thank you.

[with apologies to the fine folks at the BBC’s “Doctor Who”]

(please don’t have me arrested)](https://image.slidesharecdn.com/osedefcorelongtalk2017-170207044211/75/InteropWG-Intro-Vertical-Programs-May-2017-62-2048.jpg)