Resume_Akanksha_Pandya_2022.docx

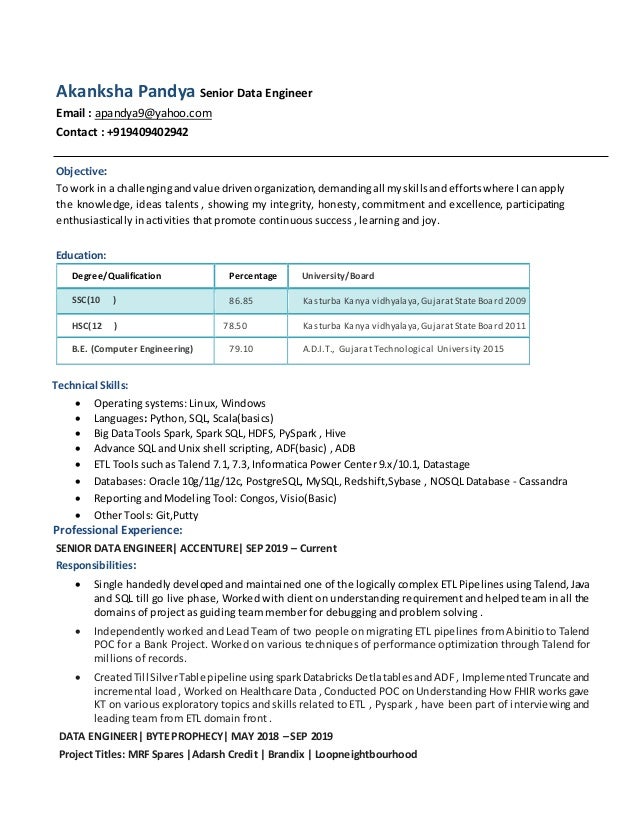

Akanksha Pandya
Senior Data Engineer
Email: apandya9@yahoo.com
Contact: +919409402942

Objective: To work in a challenging, value-driven organization that demands all my skills and effort, where I can apply my knowledge, ideas and talents while showing integrity, honesty, commitment and excellence, and participate enthusiastically in activities that promote continuous success, learning and joy.

Education:

| Degree/Qualification | Percentage | University/Board | Year |
|---|---|---|---|
| SSC (10th) | 86.85 | Kasturba Kanya Vidhyalaya, Gujarat State Board | 2009 |
| HSC (12th) | 78.50 | Kasturba Kanya Vidhyalaya, Gujarat State Board | 2011 |
| B.E. (Computer Engineering) | 79.10 | A.D.I.T., Gujarat Technological University | 2015 |

Technical Skills:
- Operating Systems: Linux, Windows
- Languages: Python, SQL, Scala (basics)
- Big Data Tools: Spark, Spark SQL, HDFS, PySpark, Hive
- Advanced SQL and Unix shell scripting, ADF (basic), ADB
- ETL Tools: Talend 7.1, 7.3, Informatica PowerCenter 9.x/10.1, DataStage
- Databases: Oracle 10g/11g/12c, PostgreSQL, MySQL, Redshift, Sybase; NoSQL database: Cassandra
- Reporting and Modeling Tools: Cognos, Visio (basic)
- Other Tools: Git, PuTTY

Professional Experience:

SENIOR DATA ENGINEER | ACCENTURE | SEP 2019 – Current
Responsibilities:
- Single-handedly developed and maintained a logically complex ETL pipeline using Talend, Java and SQL through the go-live phase.
- Worked with the client to understand requirements, and supported the team across all areas of the project as a guiding team member for debugging and problem solving.
- Independently worked on, and led a team of two in, a POC for migrating ETL pipelines from Ab Initio to Talend for a banking project.
- Applied various Talend performance-optimization techniques to pipelines processing millions of records.
- Created pipelines up to the Silver table layer using Spark on Databricks, Delta tables and ADF, and implemented truncate-and-load and incremental loads.
- Worked with healthcare data and conducted a POC to understand how FHIR works.
- Gave knowledge-transfer (KT) sessions on various exploratory topics and skills related to ETL and PySpark.
- Took part in interviewing candidates and led the team on the ETL front.

DATA ENGINEER | BYTE PROPHECY | MAY 2018 – SEP 2019
Project Titles: MRF Spares | Adarsh Credit | Brandix | Loopneighborhood

Responsibilities:
- Built ETL pipelines from scratch through to production deployment, identifying areas for automation along the way, using structured and unstructured data sets, and maintained data infrastructure and architecture with the goal of helping large businesses make strategic decisions using insights from data.
- Developed ETL pipelines for businesses such as Brandix (apparel manufacturing), MRF India (logistics domain), Adarsh Credit (finance domain) and Loopneighborhood (retail domain), using big data technologies (Spark with Scala, Spark SQL), Java, Talend, and Unix for task automation.
- Engaged with data consumers and producers to design appropriate models that suit their daily needs.
- Managed delivery, ensured functionality and data validation, and built robust data pipelines with success and failure alerts that report the reasons.
- Worked with stakeholders, including the client, product and design teams, to assist with data-related technical issues and ensure conformance to client requirements.

ASSISTANT SYSTEMS ENGINEER | TATA CONSULTANCY SERVICES | AUG 2015 – MAY 2017
Project Title: Master Data Management (MDM) | Client: GE Capital Corporation (Treasury Unit), CT
Responsibilities:
- Worked on all phases of the software development life cycle for a Master Data Management implementation, including requirement analysis, design, coding, testing and deployment of data warehousing (ETL) applications using Informatica PowerCenter, Oracle/SQL and Unix.
- Interacted with business analysts to provide technical requirements, such as file formats and the data behavior of various source systems, to ensure smooth ETL development and a clear understanding of business requirements.
- Worked with various teams to identify the root cause of technical issues in the application at the production level.
- Designed ETL solutions using advanced SQL and advanced Informatica transformations that interact with the MDM front-end Java application, enabling users to perform bulk data loads from the front-end screen or through prior scheduling, while ensuring an error-free ETL pipeline.
- Developed DMS, impact analysis documents, UTPs and UTRs based on business requirements, and assisted in developing high-level technical design specifications and low-level specifications for each release.

Accomplishments:
- AWS Certified Developer – Associate (certified 2020).
- Received two Best Team Awards at TCS for the successful release of projects.
- Received an 'On the Spot' award at TCS for special contributions to multiple projects in the MDM team.
- Six Sigma Green Belt for WSS Efficient Data Management from TCS.

Sustenance Activity:
- Reproduced issues across various situations, demonstrating the ability to work in different environments and situations.
- Developed reusable Spark SQL Extract/Transform/Load component libraries at Byte Prophecy to standardize the process and make developers' work easier through reusability and logging across projects.

Extracurricular Activities:
- Led a Save Pollution drive and a Road Safety drive at TCS.
- Took part in the TCS-GE Dance Confluence 2016.
- Played the role of a Zonal Manager (acting role) in Byte Prophecy's marketing video.

Hobbies: Dancing, taking random photographs, and cooking (perfecting the dishes I make).