Continuous and Discrete-Time Analysis of SGD

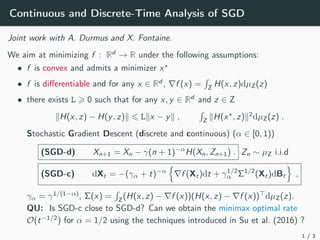

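- 1. Continuous and Discrete-Time Analysis of SGD

  Joint work with A. Durmus and X. Fontaine.

  We aim at minimizing $f : \mathbb{R}^d \to \mathbb{R}$ under the following assumptions:

  - $f$ is convex and admits a minimizer $x^\star$.
  - $f$ is differentiable and, for any $x \in \mathbb{R}^d$, $\nabla f(x) = \int_{\mathsf{Z}} H(x, z)\, \mathrm{d}\mu_Z(z)$.
  - There exists $L > 0$ such that for any $x, y \in \mathbb{R}^d$ and $z \in \mathsf{Z}$, $\|H(x, z) - H(y, z)\| \le L \|x - y\|$, and $\int_{\mathsf{Z}} \|H(x^\star, z)\|^2\, \mathrm{d}\mu_Z(z) < +\infty$.

  Stochastic Gradient Descent, discrete and continuous, with $\alpha \in [0, 1)$:

  (SGD-d) $X_{n+1} = X_n - \gamma (n+1)^{-\alpha} H(X_n, Z_{n+1})$, with $Z_n \sim \mu_Z$ i.i.d.

  (SGD-c) $\mathrm{d}X_t = -(\gamma_\alpha + t)^{-\alpha} \left\{ \nabla f(X_t)\, \mathrm{d}t + \gamma_\alpha^{1/2}\, \Sigma^{1/2}(X_t)\, \mathrm{d}B_t \right\}$, with $\gamma_\alpha = \gamma^{1/(1-\alpha)}$ and $\Sigma(x) = \int_{\mathsf{Z}} (H(x, z) - \nabla f(x))(H(x, z) - \nabla f(x))^\top\, \mathrm{d}\mu_Z(z)$.

  Questions: Is SGD-c close to SGD-d? Can we obtain the minimax optimal rate $O(t^{-1/2})$ for $\alpha = 1/2$ using the techniques introduced in Su et al. (2016)?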
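
One way to see why the time change $\gamma_\alpha = \gamma^{1/(1-\alpha)}$ is natural is to take a single Euler-Maruyama step of length $\gamma_\alpha$ of SGD-c, started at time $t_n = n\gamma_\alpha$. This is a derivation sketch and is not taken from the slides; the symbols $t_n$ and $G_{n+1} \sim \mathcal{N}(0, I_d)$ (the rescaled Brownian increment) are introduced here for illustration only.

```latex
\begin{align*}
(\gamma_\alpha + t_n)^{-\alpha}\, \gamma_\alpha
  &= \gamma_\alpha^{1-\alpha} (n+1)^{-\alpha}
   = \gamma\, (n+1)^{-\alpha}, \\
B_{t_{n+1}} - B_{t_n}
  &= \gamma_\alpha^{1/2}\, G_{n+1}, \\
X_{t_{n+1}}
  &\approx X_{t_n} - \gamma\, (n+1)^{-\alpha}
     \left\{ \nabla f(X_{t_n}) + \Sigma^{1/2}(X_{t_n})\, G_{n+1} \right\}.
\end{align*}
```

Under this discretization, one step of SGD-c has the same form as SGD-d with $H(X_n, Z_{n+1})$ replaced by a Gaussian vector with the same mean $\nabla f(X_n)$ and the same covariance $\Sigma(X_n)$, so that iteration $n$ of SGD-d is matched to time $n\gamma_\alpha$ of SGD-c.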

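- 2. Approximation results

  Question: can we show that SGD-d is close to SGD-c? Yes!

  Finite horizon strong approximation. For any $T > 0$, there exists $C_T > 0$ such that

  $$\mathbb{E}^{1/2}\left[ \sup_{t \in [0, T]} \left\| X_{\lfloor t/\gamma_\alpha \rfloor} - X_t \right\|^2 \right] \le C_T \left( \varepsilon^{1/2} \gamma^{\delta} + \gamma \right) \left( 1 + \log(1/\gamma) \right),$$

  where $X_{\lfloor t/\gamma_\alpha \rfloor}$ is the SGD-d iterate, $X_t$ is the SGD-c solution, $\delta = \min(1, 1/(2 - 2\alpha))$ and

  $$\varepsilon = \sup_{n\gamma_\alpha \le T} \mathbb{E}\left[ W_2^2\left( \nu_n, \mathcal{N}(0, \Sigma(X_n)) \right) \right],$$

  with $\nu_n$ the distribution of $H(X_n, \cdot) - \nabla f(X_n)$ conditionally on $X_n$.

  The proof is based on Milstein (1994) and Kloeden and Platen (2013).

  Effect of the batch size: if $H(x, \{z_i\}_{i=1}^{M}) = M^{-1} \sum_{i=1}^{M} \nabla \hat{f}(x, z_i)$, then $\varepsilon = O(M^{-2})$, using recent advances in Stein's method (Bonis (2020)).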
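
The closeness of SGD-d and SGD-c can be probed numerically. The sketch below is an illustration under assumptions that are not in the slides: a scalar quadratic $f(x) = x^2/2$, a Gaussian oracle $H(x, z) = x + \sigma z$ (so the noise is exactly Gaussian), an Euler-Maruyama approximation of SGD-c on a finer grid, and a coupling in which SGD-d reuses the Brownian increments of SGD-c. The function name `strong_error` and all its parameters are hypothetical. The supremum is taken over the grid times $n\gamma_\alpha$ only.

```python
import numpy as np

def strong_error(gamma, alpha=0.5, horizon=1.0, sigma=1.0,
                 n_paths=200, substeps=10, seed=0):
    """Monte Carlo estimate of E^{1/2}[ max_n |X^d_n - X^c_{n*gamma_alpha}|^2 ].

    Assumed toy model: f(x) = x^2 / 2 and H(x, z) = x + sigma * z, z ~ N(0, 1).
    """
    rng = np.random.default_rng(seed)
    gamma_alpha = gamma ** (1.0 / (1.0 - alpha))   # time change from the slides
    n_steps = int(round(horizon / gamma_alpha))    # SGD-d iterations up to T
    h = gamma_alpha / substeps                     # fine Euler-Maruyama step
    x_d = np.ones(n_paths)                         # SGD-d iterates, X_0 = 1
    x_c = np.ones(n_paths)                         # discretized SGD-c paths
    sup_sq = np.zeros(n_paths)
    t = 0.0
    for n in range(n_steps):
        increment = np.zeros(n_paths)
        for _ in range(substeps):
            db = np.sqrt(h) * rng.standard_normal(n_paths)
            scale = (gamma_alpha + t) ** (-alpha)
            # Euler-Maruyama step for SGD-c with grad f(x) = x, Sigma^{1/2} = sigma
            x_c = x_c - scale * (x_c * h + np.sqrt(gamma_alpha) * sigma * db)
            increment += db
            t += h
        # Coupling: Z_{n+1} is the rescaled Brownian increment, hence N(0, 1)
        z = increment / np.sqrt(gamma_alpha)
        x_d = x_d - gamma * (n + 1) ** (-alpha) * (x_d + sigma * z)
        sup_sq = np.maximum(sup_sq, (x_d - x_c) ** 2)
    return float(np.sqrt(sup_sq.mean()))

if __name__ == "__main__":
    for g in (0.2, 0.1, 0.05):
        print(g, strong_error(g))
```

Because the oracle noise is Gaussian in this toy model, the Wasserstein term $\varepsilon$ vanishes and only the $\gamma (1 + \log(1/\gamma))$ part of the bound is exercised; a non-Gaussian oracle would be needed to see the effect of $\varepsilon$.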

- 3. Convergence results

  Question: what is the optimal convergence rate?

  Previous works:

  - Minimax lower bound → $O(t^{-1/2})$ (Agarwal et al. (2009))
  - Bounded gradient case → $O(t^{-1/2})$ (Shamir and Zhang (2013))
  - Our setting → $O(t^{-1/3})$ (Moulines and Bach (2011))

  We close the gap between lower and upper bounds.

  Optimal convergence rates. In our setting, for any $\alpha \in [0, 1)$ there exists $C_\alpha > 0$ such that for any $n \in \mathbb{N}$,

  $$\mathbb{E}\left[ f(X_n) - f(x^\star) \right] \le C_\alpha \max\left( n^{-\alpha}, n^{-1+\alpha} \right).$$

  The proof relies on the "averaging from the past" procedure of Shamir and Zhang (2013) and is also valid for SGD-c.
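
The bound $\max(n^{-\alpha}, n^{-1+\alpha})$ decays like $n^{-\min(\alpha, 1-\alpha)}$, so the exponent is largest at $\alpha = 1/2$, which recovers the $n^{-1/2}$ rate. The short sketch below only evaluates this expression on a grid of $\alpha$ values; the helper names `rate_bound` and `rate_exponent` are hypothetical, and the constant $C_\alpha$ is ignored.

```python
def rate_bound(n, alpha):
    """Value of max(n^{-alpha}, n^{-1+alpha}) for n >= 1 (constant C_alpha dropped)."""
    return max(n ** (-alpha), n ** (-1.0 + alpha))

def rate_exponent(alpha):
    """Decay exponent of the bound: it behaves like n^{-min(alpha, 1 - alpha)}."""
    return min(alpha, 1.0 - alpha)

# Grid of step-size exponents alpha in [0, 1): 0.0, 0.1, ..., 0.9
alphas = [k / 10 for k in range(10)]
best_alpha = max(alphas, key=rate_exponent)

if __name__ == "__main__":
    for a in alphas:
        print(a, rate_exponent(a), rate_bound(10_000, a))
    print("best alpha on the grid:", best_alpha)
```

For $\alpha = 0$ the bound does not decay at all, which matches the fact that a constant step size does not drive the expected suboptimality to zero in this bound.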

- 4. References

  - Alekh Agarwal, Martin J. Wainwright, Peter L. Bartlett, and Pradeep K. Ravikumar. Information-theoretic lower bounds on the oracle complexity of convex optimization. In Advances in Neural Information Processing Systems, pages 1–9, 2009.
  - Thomas Bonis. Stein's method for normal approximation in Wasserstein distances with application to the multivariate central limit theorem. Probability Theory and Related Fields, pages 1–34, 2020.
  - Peter E. Kloeden and Eckhard Platen. Numerical solution of stochastic differential equations, volume 23. Springer Science & Business Media, 2013.
  - Grigorii Noikhovich Milstein. Numerical integration of stochastic differential equations, volume 313. Springer Science & Business Media, 1994.
  - Eric Moulines and Francis R. Bach. Non-asymptotic analysis of stochastic approximation algorithms for machine learning. In Advances in Neural Information Processing Systems, pages 451–459, 2011.
  - Ohad Shamir and Tong Zhang. Stochastic gradient descent for non-smooth optimization: Convergence results and optimal averaging schemes. In International Conference on Machine Learning, pages 71–79, 2013.
  - Weijie Su, Stephen Boyd, and Emmanuel J. Candes. A differential equation for modeling Nesterov's accelerated gradient method: theory and insights. The Journal of Machine Learning Research, 17(1):5312–5354, 2016.