Backend Cloud Storage Access in Video Streaming

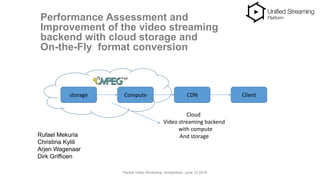

- 1. Performance Assessment and Improvement of the Video Streaming Backend with Cloud Storage and On-the-Fly Format Conversion. Rufael Mekuria, Christina Kylili, Arjen Wagenaar, Dirk Griffioen. [Diagram: cloud video streaming backend with compute and storage; labels: Storage, Compute, CDN, Client.] Packet Video Workshop, Amsterdam, June 12 2018

- 2. Overview: About Unified Streaming; Cloud streaming backend with storage and compute; Performance assessment; Improvement with MPEG-4 dref approach; Performance assessment with dref approach; Large-scale testing (bonus); Conclusion, future work and standards. http://docs.unified-streaming.com/documentation/vod/optimizing-storage-caching.html

- 3. Unified Streaming: Software for video streaming workflows: DRM, packaging, content stitching, live video. Embedded in cloud, telco and CDN environments. Standards: DASH-IF, MPEG, 3GPP, DVB. Cloud: origin server and packager; Azure, Amazon and soon Google marketplace.

- 4. Cloud Streaming backend with storage and compute: Modern video streaming uses cloud infrastructure that goes beyond the simple client-server model, e.g. Netflix, BBC iPlayer, HBO, ViaPlay. Compute and storage are available as separate resources in cloud infrastructure. Combining storage and compute is key for efficient video streaming (both quality- and cost-wise). Commercial video platforms use this, but implementation details are often hidden. We deliver software for video streaming platforms and help you design and optimize your backend, so we are open about the video streaming backend. By doing so we identify bottlenecks and research challenges for video streaming in current architectures.

- 5. Cloud Streaming backend with storage and compute: Object storage: ideal for storing large asset repositories for VoD/DVR due to persistence, HTTP interface, flexibility and low costs, e.g. Amazon S3, OpenStack Swift. Compute capabilities: virtual machine, container; ideal for conversion, e.g. transcoding, format conversion, manifest generation, personalization of presentation; reduces redundant storage, time to market, etc. Combining storage and compute in the cloud is key for efficient video streaming. We provide the performance analysis and propose an improvement by introducing a new intermediate file format: a large increase in throughput and conversion efficiency at the compute node was achieved, as well as a reduced startup delay.

- 6. Performance Assessment: KPI. 1. Latency (ms), including startup delay and segment delay. 2. Data volume and throughput: Tensor (MB/s received and sent by the server), AB (requests/second). 3. Conversion efficiency of the media processing server.

- 7. Performance Assessment: Setup. Load generator tools: Tensor and AB, with different testing ranges. Unified Origin: dynamic packaging and manifest generation, streaming DASH and HLS. Amazon Simple Storage Service (S3) as object-based storage. 3 movies packaged with Unified Packager in MP4 and fMP4. [Diagram labels: Load Generator; S3 storage (fMP4, MP4, ism); Origin Server; Amazon instance m3.xlarge; Client; Amazon instance c4.xlarge; ism, video segments; client manifest (mpd, m3u8); video segments (DASH, TS).]

- 8. Performance Assessment: Result. The conversion node needs more data than it produces. [Chart: Dout/Din; values shown: 0.82, 0.84, 0.74, 0.51, 0.45, 0.31.]
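
The Dout/Din figure on this slide can be read as a simple ratio. The sketch below assumes that conversion efficiency is the data volume the conversion node sends out divided by the data volume it fetches from storage; the function name and the byte counts are hypothetical and are not measurements from the talk.

```python
def conversion_efficiency(bytes_out: float, bytes_in: float) -> float:
    """Return Dout / Din for a conversion node.

    bytes_out: data sent towards clients (Dout).
    bytes_in:  data fetched from backend storage (Din).
    A value below 1 means the node reads more than it delivers.
    """
    if bytes_in <= 0:
        raise ValueError("bytes_in must be positive")
    return bytes_out / bytes_in


# Hypothetical counters, for illustration only.
efficiency = conversion_efficiency(bytes_out=820.0, bytes_in=1000.0)
print(f"conversion efficiency: {efficiency:.2f}")
```

A value close to 1 would mean that almost every byte read from storage ends up in a response to a client.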

- 9. Performance Assessment: Analysis. Outgoing traffic decreases with cloud storage. Latency increases with backend storage due to the extra communication between origin and S3 (<20 ms). The maximum throughput of the instance cannot be used. Resources go to waste.

- 10. Traffic Analysis: manifest generation. Traffic between the origin server and S3 storage is intercepted. The origin does multiple byte-range requests to S3. [Diagram, columns MP4 and fMP4; items shown: ism; ftyp; moov box header; moov (hundreds of bytes); moov (hundreds of KB); mfra size; mfra (few KB); last moof box header (DASH); last moof (DASH). Annotations: to see bitrates; requests for each bitrate stream to construct segment URLs.]

- 11. Traffic Analysis: segment generation. Traffic between the origin server and S3 storage is intercepted. [Diagram, columns MP4 and fMP4; items shown: ism; ftyp; mvhd; moov (hundreds of bytes); moov (hundreds of KB); mfra size; mfra (few KB); mdat; moof & mdat box. Annotations: locate bitrate stream; locate samples (indexing and timing info); media samples of segment.]
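
The byte-range pattern in these two slides follows from how ISOBMFF files are laid out: every box starts with a 32-bit big-endian size and a four-character type, so a reader can fetch a few header bytes, learn where the next box starts, and request only the ranges it needs. The sketch below is a minimal illustration of that idea, not the origin's actual implementation; `iter_boxes` and `range_header` are hypothetical helper names, and the sample bytes are synthetic.

```python
import struct
from typing import Iterator, Tuple


def iter_boxes(data: bytes) -> Iterator[Tuple[str, int, int]]:
    """Yield (type, offset, size) for each top-level ISOBMFF box in data."""
    offset = 0
    while offset + 8 <= len(data):
        size, box_type = struct.unpack_from(">I4s", data, offset)
        header_len = 8
        if size == 1:
            # A size of 1 means a 64-bit "largesize" follows the type.
            if offset + 16 > len(data):
                break
            (size,) = struct.unpack_from(">Q", data, offset + 8)
            header_len = 16
        elif size == 0:
            # A size of 0 means the box extends to the end of the file.
            size = len(data) - offset
        if size < header_len:
            break  # malformed box, stop instead of looping forever
        yield box_type.decode("ascii", errors="replace"), offset, size
        offset += size


def range_header(offset: int, size: int) -> str:
    """Build an HTTP Range header value covering one box."""
    return f"bytes={offset}-{offset + size - 1}"


# Synthetic file: a 16-byte ftyp, an empty moov and a 12-byte mdat.
sample = (
    struct.pack(">I4s", 16, b"ftyp") + b"isom" + b"\x00\x00\x00\x00"
    + struct.pack(">I4s", 8, b"moov")
    + struct.pack(">I4s", 12, b"mdat") + b"abcd"
)
boxes = list(iter_boxes(sample))
moov_range = range_header(boxes[1][1], boxes[1][2])
```

In a non-fragmented MP4 the moov box found this way can be hundreds of KB, which is why the number and size of these ranged reads matter for the conversion node.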

- 12. So... • Critical data for on-the-fly conversion: sample boxes • In MP4 these are bigger than in fMP4, which gives worse performance • An optimal file format could be designed • Caching the critical data closer to the conversion server can improve communication

- 13. Improvement Proposal • Cache the critical data close to the server: metadata & ism • Reduce the requests to S3: only for media samples • Use existing technology based on ISOBMFF

- 14. DREF MPEG-4 • Part of the ISOBMFF media file specification • Only a moov box with metadata, no media data • Points to an external file holding the media data • Same structure for fMP4 and MP4

- 15. Performance Assessment • AB for understanding the effect of caching: requesting a manifest; requesting a segment • Tested configurations: • Setup 1: Client, Origin and Storage in the same cloud environment • Setup 2: Origin and Storage in one cloud, Client in a different cloud • Setup 3: Origin and Client in one cloud, Storage in a different cloud. [Diagram labels: Load Generator; S3 storage; Origin Server; Amazon instance m3.xlarge; Amazon instance c4.xlarge; Apache cache.]

- 16. Results: Requesting a Segment (1)

- 17. Results: Requesting a Segment (2)

- 18. Results: Requesting a manifest file. Reduction: 97%. Could decrease startup delay.

- 19. Measuring startup delay • Startup delay: the time from when the viewer intends the video to play until the first frame of the video is displayed • Measure the time manually: dash.js 2.5.0 player; screen recorder; measure time at slow speed • Experiment repeated 10 times • Sintel, Tears of Steel, MP4 case • Setup 3 with remote storage • Compare startup delay between baseline and proposed solution

- 20. Measuring startup delay • Average startup delay [chart: -30%, -60%]

- 21. Conclusions • Caching the dref performs at least as well as not caching, and often better • Startup delay is decreased • Less performance variation between MP4 and fMP4 • What about large-scale testing? (bonus)

- 22. Large Scale Testing: Tensor • Realistic workload from video players • High concurrent video traffic • Requires tuning Apache: • High concurrency: multiple activities executed at the same time (cache connections, origin connections) • Heavy load on the server: efficient scheduling of connections • See paper appendix for tuned configuration • C10K problem: hardware is not the issue; software implementations (OS multithreading, context switching) can be a bottleneck • Use suitable concurrency models and I/O strategies offered by servers

- 23. Large Scale Testing: Results. [Chart values: MP4 0.84, 65%; fMP4 0.84, 2%; MP4 0.86, 91%; fMP4 0.86, 2%.]

- 24. Large Scale Testing: Results. [Chart values: MP4 0.74, 139%; fMP4 0.75, 1%.]

- 25. Increase in throughput per resolution

- 26. Conclusions • Increased outgoing traffic towards the client • Conversion efficiency increases for MP4 • Latency is reduced for segment and manifest requests • Design and standardization of optimal formats such as dref for media processing operations can improve streaming performance; this is a target of the emerging NBMP standard

- 27. Future work: Is dref sufficient? What more can we do? • Using a different media processing function: stitching content from multiple sources; crowd-sourcing; ad insertion; personalized streams • Setup is reproducible: trial license for conversion software • Paper on Thursday on profiling the conversion server with machine learning and telemetry, 14:45-16:15 • Unified Streaming docs: http://docs.unified-streaming.com/documentation/vod/optimizing-storage-caching.html

Editor's Notes

- For comparison purposes we set the SC3DMC coders to code with 8 bits and the differential coding option. We compared the original captured meshes to the decoded meshes using a tool that measures the symmetric distance between the surfaces. This metric is often known as the Hausdorff distance. A set of live-captured models, compressed and decoded, shows that the qualities are comparable. Note that a lower value implies better quality.

- What is important to understand from this information is that some parts of the media container are critical for this specific media processing operation. In our case these are the moov atom and the sample boxes, and their size matters: a bigger box means worse performance. This means that a file format could be designed to give optimal access to this information. Finally, we can conclude that keeping the critical data closer to the conversion node will improve the communication.

- Based on that, to improve the communication between the origin and the storage, we propose to cache the critical data closer to the origin. The manifest file could also be cached. This way the number of requests to S3 is reduced. To do this we use an existing technology that is part of MPEG's ISO base media file format standard.

- This technology is the dref box. This box sits inside the moov box and specifies the location of the media data. Using it we can create containers, which we call dref files, that hold only the metadata of the content and no media data. The dref box points to an external file that holds the media data, which can be either MP4 or fMP4. By caching this dref file together with the manifest file we get more efficient access to the remote storage.
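
To make the pointer mechanism concrete, the sketch below serializes a data reference box with one `url ` entry, following the FullBox layout of the ISO base media file format (32-bit size, four-character type, 8-bit version, 24-bit flags). It is an illustration under stated assumptions, not the packager's code: the helper names and the storage URL are hypothetical, and a real file carries this box nested inside moov (under trak/mdia/minf/dinf) rather than on its own.

```python
import struct
from typing import List, Optional


def full_box(box_type: bytes, flags: int, payload: bytes, version: int = 0) -> bytes:
    """Serialize a FullBox: size, type, version, 24-bit flags, then payload."""
    size = 12 + len(payload)
    return struct.pack(">I4sI", size, box_type, (version << 24) | flags) + payload


def url_entry(location: Optional[str]) -> bytes:
    """Build a 'url ' data entry.

    With flags == 1 the media data is in the same file and no string is stored.
    Otherwise the entry carries a null-terminated UTF-8 location.
    """
    if location is None:
        return full_box(b"url ", flags=0x000001, payload=b"")
    return full_box(b"url ", flags=0, payload=location.encode("utf-8") + b"\x00")


def dref_box(locations: List[Optional[str]]) -> bytes:
    """Build a 'dref' box: entry count followed by the data entries."""
    entries = b"".join(url_entry(loc) for loc in locations)
    return full_box(b"dref", flags=0, payload=struct.pack(">I", len(locations)) + entries)


# Hypothetical external media location, for illustration only.
box = dref_box(["http://storage.example/video.mp4"])
```

Because the entry only names where the samples live, the same metadata-only container can reference either an MP4 or an fMP4 source.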

- To evaluate the proposed setup we carried out a set of experiments on the same testbed as before, but now with the Apache cache added to the system and with the dref files created in the storage. For this evaluation we used Apache Benchmark to generate a large number of requests for a manifest and for a segment, in both DASH and HLS. We tested the testbed in three different configurations, as you see here, each changing the physical location of the components. In setup 1 everything is in the same cloud, as before. In setup 2 we move the client to a different cloud, which is more realistic. In setup 3 the origin is moved to the edge, so to speak, together with the client, while the storage is in a different cloud.

- Here you can see the latency results when requesting two segments of Sintel, one encoded at a low bitrate and the other at a higher bitrate, in DASH and HLS. The blue bars are the results when only the manifest file is cached, and the other bars are the results for dref with MP4 and fMP4. In all setups caching the dref performs better, and segment latency is reduced because there are fewer requests to S3. The highest reduction is in setup 3, where latency drops by 70% even though the origin and the storage are far from each other.

- Here you see the results for two segments of Elephants Dream encoded at a higher resolution. For the big segments encoded at 2 Mbps there is little improvement. This could be because the segment is very big, almost 11 times bigger than the same segment encoded at 200 kbps. Another interesting observation is that when the dref is cached the file format of the source video does not matter: MP4 and fMP4 have almost the same performance, as anticipated by this approach. This allows one to avoid repackaging media collections stored in the non-fragmented MP4 file format.

- Memory usage and CPU usage are not increased significantly. Next, you can see the results when requesting a manifest file in setup 3. When the dref is cached, the latency is greatly reduced, by almost 97%. This is because everything the origin needs to generate a manifest file is in the cache, so no request to the storage is needed. This result is important because it indicates that the startup delay of a video could be decreased: at startup the client first requests the manifest file and an initialization segment, which contains metadata stored in the dref.

- For this reason we tried to measure the startup delay when streaming from the proposed setup with a simple manual experiment. We used the dash.js player to stream video while recording the screen. Then, using a video player with high-precision timing, we played the recording at slow speed and measured the time from when the viewer presses play until the first frame is rendered on the screen. We did this for both the baseline setup and the proposed setup with dref caching. Of course there are some dependencies on the video player: for example, some players preload the video, and some need to buffer before they start playback. We ignore these parameters because they affect both setups in the same way.

- Here you can see the average startup delay, measured by repeating the experiment. There is a reduction in startup delay; for Sintel we even achieve a reduction of 60%, which is a very big gain. This is an important achievement considering that startup delay is one of the most influential factors for the user's quality of experience. This is the MP4 case.
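
The reported percentages are relative reductions of the mean startup delay over the repeated runs. The sketch below shows that arithmetic under the assumption that each setup yields one timing per run; the function name and the timings are hypothetical placeholders, not the measured data.

```python
from statistics import mean
from typing import Sequence


def percent_reduction(baseline: Sequence[float], proposed: Sequence[float]) -> float:
    """Relative reduction (in %) of the mean delay, proposed vs. baseline."""
    baseline_mean = mean(baseline)
    if baseline_mean <= 0:
        raise ValueError("baseline mean must be positive")
    return 100.0 * (baseline_mean - mean(proposed)) / baseline_mean


# Hypothetical startup delays in seconds for ten runs of each setup.
baseline_runs = [2.0] * 10
dref_cached_runs = [0.8] * 10
reduction = percent_reduction(baseline_runs, dref_cached_runs)
print(f"startup delay reduction: {reduction:.0f}%")
```

With manual timing from a screen recording, reporting the spread across the ten runs alongside the mean would show how much of the difference is measurement noise.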

- To conclude on these results: caching the dref performs best most of the time. The startup delay is decreased. Furthermore, with the dref the performance of MP4 and fMP4 is almost the same. But this evaluation was done by requesting only a manifest or a segment, and we have not yet tested at large scale, which is what we do next.

- Large-scale testing means testing with a realistic workload from video players and with a high number of concurrent connections. This can be done using Tensor. In this case we need to tune the Apache server for highly concurrent video streaming, because the setup requires multiple activities to be executed at the same time (cache connections, origin connections and their communication). The server is under heavy load and needs to handle a large number of concurrent connections efficiently. Server performance can become the bottleneck due to OS or web server constraints. This relates to the well-known C10K problem. Servers offer concurrency models to support highly concurrent traffic, but some customization might be needed to fit them to the application.
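
One concurrency model commonly used against C10K-style limits is event-driven I/O, where a single thread multiplexes many connections instead of dedicating a thread to each. The sketch below illustrates that model with Python's asyncio and a bounded number of in-flight requests. It is not the tuned Apache configuration from the paper and not how Tensor works; `fake_request` is a hypothetical stand-in for real network I/O.

```python
import asyncio
from typing import Tuple


async def fake_request(request_id: int) -> int:
    """Stand-in for a network request; yields control like real I/O would."""
    await asyncio.sleep(0)
    return request_id


async def run_load(total: int, max_in_flight: int) -> Tuple[int, int]:
    """Issue `total` requests with at most `max_in_flight` active at once.

    Returns (completed requests, peak number of simultaneous requests).
    """
    limiter = asyncio.Semaphore(max_in_flight)
    in_flight = 0
    peak = 0

    async def worker(request_id: int) -> int:
        nonlocal in_flight, peak
        async with limiter:
            in_flight += 1
            peak = max(peak, in_flight)
            result = await fake_request(request_id)
            in_flight -= 1
            return result

    results = await asyncio.gather(*(worker(i) for i in range(total)))
    return len(results), peak


completed, peak_in_flight = asyncio.run(run_load(total=1000, max_in_flight=50))
```

The semaphore plays the role that connection and worker limits play in a web server: it caps how much work is admitted at once so that scheduling overhead does not grow with the number of waiting clients.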

- Finally, the results for large-scale testing. These are the results for Sintel and Elephants Dream for both MP4 and fMP4. The outgoing traffic is increased compared to the baseline results; for MP4 in particular there is a big increase. You can also see the conversion efficiency metric that we introduced before. The MP4 case has improved a lot for all videos, reaching almost 1. Furthermore, there is no performance variation between MP4 and fMP4; their performance is almost the same.

- Here you see the results for Tears of Steel. Again we see an improvement when caching the dref. For fMP4 the improvement is not that big, which is expected given that fMP4 was already performing well: fMP4 is ready for DASH streaming and its moov atom is not that big.

- Here you can see the increase in throughput that we achieved at the different resolutions for the two videos, Sintel and Elephants Dream, for MP4 and fMP4. The throughput increases in all cases, even if for fMP4 the increase is just 20%.

- To conclude on those results: caching the dref increases the outgoing throughput, conversion efficiency is increased, latency is reduced, and we have seen reduced startup delay in a test player. Furthermore, a key contribution of this work is the design of an intermediate file format based on dref that can improve cloud-based media processing and reduce storage access latency. This relates to the emerging NBMP standard, which standardizes formats and APIs for distributed media processing.

- Regarding future work, this work could be extended with a more complex media processing function. For example, a media processing function might have multiple sources whose content needs to be stitched together. This occurs in many applications such as crowdsourced video, ad insertion and personalized streams. Then you have more than one remote access to content, heterogeneous inputs, and a need for content stitching, video synchronization and more. It would be interesting to know how dref works in this case and what more we can do.