All About Econometrics

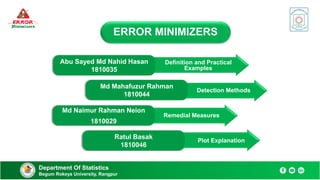

- 1. Department of Statistics, Begum Rokeya University, Rangpur. Team: ERROR MINIMIZERS. Abu Sayed Md Nahid Hasan (1810035), Md Mahafuzur Rahman (1810044), Md Naimur Rahman Neion (1810029), Ratul Basak (1810046). Contents: Definition and Practical Examples; Detection Methods; Remedial Measures; Plot Explanation.

- 2. Multicollinearity: Definition. Multicollinearity is a linear (or near-linear) relationship between two or more independent variables. It can lead to overfitting, makes the model hard to interpret, and reduces the precision of the estimated coefficients.

- 3. Multicollinearity: Practical Examples. (1) Physical activity and body fat; (2) age and sale price of a car; (3) product quality and network; (4) population and total gross income; (5) years of education and annual income.

- 4. Heteroscedasticity: Definition. Heteroscedasticity means the variance of the error term is not constant over the range of measured values: $E(u_i^2) = \sigma_i^2$, where $\sigma_i^2$ changes with $i$ instead of being a common $\sigma^2$. The presence of outliers is one source of it.

- 5. Heteroscedasticity: Practical Examples. (1) Distance travelled by a rocket over time; (2) food expenditure and income; (3) population and number of flower shops in a city; (4) students' test scores explained by study time; (5) hours of typing practice and errors per page.

- 6. Autocorrelation: Definition. Autocorrelation is the relationship between a variable's current value and its past values, i.e. correlation between members of a series of observations ordered in time or space. It arises when $E(u_i u_j) \neq 0$ for $i \neq j$.

- 7. Autocorrelation: Practical Examples. (1) Temperatures on different days in a month; (2) daily stock returns in a regression analysis using time-series data; (3) similar answers from people in nearby geographic locations compared with geographically distant people; (4) students from the same class performing more similarly than students from different classes; (5) household expenditure influenced by the expenditure of the preceding month.

- 8. Detection Methods for Multicollinearity: (1) high $R^2$ but few significant t ratios; (2) high pair-wise correlations among regressors; (3) eigenvalues and condition index; (4) tolerance and variance inflation factor.

- 9. High $R^2$ but Few Significant t Ratios. If $R^2$ is high (close to 1), the regressors jointly explain most of the variation in $Y$, and the overall F test usually rejects $H_0: \beta_2 = \dots = \beta_k = 0$. Yet the individual t tests show that none or very few of the coefficients are statistically different from zero. This contradiction is a classic symptom of multicollinearity.

- 10. High Pair-wise Correlations among Regressors. If the pair-wise (zero-order) correlation between two independent variables is high (say, greater than 0.8), multicollinearity is a serious concern. However, high zero-order correlations are not necessary for collinearity: it can be present in a specific case even when the pair-wise correlations are comparatively low.
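
The pair-wise screen can be sketched in plain Python. This is a minimal illustration, assuming the regressors sit in a dictionary of equal-length lists; `pearson` and `flag_high_correlations` are hypothetical helper names, the 0.8 cut-off is the rule of thumb from the slide, and the data are made up.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Zero-order (Pearson) correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def flag_high_correlations(regressors, threshold=0.8):
    """Return (name_i, name_j, r) for every regressor pair with |r| > threshold."""
    flagged = []
    for (name_i, x_i), (name_j, x_j) in combinations(regressors.items(), 2):
        r = pearson(x_i, x_j)
        if abs(r) > threshold:
            flagged.append((name_i, name_j, r))
    return flagged


# Hypothetical data: X3 is roughly 2 * X2, while X4 is unrelated to both.
data = {
    "X2": [1, 2, 3, 4, 5, 6],
    "X3": [2.1, 3.9, 6.2, 8.0, 9.8, 12.1],
    "X4": [5, 1, 4, 2, 6, 3],
}
print(flag_high_correlations(data))  # only the (X2, X3) pair is flagged
```

A clean result from this screen does not rule out multicollinearity, since a near-linear relation can involve three or more regressors at once.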

- 11. Eigenvalues and Condition Index. Condition number: $k = \dfrac{\text{maximum eigenvalue}}{\text{minimum eigenvalue}}$. Condition index: $CI = \sqrt{\dfrac{\text{maximum eigenvalue}}{\text{minimum eigenvalue}}} = \sqrt{k}$. For $k$: 0 to 100, no multicollinearity; 100 to 1000, moderate to strong multicollinearity; above 1000, severe multicollinearity. For $CI$: 0 to 10, no multicollinearity; 10 to 30, moderate to strong multicollinearity; above 30, severe multicollinearity.
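
For the special case of two regressors the eigenvalues can be written down by hand, which allows a small stdlib-only sketch. Assumptions: the regressors are standardized, so the matrix examined is their 2×2 correlation matrix with off-diagonal $r$, whose eigenvalues are $1 + |r|$ and $1 - |r|$; with more regressors a general symmetric eigenvalue routine (for example `numpy.linalg.eigvalsh`) would be needed. Function names and data are hypothetical.

```python
import math


def pearson(x, y):
    """Zero-order (Pearson) correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def condition_diagnostics(x2, x3):
    """Condition number k and condition index CI for two standardized regressors."""
    r = abs(pearson(x2, x3))
    lam_max, lam_min = 1 + r, 1 - r  # eigenvalues of [[1, r], [r, 1]]
    if lam_min <= 0:  # perfect collinearity: the smallest eigenvalue is zero
        return math.inf, math.inf
    k = lam_max / lam_min
    return k, math.sqrt(k)


def classify_ci(ci):
    """Rule of thumb from the slide: CI < 10 none, 10-30 moderate/strong, > 30 severe."""
    if ci < 10:
        return "no multicollinearity"
    if ci <= 30:
        return "moderate to strong multicollinearity"
    return "severe multicollinearity"


x2 = [1, 2, 3, 4, 5, 6]
x3 = [2.1, 3.9, 6.2, 8.0, 9.8, 12.1]  # hypothetical, nearly 2 * x2
k, ci = condition_diagnostics(x2, x3)
print(round(k), round(ci, 1), classify_ci(ci))
```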

- 12. Tolerance and Variance Inflation Factor (VIF). $VIF_j = \dfrac{1}{1 - R_j^2}$, where $R_j^2$ is the $R^2$ from regressing $X_j$ on the remaining regressors, and tolerance $TOL_j = \dfrac{1}{VIF_j} = 1 - R_j^2$. For VIF: below 5, no multicollinearity; 5 to 10, moderate to strong multicollinearity; above 10, severe multicollinearity. For TOL (which lies between 0 and 1): below 0.1, severe multicollinearity; 0.1 to 0.2, moderate to strong multicollinearity; above 0.2, no multicollinearity.
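
Rather than running one auxiliary regression per regressor, the sketch below uses the standard identity that $VIF_j$ equals the $j$-th diagonal element of the inverse of the regressors' correlation matrix. It is plain Python with a small Gauss–Jordan inversion, meant for a handful of regressors; the function names and data are hypothetical.

```python
import math


def pearson(x, y):
    """Zero-order (Pearson) correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def invert(matrix):
    """Gauss-Jordan inverse with partial pivoting (fine for small matrices)."""
    n = len(matrix)
    aug = [list(row) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda i: abs(aug[i][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("singular matrix: perfect multicollinearity")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        for i in range(n):
            if i != col:
                factor = aug[i][col]
                aug[i] = [a - factor * b for a, b in zip(aug[i], aug[col])]
    return [row[n:] for row in aug]


def vif_and_tolerance(columns):
    """Map each regressor name to (VIF, TOL) using the inverse correlation matrix."""
    names = list(columns)
    corr = [[pearson(columns[a], columns[b]) for b in names] for a in names]
    corr_inv = invert(corr)
    return {name: (corr_inv[j][j], 1.0 / corr_inv[j][j])
            for j, name in enumerate(names)}


def classify_vif(vif):
    """Rule of thumb from the slide: below 5 none, 5-10 moderate/strong, above 10 severe."""
    if vif < 5:
        return "no multicollinearity"
    if vif <= 10:
        return "moderate to strong multicollinearity"
    return "severe multicollinearity"


data = {  # hypothetical regressors
    "X2": [1, 2, 3, 4, 5, 6],
    "X3": [2.1, 3.9, 6.2, 8.0, 9.8, 12.1],
    "X4": [5, 1, 4, 2, 6, 3],
}
for name, (vif, tol) in vif_and_tolerance(data).items():
    print(name, round(vif, 1), round(tol, 3), classify_vif(vif))
```

Each VIF is the same number that $1/(1 - R_j^2)$ from the auxiliary regression of $X_j$ on the other regressors would give; the matrix route only avoids running those regressions one by one.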

- 13. Detection Methods for Heteroscedasticity: (1) Park test; (2) Glejser test; (3) Spearman's rank correlation test; (4) Goldfeld–Quandt test; (5) Breusch–Pagan–Godfrey test.

- 14. Park Test. (1) Run OLS on the data and obtain the squared residuals $\hat u_i^2$. (2) Take the natural log of the squared residuals. (3) Take the natural log of the independent variable suspected of driving the heteroscedastic behaviour. (4) Run OLS of $\ln \hat u_i^2$ on $\ln X_i$: $\ln \hat u_i^2 = \alpha + \beta \ln X_i + v_i$. If $\beta$ is not statistically significant, there is no evidence of heteroscedasticity in the error variance.
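
The Park-test steps can be sketched in plain Python for a single regressor. This is an illustration, not a library routine: `ols_simple` and `park_test` are hypothetical helpers, the returned t ratio must be compared with a t table at $n - 2$ df by hand, and $X$ must be positive (with no residual exactly zero) for the logs to exist. The data are constructed so that the spread of the errors grows with $X$.

```python
import math


def ols_simple(x, y):
    """OLS of y on a constant and x: returns (intercept, slope, residuals, se_slope)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    intercept = my - slope * mx
    resid = [b - intercept - slope * a for a, b in zip(x, y)]
    s2 = sum(e * e for e in resid) / (n - 2)  # unbiased error-variance estimate
    return intercept, slope, resid, math.sqrt(s2 / sxx)


def park_test(x, y):
    """Return (beta, t_ratio) from the regression of ln(u_hat^2) on ln(X)."""
    _, _, resid, _ = ols_simple(x, y)               # step 1: OLS residuals
    ln_u2 = [math.log(e * e) for e in resid]        # step 2: ln of squared residuals
    ln_x = [math.log(a) for a in x]                 # step 3: ln of the suspect regressor
    _, beta, _, se_beta = ols_simple(ln_x, ln_u2)   # step 4: auxiliary regression
    return beta, beta / se_beta


# Hypothetical data: Y = 1 + 2X + error, where the error size grows with X.
x = [1, 1, 2, 2, 3, 3, 4, 4, 5, 5]
err = [1, -1, 3, -3, 4, -4, 8, -8, 9, -9]
y = [1 + 2 * a + e for a, e in zip(x, err)]
beta, t_ratio = park_test(x, y)
print(f"beta = {beta:.3f}, t = {t_ratio:.2f}")  # compare |t| with the t table, n - 2 df
```

With these data the slope of the auxiliary regression is clearly positive and significant, pointing to an error variance that rises with $X$.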

- 15. Glejser Test. (1) Run OLS on the data set and obtain the residuals $\hat u_i$. (2) Take the absolute values $|\hat u_i|$. (3) Regress the absolute residuals on the regressor, using forms such as $|\hat u_i| = \beta_1 + \beta_2 X_i + v_i$; $|\hat u_i| = \beta_1 + \beta_2 \sqrt{X_i} + v_i$; $|\hat u_i| = \beta_1 + \beta_2 \dfrac{1}{X_i} + v_i$; $|\hat u_i| = \beta_1 + \beta_2 \dfrac{1}{\sqrt{X_i}} + v_i$. If $\beta_2$ is not statistically significant, there is no evidence of heteroscedasticity in the error variance.
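
A sketch of the Glejser procedure for a single positive regressor, fitting the four functional forms listed above. `ols_simple`, `GLEJSER_FORMS` and `glejser_test` are hypothetical names; significance is judged by comparing each returned t ratio with a t table at $n - 2$ df, and the data are made up.

```python
import math


def ols_simple(x, y):
    """OLS of y on a constant and x: returns (intercept, slope, residuals, se_slope)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    intercept = my - slope * mx
    resid = [b - intercept - slope * a for a, b in zip(x, y)]
    s2 = sum(e * e for e in resid) / (n - 2)
    return intercept, slope, resid, math.sqrt(s2 / sxx)


# Transformations of X used in the auxiliary regressions (X must be positive).
GLEJSER_FORMS = {
    "X": lambda v: v,
    "sqrt(X)": math.sqrt,
    "1/X": lambda v: 1 / v,
    "1/sqrt(X)": lambda v: 1 / math.sqrt(v),
}


def glejser_test(x, y):
    """Regress |u_hat| on each transformed regressor; return {form: (beta2, t_ratio)}."""
    _, _, resid, _ = ols_simple(x, y)              # step 1: OLS residuals
    abs_resid = [abs(e) for e in resid]            # step 2: absolute residuals
    results = {}
    for label, transform in GLEJSER_FORMS.items():  # step 3: one regression per form
        z = [transform(a) for a in x]
        _, beta2, _, se = ols_simple(z, abs_resid)
        results[label] = (beta2, beta2 / se)
    return results


x = [1, 1, 2, 2, 3, 3, 4, 4, 5, 5]
err = [1, -1, 3, -3, 4, -4, 8, -8, 9, -9]        # hypothetical, spread grows with X
y = [1 + 2 * a + e for a, e in zip(x, err)]
for form, (beta2, t_ratio) in glejser_test(x, y).items():
    print(f"|u| on {form}: beta2 = {beta2:.3f}, t = {t_ratio:.2f}")
```

With these data the slope on $X$ is positive and the slope on $1/X$ is negative, both consistent with a spread that grows with $X$.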

- 16. Spearman's Rank Correlation Test. (1) Fit the regression of $Y$ on $X$ and obtain the residuals $\hat u_i$. (2) Rank the absolute residuals $|\hat u_i|$ and the independent variable $X_i$. (3) Compute Spearman's rank correlation coefficient $r_s = 1 - \dfrac{6 \sum d_i^2}{n(n^2 - 1)}$, where $d_i$ is the difference between the two ranks of observation $i$. (4) Compute the t statistic $t = \dfrac{r_s \sqrt{n - 2}}{\sqrt{1 - r_s^2}}$ with $n - 2$ df. If $|t_{cal}| > t_{tab}$, we may reject the null hypothesis of homoscedasticity and conclude that there is heteroscedasticity in the error variance.
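
The four steps translate directly into a short stdlib sketch. Assumptions: a single regressor, no tied values (ties would need average ranks), and hypothetical helper names `ols_residuals`, `ranks` and `spearman_het_test`; the data are made up.

```python
import math


def ols_residuals(x, y):
    """Residuals from OLS of y on a constant and x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    intercept = my - slope * mx
    return [b - intercept - slope * a for a, b in zip(x, y)]


def ranks(values):
    """1-based ranks; assumes no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    r = [0] * len(values)
    for position, i in enumerate(order, start=1):
        r[i] = position
    return r


def spearman_het_test(x, y):
    """Return (r_s, t) for the rank correlation between |u_hat| and X."""
    n = len(x)
    abs_resid = [abs(e) for e in ols_residuals(x, y)]             # step 1
    d = [ra - rb for ra, rb in zip(ranks(abs_resid), ranks(x))]   # step 2
    r_s = 1 - 6 * sum(di * di for di in d) / (n * (n * n - 1))    # step 3
    t = r_s * math.sqrt(n - 2) / math.sqrt(1 - r_s * r_s)         # step 4, n - 2 df
    return r_s, t


x = [1, 2, 3, 4, 5, 6, 7, 8]
err = [1.3, -2.9, -2.1, 3.7, -5, 6, 7, -8]  # hypothetical, spread grows with X
y = [1 + 2 * a + e for a, e in zip(x, err)]
r_s, t = spearman_het_test(x, y)
print(f"r_s = {r_s:.4f}, t = {t:.2f}")  # compare |t| with the t table at n - 2 = 6 df
```

With these made-up residuals only two ranks swap, so $r_s = 1 - 12/504 \approx 0.976$, and $t$ is far above the 5% two-tailed critical value of about 2.447 at 6 df.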

- 17. Goldfeld–Quandt Test. (1) Rank the observations in ascending order of $X_i$. (2) Omit $c$ central observations and divide the remaining $(n - c)$ observations into two groups of $(n - c)/2$ each [if $n \approx 30$ then $c = 4$; if $n \approx 60$ then $c = 8$]. (3) Fit separate OLS regressions to the two groups and obtain the residual sums of squares $RSS_1$ (small $X$ values) and $RSS_2$ (large $X$ values), each with $df = \dfrac{n - c}{2} - k = \dfrac{n - c - 2k}{2}$, where $k$ is the number of estimated parameters. (4) Compute $F = \dfrac{RSS_2/df}{RSS_1/df}$, which follows an F distribution with $(df, df)$ degrees of freedom. If $F_{cal} > F_{tab}$, we may reject the null hypothesis of homoscedasticity and conclude that there is heteroscedasticity in the error variance.
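
A minimal sketch of the Goldfeld–Quandt procedure for one regressor ($k = 2$ parameters per group regression). The names `rss_simple` and `goldfeld_quandt` are hypothetical, the critical value still comes from an F table, and the data are constructed so that the large-$X$ group is much noisier than the small-$X$ group.

```python
def rss_simple(x, y):
    """Residual sum of squares from OLS of y on a constant and x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    intercept = my - slope * mx
    return sum((b - intercept - slope * a) ** 2 for a, b in zip(x, y))


def goldfeld_quandt(x, y, c, k=2):
    """Return (F, df) for the Goldfeld-Quandt test with c omitted central observations.

    k is the number of estimated parameters per group regression
    (2 here: intercept and slope).
    """
    n = len(x)
    if (n - c) % 2:
        raise ValueError("n - c must be even so the two groups are equal in size")
    pairs = sorted(zip(x, y))            # step 1: order by X
    m = (n - c) // 2                     # step 2: size of each outer group
    low, high = pairs[:m], pairs[n - m:]
    rss1 = rss_simple(*zip(*low))        # step 3: small-X group
    rss2 = rss_simple(*zip(*high))       #         large-X group
    df = m - k                           # (n - c)/2 - k
    return (rss2 / df) / (rss1 / df), df  # step 4


# Hypothetical data, listed in reverse X order to exercise the sorting step.
x = [12, 11, 10, 9, 8, 7, 6, 5, 4, 3, 2, 1]
y = [25, 26, 18, 16, 20, 15, 13, 11, 9.5, 6.5, 4.5, 3.5]
F, df = goldfeld_quandt(x, y, c=2)
print(f"F = {F:.1f} with ({df}, {df}) df")  # compare with the F table
```

The toy sample uses $n = 12$ and $c = 2$ only to keep the arithmetic checkable. Here $RSS_1 = 1$, $RSS_2 = 36$ and $F = 36$ on $(3, 3)$ df, well above the 5% critical value of about 9.28.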

- 18. Breusch–Pagan–Godfrey Test. (1) Fit the regression model $Y_i = \beta_1 + \beta_2 X_{2i} + \dots + \beta_k X_{ki} + u_i$ and obtain the residuals $\hat u_i$. (2) Obtain $\tilde\sigma^2 = \sum \hat u_i^2 / n$ and construct $p_i = \hat u_i^2 / \tilde\sigma^2$. (3) Run the auxiliary regression $p_i = \alpha_1 + \alpha_2 X_{2i} + \alpha_3 X_{3i} + \dots + \alpha_m X_{mi} + v_i$. (4) Obtain its explained sum of squares, $ESS = TSS - RSS$ (total sum of squares minus residual sum of squares), and compute $\Theta = ESS/2$, which asymptotically follows $\chi^2_{m-1}$. If $\chi^2_{cal} > \chi^2_{tab}$, we may reject the null hypothesis of homoscedasticity and conclude that there is heteroscedasticity in the error variance.
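
The sketch below applies the Breusch–Pagan–Godfrey steps with a single regressor, so $m - 1 = 1$ and the 5% critical value of $\chi^2_1$ is 3.841. `ols_fit` and `breusch_pagan_godfrey` are hypothetical names; the data are constructed so that the error size grows with $X$.

```python
def ols_fit(x, y):
    """OLS of y on a constant and x: returns (intercept, slope)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return my - slope * mx, slope


def breusch_pagan_godfrey(x, y):
    """Return Theta = ESS / 2 for a single regressor (chi-square with m - 1 = 1 df)."""
    n = len(x)
    b1, b2 = ols_fit(x, y)                                   # step 1: original model
    u2 = [(yi - b1 - b2 * xi) ** 2 for xi, yi in zip(x, y)]
    sigma2_tilde = sum(u2) / n                               # step 2: divide by n
    p = [v / sigma2_tilde for v in u2]
    a1, a2 = ols_fit(x, p)                                   # step 3: auxiliary regression
    p_bar = sum(p) / n
    ess = sum((a1 + a2 * xi - p_bar) ** 2 for xi in x)       # step 4: explained SS
    return ess / 2


x = [k for k in range(1, 6) for _ in range(4)]
err = [k * s for k in range(1, 6) for s in (1, -1, 1, -1)]  # hypothetical errors
y = [1 + 2 * a + e for a, e in zip(x, err)]
theta = breusch_pagan_godfrey(x, y)
CHI2_1DF_5PCT = 3.841  # 5% critical value of chi-square with 1 df
print(f"Theta = {theta:.3f}; reject homoscedasticity: {theta > CHI2_1DF_5PCT}")
```

For these data $\Theta = 720/121 \approx 5.95$, above 3.841, so homoscedasticity is rejected at the 5% level.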

- 19. Detection Methods for Autocorrelation: (1) Durbin–Watson test; (2) runs test.

- 20. Durbin–Watson Test. (1) Fit the model $Y_t = \beta_1 + \beta_2 X_{2t} + \beta_3 X_{3t} + u_t$. (2) Obtain the residuals $\hat u_t$ and their lag $\hat u_{t-1}$. (3) Calculate $d = \dfrac{\sum_{t=2}^{n} (\hat u_t - \hat u_{t-1})^2}{\sum_{t=1}^{n} \hat u_t^2}$; $d$ lies between 0 and 4. (4) For the given sample size and number of regressors, find the critical values $d_L$ and $d_U$ from the Durbin–Watson table.

- 21. Durbin–Watson Decision Rules. $0 < d < d_L$: reject $H_0$, positive autocorrelation; $d_L \le d \le d_U$: inconclusive; $d_U < d < 4 - d_U$: do not reject $H_0$, no evidence of autocorrelation; $4 - d_U \le d \le 4 - d_L$: inconclusive; $4 - d_L < d < 4$: reject $H_0$, negative autocorrelation.
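
The statistic and the five-zone decision rule can be sketched as two small functions. This assumes the residuals come from an already-fitted OLS model and are in time order; `durbin_watson` and `dw_decision` are hypothetical names, and the $d_L$, $d_U$ values in the usage line are placeholders that must be replaced by the table values for your own $n$ and number of regressors.

```python
def durbin_watson(resid):
    """d = sum_{t=2}^{n} (u_t - u_{t-1})^2 / sum_{t=1}^{n} u_t^2."""
    num = sum((resid[t] - resid[t - 1]) ** 2 for t in range(1, len(resid)))
    den = sum(u * u for u in resid)
    return num / den


def dw_decision(d, d_lower, d_upper):
    """Apply the five-zone Durbin-Watson decision rule."""
    if d < d_lower:
        return "reject H0: positive autocorrelation"
    if d <= d_upper:
        return "inconclusive"
    if d < 4 - d_upper:
        return "do not reject H0: no evidence of autocorrelation"
    if d <= 4 - d_lower:
        return "inconclusive"
    return "reject H0: negative autocorrelation"


# Hypothetical residuals from a fitted time-series regression, in time order.
resid = [1.2, 0.8, 1.1, 0.4, -0.3, -0.9, -1.4, -0.6, 0.2, 0.9, 1.3, 0.7, -0.2, -1.1, -1.0]
d = durbin_watson(resid)
# d_L and d_U are placeholders; read the real values from a Durbin-Watson table.
print(round(d, 3), dw_decision(d, d_lower=1.08, d_upper=1.36))
```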

- 22. Runs Test. (1) Fit a regression model and obtain the residuals. (2) Note the signs (+ or −) of the residuals. (3) Count the number of runs $R$, where a run is an uninterrupted sequence of residuals with the same sign and its length is the number of elements in it. Let $N_1$ = number of "+" signs, $N_2$ = number of "−" signs, and $N = N_1 + N_2$. Then the mean is $E(R) = \dfrac{2N_1N_2}{N} + 1$ and the variance is $\sigma_R^2 = \dfrac{2N_1N_2(2N_1N_2 - N)}{N^2(N - 1)}$. (4) Find the 95% confidence interval from $\Pr[E(R) - 1.96\sigma_R \le R \le E(R) + 1.96\sigma_R] = 0.95$.

- 23. Runs Test Decision Rule. $H_0$: no autocorrelation; $H_1$: there is autocorrelation. Do not reject $H_0$ if $R$ lies inside the 95% confidence interval; otherwise reject it. Too few runs suggest positive autocorrelation; too many runs suggest negative autocorrelation.
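
The runs test can be sketched from the residual signs alone. Assumptions: residuals are in time (or space) order, residuals exactly equal to zero are skipped (a convention chosen here, not stated on the slides), and `count_runs` and `runs_test` are hypothetical names.

```python
import math


def count_runs(resid):
    """Return (R, N1, N2) from the residual signs; exact zeros are skipped."""
    signs = [u > 0 for u in resid if u != 0]
    runs = 1 + sum(1 for a, b in zip(signs, signs[1:]) if a != b)
    n1 = sum(signs)                      # number of "+" residuals
    return runs, n1, len(signs) - n1     # N2 = number of "-" residuals


def runs_test(resid, z=1.96):
    """Return (R, E(R), var(R), (lower, upper), decision) for the runs test."""
    r, n1, n2 = count_runs(resid)
    n = n1 + n2
    mean_r = 2 * n1 * n2 / n + 1
    var_r = 2 * n1 * n2 * (2 * n1 * n2 - n) / (n ** 2 * (n - 1))
    sd_r = math.sqrt(var_r)
    lower, upper = mean_r - z * sd_r, mean_r + z * sd_r  # 95% interval for z = 1.96
    if r < lower:
        decision = "reject H0: too few runs, positive autocorrelation"
    elif r > upper:
        decision = "reject H0: too many runs, negative autocorrelation"
    else:
        decision = "do not reject H0: no autocorrelation"
    return r, mean_r, var_r, (lower, upper), decision


resid = [0.5] * 8 + [-0.5] * 8  # hypothetical: 8 positives followed by 8 negatives
print(runs_test(resid)[-1])
```

Here $N_1 = N_2 = 8$, so $E(R) = 9$, while only $R = 2$ runs are observed, well below the lower limit of the interval.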

- 24. Remedial Measures for Multicollinearity: (1) a priori information; (2) combining cross-sectional and time-series data; (3) dropping a variable(s) and specification bias; (4) transformation of variables; (5) additional or new data.

- 25. A Priori Information. Consider the regression model $Y_i = \beta_1 + \beta_2 X_{2i} + \beta_3 X_{3i} + u_i$. Suppose we believe a priori that $\beta_3 = 0.10\beta_2$. Then we run $Y_i = \beta_1 + \beta_2 X_{2i} + 0.10\beta_2 X_{3i} + u_i = \beta_1 + \beta_2 X_i + u_i$, where $X_i = X_{2i} + 0.10 X_{3i}$. Once $\hat\beta_2$ is estimated, $\hat\beta_3 = 0.10\hat\beta_2$.
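
The restricted fit amounts to building the combined regressor and running a simple regression. A minimal sketch, assuming the a priori ratio 0.10 from the slide; `ols_fit` and `a_priori_fit` are hypothetical names and the data are made up so that the restriction holds exactly.

```python
def ols_fit(x, y):
    """OLS of y on a constant and x: returns (intercept, slope)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return my - slope * mx, slope


def a_priori_fit(y, x2, x3, ratio=0.10):
    """Estimate beta1, beta2, beta3 under the restriction beta3 = ratio * beta2."""
    x_combined = [a + ratio * b for a, b in zip(x2, x3)]  # X_i = X_2i + 0.10 X_3i
    b1, b2 = ols_fit(x_combined, y)
    return b1, b2, ratio * b2                              # beta3_hat = 0.10 * beta2_hat


x2 = [1, 2, 3, 4, 5]
x3 = [2, 4, 7, 8, 10]                               # hypothetical, strongly tied to x2
y = [5 + 2 * a + 0.2 * b for a, b in zip(x2, x3)]   # built with beta3 = 0.10 * beta2
print(a_priori_fit(y, x2, x3))  # approximately (5.0, 2.0, 0.2)
```

The estimates are only as good as the prior restriction: if $\beta_3 = 0.10\beta_2$ is wrong, the combined regressor imposes a bias.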

- 26. Combining Cross-sectional and Time-series Data. Suppose we fit the time-series model $\ln Y_t = \beta_1 + \beta_2 \ln P_t + \beta_3 \ln I_t + u_t$, where $P$ and $I$ (e.g. price and income) are highly correlated. If an estimate $\hat\beta_3$ is available from cross-sectional data, we instead fit $Y_t^* = \beta_1 + \beta_2 \ln P_t + u_t$, where $Y^* = \ln Y - \hat\beta_3 \ln I$. $Y_t^*$ represents the value of $Y$ after removing the effect of $I$, which removes the multicollinearity problem.

- 27. Dropping a Variable(s) and Specification Bias. Consider the regression model $Y_i = \beta_1 + \beta_2 X_{2i} + \beta_3 X_{3i} + u_i$. If the regressors are highly correlated, drop one of them and fit a model without the multicollinearity problem. However, if the dropped variable truly belongs in the model, its omission causes specification bias in the remaining estimates.

- 28. Transformation of Variables. For the time-series regression model $Y_t = \beta_1 + \beta_2 X_{2t} + \beta_3 X_{3t} + u_t$, the same model at time $t-1$ is $Y_{t-1} = \beta_1 + \beta_2 X_{2,t-1} + \beta_3 X_{3,t-1} + u_{t-1}$. Subtracting the second from the first gives $Y_t - Y_{t-1} = \beta_2 (X_{2t} - X_{2,t-1}) + \beta_3 (X_{3t} - X_{3,t-1}) + v_t$, where $v_t = u_t - u_{t-1}$. This first-difference model often reduces the multicollinearity problem, because the differences of the regressors need not be highly correlated even when their levels are.

- 29. Additional or New Data. Multicollinearity is a sample feature, so collecting additional observations on the same variables (or a new sample) can help. As the sample size increases, the multicollinearity problem may become less severe.

- 30. Remedial Measures for Heteroscedasticity. There are two approaches to remediation: when $\sigma_i^2$ is known and when $\sigma_i^2$ is unknown.

- 31. When $\sigma_i^2$ Is Known: Method of Weighted Least Squares. Consider the model $Y_i = \beta_1 + \beta_2 X_{2i} + \beta_3 X_{3i} + u_i$. If $V(u_i) = \sigma_i^2$, heteroscedasticity is present. Dividing through by $\sigma_i$ gives the model to run: $\dfrac{Y_i}{\sigma_i} = \beta_1^* \dfrac{1}{\sigma_i} + \beta_2^* \dfrac{X_{2i}}{\sigma_i} + \beta_3^* \dfrac{X_{3i}}{\sigma_i} + \dfrac{u_i}{\sigma_i}$. The transformed error $u_i^* = u_i/\sigma_i$ then has constant variance: $V(u_i^*) = V\left(\dfrac{u_i}{\sigma_i}\right) = \dfrac{1}{\sigma_i^2} V(u_i) = \dfrac{1}{\sigma_i^2}\sigma_i^2 = 1$.
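
To keep the algebra small, the sketch below applies the weighting to the two-variable model $Y_i = \beta_1 + \beta_2 X_i + u_i$, so the transformed regression (through the origin, on $1/\sigma_i$ and $X_i/\sigma_i$) reduces to a 2×2 system of normal equations. The $\sigma_i$ are assumed known, as on the slide; `wls_known_sigma` is a hypothetical name and all numbers are made up.

```python
def wls_known_sigma(x, y, sigma):
    """Weighted least squares for Y = b1 + b2 X + u with known sd(u_i) = sigma_i.

    Regresses Y/sigma on 1/sigma and X/sigma with no intercept,
    solving the 2x2 normal equations directly.
    """
    w1 = [1 / s for s in sigma]                      # transformed constant
    w2 = [xi / s for xi, s in zip(x, sigma)]         # transformed regressor
    ys = [yi / s for yi, s in zip(y, sigma)]         # transformed dependent variable
    a = sum(v * v for v in w1)
    b = sum(u * v for u, v in zip(w1, w2))
    c = sum(v * v for v in w2)
    d1 = sum(u * v for u, v in zip(w1, ys))
    d2 = sum(u * v for u, v in zip(w2, ys))
    det = a * c - b * b
    return (c * d1 - b * d2) / det, (a * d2 - b * d1) / det   # (b1_hat, b2_hat)


x = [1, 2, 3, 4, 5, 6]
sigma = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]   # hypothetical known error standard deviations
y = [3.1, 4.4, 8.1, 8.2, 12.9, 11.8]     # hypothetical observations
print(wls_known_sigma(x, y, sigma))
```

Observations with large $\sigma_i$ receive small weight; when every $\sigma_i$ is equal, the result coincides with ordinary least squares.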

- 32. When $\sigma_i^2$ Is Unknown. We make a plausible assumption about the pattern of heteroscedasticity and transform the model accordingly: (1) if the error variance is proportional to $X_i^2$, $E(u_i^2) = \sigma^2 X_i^2$, divide the model through by $X_i$; (2) if it is proportional to $X_i$, $E(u_i^2) = \sigma^2 X_i$, divide through by $\sqrt{X_i}$; (3) if it is proportional to the square of the mean value of $Y$, $E(u_i^2) = \sigma^2 [E(Y_i)]^2$, divide through by $\hat Y_i$ as an estimate of $E(Y_i)$; (4) a log transformation such as $\ln Y_i = \beta_1 + \beta_2 \ln X_i + u_i$ often reduces heteroscedasticity.
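
For the pattern $E(u_i^2) = \sigma^2 X_i^2$, dividing $Y_i = \beta_1 + \beta_2 X_i + u_i$ by $X_i$ gives $\dfrac{Y_i}{X_i} = \beta_2 + \beta_1 \dfrac{1}{X_i} + \dfrac{u_i}{X_i}$, so the intercept of the transformed regression estimates $\beta_2$ and its slope estimates $\beta_1$. A minimal sketch, assuming $X_i > 0$ and that this variance pattern is right; `fit_variance_prop_x2` is a hypothetical name and the data are made up.

```python
def fit_variance_prop_x2(x, y):
    """Estimate Y = b1 + b2 X + u when E(u_i^2) = sigma^2 X_i^2 (X must be positive).

    Transformed model: Y/X = b2 + b1 (1/X) + u/X, whose error is homoscedastic.
    """
    inv_x = [1 / a for a in x]
    y_over_x = [b / a for a, b in zip(x, y)]
    n = len(x)
    m_inv, m_yx = sum(inv_x) / n, sum(y_over_x) / n
    slope = (sum((u - m_inv) * (v - m_yx) for u, v in zip(inv_x, y_over_x))
             / sum((u - m_inv) ** 2 for u in inv_x))
    intercept = m_yx - slope * m_inv
    return slope, intercept  # (estimate of b1, estimate of b2): roles swap


x = [1, 2, 4, 5, 8, 10]
y = [4.6, 4.9, 6.2, 6.1, 8.5, 8.7]  # hypothetical observations
print(fit_variance_prop_x2(x, y))
```

The swap of roles is the point to watch: after the transformation, the original slope $\beta_2$ becomes the intercept.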

- 33. Remedial Measures for Autocorrelation: (1) first-difference transformation; (2) generalized transformation; (3) Newey–West method.

- 34. First-difference Transformation. If the autocorrelation is of AR(1) type, we have $u_t = \rho u_{t-1} + v_t$, i.e. $u_t - \rho u_{t-1} = v_t$. Assume $\rho = 1$ and run the first-difference model, taking the first difference of the dependent variable and of all regressors: $Y_t - Y_{t-1} = \beta_2 (X_t - X_{t-1}) + (u_t - u_{t-1})$. Note that this model has no intercept.
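
Because $\beta_1$ cancels, the first-difference model is a regression through the origin, and its slope has a one-line formula. A minimal sketch for one regressor; `first_difference_slope` is a hypothetical name and the series are made up.

```python
def first_difference_slope(x, y):
    """Estimate beta2 in (Y_t - Y_{t-1}) = beta2 (X_t - X_{t-1}) + error, no intercept."""
    dx = [b - a for a, b in zip(x, x[1:])]   # X_t - X_{t-1}
    dy = [b - a for a, b in zip(y, y[1:])]   # Y_t - Y_{t-1}
    return sum(p * q for p, q in zip(dx, dy)) / sum(p * p for p in dx)


x = [2, 3, 5, 4, 7, 8, 10]                    # hypothetical series, in time order
y = [16.2, 19.1, 25.3, 22.0, 31.4, 34.1, 40.2]
print(first_difference_slope(x, y))
```

One observation is lost in differencing, and $\beta_1$ cannot be recovered from the differenced model.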

- 35. Generalized Transformation. Estimate $\rho$ by regressing the residuals on the lagged residuals, then use that value to run the transformed regression. Starting from $Y_t = \beta_1 + \beta_2 X_t + u_t$, the model at time $t-1$ is $Y_{t-1} = \beta_1 + \beta_2 X_{t-1} + u_{t-1}$; multiplying it by $\rho$ gives $\rho Y_{t-1} = \rho\beta_1 + \rho\beta_2 X_{t-1} + \rho u_{t-1}$. Subtracting: $Y_t - \rho Y_{t-1} = \beta_1(1 - \rho) + \beta_2(X_t - \rho X_{t-1}) + (u_t - \rho u_{t-1})$, where the new error $u_t - \rho u_{t-1} = v_t$ is free of AR(1) autocorrelation.
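
The two-step procedure can be sketched as follows; it is essentially one iteration of the Cochrane–Orcutt method, which drops the first observation (the Prais–Winsten variant keeps it with a rescaling). `estimate_rho`, `generalized_difference` and `two_step_fgls` are hypothetical names, and the data are made up.

```python
def ols_fit(x, y):
    """OLS of y on a constant and x: returns (intercept, slope)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return my - slope * mx, slope


def estimate_rho(resid):
    """OLS through the origin of u_t on u_{t-1}."""
    num = sum(resid[t] * resid[t - 1] for t in range(1, len(resid)))
    den = sum(resid[t - 1] ** 2 for t in range(1, len(resid)))
    return num / den


def generalized_difference(x, y, rho):
    """Fit Y_t - rho Y_{t-1} = b1 (1 - rho) + b2 (X_t - rho X_{t-1}); return (b1, b2).

    The first observation is lost in the transformation.
    """
    x_star = [x[t] - rho * x[t - 1] for t in range(1, len(x))]
    y_star = [y[t] - rho * y[t - 1] for t in range(1, len(y))]
    a, b2 = ols_fit(x_star, y_star)
    return a / (1 - rho), b2   # recover b1 from the intercept b1 (1 - rho)


def two_step_fgls(x, y):
    """Step 1: OLS residuals -> rho; step 2: generalized-difference regression."""
    b1, b2 = ols_fit(x, y)
    resid = [yi - b1 - b2 * xi for xi, yi in zip(x, y)]
    rho = estimate_rho(resid)
    return rho, generalized_difference(x, y, rho)


x = list(range(1, 11))                                     # hypothetical time index
y = [3.1, 3.9, 4.8, 5.2, 5.6, 6.3, 7.2, 7.4, 7.9, 8.8]     # hypothetical observations
print(two_step_fgls(x, y))
```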

- 36. Newey–West Method. This method generates HAC (heteroscedasticity- and autocorrelation-consistent) standard errors. The OLS coefficient estimates themselves are unchanged; only their standard errors are corrected, and the correction is justified in large samples.
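
For a single regressor, the Newey–West standard error of the OLS slope can be written out by hand with Bartlett weights $1 - \ell/(L+1)$ for lags $\ell = 1, \dots, L$. This sketch applies no small-sample degrees-of-freedom correction, and `newey_west_se_slope` and the lag choice are illustrative assumptions.

```python
import math


def newey_west_se_slope(x, y, max_lag):
    """HAC (Newey-West, Bartlett weights) standard error of the OLS slope.

    Model: Y_t = b1 + b2 X_t + u_t, observations in time order.
    No small-sample degrees-of-freedom correction is applied.
    """
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    xd = [a - mx for a in x]                                  # demeaned regressor
    sxx = sum(v * v for v in xd)
    b2 = sum(v * (b - my) for v, b in zip(xd, y)) / sxx
    b1 = my - b2 * mx
    g = [v * (b - b1 - b2 * a) for v, a, b in zip(xd, x, y)]  # x_t * u_hat_t
    s = sum(v * v for v in g)                                 # lag-0 (White) term
    for lag in range(1, max_lag + 1):
        weight = 1 - lag / (max_lag + 1)                      # Bartlett kernel
        s += 2 * weight * sum(g[t] * g[t - lag] for t in range(lag, n))
    return b2, math.sqrt(s) / sxx                             # slope, HAC standard error


x = [1, 2, 3, 4]
y = [2, 3, 7, 8]  # hypothetical series, in time order
for lag in (0, 1):
    print(lag, newey_west_se_slope(x, y, lag))
```

With `max_lag=0` the formula collapses to White's heteroscedasticity-robust standard error. In practice a package would normally be used; statsmodels, for example, offers this through `OLS(...).fit(cov_type="HAC", cov_kwds={"maxlags": L})`.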

- 37. Plot Explanation: Multicollinearity. From the scatter-plot matrix, we suspect a multicollinearity problem between the first and second independent variables because they are highly correlated.

- 38. Plot Explanation: Heteroscedasticity. The figure illustrates the typical pattern of the residuals when the error term is homoscedastic.

- 39. Plot Explanation: Heteroscedasticity. In this figure, each plot exhibits potential heteroscedasticity, with a different relationship between the residual variance (squared residuals) and the values of the independent variable X.

- 40. Plot Explanation: Heteroscedasticity. The graph of residuals against fitted values shows a funnel shape, which indicates the presence of heteroscedasticity.

- 41. Plot Explanation: Autocorrelation. The plot of residuals against time shows a cyclical pattern, so we suspect positive autocorrelation.

- 42. Plot Explanation: Autocorrelation. The plot of residuals against time shows the disturbances alternating up and down from one period to the next, so we suspect negative autocorrelation.

- 43. Plot Explanation: Autocorrelation. The plot of residuals against time shows a random scatter, so we suspect no autocorrelation.

- 44. Any queries?
