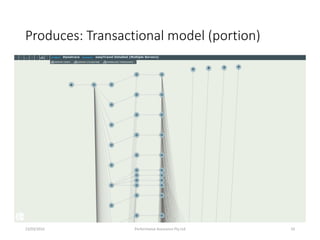

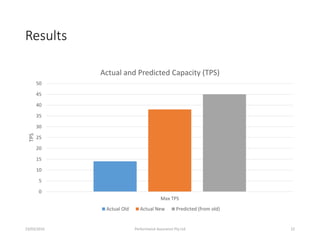

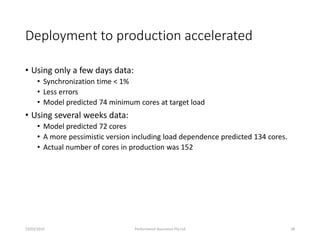

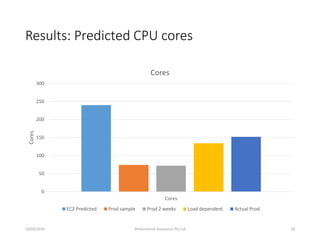

This document describes Performance Assurance Pty Ltd's work on building performance models automatically from application performance management (APM) data. It outlines the challenges of building models by hand and presents an automated approach that uses APM data to produce performance models that are more accurate, and faster and cheaper to build, with examples drawn from migration and DevOps projects. The key finding is that automated modeling can predict application performance and resource requirements, which meets the needs of agile software development environments.