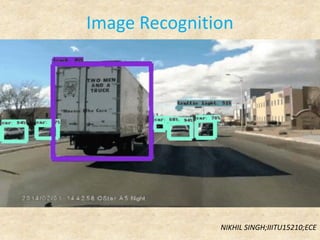

Image recognition

- 2. What is image recognition?
  - Image recognition is a technology that aims to acquire, process, analyse and understand images and other high-dimensional data from the real world in order to produce numerical or symbolic information
  - In other words, it is the process of identifying and detecting an object or a feature in a digital image or video
  - It is a core task of computer vision, and the two terms are often used interchangeably

- 3. Why do we need image recognition?
  - Image recognition is a vital component in robotics, such as driverless vehicles and domestic robots. It is also important in security systems, for example face recognition
  - Image search engines such as Google or Bing image search use rich image content to query for similar images. Google Photos, for instance, uses image recognition to sort your images into categories such as cats, dogs and people
  - Medical imaging uses it to assist doctors, for example in detecting cancer in x-ray images
  - Robotic navigation systems use it to track the motion of objects and for camera tracking
  - Marketers use it to optimize their marketing strategies. With logo detection they gain brand insights, data and metrics that would not be available without image recognition technology
  - Automatic panorama stitching, used in commercial software such as Adobe Photoshop, recovers 3D camera rotations and camera distortion matrices in order to align images into very wide-angle panoramas

- 4. Why do we need image recognition?
  - With image recognition and logo detection, marketers can track how well a sponsorship is doing, which makes it much easier to estimate how much revenue it will return
  - Over 85% of logos within images posted to social media come without any tag or text mention of the brand

- 5. How does image recognition work?
  - Image recognition using machine learning: key features are identified and extracted from images and then used as input to a machine learning model
  - Image recognition using deep learning: a convolutional neural network may be used to learn relevant features automatically from sample images and to identify those features in new images

  Figure: image recognition using machine learning
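
The machine learning route described on this slide (extract features first, then feed them to a model) can be sketched in a few lines. This is a toy illustration under stated assumptions: the two features (mean brightness and horizontal edge strength), the nearest-centroid model, and the tiny synthetic images are all hypothetical choices, not taken from the slides.

```python
# Toy sketch: hand-crafted features followed by a nearest-centroid classifier.
# Feature choices, function names, and data are hypothetical illustrations.

def extract_features(image):
    """Return (mean brightness, mean horizontal edge strength) for a grayscale image."""
    pixels = [p for row in image for p in row]
    mean_brightness = sum(pixels) / len(pixels)
    diffs = [abs(row[i + 1] - row[i]) for row in image for i in range(len(row) - 1)]
    edge_strength = sum(diffs) / len(diffs)
    return (mean_brightness, edge_strength)

def train_centroids(labelled_images):
    """Average the feature vectors of each class to get one centroid per label."""
    sums, counts = {}, {}
    for image, label in labelled_images:
        features = extract_features(image)
        totals = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            totals[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: tuple(v / counts[label] for v in totals)
            for label, totals in sums.items()}

def classify(image, centroids):
    """Pick the label whose centroid is closest to the image's features."""
    features = extract_features(image)

    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))

# Two synthetic 4x4 grayscale training images.
flat = [[100, 100, 100, 100]] * 4
striped = [[0, 255, 0, 255]] * 4

centroids = train_centroids([(flat, "flat"), (striped, "striped")])
prediction = classify([[10, 240, 10, 240]] * 4, centroids)
print(prediction)
```

In the deep learning route, the `extract_features` step would not be written by hand; the network would learn its own features from sample images.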

- 6. Machine Learning vs Deep Learning
  - Machine learning uses algorithms to parse data, learn from that data, and make informed decisions based on what it has learned
  - Deep learning structures algorithms in layers to create an artificial “neural network” that can learn and make intelligent decisions on its own
  - Deep learning is a subfield of machine learning. Both fall under the broad category of artificial intelligence, but deep learning is the term most often associated with the more human-like forms of artificial intelligence

  Figure: image recognition using deep learning

- 7. Neural Network
  - A neural network is a system of interconnected artificial “neurons” that exchange messages with each other
  - The connections have numeric weights that are tuned during the training process, so that a properly trained network responds correctly when presented with an image or pattern to recognize
  - The network consists of multiple layers of feature-detecting “neurons”. Each layer has many neurons that respond to different combinations of inputs from the previous layers
  - Typical CNNs use 5 to 25 distinct layers of pattern recognition
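
To make the idea of weighted connections and layers concrete, here is a minimal sketch of a forward pass through a two-layer network. The weights are hand-picked, hypothetical values; in practice they would be tuned during training, which is omitted here. The sigmoid activation is also an assumption, chosen only for simplicity.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases):
    """Each neuron takes a weighted sum of all inputs, adds its bias, then applies sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]

# Hypothetical weights: one row per neuron, one column per input.
hidden_weights = [[1.0, -1.0], [-1.0, 1.0]]
hidden_biases = [0.0, 0.0]
output_weights = [[2.0, -2.0]]
output_biases = [0.0]

def network(inputs):
    """Pass the inputs through the hidden layer, then through the output layer."""
    hidden = layer_forward(inputs, hidden_weights, hidden_biases)
    return layer_forward(hidden, output_weights, output_biases)[0]

# The output rises above 0.5 when the first input dominates and falls below otherwise.
print(network([1.0, 0.0]))
print(network([0.0, 1.0]))
```

Each neuron in the second layer responds to a combination of the first layer's outputs, which is the layering described on the slide.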

- 9. Understanding CNN
  - First, the computer tries to identify very simple aspects of the image: lines, edges, corners, blobs, etc. Using that information, it builds up to slightly more complex shapes: squares, circles, triangles
  - After a few more layers, it starts to recognize high-level features such as eyes, nose, mouth, etc. Finally, by putting all the pieces together, it computes a probability score for each class of object the image could belong to (e.g., cat, dog, bird)
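
The final step, turning the network's raw outputs into one probability score per class, is commonly done with a softmax function. The slides do not name the function, so softmax is an assumption here, and the raw scores below are made-up values.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    largest = max(scores)  # subtracting the maximum avoids overflow in exp
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

classes = ["cat", "dog", "bird"]
raw_scores = [2.0, 1.0, 0.1]  # hypothetical outputs of the last layer

probabilities = softmax(raw_scores)
best = classes[probabilities.index(max(probabilities))]

for name, p in zip(classes, probabilities):
    print(f"{name}: {p:.3f}")
print("prediction:", best)
```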

- 10. Understanding CNN
  - The computer sees the image as an array of pixel values. Let’s say the cat image we saw earlier is of size 10x10x3 (where 3 represents the three RGB values). Then the pixel value representation, for one of the 3 RGB color channels, would look something like this:
  - Then, it scans the entire image many times, each time looking for one specific feature
  - There are a few patterns that the computer is interested in: blobs, circles, colors, and edges. It prepares a few reference objects, each representing a blob, a circle, a color, an edge, etc. It places a reference object on the image and scans over the image, looking for areas of overlap between the reference and the scanned region
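
As a rough illustration of the 10x10x3 representation, the sketch below builds a synthetic image as nested lists and pulls out a single color channel. The pixel values are invented for the example and do not come from the cat image on the slide.

```python
HEIGHT, WIDTH, CHANNELS = 10, 10, 3

# Synthetic image: image[row][col] is a list [R, G, B] with values in 0..255.
image = [
    [[row * 25, col * 25, 128] for col in range(WIDTH)]
    for row in range(HEIGHT)
]

def channel(image, index):
    """Return one color channel (0 = R, 1 = G, 2 = B) as a 2D grid of values."""
    return [[pixel[index] for pixel in row] for row in image]

red = channel(image, 0)

# Print the red channel as a 10x10 grid of numbers.
for row in red:
    print(" ".join(f"{value:3d}" for value in row))
```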

- 11. Understanding CNN
  - This is how the computer looks for areas of overlap between the reference and the scanned region
  - In deep learning, this “reference object” is called a filter (also referred to as a kernel), and the part of the image it is being compared to is called a receptive field
  - If I have a filter that tries to identify round shapes, then my filter might look like this:
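
As a hedged illustration, a round-shape filter and a receptive field could be represented as follows. The 5x5 ring pattern, the 7x7 synthetic image, and the helper name `receptive_field` are assumptions made for this sketch; they are not the filter shown in the original figure.

```python
# Hypothetical 5x5 filter: 1s trace a ring, 0s are ignored.
ring_filter = [
    [0, 1, 1, 1, 0],
    [1, 0, 0, 0, 1],
    [1, 0, 0, 0, 1],
    [1, 0, 0, 0, 1],
    [0, 1, 1, 1, 0],
]

def receptive_field(image, top, left, size):
    """Return the size x size patch of the image whose top-left corner is (top, left)."""
    return [row[left:left + size] for row in image[top:top + size]]

# Synthetic 7x7 image that contains the same ring, shifted by one pixel.
image = [[0] * 7 for _ in range(7)]
for r, filter_row in enumerate(ring_filter):
    for c, value in enumerate(filter_row):
        image[r + 1][c + 1] = value

# The receptive field at (1, 1) lines up exactly with the ring.
patch = receptive_field(image, 1, 1, 5)
print(patch == ring_filter)
```

The filter has the same size as the receptive field, so the two can be compared value by value.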

- 12. Understanding CNN
  - Applying this filter to a part of the image: the dot product between the filter and the receptive field measures how much they overlap
  - Sliding a filter across the whole image produces a grid of these overlap scores, which is called an activation map
  - Once the activation maps for the other filters (colors, blobs, edges) have been computed, the first convolutional layer is complete
  - A single layer of filters is not enough to identify more complex features, so the process repeats and further convolutional layers are formed
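
The overlap computation and the resulting activation map can be sketched as below. The 3x3 filter and 5x5 image are hypothetical, and the sliding window uses a stride of 1 with no padding, which is an assumption. Strictly speaking this is a cross-correlation, which is what CNN layers typically compute.

```python
def overlap(kernel, patch):
    """Dot product of the filter and a same-sized receptive field."""
    return sum(
        k * p
        for kernel_row, patch_row in zip(kernel, patch)
        for k, p in zip(kernel_row, patch_row)
    )

def activation_map(image, kernel):
    """Slide the filter over every position of the image and record the overlap score."""
    size = len(kernel)
    out_rows = len(image) - size + 1
    out_cols = len(image[0]) - size + 1
    result = []
    for top in range(out_rows):
        out_row = []
        for left in range(out_cols):
            patch = [row[left:left + size] for row in image[top:top + size]]
            out_row.append(overlap(kernel, patch))
        result.append(out_row)
    return result

# Hypothetical 3x3 filter for a small round outline.
kernel = [
    [0, 1, 0],
    [1, 0, 1],
    [0, 1, 0],
]

# Synthetic 5x5 image with the same outline in the centre.
image = [
    [0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 1, 0, 1, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0],
]

amap = activation_map(image, kernel)
for row in amap:
    print(row)
```

The highest score sits at the centre of the map, where the filter and the outline line up exactly.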

- 13. Practical Applications
  - Medical imaging:
    - Cancer detection (used extensively)
    - Retinopathy
  - Industrial applications:
    - Fault detection in manufacturing

- 14. Practical Applications
  - Security:
    - Face and fingerprint recognition
  - Creative media:
    - DeepDream
    - Human-computer interfaces

- 15. Practical Applications
  - Geographic information systems:
    - Terrain classification
    - Meteorology
  - Astronomy:
    - Enhancement of telescopic images
    - Recognition of astronomical bodies

- 16. Future Prospect and Conclusion
  - Google self-driving cars
  - Fully automated machinery in factories
  - Space exploration
  - AI-powered robots
  - ATMs based on face recognition

  Image recognition is a fast-developing field with much still to explore and a wide range of practical applications, including industrial, scientific and medical ones. It has a lot of potential for development and implementation in new areas such as space exploration, signal image processing and the wider field of computer vision.
