Evaluation Question 3

- 1. What have you learned from your audience feedback?

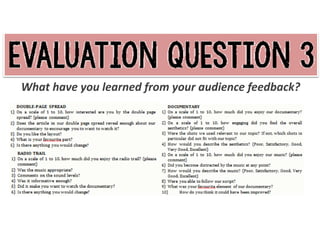

- 2. Why is it important to gain audience feedback on a production?

  - Future reference: constructive criticism for our own learning or future careers.
  - To discover which elements other people view as particular strengths (for our own confidence and satisfaction) and which areas need improving (so we know what we would do next time to succeed further).
  - It helps considerably with our own evaluation.

  Aims and Objectives:

  - To gain other people’s opinions of our product (media students are informed and knowledgeable about the subject, so their feedback was useful, constructive, valuable and reliable).
  - To gather specific ideas of how we could have improved our piece, giving me confidence in the strengths and constructive criticism of the weaknesses.

  Methods:

  Questionnaire: I wrote a series of questions for each ancillary task, using both open and closed questions to provoke a variety of responses.

  - Open: “What is your favourite part of our documentary?” Evaluation of method: open questions give detailed answers and a chance for the focus group to express opinions, but they are more difficult to analyse, interpret and compare (and difficult to categorise or put into graphs). They are definitely more useful for gaining views on specific strengths and weaknesses.
  - Closed: “On a scale of 1 to 10, how much did the double-page spread appeal to you?” Evaluation of method: closed questions give specific results that are not detailed and are therefore easy to analyse, interpret and compare.

- 4. On a scale of 1-10, how interested are you by the double-page spread?

  [Bar chart: Mean, Median and Mode of the scores, on an axis from 0 to 10]

  This question provoked a positive response from our focus group, with the majority of people giving the interest of our double-page spread a score of 8 out of 10. A particular area of interest was the images used: “good pictures”. I tried a range of graphs to represent these results visually and settled on a simple bar chart because it is clear and the data is easy to interpret from it. The range of the scores was 5, which I consider good dispersion, as no one gave a score lower than 5.

  Do you like the layout?

  [Chart: Yes / No]

  Another particular appeal of our double-page spread was the layout. Of the 15 people we asked, all of them liked the layout, giving us 100% positive feedback for this question. We received a range of positive comments, including “eye catching” and “black and white contrasting text box relates to the topic really well”. We tried to be more adventurous with the layout, taking the risk of challenging the colour conventions of Radio Times, so we were very pleased with this feedback.

  Is there anything you would change?

  [Pie chart: Main Image, Spelling, Layout, None]

  This question gathered a range of responses, from “The main photograph” to the spelling, giving us qualitative data. To transfer our findings into a graph, I conducted content analysis: I narrowed the responses down into 4 categories, tallied how many answers fell into each group, and converted the tallies into percentages to create the pie chart. The most common answer was Main Image, which I can understand because, although it shows a college, which creates familiarity for our audience, it does not represent the topic.
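The tally-and-percentage step of the content analysis described above could be sketched in Python roughly as follows. This is only an illustration: the list of coded responses is hypothetical (the slide does not give the actual tallies, only that Main Image was the most common answer among 15 people), and the function name `tally_percentages` is invented for this example.

```python
from collections import Counter

# HYPOTHETICAL coded responses, one per participant (15 in total).
# These are NOT the real questionnaire results; they only illustrate the method.
coded_responses = (
    ["Main Image"] * 6
    + ["None"] * 5
    + ["Spelling"] * 2
    + ["Layout"] * 2
)


def tally_percentages(coded):
    """Tally how many answers fall into each category, then convert to percentages."""
    counts = Counter(coded)
    total = sum(counts.values())
    # most_common() orders categories from the largest tally to the smallest.
    return {
        category: round(100 * count / total, 1)
        for category, count in counts.most_common()
    }


percentages = tally_percentages(coded_responses)
for category, percent in percentages.items():
    print(f"{category}: {percent}%")
```

The resulting percentages are the values that would be fed into a pie chart; the categorising itself (deciding which group each written answer belongs to) remains a manual judgement and is not automated here.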

- 6. Was the music appropriate?

  [Chart: Yes / No]

  3 members of our focus group felt the music was not appropriate for the radio trail. One questionnaire included the comment “bit dull”, telling us that we could have created a more upbeat track. However, the remaining 12 felt the music was appropriate, so overall we are pleased with the music we created. If we did it again, we would add engagement by changing the music slightly and adding more variation of sound.

  Comments on the sound levels?

  [Chart: Good, Satisfactory, Average, Poor]

  To enable me to apply the responses from this question to a graph, I tallied the number of times each category was mentioned, then calculated the percentage for each. Over 50% of our focus group thought the sound levels were good, yet 1 person felt the sound was poor, which I treated as an anomaly because it was an isolated result. I feel the sound levels were good, so I am pleased with these results.

  Was it informative enough?

  [Chart: Yes / No, with 100% answering Yes]

  On this question we gained 100% positive feedback, as each of the 15 people we asked felt that our radio trail revealed enough information about our documentary. Whereas some commented that our radio trail “gave a clear idea of what the documentary was about” and was “very informative”, others argued that it was perhaps too informative. These results are great to see. If I were to create another radio trail, I would include more extracts from our documentary rather than relying mostly on voice-over, to remove some of this detail and add engagement.

- 8. On a scale of 1-10, how much did you enjoy our documentary?

  To calculate the Mean, Median and Mode of the responses to this question, I created a table in Microsoft Excel and recorded each score and its frequency. For the Mean, I multiplied each score by its frequency, added the results together and divided the total by the number of people (15), giving a result of 8. To calculate the Median, I found where the halfway point of the ordered responses fell (8). The most common scores (Mode) were 7 and 9, which I averaged to 8, and the range was 3. I was very pleased with these findings, as no one scored the enjoyment of our documentary less than 7 and our highest score was a perfect 10. Each measure of central tendency was 8, which is a very high score, giving me confidence that our focus group felt our documentary was of a high standard.

  How would you describe the overall aesthetics?

  [Pie chart: Excellent, Very Good, Good, Satisfactory, Poor]

  Whilst recording the results of the questionnaire, I was very pleased to find that the answers to this question were particularly positive, with 60% of our focus group saying ‘Very Good’ and 33% saying ‘Good’. The remaining person thought the aesthetics were ‘Excellent’, which was great to see because the visuals were the main focus and I had worked hard to manipulate them in order to engage the audience. I had made the question more specific by creating these categories myself, so people could choose which of them their view fell into. This proved very efficient, as the answers were less detailed, so recording the responses and transferring them into a pie chart was a quick and easy process.
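The Mean, Median, Mode and range calculation described above was done in Microsoft Excel; an equivalent calculation could be sketched in Python as below. The score frequencies are an assumption: the slide does not list them, so this hypothetical table was chosen only to be consistent with the reported figures (15 people, lowest score 7, highest 10, joint modes of 7 and 9, median 8, and a mean that rounds to 8). The function name `summarise` is likewise invented for this example.

```python
from statistics import median, multimode

# HYPOTHETICAL score -> frequency table (NOT the real questionnaire data).
frequencies = {7: 5, 8: 4, 9: 5, 10: 1}


def summarise(freq):
    """Compute mean, median, mode(s) and range from a score -> frequency table."""
    total_people = sum(freq.values())
    # Mean: sum of (score x frequency), divided by the number of people.
    weighted_total = sum(score * count for score, count in freq.items())
    # Expand the table into one ordered entry per person for the other measures.
    scores = sorted(score for score, count in freq.items() for _ in range(count))
    return {
        "n": total_people,
        "mean": weighted_total / total_people,
        "median": median(scores),        # middle value of the ordered scores
        "modes": multimode(scores),      # every score tied for most frequent
        "range": max(scores) - min(scores),
    }


summary = summarise(frequencies)
print(summary)
```

With two joint modes, `multimode` reports both values rather than a single figure; averaging them to 8, as in the text above, is a further step taken by the author and is not performed by this sketch.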

- 9. How would you describe the music?

  [Chart: Excellent, Very Good, Good, Satisfactory, Poor]

  I applied the same categories to this question to gain a more specific response and to make the questionnaire easier and less time-consuming for our focus group, encouraging them to answer truthfully rather than feeling the need to rush and choose a random answer. This question also provoked a particularly positive response. Although 1 person thought the music was ‘Poor’, this result was an anomaly. 20% of our focus group chose ‘Excellent’, 60% chose ‘Very Good’ and 13% chose ‘Good’. I am especially pleased with these results, as I was worried that the music was not up to the standard I would have liked due to the lack of time we left to create it. The findings therefore surprised me, but I am exceptionally happy with the responses.

  How do you think it could have been improved?

  [Chart: None, Quality of Voice Over, More Interviews/Vox Pops, Wider Variation of Shots, Sound Levels, Music]

  The responses to this question varied, so I created 6 categories to tally the answers into, which worked well as each answer fitted into one of the categories. Surprisingly, the modal answer was ‘None’, as 5 people couldn’t find any specific improvements they would have made. This could have been due to rushing, since it was the last question, but nonetheless I was very pleased with this result. I was also surprised to find that one response suggested that we should have included a wider range of shots, since all the results from the question ‘How would you describe the overall aesthetics?’ were above average. Even so, I understand that we could have used a wider variety of shot types (close-ups, medium shots, long shots) and angles (high angle, low angle), so I will take this on board. Other suggested improvements include: quality of voice-over, more interviews/vox pops, sound levels and music.

- 10. What issues did you face during audience feedback?

  - People may not have been truthful because they wanted to conform to the mainstream or most popular view.
  - Some of the answers to the open questions seemed rushed, with less detail than desired.
  - Participants in self-report methods such as interviews or questionnaires are able to answer in a certain way to manipulate results (either negatively, to spite the researcher, or positively, to improve results).
  - It is difficult to record, analyse and compare qualitative data from open questions.

  How did you manage to overcome these issues?

  The members of our focus group were people from our media class who had completed the same tasks, so they knew what the aim of our research was. They may therefore have chosen to answer in a certain way rather than expressing their own true opinions. We could not have avoided this, so, to make answers more specific and encourage our focus group to answer truthfully, I created a mixture of open and closed questions. This meant the participants could go into detail if they wished, but also didn’t have to spend a lot of time filling the questionnaire out. I overcame the difficulty of recording, analysing and comparing qualitative data from open questions by conducting content analysis: categorising the responses and tallying how many answers fell into each group.

  How would you alter or improve the methods you used to gather audience feedback?

  A focus group consisting of media students watched our documentary and ancillary tasks and filled out a questionnaire we had made, commenting on elements based on the audio, visual and technical coding. I would have liked to create a focus group that included a wider range of people, some media students and some who do not know a lot about the subject, in order to provoke a wider range of responses. If I had had more time, I would have interviewed individuals and recorded their responses visually in order to avoid some of the issues that arose during the questionnaire (e.g. people would be more likely to be truthful in an interview due to being under more pressure).