ACL-WMT13 poster: Quality Estimation for Machine Translation Using the Joint Method of Evaluation Criteria and Statistical Modeling


Quality Estimation for Machine Translation Using the Joint Method of Evaluation Criteria and Statistical Modeling. Publisher: Association for Computational Linguistics, 2013. Authors: Aaron Li-Feng Han, Yi Lu, Derek F. Wong, Lidia S. Chao, Yervant Ho, Anson Xing. Proceedings of the ACL 2013 Eighth Workshop on Statistical Machine Translation (ACL-WMT 2013), 8-9 August 2013, Sofia, Bulgaria. Open tool: https://github.com/aaronlifenghan/aaron-project-ebleu (ACM Digital Library, ACL Anthology)


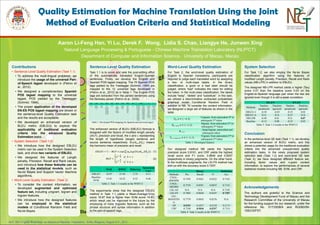

Quality Estimation for Machine Translation Using the Joint Method of Evaluation Criteria and Statistical Modeling

Aaron Li-Feng Han, Yi Lu, Derek F. Wong, Lidia S. Chao, Liangye He, Junwen Xing

Natural Language Processing & Portuguese-Chinese Machine Translation Laboratory (NLP2CT), Department of Computer and Information Science, University of Macau, Macau

ACL 2013 Eighth Workshop on Statistical Machine Translation, Sofia, Bulgaria, August 8-9, 2013.

中文 English Deutsch Français Italiano 日本語 Русский Español Português Dansk ελληνικά 한국어 Magyar nyelv ...

Acknowledgements

The authors are grateful to the Science and Technology Development Fund of Macau and the Research Committee of the University of Macau for the funding support for our research, under the reference No. 017/2009/A and RG060/09-10S/CS/FST.

Contributions

- Sentence-Level Quality Estimation (Task 1.1)
  - To address the multilingual problem, we introduce the use of the universal Part-of-Speech tagset developed in (Petrov et al., 2012).
  - We designed a complementary mapping from the Spanish POS tagset yielded by the Treetagger (Schmid, 1994) to the universal tagset.
  - The application of the developed EN-ES POS tagset mapping is shown in the sentence-level Quality Estimation task, and the results are acceptable.
  - We developed an enhanced version of the BLEU metric (EBLEU) to explore the applicability of traditional evaluation criteria to advanced Quality Estimation tasks.
- System Selection (Task 1.2)
  - We introduce how the designed EBLEU metric can be used in the System Selection task, and show two variants of EBLEU.
  - We designed the features of Length penalty, Precision, Recall and Rank values, and introduce how these features can be used in statistical models such as the Naïve Bayes and Support Vector Machine algorithms.
- Word-Level Quality Estimation (Task 2)
  - To take context information into account, we developed an augmented and optimized feature set, including unigram, bigram and trigram features.
  - We introduce how the designed features can be employed in the statistical models of Conditional Random Field and Naïve Bayes.

Sentence-Level Quality Estimation

Task 1.1 is to score and rank the post-editing effort of automatically translated English-Spanish sentences. First, we develop the English and Spanish POS tagset mapping. The 75 Spanish POS tags yielded by the Treetagger (Schmid, 1994) are mapped to the 12 universal tags developed in (Petrov et al., 2012), as in Table 1. The English POS tags are extracted from the sentences parsed with the Berkeley parser (Petrov et al., 2006).

The enhanced version of BLEU (EBLEU) is built from a modified length penalty ($MLP$), precision and recall, where $h$ and $s$ denote the lengths of the hypothesis (target) sentence and the source sentence respectively, and $H(\alpha R_n, \beta P_n)$ is the harmonic mean of precision and recall.

The experiments show that the EBLEU method in Task 1.1 yields a Mean Absolute Error (MAE) of 16.97, which is higher (that is, worse) than the baseline SVM score of 14.81. This result could be improved in the future by employing more linguistic features, such as phrase structure and syntax information, in addition to the part-of-speech tags.

Word-Level Quality Estimation

For Task 2, the word-level quality estimation of English-to-Spanish translations, participants are required to judge each translated word by assigning it a binary or multi-class label. In the binary classification, each token receives a "good" or a "bad" label, where "bad" indicates that the token needs editing. In the multi-class classification, the labels are "keep", "delete" and "substitute". In this task, we use a discriminative undirected probabilistic graphical model, the Conditional Random Field (CRF), in addition to Naïve Bayes (NB).
To take context information into account, we designed a large set of features, as shown in Table 3. Our NB method yields the highest precision, 0.8181, and CRF yields the highest recall and F1 scores, 0.9846 and 0.8297 respectively, in the binary judgments. In the multi-class judgments, on the other hand, the LIG-FS method outperforms ours, with an accuracy of 0.7207.

System Selection

For Task 1.2, we also employ the Naïve Bayes classification algorithm with the features of modified Length penalty, Precision, Recall and Rank values (NB-LPR), in addition to EBLEU. When ties are ignored, the NB-LPR method yields a |Tau| score of 0.07 on the English-to-Spanish language pair, higher than the baseline score of 0.03, although this is still a weak correlation.

Conclusion

In the sentence-level QE task (Task 1.1), we develop an enhanced version of the BLEU metric, which shows the potential of applying traditional evaluation criteria to advanced unsupervised quality estimation tasks.
In the newly proposed system selection task (Task 1.2) and the word-level QE task (Task 2), we have designed different feature sets, including factor values and n-gram context information, to explore the performance of several statistical models, including NB, SVM, and CRF.

| Universal tag | Spanish Treetagger tags |
| --- | --- |
| ADJ | ADJ |
| ADP | PREP, PREP/DEL |
| ADV | ADV, NEG |
| CONJ | CC, CCAD, CCNEG, CQUE, CSUBF, CSUBI, CSUBX |
| DET | ART |
| NOUN | NC, NMEA, NMON, NP, PERCT, UMMX |
| NUM | CARD, CODE, QU |
| PRON | DM, INT, PPC, PPO, PPX, REL |
| PRT | SE |
| VERB | VCLIger, VCLIinf, VCLIfin, VEadj, VEfin, VEger, VEinf, VHadj, VHfin, VHger, VHinf, VLadj, VLfin, VLger, VLinf, VMadj, VMfin, VMger, VMinf, VSadj, VSfin, VSger, VSinf |
| X | ACRNM, ALFP, ALFS, FO, ITJN, ORD, PAL, PDEL, PE, PNC, SYM |
| . | BACKSLASH, CM, COLON, DASH, DOTS, FS, LP, QT, RP, SEMICOLON, SLASH |

Table 1: Developed POS mapping for Spanish and universal tagset

$$EBLEU = 1 - MLP \times \exp\Big(\sum_{n} w_n \log H(\alpha R_n, \beta P_n)\Big) \qquad (1)$$

$$MLP = \begin{cases} e^{1 - \frac{s}{h}} & \text{if } h < s \\ e^{1 - \frac{h}{s}} & \text{if } h \ge s \end{cases} \qquad (2)$$

| Methods | MAE | RMSE | DeltaAvg | Spearman Corr |
| --- | --- | --- | --- | --- |
| EBLEU | 16.97 | 21.94 | 2.74 | 0.11 |
| Baseline SVM | 14.81 | 18.22 | 8.52 | 0.46 |

Table 2: Task 1-1 results in the WMT13

| Feature | Description |
| --- | --- |
| $U_n,\ n \in (-4, 3)$ | Unigram, from antecedent 4th to subsequent 3rd token |
| $B_{n-1,n},\ n \in (-1, 2)$ | Bigram, from antecedent 2nd to subsequent 2nd token |
| $B_{-1,1}$ | Jump bigram, antecedent and subsequent token |
| $T_{n-1,n,n+1},\ n \in (-1, 1)$ | Trigram, from antecedent 2nd to subsequent 2nd token |

Table 3: Developed features

| Methods | Binary Pre | Binary Recall | Binary F1 | Multiclass Acc |
| --- | --- | --- | --- | --- |
| CNGL-dMEMM | 0.7392 | 0.9261 | 0.8222 | 0.7162 |
| CNGL-MEMM | 0.7554 | 0.8581 | 0.8035 | 0.7116 |
| LIG-All | N/A | N/A | N/A | 0.7192 |
| LIG-FS | 0.7885 | 0.8644 | 0.8247 | 0.7207 |
| LIG-BOOSTING | 0.7779 | 0.8843 | 0.8276 | N/A |
| NB | 0.8181 | 0.4937 | 0.6158 | 0.5174 |
| CRF | 0.7169 | 0.9846 | 0.8297 | 0.7114 |

Table 4: Task 2 results in the WMT13

| Methods | DE-EN Tau (ties penalized) | DE-EN \|Tau\| (ties ignored) | EN-ES Tau (ties penalized) | EN-ES \|Tau\| (ties ignored) |
| --- | --- | --- | --- | --- |
| EBLEU-I | -0.38 | -0.03 | -0.35 | 0.02 |
| EBLEU-A | N/A | N/A | -0.27 | N/A |
| NB-LPR | -0.49 | 0.01 | N/A | 0.07 |
| Baseline | -0.12 | 0.08 | -0.23 | 0.03 |

Table 5: Task 1-2 results in the WMT13
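The feature templates of Table 3 can be illustrated with a small Python sketch. It is only an illustration under stated assumptions, not the authors' implementation: the ranges such as $(-4, 3)$ are read as inclusive integer offsets from the current token, out-of-sentence positions are filled with a padding symbol, and the names `context_features`, `token_at` and `PAD` are hypothetical.

```python
PAD = "<pad>"  # hypothetical filler for positions outside the sentence


def token_at(tokens, i):
    """Return the token at index i, or PAD when i is out of range."""
    return tokens[i] if 0 <= i < len(tokens) else PAD


def context_features(tokens, i):
    """Build Table 3 style n-gram context features for the token at index i."""
    def join(offsets):
        return "|".join(token_at(tokens, i + k) for k in offsets)

    feats = {}
    # Unigrams U_n, n = -4 .. 3
    for n in range(-4, 4):
        feats[f"U[{n}]"] = join((n,))
    # Bigrams B_{n-1,n}, n = -1 .. 2
    for n in range(-1, 3):
        feats[f"B[{n - 1},{n}]"] = join((n - 1, n))
    # Jump bigram B_{-1,1}: previous and next token, skipping the current one
    feats["B[-1,1]"] = join((-1, 1))
    # Trigrams T_{n-1,n,n+1}, n = -1 .. 1
    for n in range(-1, 2):
        feats[f"T[{n - 1},{n},{n + 1}]"] = join((n - 1, n, n + 1))
    return feats


if __name__ == "__main__":
    sentence = ["el", "gato", "come", "pescado"]
    for name, value in context_features(sentence, 1).items():
        print(name, value)
```

Each token yields 16 string-valued features under this reading (8 unigrams, 4 bigrams, 1 jump bigram, 3 trigrams), which could then be passed to a CRF or Naïve Bayes toolkit of choice.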
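Equations (1) and (2) can also be sketched in Python. Several details here are assumptions that the poster does not spell out: Eq. (2) is read as $e^{1 - s/h}$ for $h < s$ and $e^{1 - h/s}$ otherwise; $H(\alpha R_n, \beta P_n)$ is taken to be the weighted harmonic mean $(\alpha + \beta) / (\alpha / R_n + \beta / P_n)$; $P_n$ and $R_n$ are computed from clipped n-gram matches between the universal POS tag sequences of the hypothesis and the source; and the weights $w_n$ default to uniform. All function names are hypothetical.

```python
import math
from collections import Counter


def ngrams(tags, n):
    """Count the n-grams of a tag sequence."""
    return Counter(tuple(tags[i:i + n]) for i in range(len(tags) - n + 1))


def modified_length_penalty(h, s):
    """Eq. (2) as read here: 1.0 when h == s, decaying as the lengths diverge."""
    if h == 0 or s == 0:
        return 0.0
    return math.exp(1 - s / h) if h < s else math.exp(1 - h / s)


def weighted_harmonic(recall, precision, alpha=1.0, beta=1.0):
    """Assumed form of H(alpha*R, beta*P): (alpha + beta) / (alpha/R + beta/P)."""
    if recall == 0 or precision == 0:
        return 0.0
    return (alpha + beta) / (alpha / recall + beta / precision)


def ebleu(hyp_tags, src_tags, max_n=2, weights=None, alpha=1.0, beta=1.0):
    """Eq. (1): 1 - MLP * exp(sum_n w_n * log H(alpha*R_n, beta*P_n))."""
    weights = weights or [1.0 / max_n] * max_n
    log_sum = 0.0
    for n, w in zip(range(1, max_n + 1), weights):
        hyp, src = ngrams(hyp_tags, n), ngrams(src_tags, n)
        matches = sum((hyp & src).values())  # clipped n-gram matches
        if matches == 0:
            return 1.0  # the similarity term collapses to zero
        precision = matches / sum(hyp.values())
        recall = matches / sum(src.values())
        log_sum += w * math.log(weighted_harmonic(recall, precision, alpha, beta))
    mlp = modified_length_penalty(len(hyp_tags), len(src_tags))
    return 1.0 - mlp * math.exp(log_sum)


if __name__ == "__main__":
    source = ["DET", "NOUN", "VERB", "ADV"]
    hypothesis = ["DET", "NOUN", "VERB"]
    print(ebleu(hypothesis, source, max_n=1))
```

Under these assumptions the score is 0 for identical tag sequences and 1 when no n-gram matches, so lower values indicate closer POS structure between source and hypothesis.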