Writing questions that measure what matters workshop slides

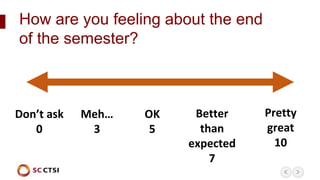

- 1. How are you feeling about the end of the semester? Pretty great: 10; Better than expected: 7; OK: 5; Meh…: 3; Don’t ask: 0

- 2. Writing Questions that Measure What Matters: Best Practices for Designing Multiple Choice Test Questions, with Katherine Guevara (Associate Director of Clinical and Translational Research Education Programs, SC CTSI Education Resource Center (ERC) in Workforce Development (WD); Rossier School of Education Faculty) and Gemma North (Associate Director of Evaluation & Improvement, SC CTSI Evaluation & Improvement)

- 3. Session Norms & Netiquette: Throughout our time together today, we will: remain muted until the indicated turn-taking time; use the Raise Hand function to indicate a desire to participate via voice; use the chat to Everyone for questions/comments applicable to all; use the Private chat for personal questions/comments; keep identifying information out of questions/comments.

- 4. Session Objectives: By the end of this workshop, we will be able to: (1) determine whether a multiple choice test is the most appropriate assessment format per learning objectives; (2) analyze multiple choice questions using a set of best practices for question design; (3) revise multiple choice questions to better align with best practices.

- 5. Is Multiple Choice Best?

- 6. Measuring Skills We Teach. Learning objective: By the end of this course, students will be able to write a research question for a proposed study. What we teach: How to write a research question. Evidence of skill we collect through assessment: Write a research question according to taught criteria.

- 7. Measuring Skills We Teach: Alignment. Learning objective: By the end of this course, students will be able to write a research question for a proposed study. Teaching: How to write a research question. Assessment: Write a research question according to taught criteria.

- 8. Measuring Skills We Teach: Not ideal to assess with multiple choice. Learning objective: By the end of this course, students will be able to write a research question for a proposed study. Teaching: How to write a research question. Assessment: Write a research question according to taught criteria.

- 9. Measuring Skills We Teach: Misalignment. Learning objective: By the end of this course, students will be able to write a research question for a proposed study. Teaching: How to write a research question. Assessment: Identify which of the following is an appropriate research question.

- 10. Discussion (8 minutes): Which of the common reasons for misalignment may be affecting your assessment? (1) In breakout groups, review the 6 common reasons for misalignment. (2) Select which of the 6 may be affecting your assessment.

- 11. Common Reasons for Misalignment: (1) Learning objectives are not specific and measurable. (2) Learning objectives may not align with external professional standards (if required). (3) Questions do not measure/match learning objective skills. (4) Rotating faculty may not have taught to the learning objectives or may have changed them. (5) Faculty submitting questions yearly may not have received information/training. (6) Questions may be written in ways that also test other skills unrelated to the learning objectives (such as embedded cultural context).

- 12. Takeaway #1: Identify the course learning objective each question is meant to test, and check alignment.

- 13. Do questions match skill levels?

- 15. Analyzing Our Questions: Match the question to the skill level taught.

- 16. Question/Skill Level Example 1: Remember Level. Question: According to [the researcher], the association of an already available response with a new stimulus is called: a) b) c) d) Analysis: The learner is simply asked to remember the definition of signal learning. This is OK if that was the learning objective skill, what we taught, and what they practiced.

- 17. Question/Skill Level Example 2: Application Level. Question: Ali, age three-and-a-half, spills their milk at the table. According to current principles of child development, the parents should: a) b) c) d) Analysis: The learner is asked to apply principles of child development, learned and practiced in class, in a new context/scenario.

- 18. Question/Skill Level Example 3: Analysis Level. Question: A student in Professor Stepp’s statistics class asked what their average score was for 3 exams. The reply was +1.7. Which of the following assumptions about the student’s test scores is most plausible? a) b) c) d) Analysis: The learner is asked to recognize unstated assumptions/inferences, break down complex material into parts, and determine relationships.

- 19. Discussion (5 minutes): In breakout groups… (1) One person shares 1 multiple choice question from a real test they give. (2) Other colleagues guess (a) what learning objective skill was taught and (b) which level of Bloom’s Taxonomy the question represents.

- 20. Takeaway #2: Identify when in the course the learner was taught and practiced the skill each question is meant to test.

- 22. Question Construction Top Tips: 15 tips covering 3 categories. Question Stem: meaningful, relevant, positive. Answer Alternatives: plausible, clear, mutually exclusive, homogeneous… Content: simple, independent, higher cognition-focused.

- 23. Discussion (10 minutes): In breakout groups… (1) Consult the list of 15 tips for writing test questions. (2) Prioritize 1 tip to use to analyze your test questions. (3) With your colleagues’ assistance, revise at least 1 test question to better align with the tip you prioritized.

- 24. Example. Prioritized Tip: The stem should be meaningful by itself and should present a definite problem. Existing Test Question: Which of the following is a true statement? (not meaningful). Revised Question: Which characteristic is relatively constant in mitochondrial genomes across species? (more meaningful).

- 25. Takeaway #3: Identify whether a learner with subject knowledge but minimal reading skills can still answer the question.

- 26. Session Objectives Review: By the end of this workshop, we will be able to: (1) determine whether a multiple choice test is the most appropriate assessment format per learning objectives; (2) analyze multiple choice questions using a set of best practices for question design; (3) revise multiple choice questions to better align with best practices.

- 27. Recommended Resources: CET Learning Objectives, Bloom’s Taxonomy & Test Question Design; Vanderbilt University’s Writing Good Multiple Choice Test Questions; Brigham Young University’s 14 Rules for Writing Multiple Choice Questions; Shrock & Coscarelli’s Criterion-Referenced Test Development.

- 28. Thank You. Twitter: @SoCalCTSI | Email: info@sc-ctsi.org | Phone: (323) 442-0217 | SC CTSI | www.sc-ctsi.org

Editor's Notes

- Briefly introduce ourselves; One sentence about what SC CTSI is

- Getting this by email as well