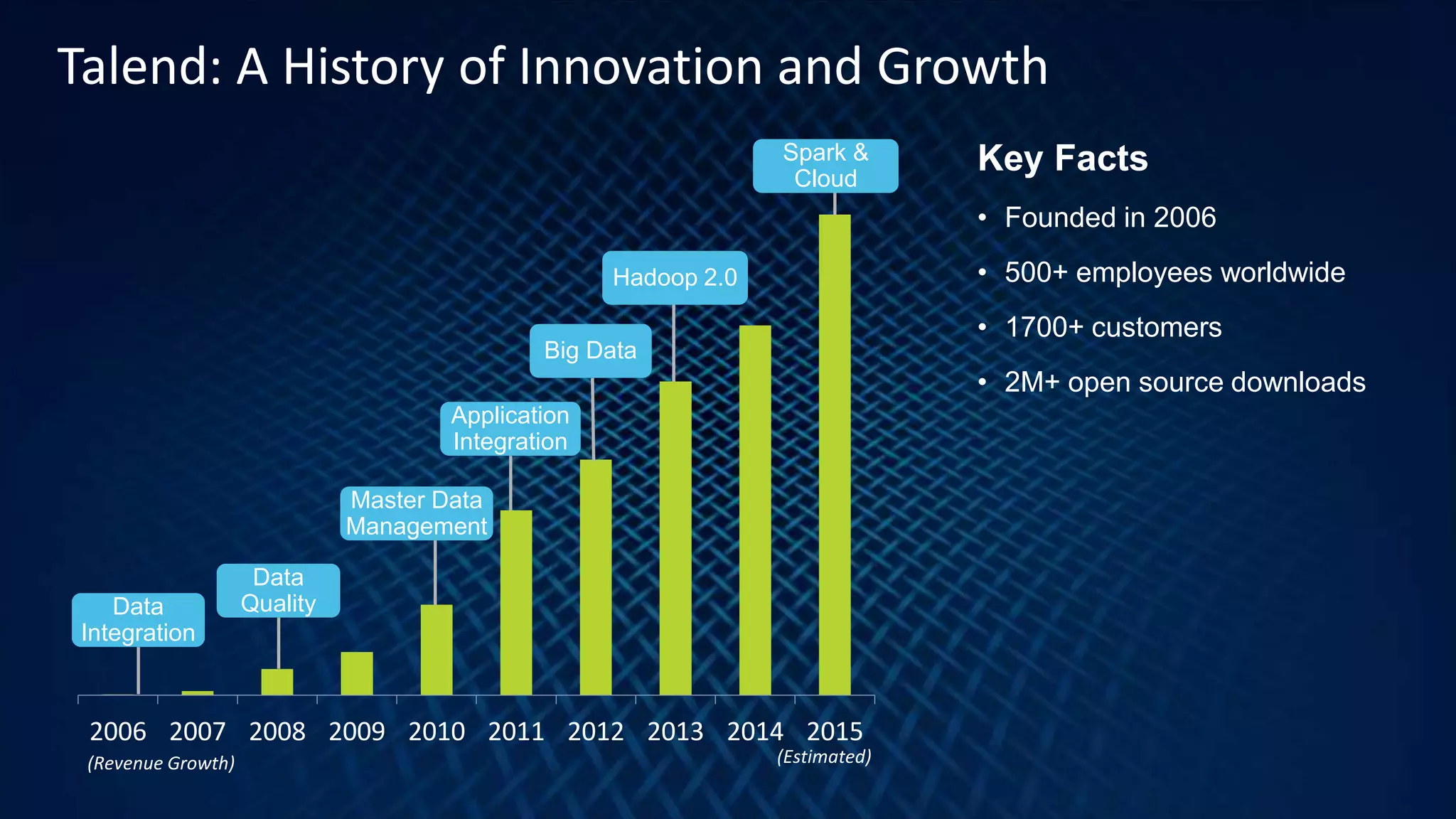

Talend is a data integration company founded in 2006 with over 1,700 customers and a focus on big data and analytics. The document highlights the benefits of using customer analytics for improved corporate performance, citing significant increases in customer acquisition and retention. An example from the Otto Group showcases how Talend's solutions enable quicker decision-making and enhanced customer experiences.