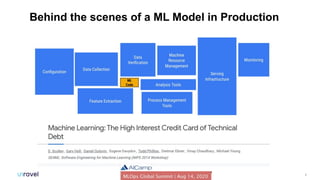

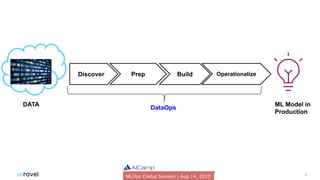

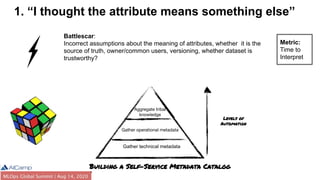

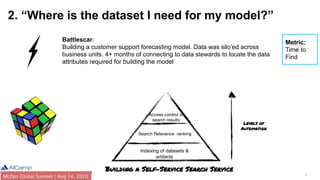

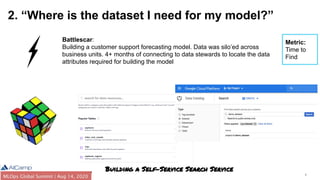

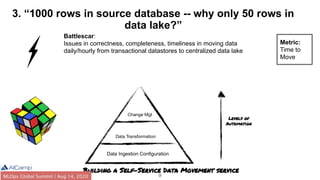

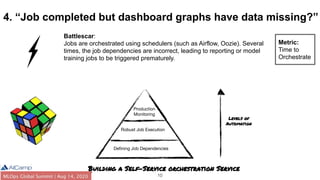

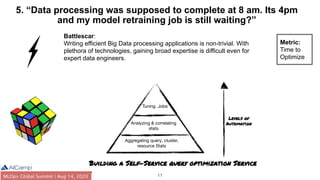

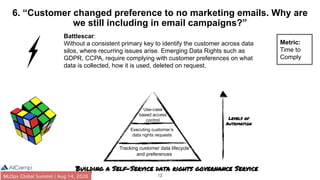

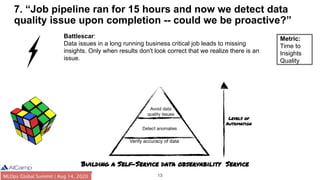

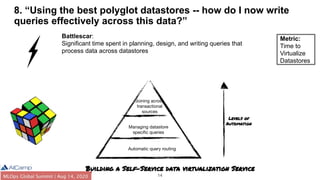

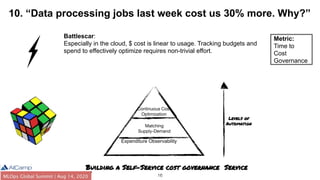

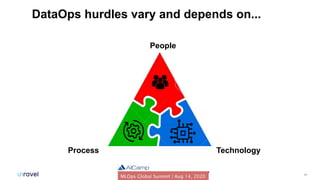

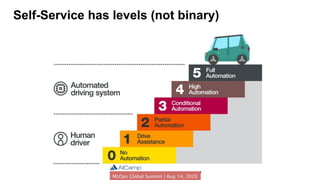

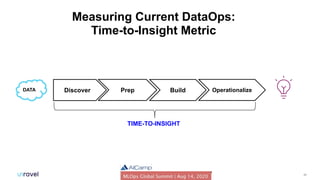

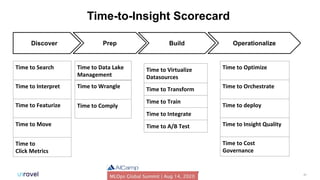

The document outlines common DataOps challenges that arise when deploying machine learning models in production, including problems with data accessibility, completeness, and compliance. It argues for building self-service solutions to these challenges, such as metadata catalogs and orchestration services, to improve time-to-insight and governance. It closes with a call to action: organizations should assess and improve their DataOps processes using self-service metrics and design patterns.