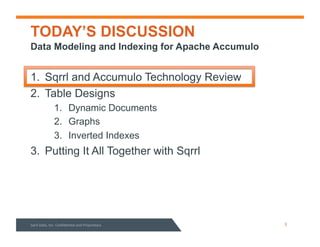

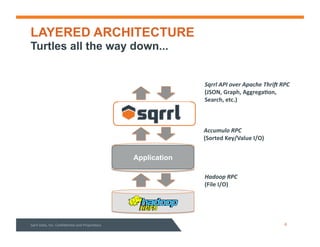

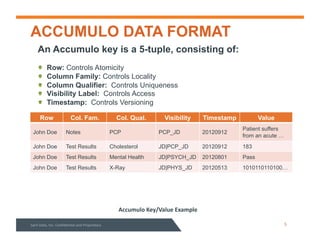

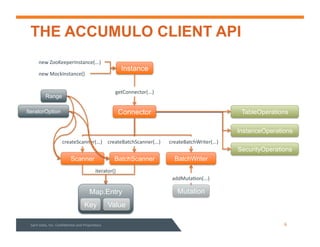

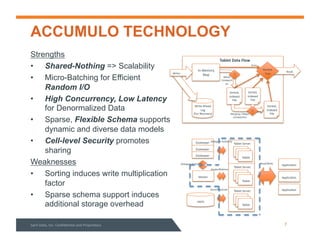

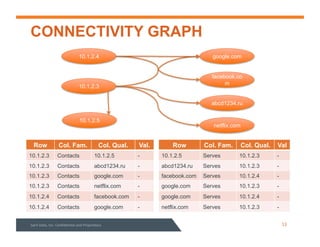

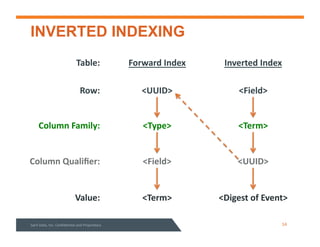

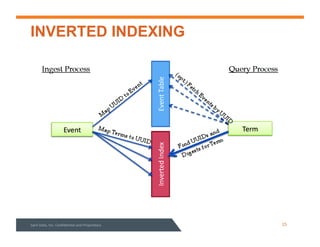

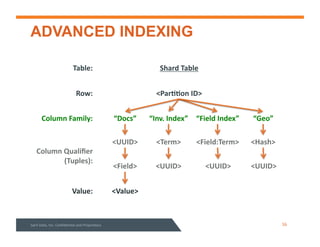

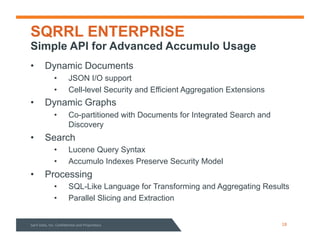

This document summarizes a webinar on data modeling and indexing for Apache Accumulo using Sqrrl. It covers Accumulo and Sqrrl technology, including table designs for dynamic documents, graphs, and inverted indexes. It also describes how Sqrrl Enterprise supports building advanced indexes, and the real-time operational applications that this makes possible.
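To make the inverted index idea concrete, here is a minimal sketch in plain Python. It is not taken from the webinar and does not use the Accumulo or Sqrrl APIs. It assumes only that Accumulo keeps entries sorted by key (row, column family, column qualifier), and it follows the common pattern of using the term as the row of an index table and the document ID as the column qualifier. The names `SortedTable`, `index_document`, and `lookup` are hypothetical and exist only for this illustration.

```python
from bisect import bisect_left, insort


class SortedTable:
    """Toy stand-in for a sorted key-value table (NOT the Accumulo API)."""

    def __init__(self):
        # Entries are kept sorted as (row, family, qualifier, value) tuples.
        self._entries = []

    def put(self, row, family, qualifier, value=""):
        insort(self._entries, (row, family, qualifier, value))

    def scan_row(self, row):
        # Jump to the first entry of the row, then read until the row changes.
        start = bisect_left(self._entries, (row,))
        result = []
        for entry in self._entries[start:]:
            if entry[0] != row:
                break
            result.append(entry)
        return result


def index_document(doc_table, index_table, doc_id, fields):
    """Store a document and write one index entry per distinct term and field."""
    for field, text in fields.items():
        # Document table: row = document ID, qualifier = field name.
        doc_table.put(doc_id, "doc", field, text)
        for term in set(text.lower().split()):
            # Index table: row = term, family = field name, qualifier = document ID.
            index_table.put(term, field, doc_id)


def lookup(index_table, term):
    """Return the sorted IDs of documents that contain the term in any field."""
    return sorted({qualifier for _, _, qualifier, _ in index_table.scan_row(term.lower())})


# Hypothetical sample data, for illustration only.
docs, index = SortedTable(), SortedTable()
index_document(docs, index, "doc1", {"title": "accumulo indexing"})
index_document(docs, index, "doc2", {"title": "graph indexing", "body": "accumulo tables"})
matches = lookup(index, "indexing")
```

Because the table is sorted by row, every entry for a term sits in one contiguous range, so a term lookup is a single row scan rather than a pass over every document. The field names in a document are not fixed in advance, which is one simple way to read the "dynamic documents" design mentioned above.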