Newt Global provides DevOps transformation, cloud enablement, and test automation services. Founded in 2004, it is headquartered in Dallas, Texas, with locations in the US and India. The company describes itself as a leader in DevOps transformations and has twice been named one of the 100 fastest-growing companies in Dallas. The document announces an upcoming Docker 101 webinar presented by two Newt Global employees: Venkatnadhan Thirunalai, DevOps Practice Leader, and Jayakarthi Dhanabalan, AWS Solution Specialist.

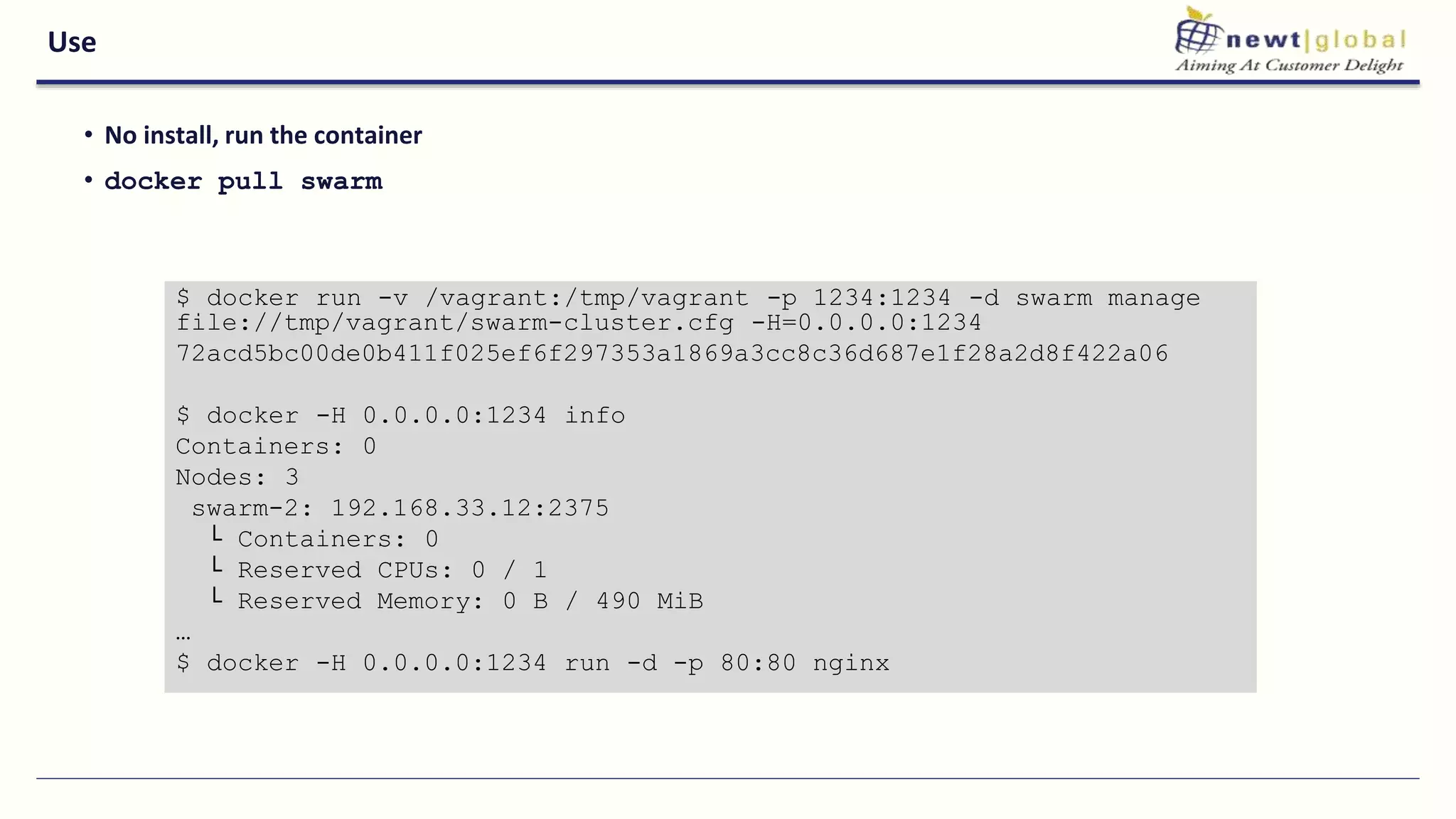

![Cloud Use](https://image.slidesharecdn.com/newtglobaldocker101-170127064202/75/Webinar-Docker-Tri-Series-40-2048.jpg)

Cloud Use:

- Many drivers
- Many more waiting for merge (e.g., CloudStack)

```shell
$ ./docker-machine create -d digitalocean foobar
INFO[0000] Creating SSH key...
INFO[0001] Creating Digital Ocean droplet...
INFO[0005] Waiting for SSH...
INFO[0072] Configuring Machine...
```