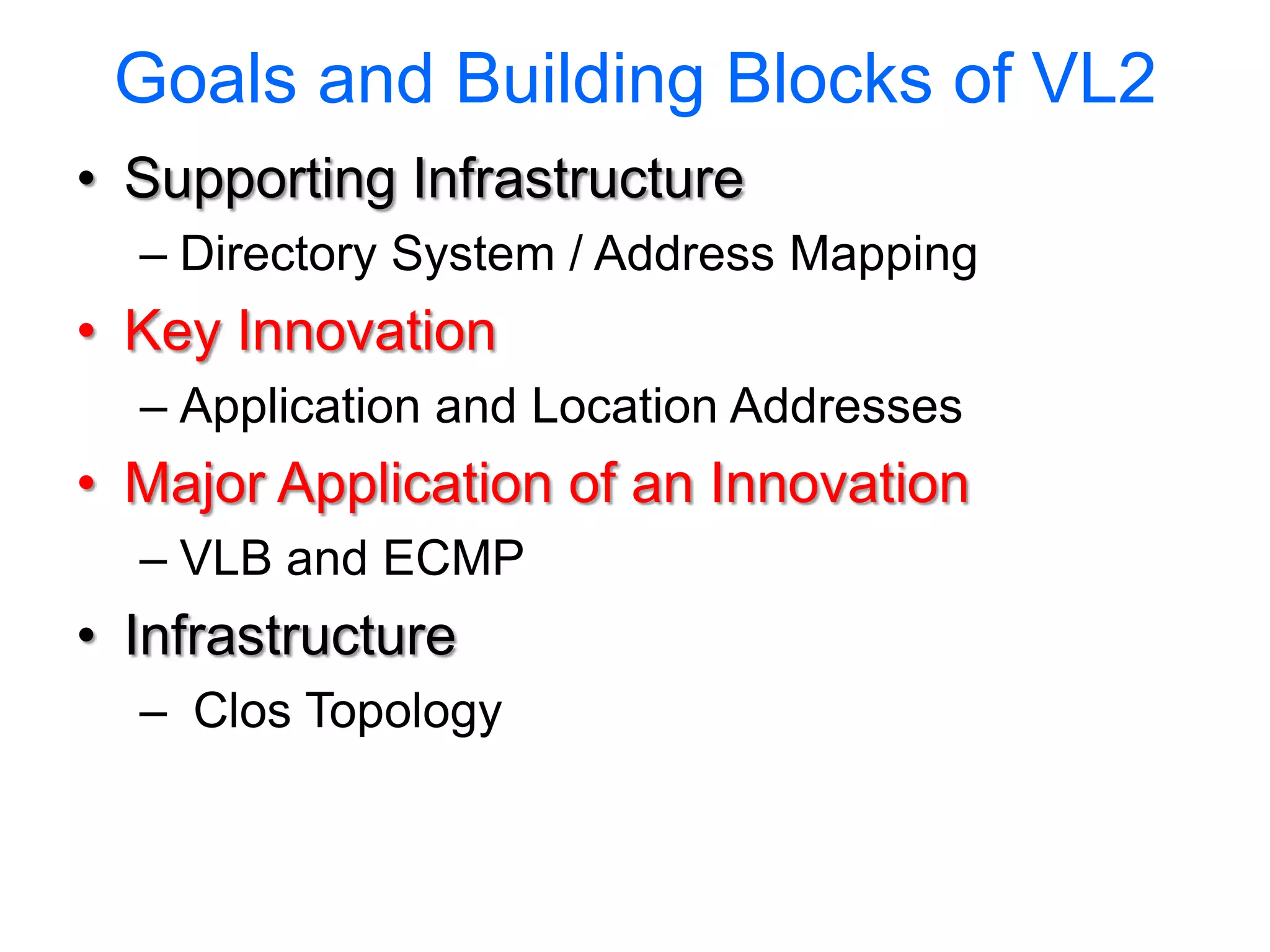

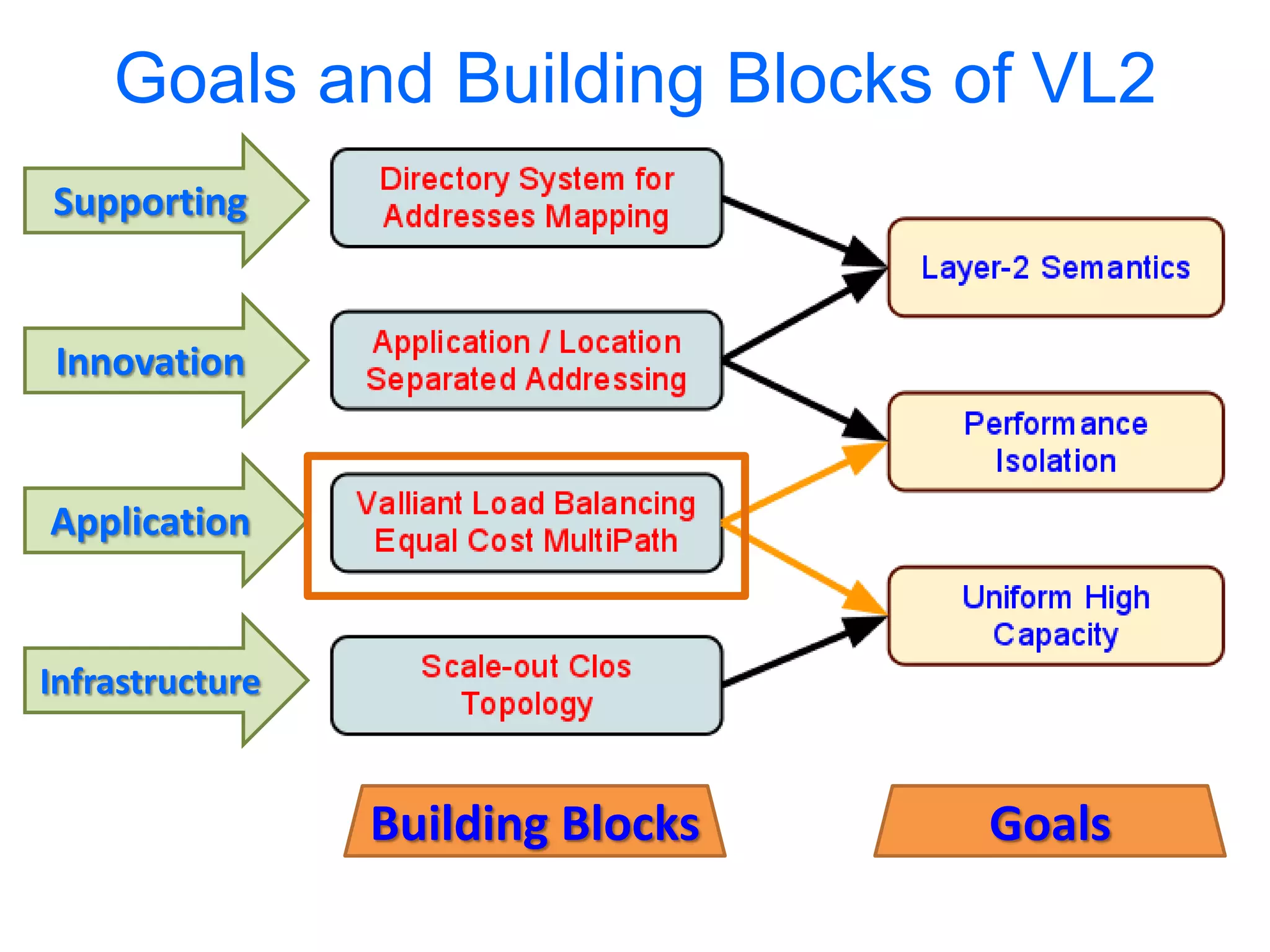

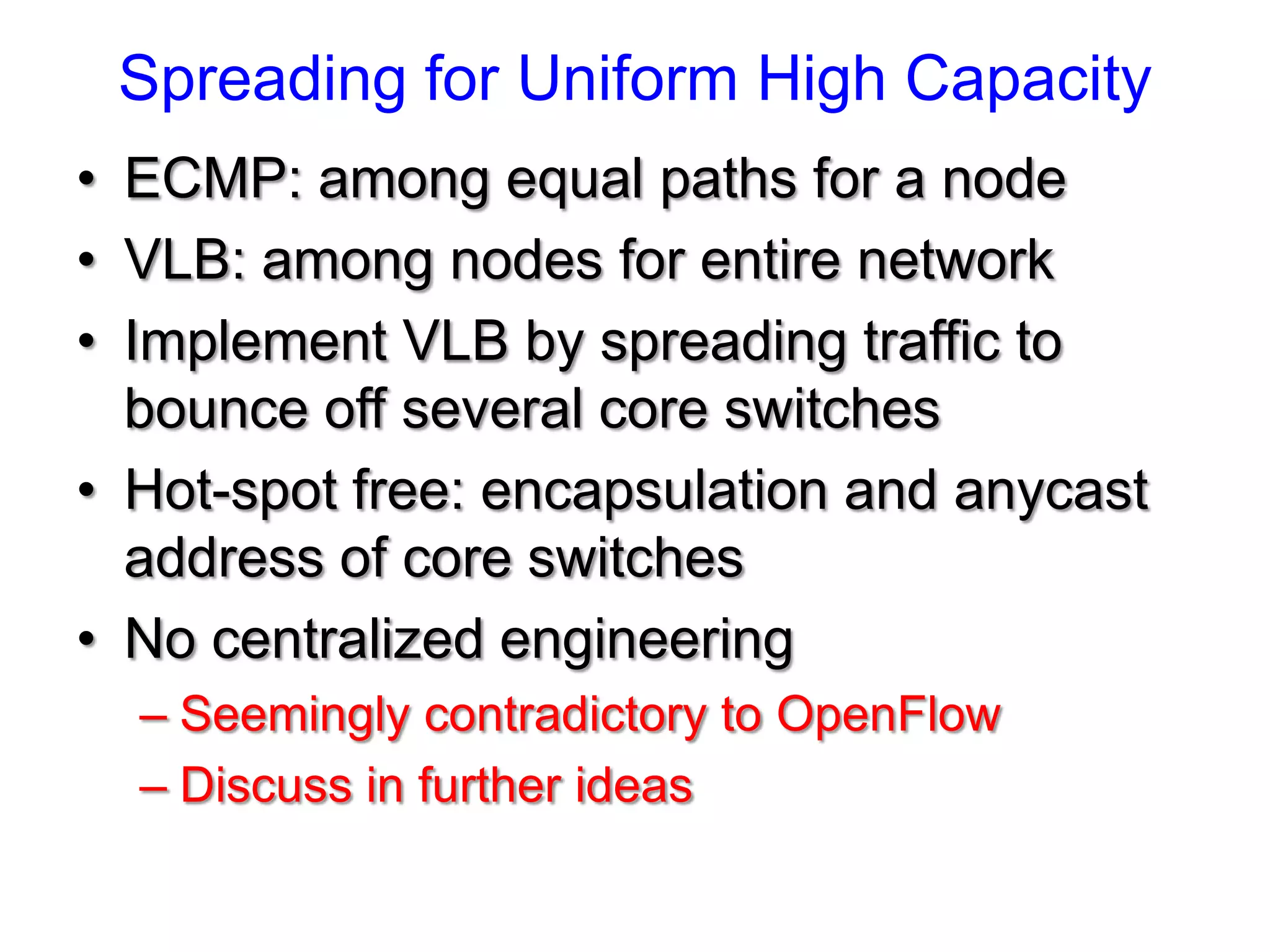

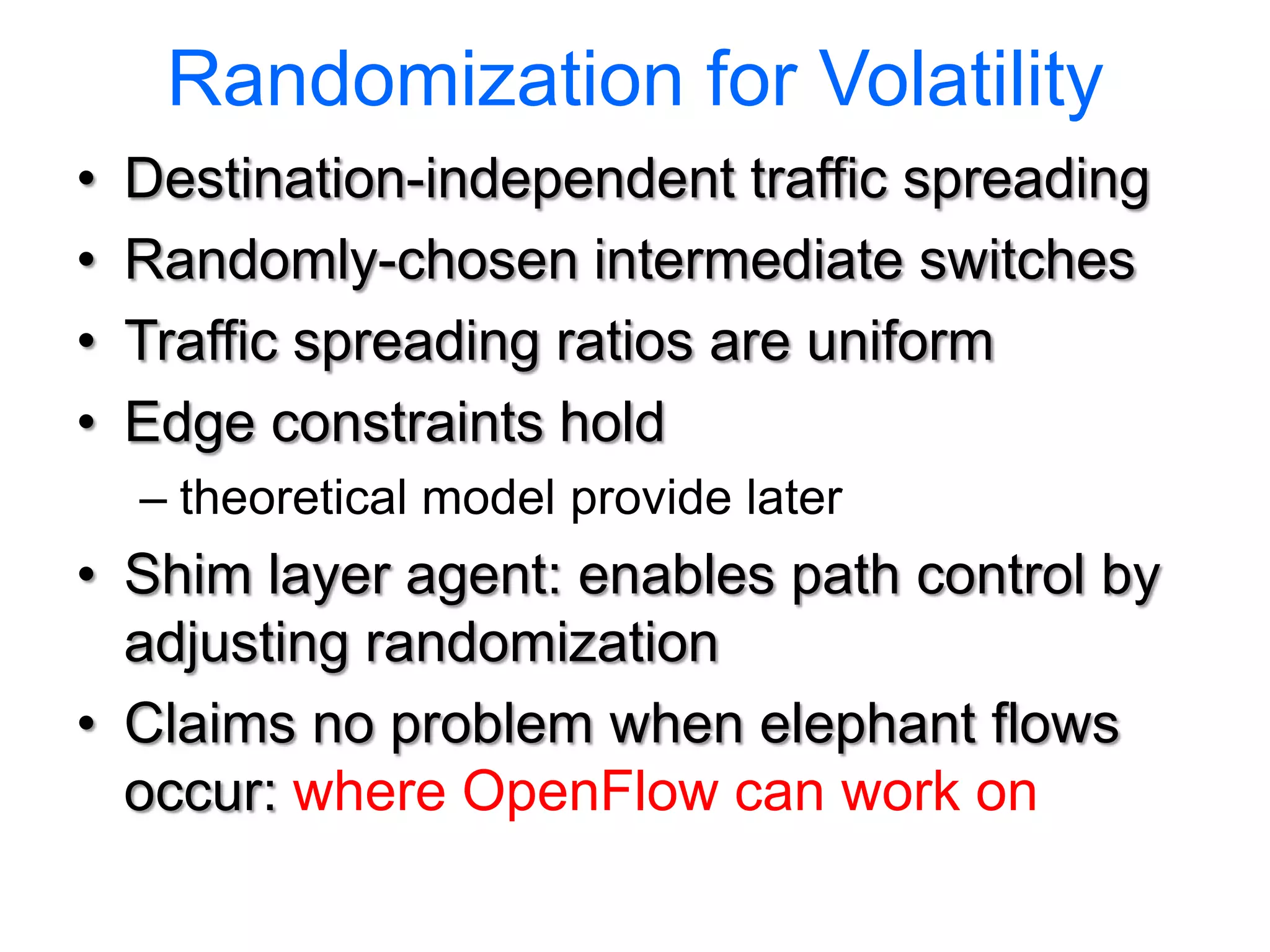

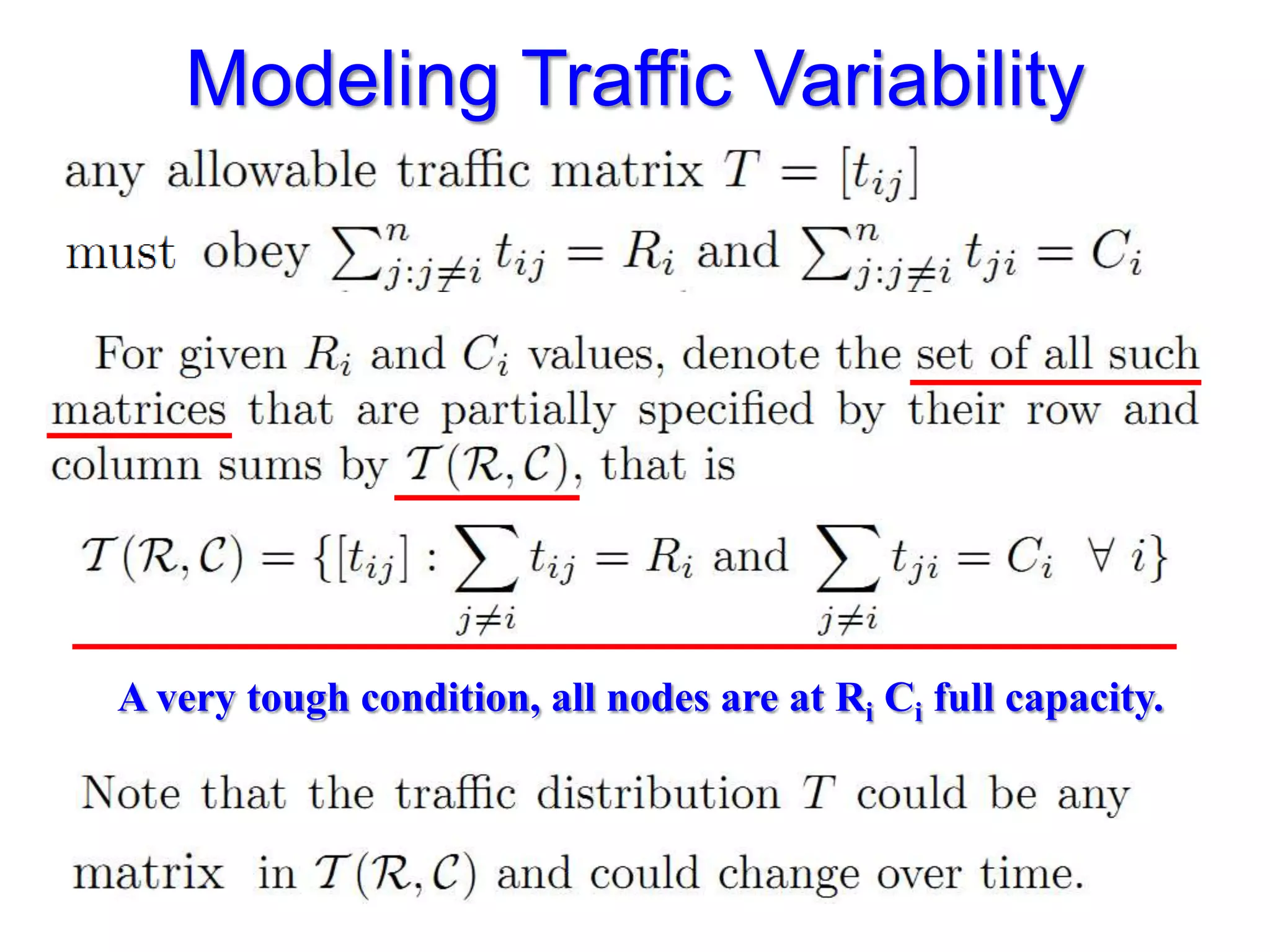

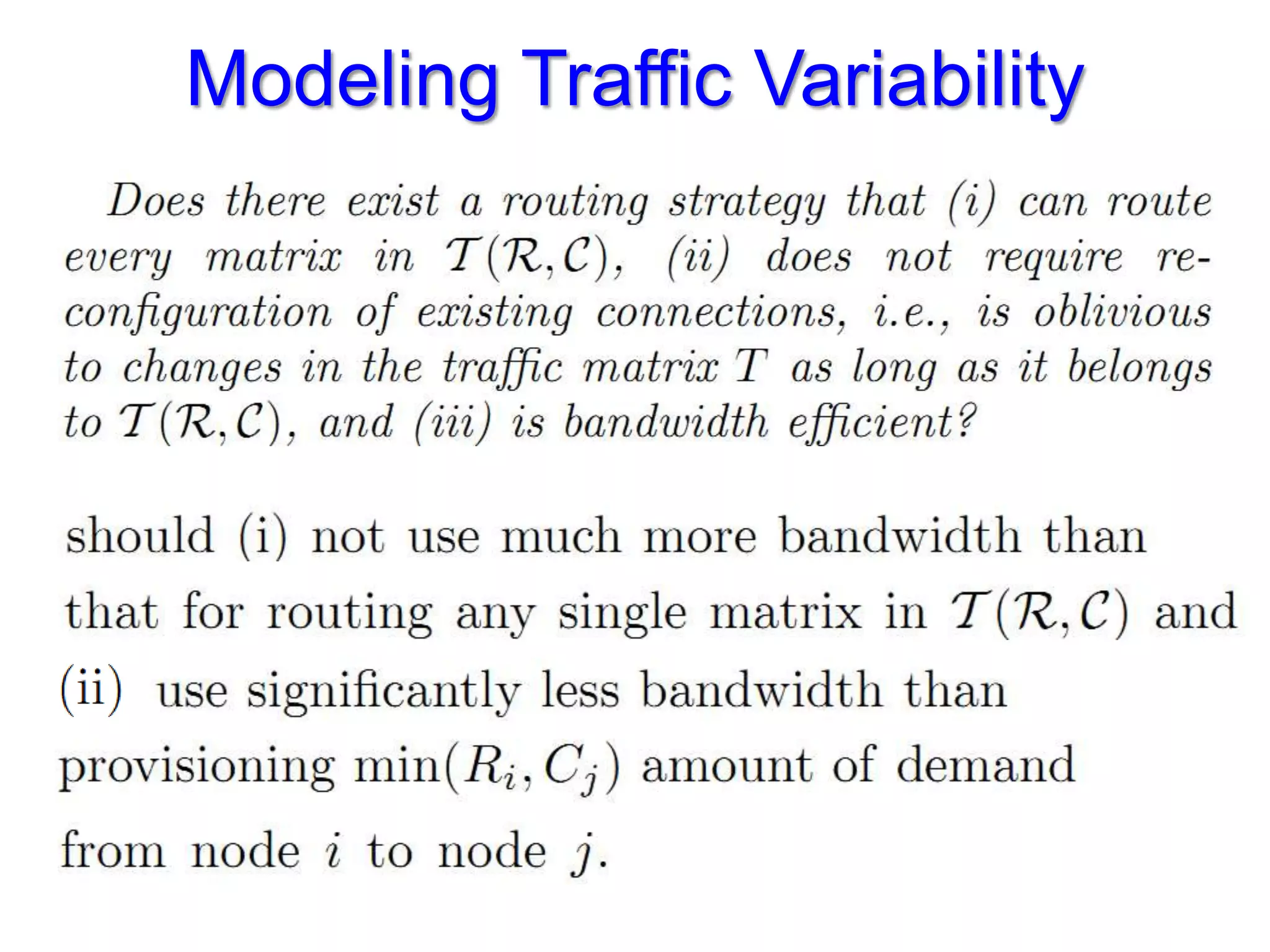

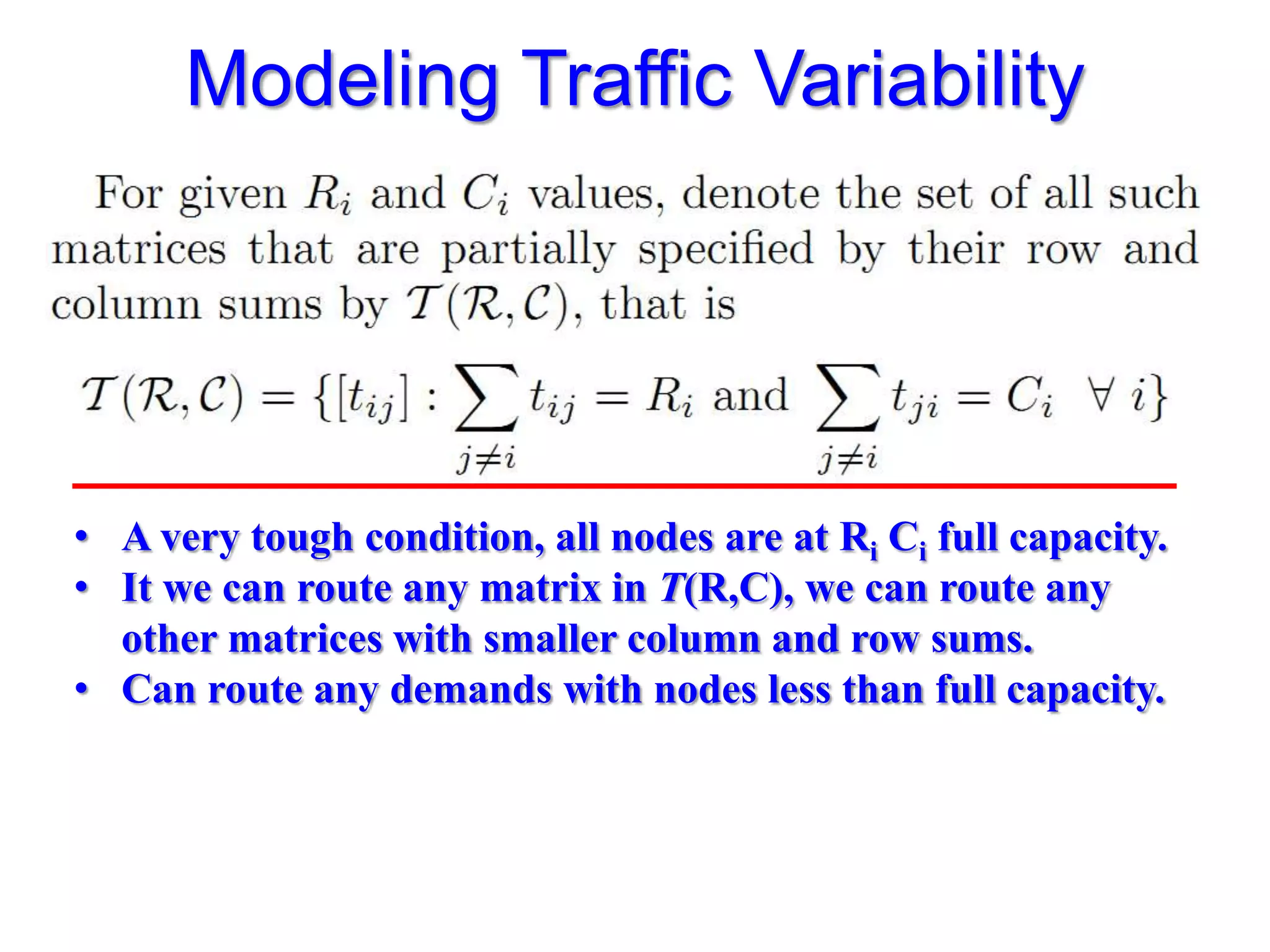

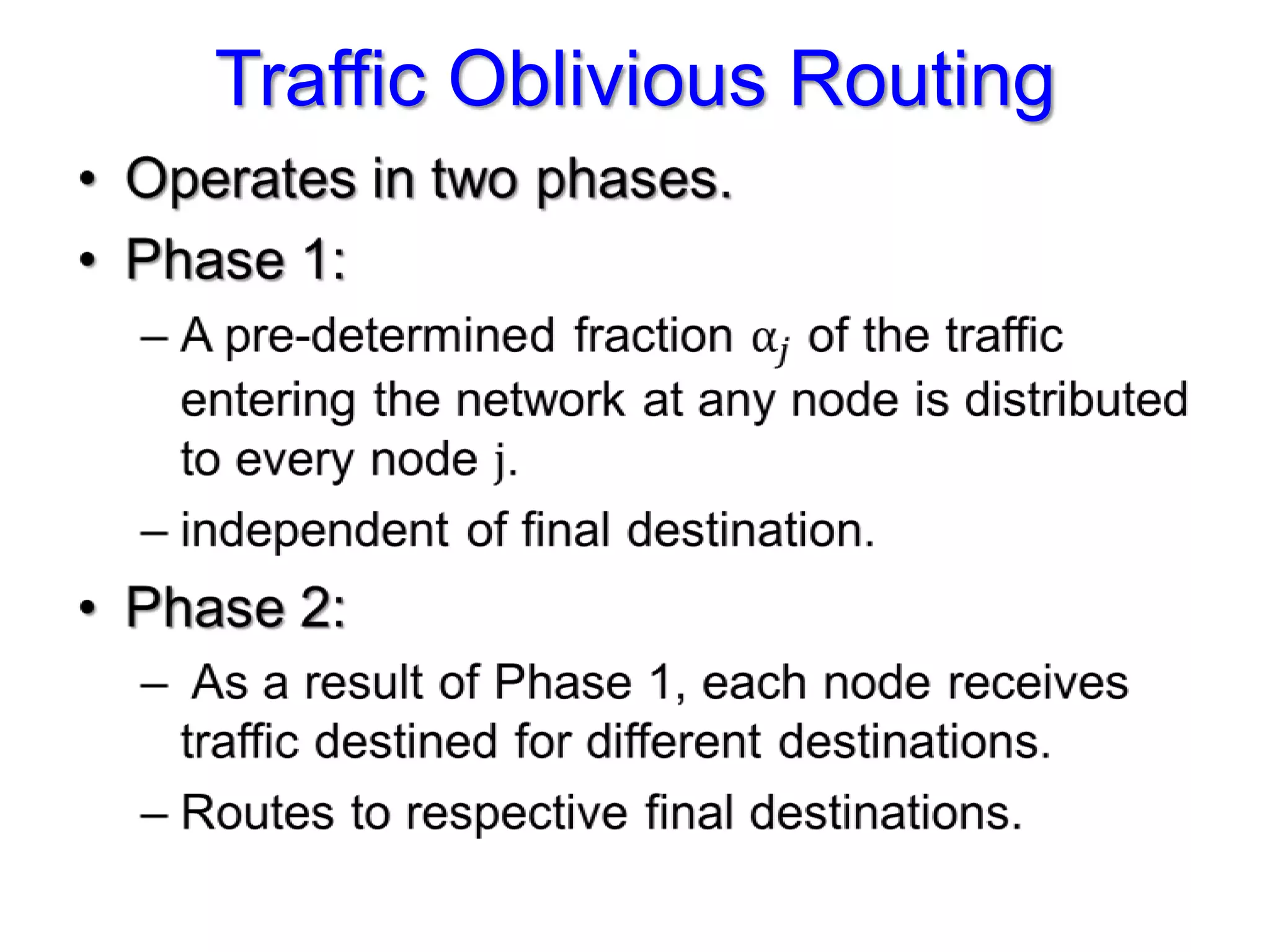

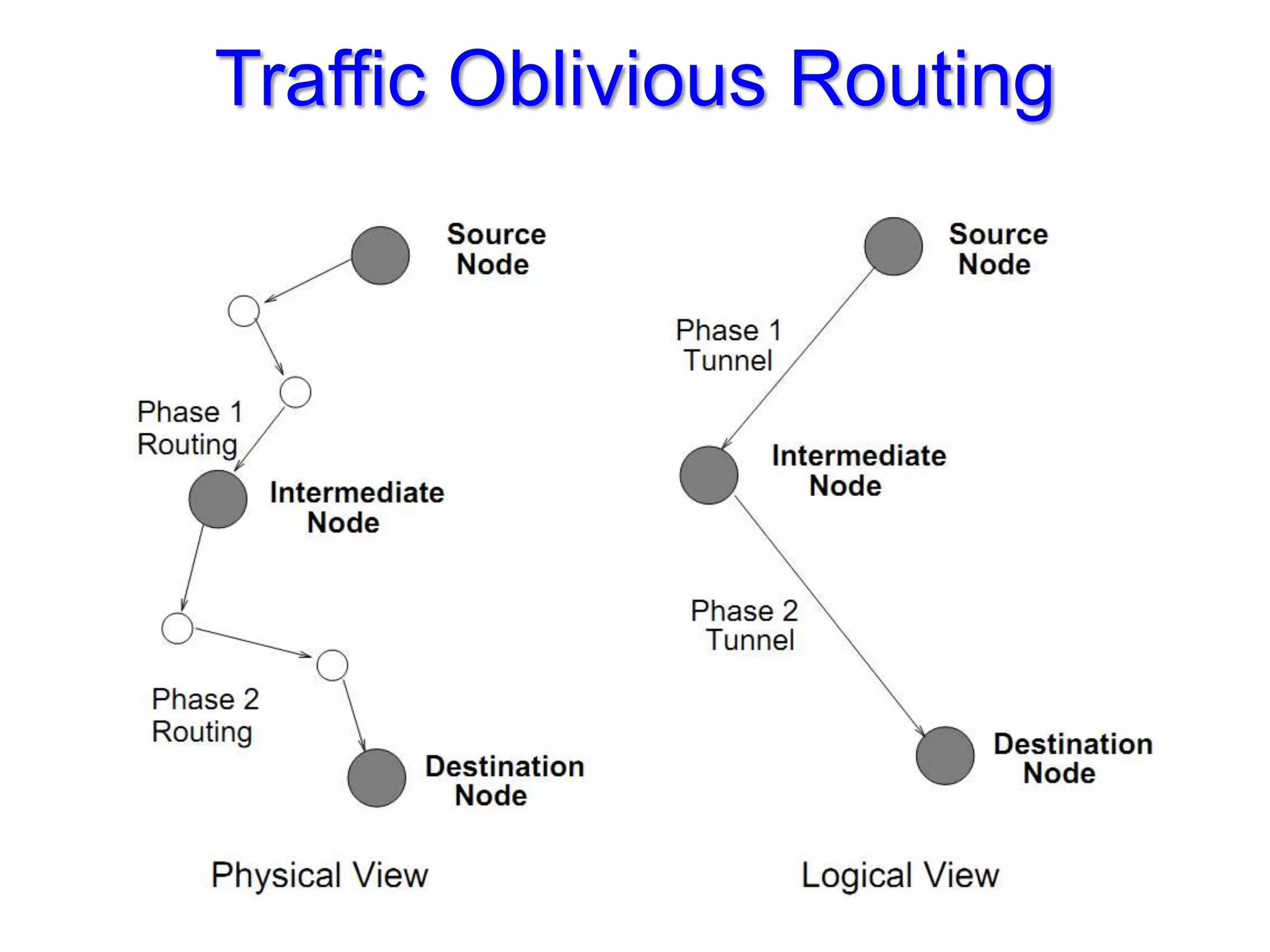

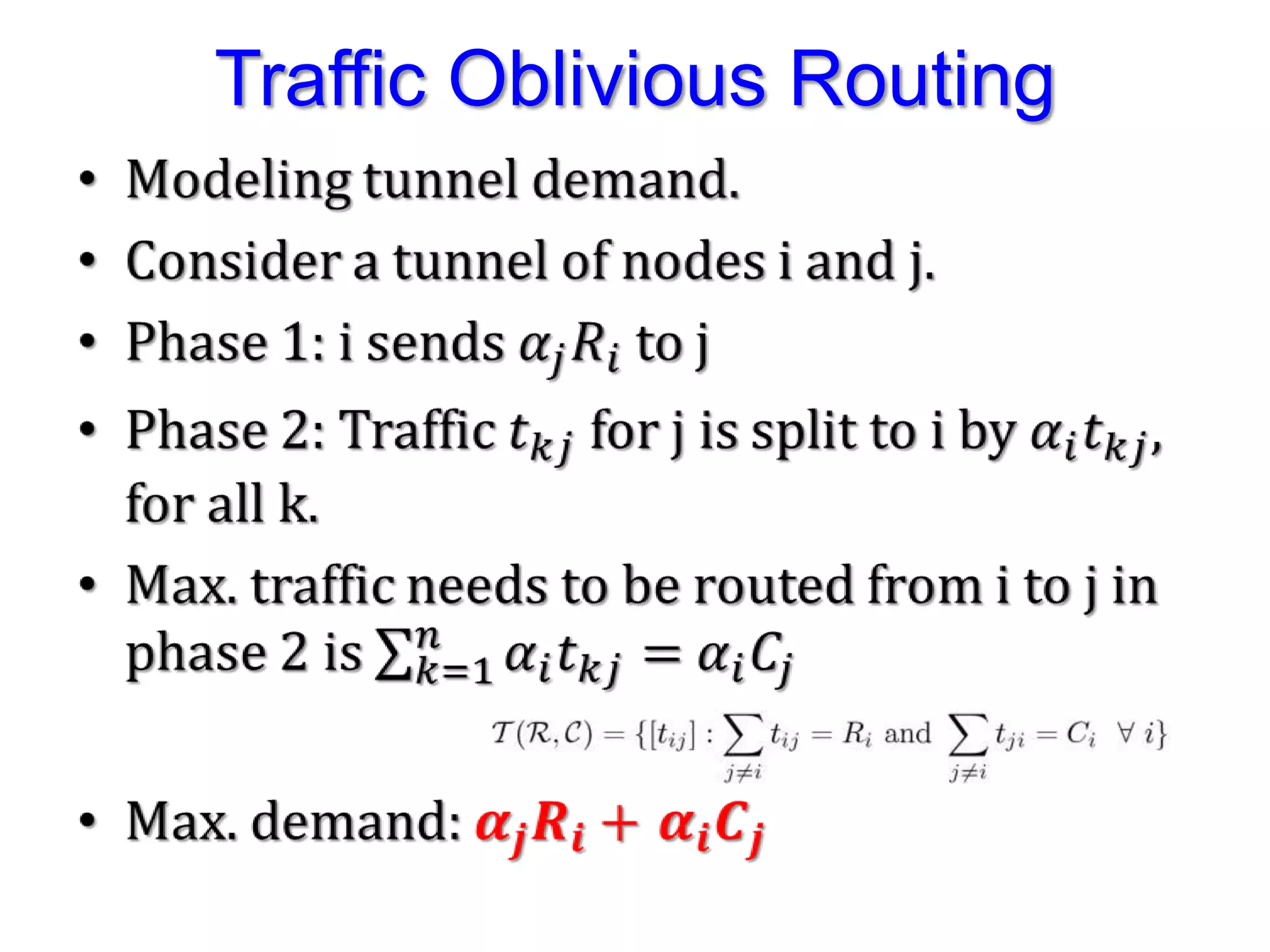

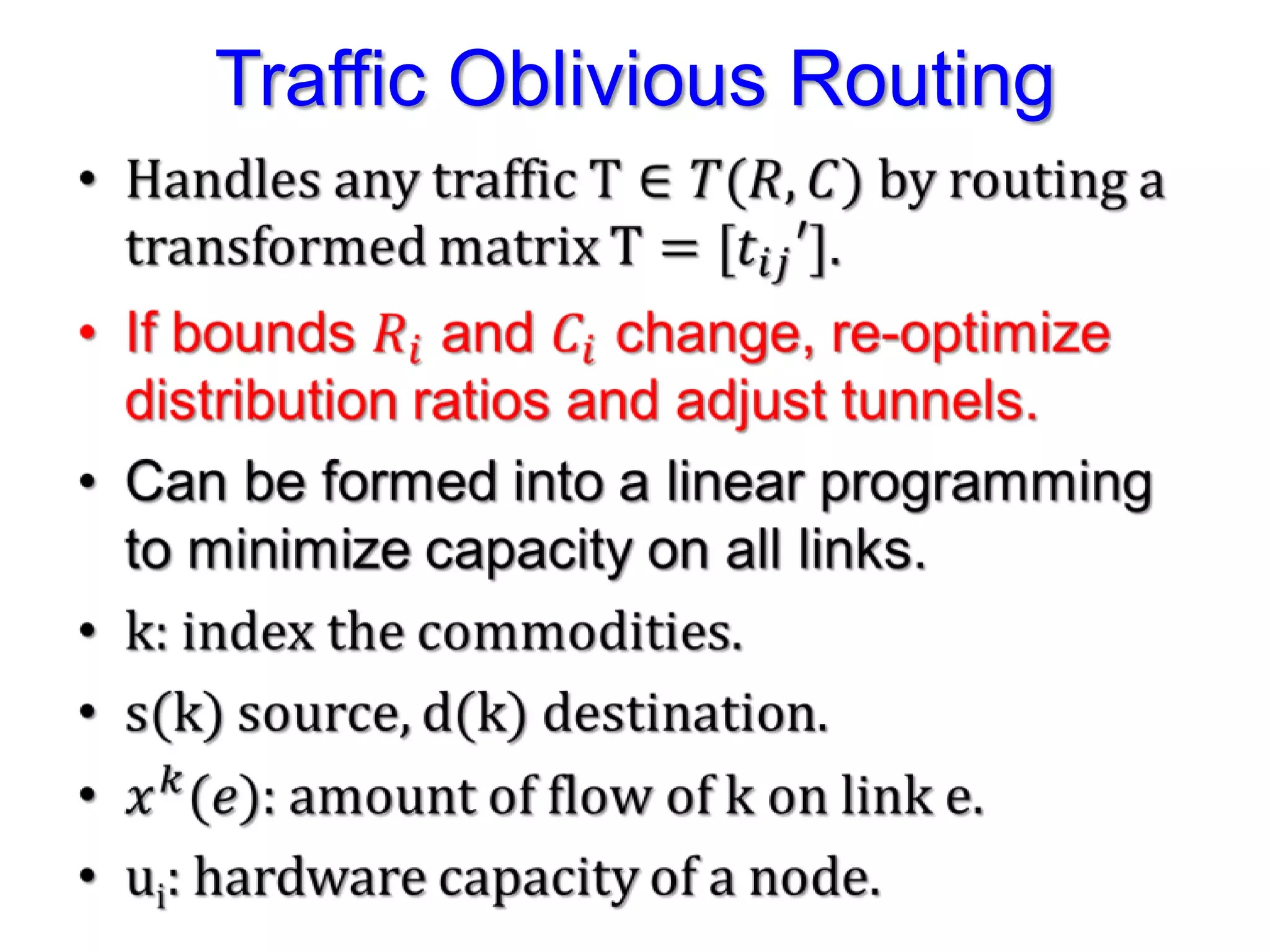

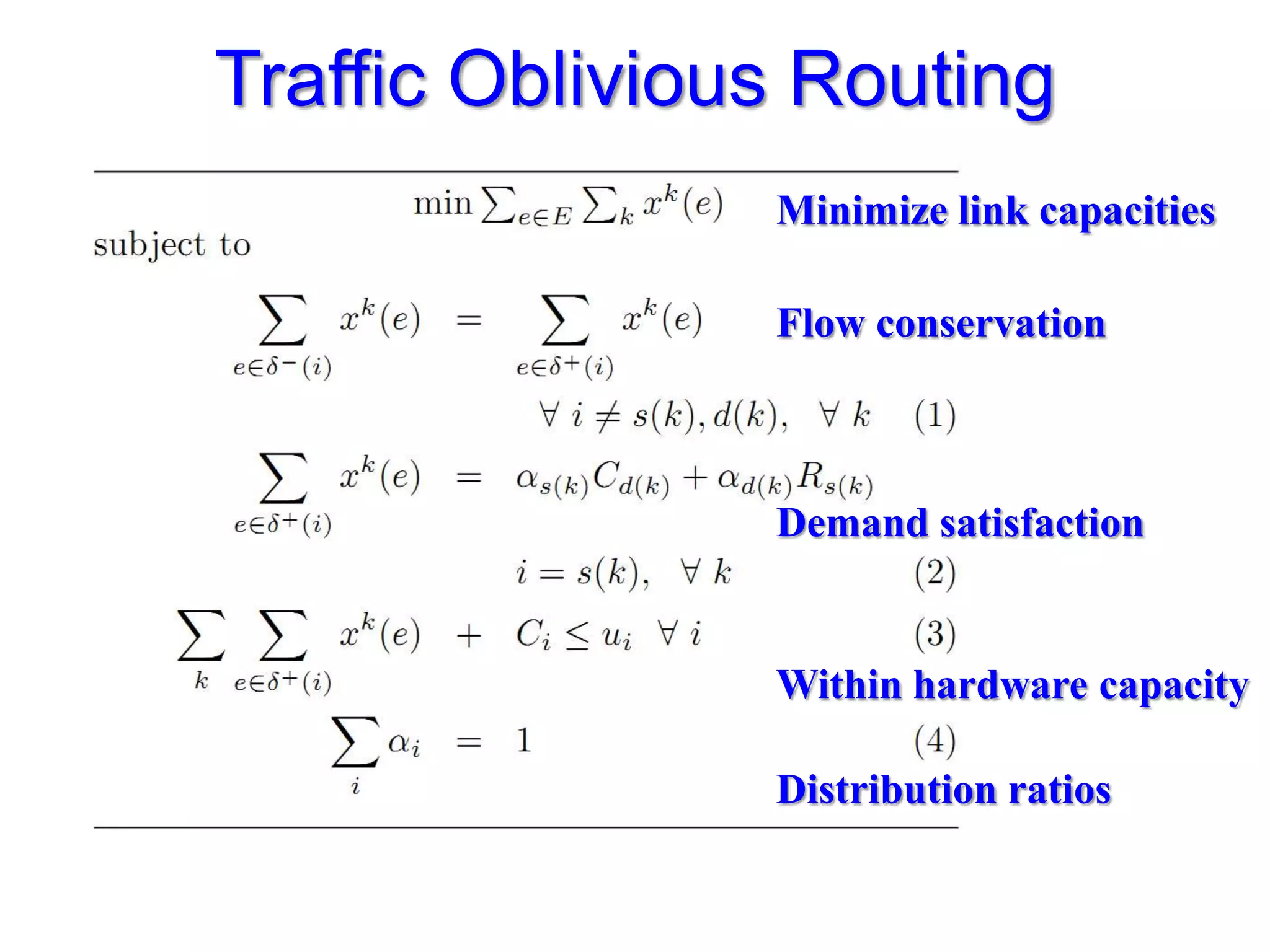

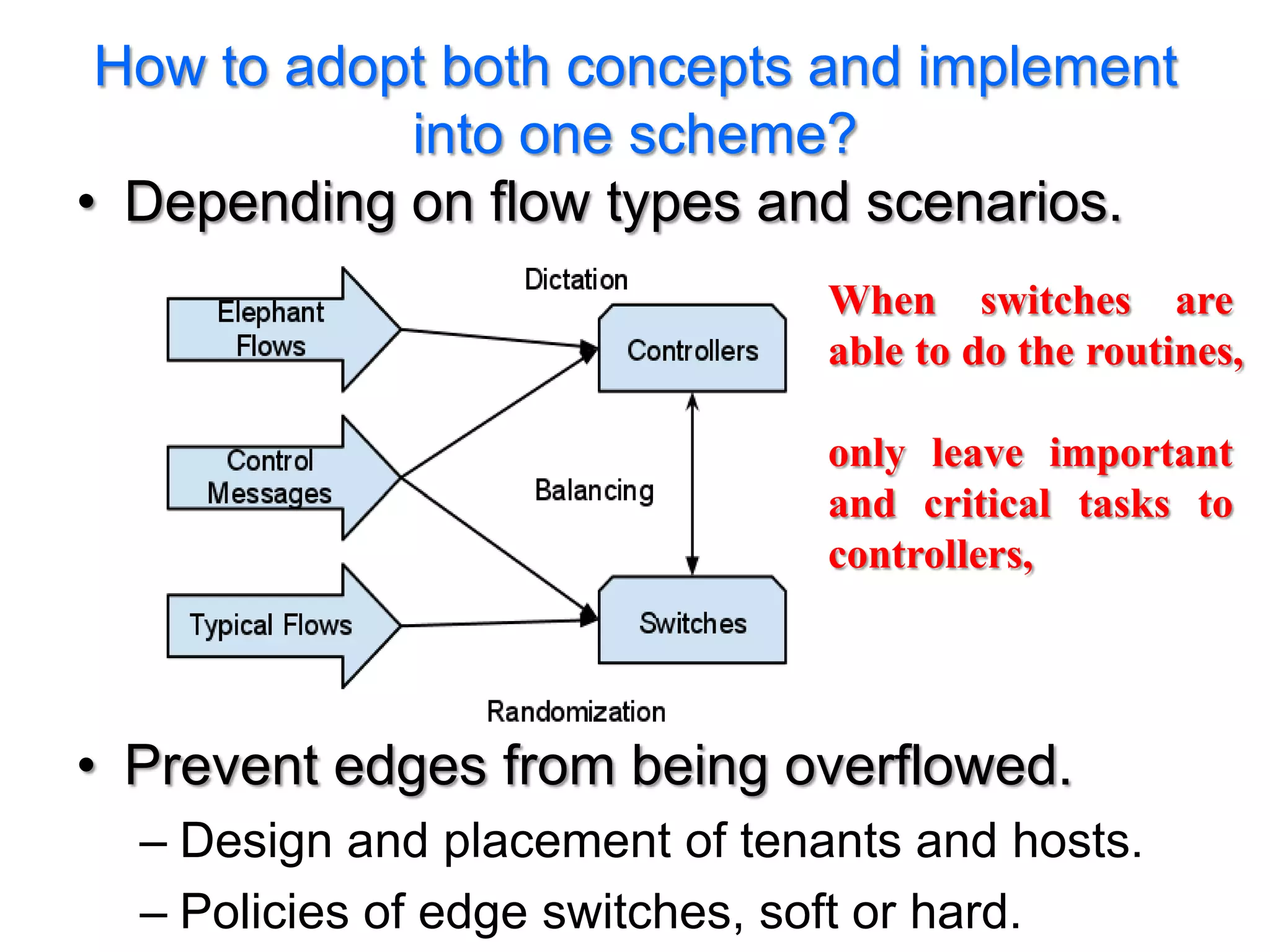

The document discusses Valiant Load Balancing (VLB) and Traffic Oblivious Routing (TOR) in data center networks, particularly within the VL2 architecture. It outlines the goals, infrastructure, and techniques for achieving scalable, efficient routing when traffic patterns are unpredictable, and it addresses the limitations of traditional routing. It also explores combining randomization with dictation in routing schemes to manage traffic under varying conditions.
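To make the core idea of VLB concrete, here is a minimal sketch of its two-phase routing: each flow is first sent to a randomly chosen intermediate switch and only then forwarded to its destination, so the load on the intermediates does not depend on the traffic pattern. The function names, the `rack-N` and `int-N` labels, and the use of a SHA-256 hash of a flow identifier to pick the intermediate are illustrative assumptions, not details taken from the slides.

```python
import hashlib
import random


def pick_intermediate(flow_id, intermediates):
    """Map a flow identifier to one intermediate switch.

    Hashing (rather than drawing a fresh random number per packet) keeps
    every packet of a flow on the same path. This is an assumed design
    choice for the sketch.
    """
    digest = hashlib.sha256(flow_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(intermediates)
    return intermediates[index]


def vlb_route(src, dst, flow_id, intermediates):
    """Return the two-phase path: source -> intermediate -> destination."""
    mid = pick_intermediate(flow_id, intermediates)
    return [src, mid, dst]


def simulate_load(num_flows, intermediates, num_racks=100, seed=0):
    """Count how many flows land on each intermediate switch.

    Sources and destinations are hypothetical rack labels drawn at random.
    """
    rng = random.Random(seed)
    load = {name: 0 for name in intermediates}
    for i in range(num_flows):
        src = f"rack-{rng.randrange(num_racks)}"
        dst = f"rack-{rng.randrange(num_racks)}"
        path = vlb_route(src, dst, f"{src}->{dst}#{i}", intermediates)
        load[path[1]] += 1
    return load


if __name__ == "__main__":
    switches = ["int-0", "int-1", "int-2", "int-3"]
    print(vlb_route("rack-1", "rack-7", "rack-1->rack-7#0", switches))
    print(simulate_load(10_000, switches))
```

Running the simulation with a skewed choice of sources and destinations instead of a uniform one should leave the per-intermediate counts roughly equal, because the intermediate is chosen without looking at where the traffic comes from or goes.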