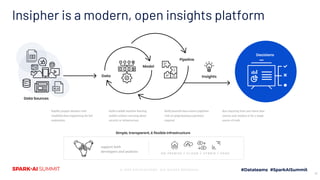

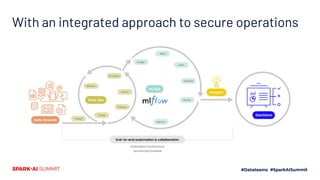

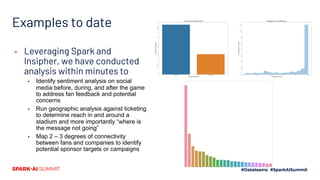

The document discusses how sports organizations can combine machine learning with sports analytics to enhance fan engagement, maximize sponsorship opportunities, and improve game and player analysis. Key contributors David Cunningham and Young Bang highlight how interconnected data sources can drive success, increase stadium attendance, and optimize business operations. The insights platform Insipher supports these efforts by simplifying data engineering and enabling scalable analytics that address fan feedback and identify profitability opportunities.