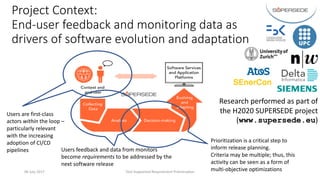

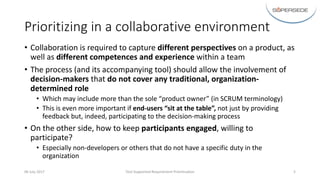

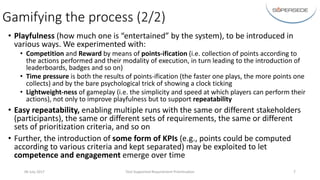

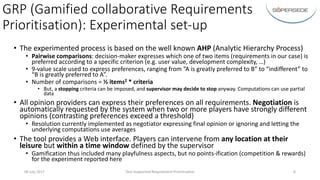

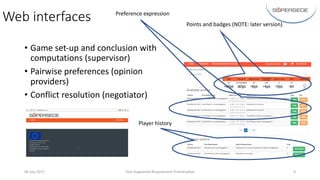

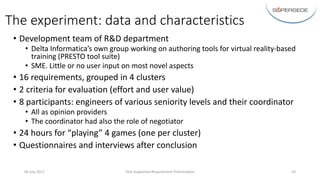

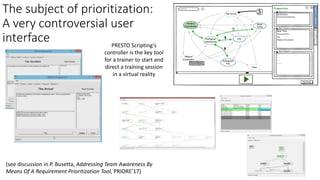

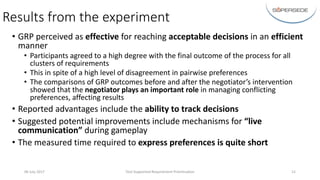

The document discusses a tool-supported process for collaborative requirements prioritization in software development, emphasizing stakeholder involvement and the value of diverse perspectives. It introduces gamification elements intended to increase engagement and flexibility during the decision-making sessions that are crucial for effective release planning. Experimental results indicate that the approach is effective, and feedback was positive on both the efficiency of the process and the role of a negotiator in managing conflicting preferences.