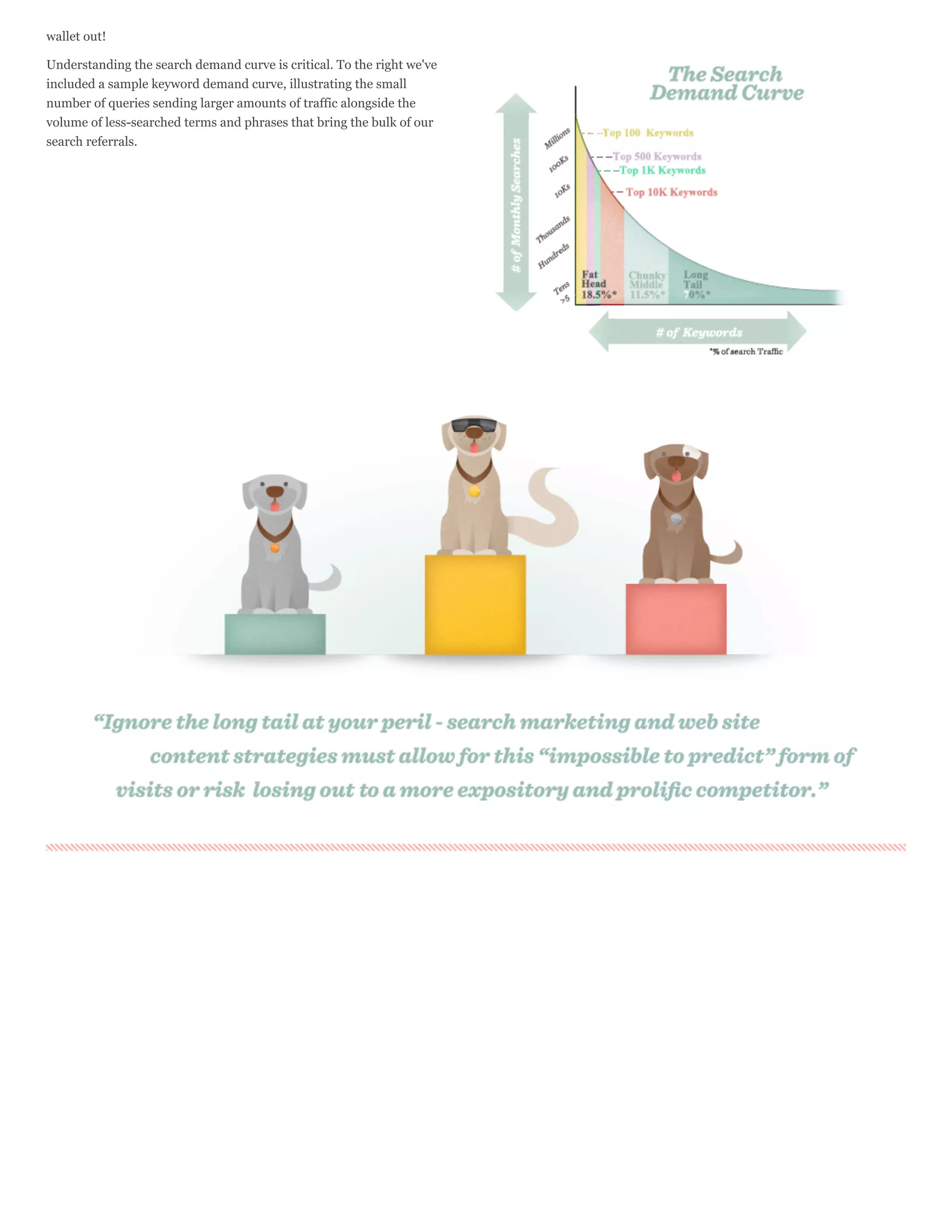

Search engines crawl billions of webpages to build an index and provide relevant search results. They follow links between pages to discover and index content efficiently, storing snippets of text and metadata in vast data centers. Complex algorithms rank results on more than 100 factors related to relevance and popularity, returning the most useful pages for a user's query within seconds. Search engine optimization (SEO) aims to understand these algorithms and improve a page's ranking through on-page and off-page techniques.

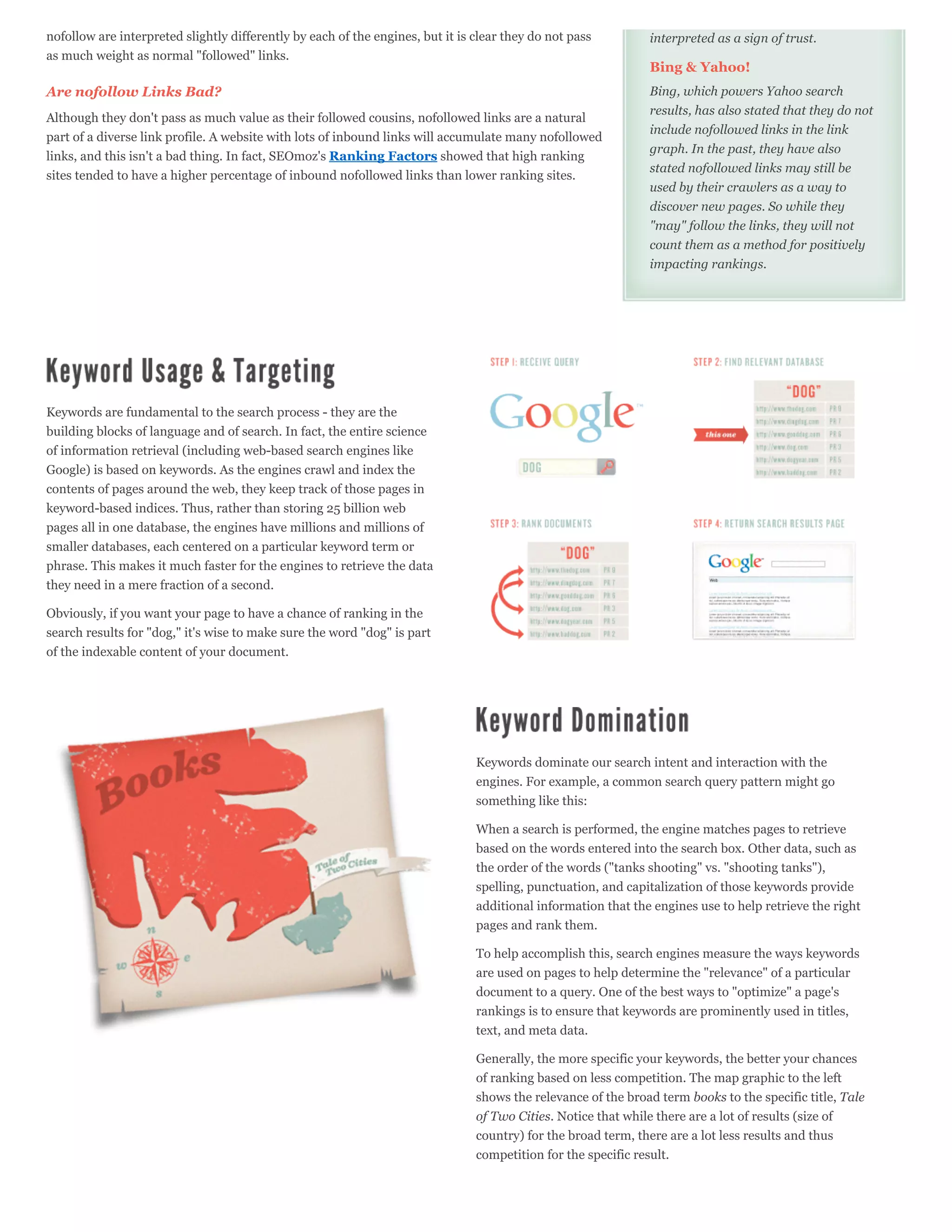

![Resources

Where do we get all of this knowledge about keyword demand and

keyword referrals? From research sources like these listed here:

Google Adwords’ Keyword Tool

Google Insights for Search

Google Trends Keyword Demand Prediction

Microsoft Advertising Intelligence

Wordtracker’s Free Basic Keyword Demand

Google's AdWords Keyword tool is a common starting point for SEO

keyword research. It not only suggests keywords and provides

estimated search volume, but also predicts the cost of running paid

campaigns for these terms. To determine volume for a particular

keyword, be sure to set the Match Type to [Exact] and look under

Local Monthly Searches. Remember that these represent total

searches. Depending on your ranking and click-through rate, the

actual number of visitors you achieve for these keywords will usually

be much lower.

Figure caption: Google’s AdWords Keyword Tool provides suggested keyword and volume data.

Other sources for keyword information exist, as do tools with more

advanced data. The SEOmoz blog category on Keyword Research

is an excellent place to start.

What are my chances of success?

In order to know which keywords to target, it's essential to not only

understand the demand for a given term or phrase, but also the

work required to achieve those rankings. If big brands take the top

10 results and you're just starting out on the web, the uphill battle for

rankings can take years of effort. This is why it's essential to

understand keyword difficulty.

Different tools around the web help provide this

information. One of these, SEOmoz’s own Keyword

Analysis Tool, does a good job collecting all of these

metrics and providing a comparative score for any given

search term or phrase.](https://image.slidesharecdn.com/seomoz-the-beginners-guide-to-seo-2012-120910111925-phpapp01/75/The-SEO-Guide-for-Beginners-31-2048.jpg)
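
The slide text notes that exact-match search volume is a total, and that the visitors you actually receive depend on your ranking and click-through rate. A minimal sketch of that arithmetic follows. The function name `estimate_monthly_visits` and the click-through rates in `ASSUMED_CTR_BY_POSITION` are hypothetical, chosen only for illustration; they are not figures from the guide or from any tool.

```python
def estimate_monthly_visits(exact_match_searches: int, click_through_rate: float) -> float:
    """Estimate monthly visits as total searches times the share of searchers who click."""
    if exact_match_searches < 0:
        raise ValueError("exact_match_searches must be non-negative")
    if not 0.0 <= click_through_rate <= 1.0:
        raise ValueError("click_through_rate must be between 0 and 1")
    return exact_match_searches * click_through_rate


# ASSUMPTION: illustrative click-through rates per ranking position.
# Real rates vary by query and should be measured, not taken from here.
ASSUMED_CTR_BY_POSITION = {1: 0.30, 5: 0.05, 10: 0.02}

if __name__ == "__main__":
    monthly_searches = 1000  # hypothetical exact-match volume for one keyword
    for position, ctr in ASSUMED_CTR_BY_POSITION.items():
        visits = estimate_monthly_visits(monthly_searches, ctr)
        print(f"position {position}: about {visits:.0f} visits per month")
```

Even under these made-up rates, the estimate for a lower ranking is a small fraction of the reported search volume, which is the point the slide makes about expected traffic.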