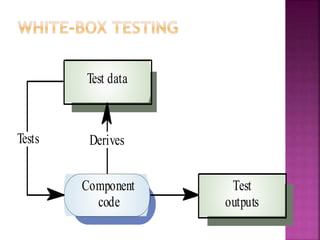

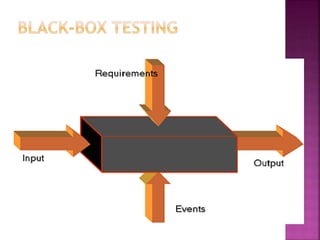

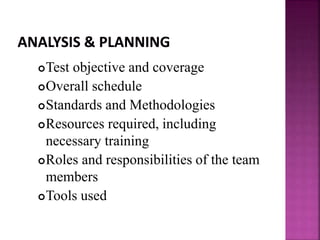

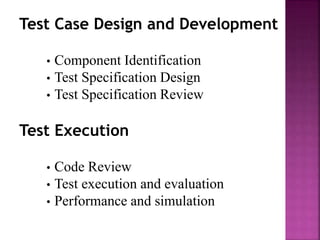

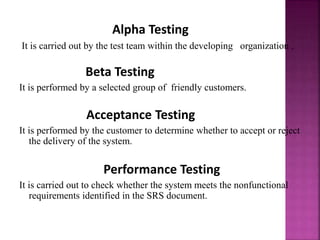

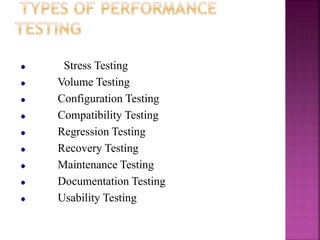

The document gives an overview of software testing: definitions of key terms, the objectives and goals of testing, testing methodologies and levels, and the typical phases of the software testing life cycle. It distinguishes between an error, a bug, a fault, and a failure. It also outlines testing approaches such as white-box and black-box testing, and covers the unit, integration, and system testing levels. Finally, it emphasizes that testing should be planned so that it is as effective and cost-efficient as possible.
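The terms above can be made concrete with a small sketch. It assumes the common usage in which an error is the human mistake, a fault (or bug) is the resulting flaw in the code, and a failure is the observable wrong behaviour at run time; the summary itself does not spell these definitions out. The functions `safe_divide`, `buggy_safe_divide`, and `failure_observed`, as well as the specification they follow, are hypothetical and exist only for illustration.

```python
def safe_divide(a, b):
    """Hypothetical spec: return a / b, or None when b is zero."""
    if b == 0:
        return None
    return a / b


def buggy_safe_divide(a, b):
    """Same spec, but with a seeded fault: the zero check was forgotten.

    Forgetting the check is the programmer's error; the missing
    branch is the fault (bug) that now lives in the code.
    """
    return a / b


def failure_observed(func, a, b, expected):
    """Return True if func(a, b) deviates from the expected result.

    A deviation, including an unexpected exception, is a failure.
    """
    try:
        return func(a, b) != expected
    except ZeroDivisionError:
        return True


# Black-box cases: derived from the spec alone, without reading the code.
BLACK_BOX_CASES = [(6, 3, 2.0), (-9, 3, -3.0), (1, 0, None)]

# White-box cases: chosen by reading safe_divide so that each branch
# (b == 0 and b != 0) is executed at least once.
WHITE_BOX_CASES = [(5, 0, None), (5, 2, 2.5)]


def run_unit_tests(func, cases):
    """Unit-level check: return the cases on which func fails."""
    return [(a, b) for a, b, expected in cases
            if failure_observed(func, a, b, expected)]
```

Calling `run_unit_tests(buggy_safe_divide, BLACK_BOX_CASES)` reports only the zero-divisor case. The fault is present on every call, but it causes a failure only for inputs that reach it, which is one reason test cases need to be planned rather than picked at random.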