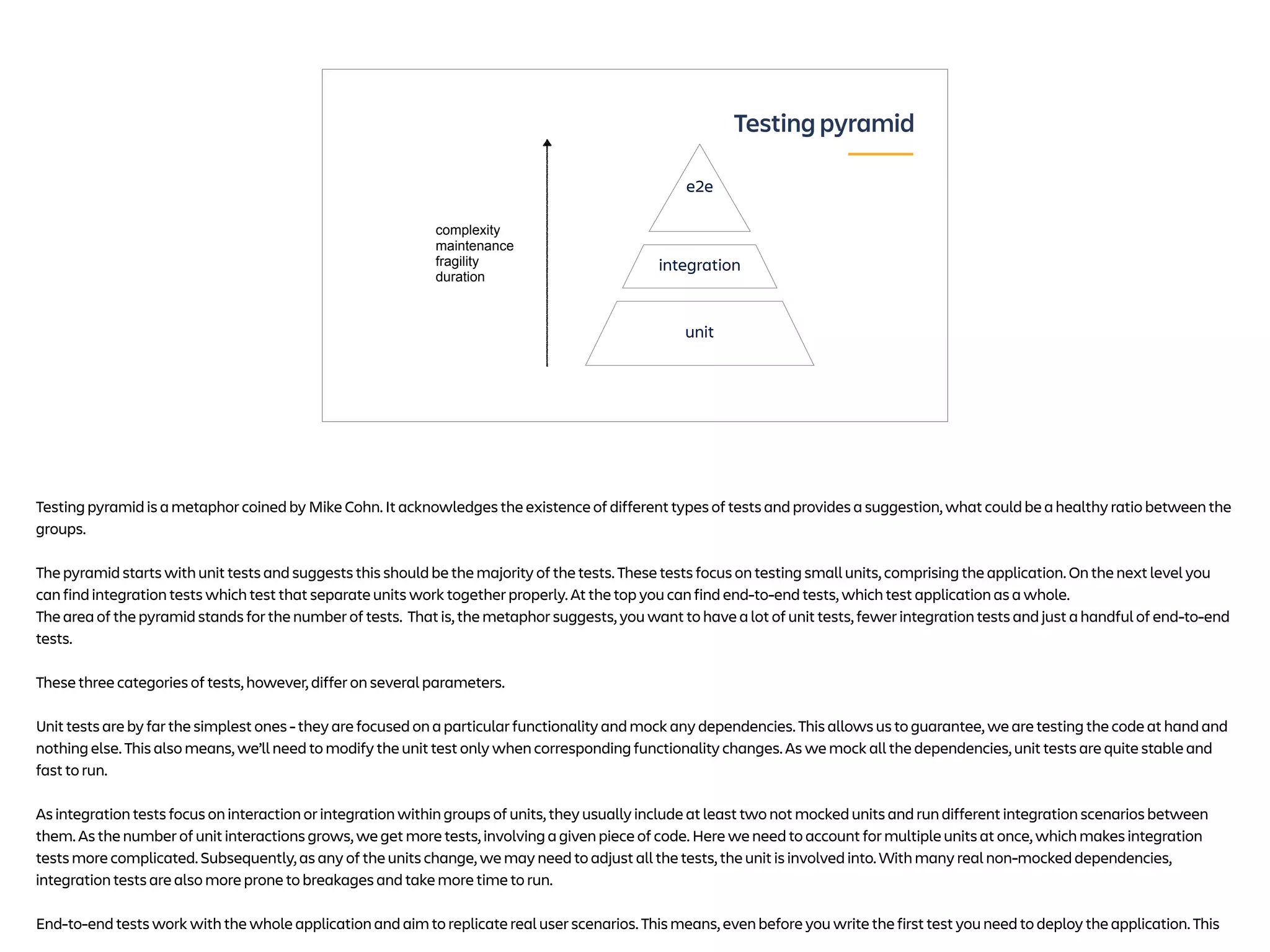

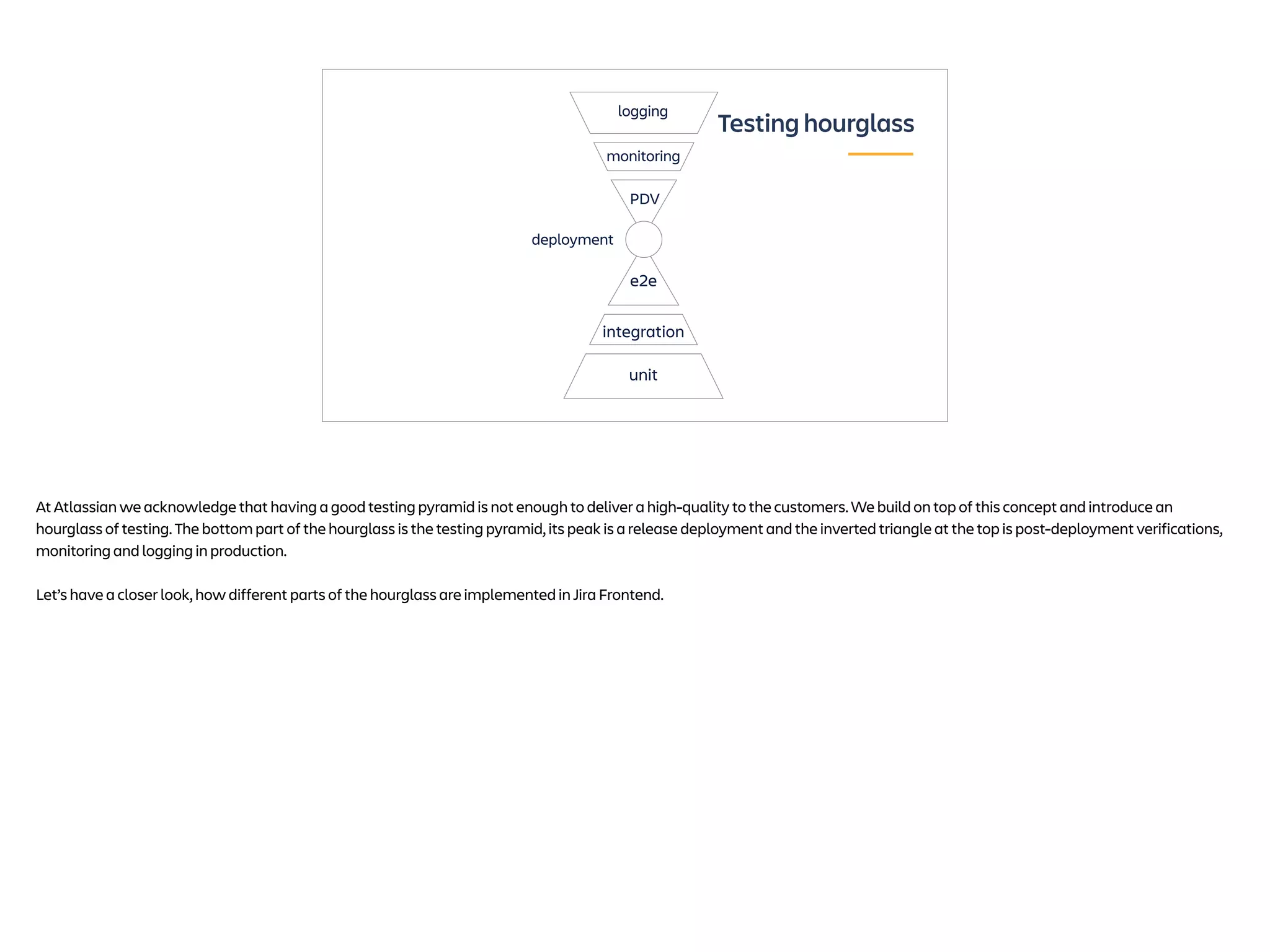

Alexey Shpakov presents on testing in Jira Frontend. He discusses the testing pyramid of unit, integration, and end-to-end tests, then introduces the "testing hourglass", which adds deployment and post-deployment verification to the pyramid. For each type of test he covers key practices, such as using feature flags, monitoring for flaky tests, and rolling changes out gradually to reduce risk.
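
To make the idea of a gradual rollout behind a feature flag concrete, here is a minimal sketch of one common approach: each user is hashed into a stable bucket from 0 to 99, and the flag is on for users whose bucket falls below the current rollout percentage. This is an illustration only, not the mechanism used in Jira Frontend; the names `fnv1a`, `isEnabled`, `flagName`, `userId`, and `rolloutPercent` are all hypothetical.

```typescript
// Hypothetical sketch of a percentage-based feature flag.
// None of these names come from the talk or from any Atlassian API.

/** Deterministic FNV-1a-style 32-bit hash over the string's UTF-16 code units. */
function fnv1a(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    // Multiply by the FNV prime, keeping the result as an unsigned 32-bit integer.
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash >>> 0;
}

/**
 * Returns true if the flag is on for this user.
 * rolloutPercent is the share of users (0 to 100) who should see the change.
 */
function isEnabled(flagName: string, userId: string, rolloutPercent: number): boolean {
  if (rolloutPercent <= 0) return false;
  if (rolloutPercent >= 100) return true;
  // Including the flag name means different flags bucket users independently.
  const bucket = fnv1a(`${flagName}:${userId}`) % 100;
  return bucket < rolloutPercent;
}
```

Because a user's bucket never changes, raising `rolloutPercent` only adds users to the enabled group, and setting it back to 0 turns the change off for everyone without a new deployment.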