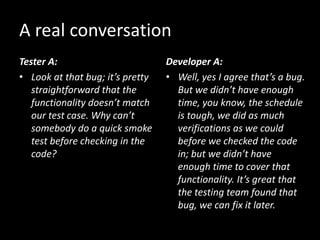

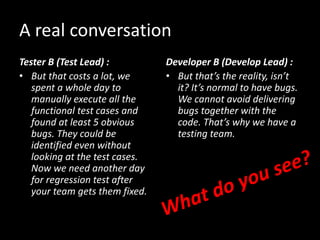

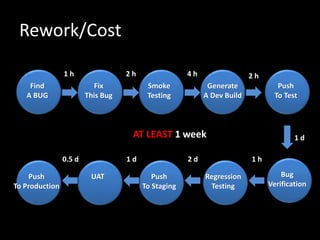

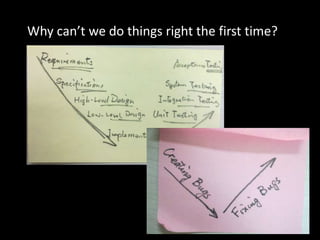

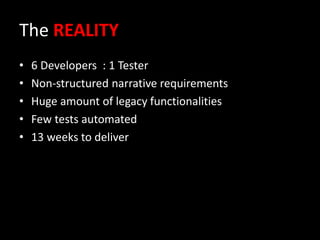

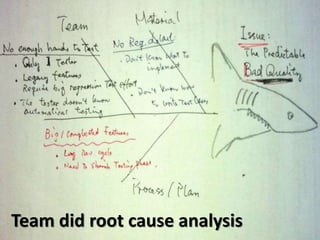

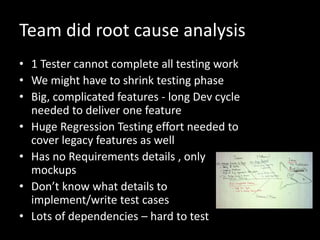

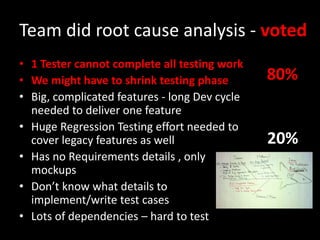

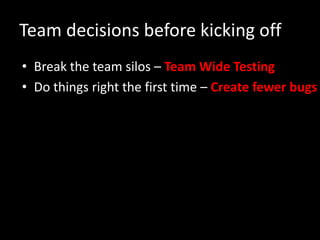

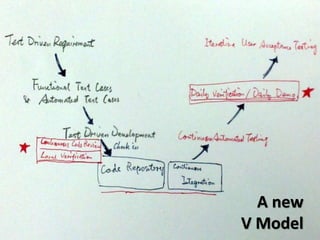

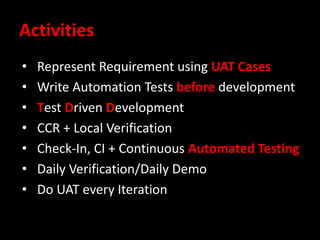

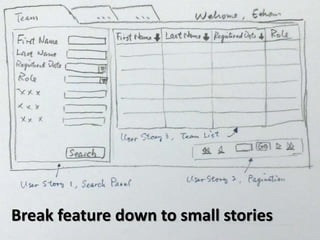

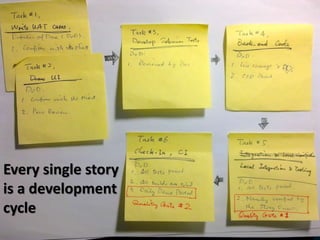

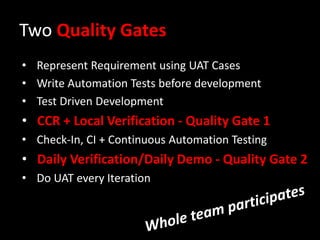

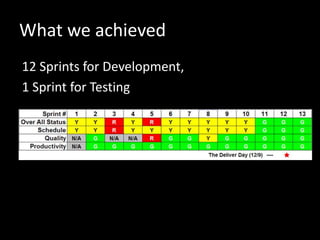

This document discusses implementing team-wide testing to improve quality and reduce bugs. It describes current problems, such as developers feeling pressure to deliver code quickly without proper testing. As a result, testers find bugs late, and time is wasted on rework. The team analyzed the root causes, including a lack of test automation and a shortage of testers, and decided to break down the silos between developers and testers. The new process involves test-driven development, continuous testing, and demos at quality gates. Although not every user story was completed, clients found no bugs in the stories that were delivered, which suggests the new process improved quality.