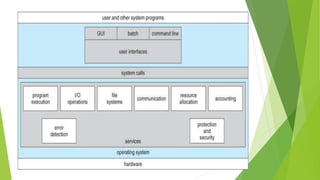

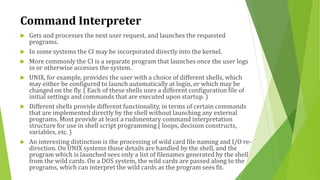

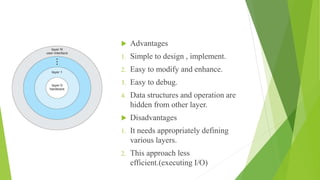

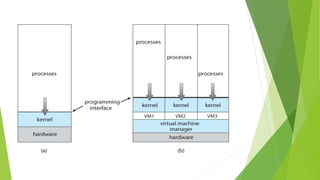

This chapter covers operating system structure and design. It examines structural approaches such as layered designs, microkernels, and modules, and presents the basic structures of popular operating systems. Key topics include system services, system calls, system programs, and user interfaces such as command interpreters and graphical user interfaces (GUIs). UNIX, Windows, and Mac OS serve as examples throughout.