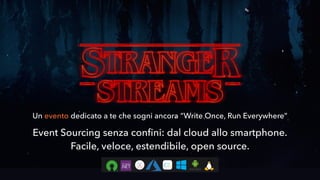

Stranger Streams | NStore @ DevMarche

DevMarche event on Event Sourcing (DDD + Stream Processing). Presentation of the new NStore engine for implementing a cross-platform, multi-database Event Sourcing system.

Recommended

Geographic information websites for water management.

Talk I gave at the Dutch Python user group meeting of 2011-02-16.

Aws cloud big data trends

A subjective comparison of the different Big Data ecosystems through modern development principles

Cloud computing

What is cloud computing, what are its characteristics, and why does your organization need it more than ever?

FIWARE Global Summit - IoT Virtualization for Platform Interoperability

Presentation by Andrea Detti

Professor, University of Rome “Tor Vergata”

FIWARE Global Summit

23-24 October 2019 - Berlin, Germany

Zentral QueryCon 2018

Learn about the core functions and architecture of Zentral. Zentral is an open source hub to process event streams from osquery and other sources into the ElasticStack. Besides support for distinct osquery features like file carving, Zentral provides numerous integrations for inventory acquisition and alerting.

MongoDB IoT City Tour LONDON: Managing the Database Complexity, by Arthur Vie...

Arthur Viegers, Senior Solutions Architect, MongoDB.

The value of the fast growing class of NoSQL databases is the ability to handle high velocity and volumes of data while enabling greater agility with dynamic schemas. MongoDB gives you those benefits while also providing a rich querying capability and a document model for developer productivity. Arthur Viegers outlines the reasons for MongoDB's popularity in IoT applications and how you can leverage the core concepts of NoSQL to build robust and highly scalable IoT applications.

Google cloud Study Essentials

This is a getting-started guide for Google Cloud Platform. It includes a basic introduction to the cloud.

Implementing Real-Time IoT Stream Processing in Azure

So, you have IoT Devices connected to IoT Hub sending telemetry data into the Microsoft Azure cloud. Now what? This session will take you through setting up real-time stream processing of IoT data. We’ll look at integrating services like Azure Stream Analytics, Azure Functions, and Cosmos DB to build a highly scalable stream processing backend for any IoT solution. You’ll leave this session better prepared to handle real-time IoT stream processing in Azure; plus you’ll do it with less code by utilizing serverless Azure Functions.

IOT Paris Seminar 2015 - Storage Challenges in IOT

Why your 'Dad's database' won't work for the Internet of Things.

[WSO2Con USA 2018] Microservices, Containers, and Beyond

This slide deck discusses what's next in this highly agile, massively distributed environment. It will focus on fine-tuned DevOps processes, governance, and observability in a massively distributed container native microservices platform.

Elasticsearch and the Database Market

The number of databases and database technologies has grown considerably. Databases are also becoming more and more application specific. Neither end of the spectrum is easy to manage. That's how database-as-a-service (DBaaS) can help. You can limit the number of technologies and still be flexible.

Introducing MagnetoDB, a key-value storage service for OpenStack

Introducing MagnetoDB, NoSQL database as a service for OpenStack. MagnetoDB acts as a key-value store, is tightly integrated with OpenStack, and yet is compatible with the Amazon DynamoDB API, and can be used as a drop-in replacement.

An overview of BigQuery

A brief introduction to Google BigQuery concepts with learning resources. Understand the benefits, challenges, and core concepts of BigQuery.

Fact oriented modeling

As presented at "The Data Vault Modelling & Data Governance Conference" 2019 Belgium

Aleksei Udatšnõi – Crunching thousands of events per second in nearly real time

Imagine you have a product that generates up to 10 thousand events per second, or around 1 billion events per day. This live stream of data needs to be tracked, processed, and presented to end users in a visually appealing way. The solution needs to be integrated into a traditional web application. That is the real use case at Softonic. In this talk we will show how it was solved there. We use a stack of Big Data technologies to process and store a live stream of data and present the results to users in nearly real time. This real-life solution is built around the Hadoop ecosystem and includes Flume, Hive, Oozie, and Impala. We will show how to store and query such volumes of data using a NoSQL database and how to build a scalable end-user web application on a nearly real-time data feed.

Cloud Capacity Planning Tooling - South Bay SRE Meetup Aug-09-2016

Sebastien de Larquier from our Data Analytics and Engineering team discusses the tools and associated methodology we apply to tackle our cloud capacity planning needs at Netflix.

XenApp on Google Cloud Deployment Guide

Deploying Citrix Cloud on the Google Cloud Platform (GCP) provides agility in provisioning applications and desktops.

Deploying Citrix XenApp & XenDesktop Service on Google Cloud Platform

Deploying the Citrix XenApp & XenDesktop Service on the Google Cloud Platform.

Dynamic Scheduling - Federated clusters in mesos

An approach to scheduling batch loads across multiple globally distributed datacenters using Mesos. Includes maths ;)

Always on! Or not?

My slides about creating websites that remain usable even when you are not online, from Web Storage to Service Workers.

Presented at the Mobiletech Conference in Munich, March 2017.

Create a serverless architecture for data collection with Python and AWS

The talk illustrates a real-world example of how to collect data from your web, mobile, server and cloud apps and then send them to third party services and tools or load them into your data warehouse.

The data collection pipeline is integrated with multiple AWS services, such as Kinesis Firehose, Lambda functions, and Step Functions; Python is used to write each module. The data workflow is fully described, pointing out how to store backups correctly, manage conditional routing (in order to allow or discard data for specific services), implement a retry strategy on failure, and finally compare performance and costs for each module.

A serverless IoT Story From Design to Production and Monitoring

The slides for our talk at Architecture Next 2019, held on 30/05/2019.

The event was organized by CodeValue.

A serverless IoT story from design to production and monitoring

Alex Pshul & Moaid Hathot Senior Consultants @CodeValue, in their session from "Architecture Next 19"

Spark Streaming - Meetup Data Analysis

Meetup streaming data loaded and analysed successfully.

Streaming events data is loaded through Spark Streaming using custom receivers and handled as asynchronous HTTP requests.

History events and RSVP data are analysed through Spark MLlib to build group member recommendations based on a K-means clustering model.

Code: https://github.com/ssushmanth/meetup-stream

Securing TodoMVC Using the Web Cryptography API

The open source TodoMVC project implements a Todo application using popular JavaScript MV* frameworks. Some of the implementations add support for compile-to-JavaScript languages, module loaders, and real-time backends. This presentation will demonstrate a TodoMVC implementation which adds support for the forthcoming W3C Web Cryptography API, as well as review some key cryptographic concepts and definitions.

Instead of storing the Todo list as plaintext in localStorage, this "secure" TodoMVC implementation encrypts Todos using a password derived key. The PBKDF2 algorithm is used for the deriveKey operation, with getRandomValues generating a cryptographically random salt. The importKey method sets up usage of AES-CBC for both encrypt and decrypt operations. The final solution helps address item "A6-Sensitive Data Exposure" from the OWASP Top 10.

With the Web Cryptography API being a recommendation in 2014, any Q&A time will likely include browser implementations and limitations, and whether JavaScript cryptography adds any value.

Similar to Stranger Streams | NStore @ DevMarche

Streaming Analytics for Financial Enterprises

Streaming Analytics (or Fast Data processing) is becoming an increasingly popular subject in the financial sector. There are two main reasons for this development. First, more and more data has to be analyzed in real time to prevent fraud; all transactions processed by banks have to pass an ever-growing number of tests to make sure that the money is coming from and going to legitimate sources. Second, customers want frictionless mobile experiences while managing their money, such as immediate notifications and personal advice based on their online behavior and other users’ actions.

A typical streaming analytics solution follows a ‘pipes and filters’ pattern that consists of three main steps: detecting patterns on raw event data (Complex Event Processing), evaluating the outcomes with the aid of business rules and machine learning algorithms, and deciding on the next action. At the core of this architecture is the execution of predictive models that operate on enormous amounts of never-ending data streams.

In this talk, I’ll present an architecture for streaming analytics solutions that covers many use cases that follow this pattern: actionable insights, fraud detection, log parsing, traffic analysis, factory data, the IoT, and others. I’ll go through a few architecture challenges that arise when dealing with streaming data, such as latency issues, event time vs server time, and exactly-once processing. The solution is built on the KISSS stack: Kafka, Ignite, and Spark Structured Streaming. The solution is open source and available on GitHub.

Spark on Azure HDInsight - spark meetup seattle

Since HDInsight launched Spark clusters last year, the HDInsight Spark team’s mission has been to make Spark easy to use and production-ready. In the process, we have explored many open source technologies such as Livy, Jupyter, and Zeppelin. In this talk, we will demo top customer features, deep dive into the HDInsight Spark architecture, and share learnings from building the perfect cluster.

Speakers: Judy Nash and Lin Chan

Spring in the Cloud - using Spring with Cloud Foundry

This talk is about using the power of the Spring framework with Cloud Foundry, the open source PaaS (platform-as-a-service) from VMware. It is a somewhat deeper introduction than my other Spring and Cloud Foundry talk, so I've kept both, while encouraging people to check this one out first.

Introduction to WSO2 Data Analytics Platform

WSO2 has had several analytics products (or Big Data products, if you prefer the term) for some time: WSO2 BAM and WSO2 CEP. We have added WSO2 Machine Learner, a product to create, evaluate, and deploy predictive models, and renamed WSO2 BAM to WSO2 DAS (Data Analytics Server).

The platform lets you publish (collect) data once and then process it in batch (Spark) and in real time (CEP), search the data (Lucene), and build machine learning models.

This post describes how all of those fit into a single story.

For more information, see https://iwringer.wordpress.com/2015/03/18/introducing-wso2-analytics-platform-note-for-architects/

Working with data using Azure Functions.pdf

Azure Functions are great for a wide range of scenarios, including working with data on a transactional or event-driven basis. In this session, we'll look at how you can interact with Azure SQL, Cosmos DB, Event Hubs, and more so you can see how you can take a lightweight but code-first approach to building APIs, integrations, ETL, and maintenance routines.

WSO2Con ASIA 2016: WSO2 Analytics Platform: The One Stop Shop for All Your Da...

Today’s highly connected world is flooding businesses with big and fast-moving data. The ability to trawl this data ocean and identify actionable insights can deliver a competitive advantage to any organization. The WSO2 Analytics Platform enables businesses to do just that by providing batch, real-time, interactive and predictive analysis capabilities all in one place.

In this tutorial we will:

- Plug in the WSO2 Analytics Platform to some common business use cases
- Showcase the numerous capabilities of the platform
- Demonstrate how to collect data, analyze, predict and communicate effectively

Azure Data Factory for Redmond SQL PASS UG Sept 2018

ADF V2 inside and out, including new Visual Data Flow preview feature

Raquel Guimaraes- Third party infrastructure as code

While implementing cloud Infrastructure as Code you might have come across the problem of dealing with third-party resources. This is most common in complex environments where most of the resources live in a cloud provider (GCP or AWS for example) and there are some SaaS solutions to integrate with (Datadog and Pingdom for example). In this talk we will expose the problem and explain a solution that is currently being used by one of our key clients in Spain.

RHOSP6 DELL Summit - OpenStack

DELL Summit presentation - OpenStack - RHOSP6 (Juno)

Solutions Summit LATAM 2015

More from Andrea Balducci

Agile Industry 4.0 - IoT Day 2019

This talk is about a real project for an Industry 4.0 assembly line for electric vehicle part manufacturing and what we learned in the making. We started with no prior knowledge of machine communication protocols and M2M integration patterns and ended with a fully clustered Supervision and Control information system. We had to fight with Purchase Managers buying the wrong stuff, incorrect documentation, last-minute changes on the manufacturing stations, last-second software specification changes, IT Managers, manufacturing and logistics operators screaming "don't touch that switch", etc. We're not survivors: all went as we planned in "the agile way", with the project delivered on time and operational from day 1.

Inception

Talk for DevOps@Work 2018 - Rome.

Small teams delivering enterprise grade projects with SCRUM.

Event based modelling and prototyping

DevMarche event at Microsoft House, 7 April 2017.

From discovery to implementation of an application domain using EventStorming, Modellathon, and Event Sourcing.

Storage dei dati con MongoDB

Overview of MongoDB features and installation topologies for the DevMarche workshop on the MEAN stack.

Open domus 2016

Presentation at OpenDomus of our modeling process using CQRS+ES+DDD with EventStorming.

Alam aeki 2015

Techniques and tools to speed up the software development cycle in enterprise settings. A summary of what we have learned so far while building Jarvis.

[Alam aeki] Guida illustrata alla modellazione di un dominio con Event Sourci...

Illustrated guide to modeling a domain with Event Sourcing & Event Storming.

DDD Saturdaty 2014 @ Pordenone

Event Sourcing with NEventStore

Technical excerpt from the talk http://lanyrd.com/2014/cdays14/scxbbf/ given jointly with @ziobrando

Asp.Net MVC 2 :: VS 2010 Community Tour

Presentation of MVC2 for the Visual Studio 2010 launch community tour. Perugia stop.

DotNetUmbria + DotNetMarche

Recently uploaded

Accelerate your Kubernetes clusters with Varnish Caching

A presentation about the usage and availability of Varnish on Kubernetes. This talk explores the capabilities of Varnish caching and shows how to use the Varnish Helm chart to deploy it to Kubernetes.

This presentation was delivered at K8SUG Singapore. See https://feryn.eu/presentations/accelerate-your-kubernetes-clusters-with-varnish-caching-k8sug-singapore-28-2024 for more details.

ODC, Data Fabric and Architecture User Group

Let's dive deeper into the world of ODC! Ricardo Alves (OutSystems) will join us to tell all about the new Data Fabric. After that, Sezen de Bruijn (OutSystems) will get into the details on how to best design a sturdy architecture within ODC.

Connector Corner: Automate dynamic content and events by pushing a button

Here is something new! In our next Connector Corner webinar, we will demonstrate how you can use a single workflow to:

Create a campaign using Mailchimp with merge tags/fields

Send an interactive Slack channel message (using buttons)

Have the message received by managers and peers along with a test email for review

But there’s more:

In a second workflow supporting the same use case, you’ll see:

Your campaign sent to target colleagues for approval

If the “Approve” button is clicked, a Jira/Zendesk ticket is created for the marketing design team

But—if the “Reject” button is pushed, colleagues will be alerted via Slack message

Join us to learn more about this new, human-in-the-loop capability, brought to you by Integration Service connectors.

And...

Speakers:

Akshay Agnihotri, Product Manager

Charlie Greenberg, Host

To Graph or Not to Graph Knowledge Graph Architectures and LLMs

Reflecting on new architectures for knowledge-based systems in light of generative AI

UiPath Test Automation using UiPath Test Suite series, part 4

Welcome to UiPath Test Automation using UiPath Test Suite series part 4. In this session, we will cover Test Manager overview along with SAP heatmap.

The UiPath Test Manager overview with SAP heatmap webinar offers a concise yet comprehensive exploration of the role of a Test Manager within SAP environments, coupled with the utilization of heatmaps for effective testing strategies.

Stranger Streams | NStore @ DevMarche

- 1. Event Sourcing without borders: from the cloud to the smartphone. Easy, fast, extensible, open source. An event dedicated to you who still dream of "Write Once, Run Everywhere"

- 4. NSTORE .NET DATA STREAMING LIBRARY

- 5. NSTORE - ARCHITECTURE LAYOUT: PERSISTENCE, STREAMS, SNAPSHOTS, DOMAIN, PROCESSING

- 7. await stream.AppendAsync(new ItemPurchased { ItemId = "ABCC", Quantity = 43, TotalPrice = 1203.74m });

- 9. public class Sum { public decimal Total { get; private set; } private void On(ItemPurchased i) { this.Total += i.TotalPrice; } } var result = await stream.Fold().RunAsync<Sum>();

- 11. public class Room : Aggregate<RoomState> { public void EnableBookings() { if(!this.State.BookingsEnabled) Emit(new BookingsEnabled(this.Id)); } public void AddBooking(DateRange dates) { if (State.IsAvailableOn(dates)){ Emit(new RoomBooked(this.Id, dates)); } else { Emit(new BookingFailed(this.Id, dates)); } } }

- 12. public class RoomState { private IList<DateRange> _reservations = new List<DateRange>(); public bool BookingsEnabled { get; private set; } private void On(BookingsEnabled e) { this.BookingsEnabled = true; } private void On(RoomBooked e) { _reservations.Add(e.Dates); } public bool IsAvailableOn(DateRange range) { return !_reservations.Any(range.Overlaps); } }

- 13. SCALABLE

- 14. MULTIPLATFORM
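
The fold on slide 9 and the `RoomState` on slide 12 both rely on the same convention: a state object exposes private `On(SomeEvent)` methods, and replaying a stream means calling the matching `On` for each event in order. The sketch below shows one way such a fold could work over a plain in-memory list. It is not NStore's implementation: `FoldSketch.Fold` is a hypothetical helper that uses reflection, the `ItemPurchased` property types are assumed (`TotalPrice` is taken to be `decimal` so it can be added to `Sum.Total`), and the second event is made up for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Reflection;

// Event shape from slide 7; property types are assumptions.
public class ItemPurchased
{
    public string ItemId { get; set; } = "";
    public int Quantity { get; set; }
    public decimal TotalPrice { get; set; }
}

// State from slide 9: accumulates the total price of all purchases.
public class Sum
{
    public decimal Total { get; private set; }

    private void On(ItemPurchased i)
    {
        this.Total += i.TotalPrice;
    }
}

public static class FoldSketch
{
    // Hypothetical helper (not part of NStore): replays events in order,
    // calling the On(<event type>) method of the state when one exists.
    public static TState Fold<TState>(IEnumerable<object> events)
        where TState : class, new()
    {
        var state = new TState();
        foreach (var e in events)
        {
            MethodInfo handler = typeof(TState).GetMethod(
                "On",
                BindingFlags.Instance | BindingFlags.Public | BindingFlags.NonPublic,
                null,
                new[] { e.GetType() },
                null);

            // Events the state does not handle are skipped.
            handler?.Invoke(state, new[] { e });
        }
        return state;
    }
}

public static class Program
{
    public static void Main()
    {
        var events = new object[]
        {
            new ItemPurchased { ItemId = "ABCC", Quantity = 43, TotalPrice = 1203.74m },
            new ItemPurchased { ItemId = "XYZ", Quantity = 1, TotalPrice = 50.00m }
        };

        Sum result = FoldSketch.Fold<Sum>(events);

        // result.Total is 1203.74m + 50.00m = 1253.74m
        Console.WriteLine(result.Total);
    }
}
```

Because the helper only looks for `On` overloads, the same call shape would also rebuild `RoomState` from `BookingsEnabled` and `RoomBooked` events, which is what lets the `Room` aggregate check `IsAvailableOn` before emitting a new event.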