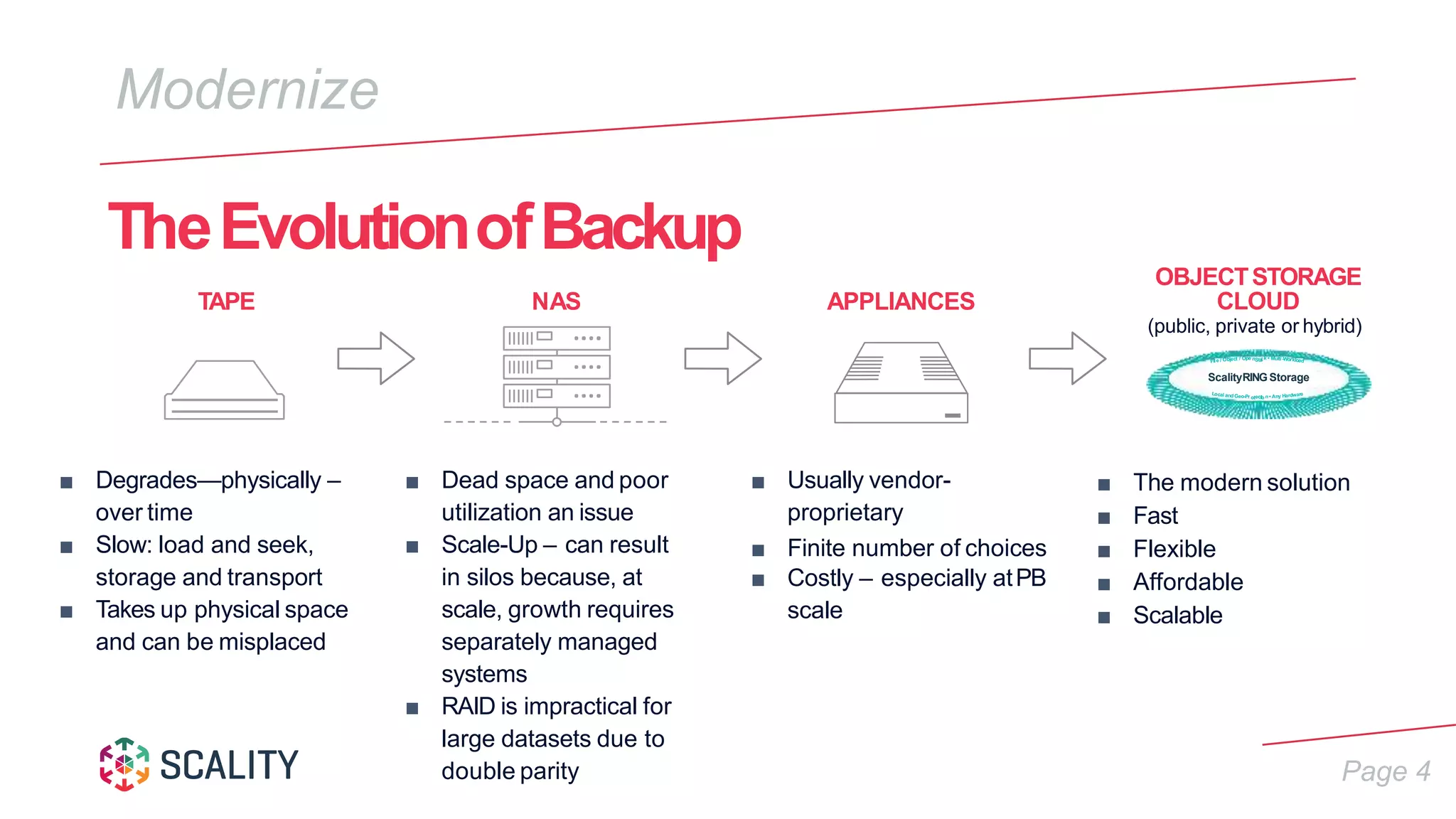

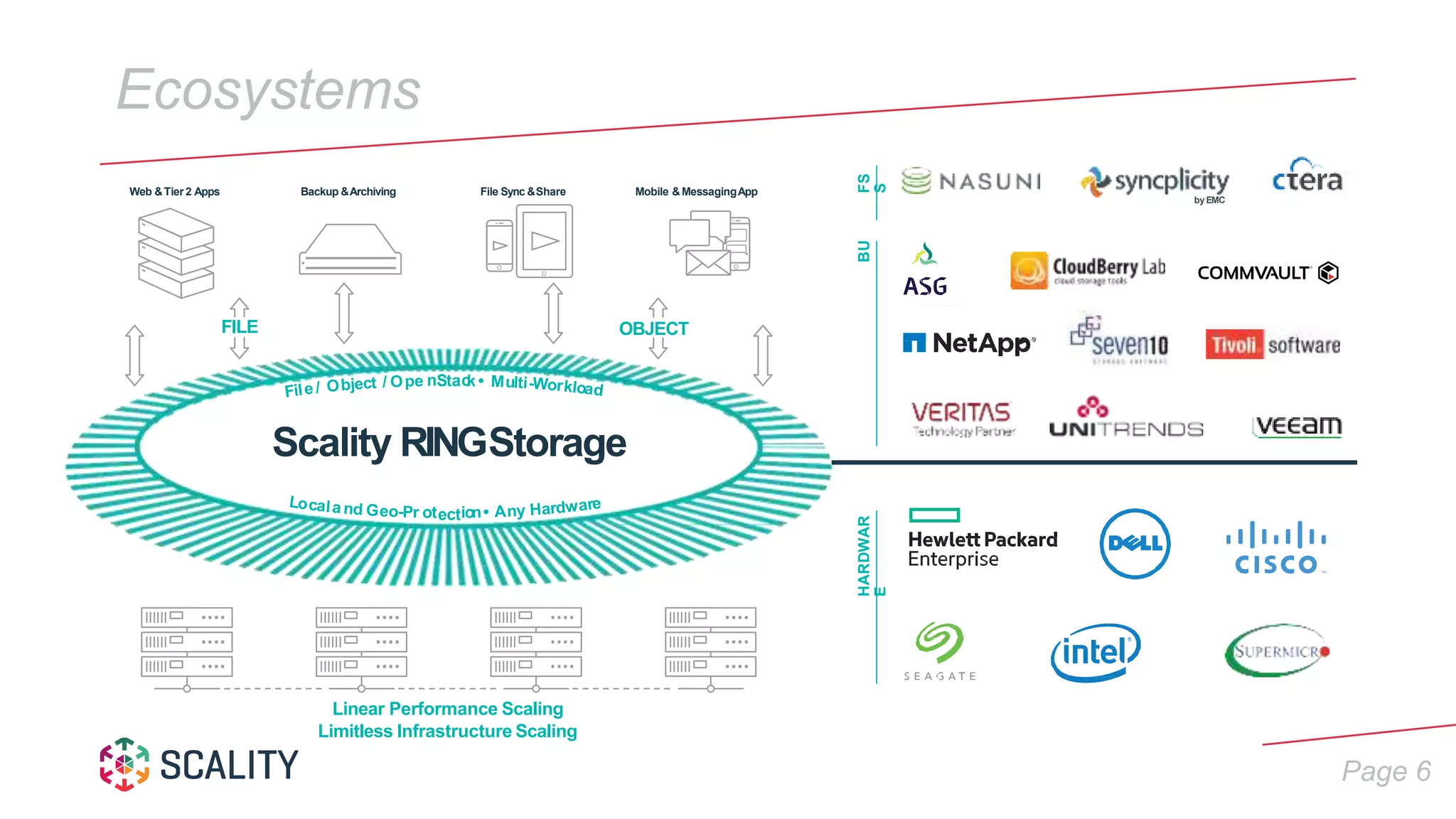

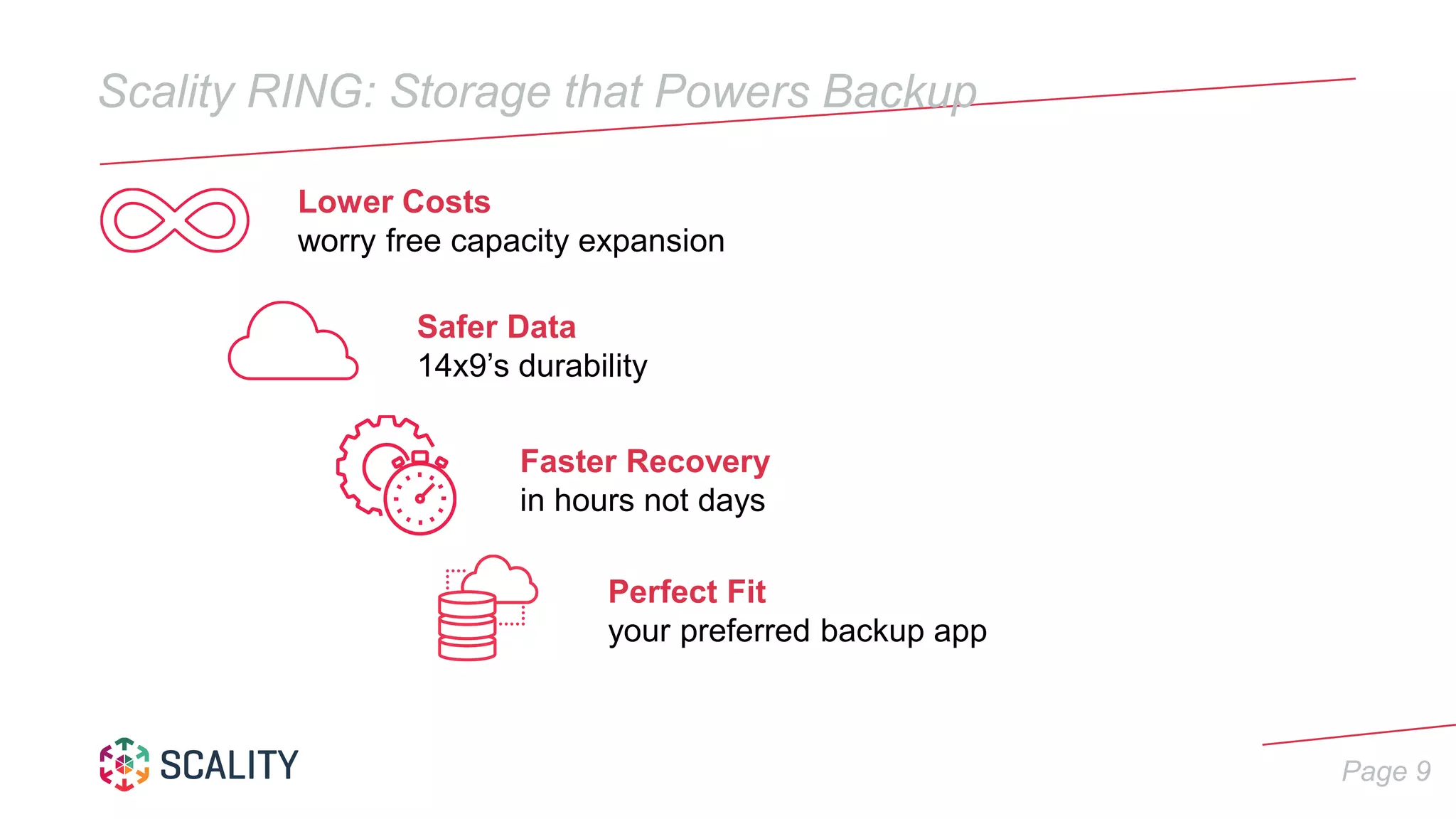

Scality offers a storage solution for enterprise backup that promises significant efficiency and cost benefits, including up to 90% lower costs and 100% availability. Its Scality RING technology is scalable and flexible, handles large datasets without vendor lock-in, and delivers faster recovery times and high durability. This modern approach to data backup addresses the limitations of traditional methods and provides a secure, reliable storage environment.