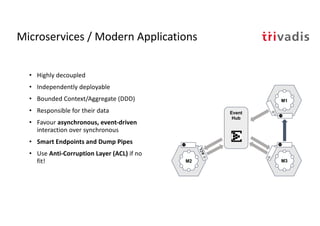

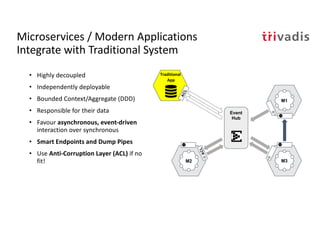

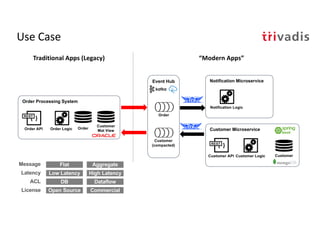

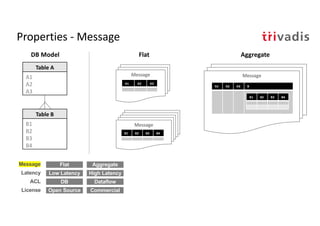

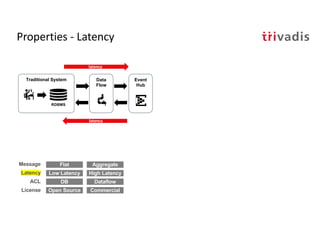

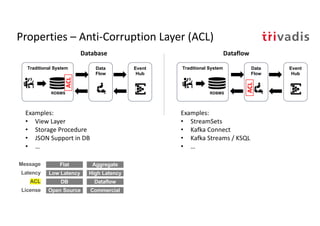

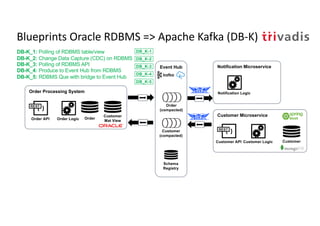

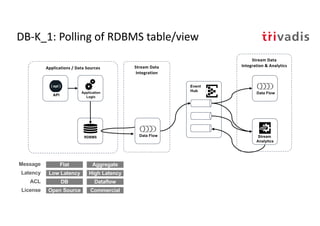

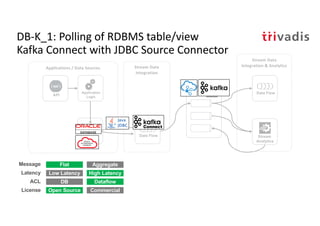

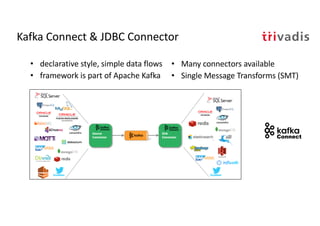

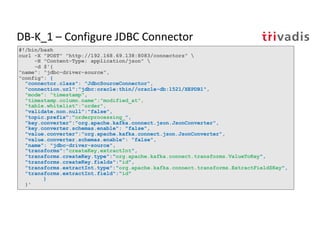

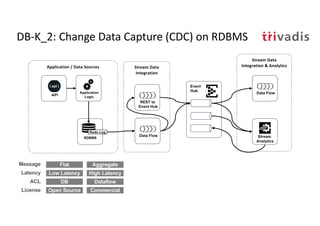

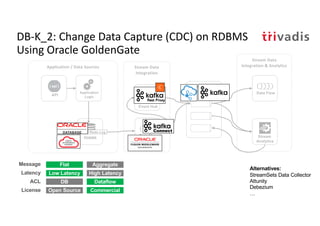

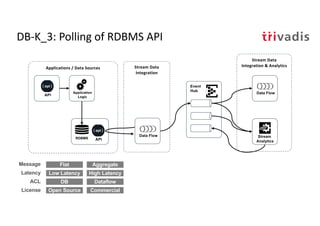

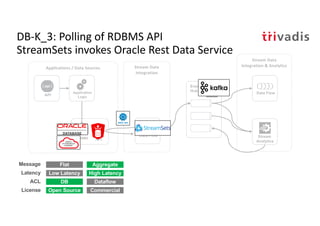

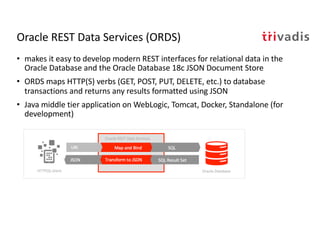

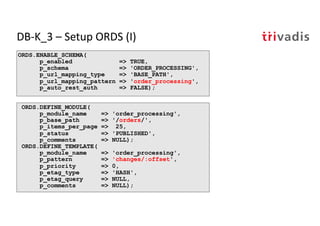

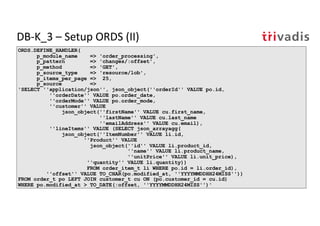

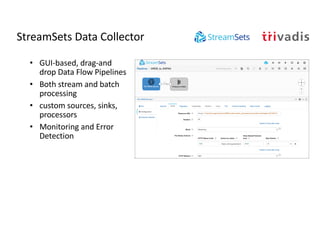

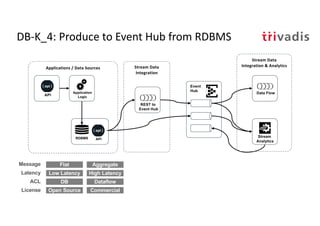

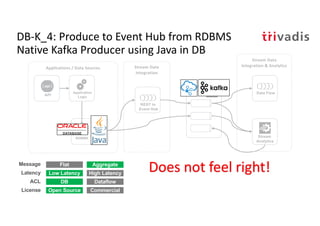

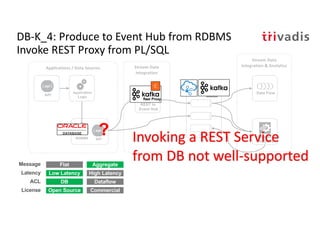

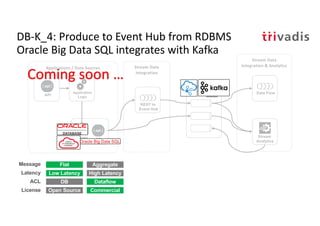

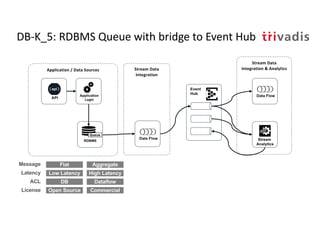

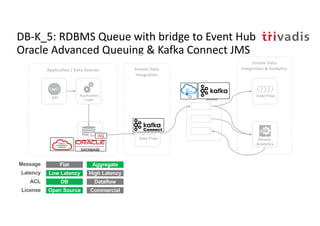

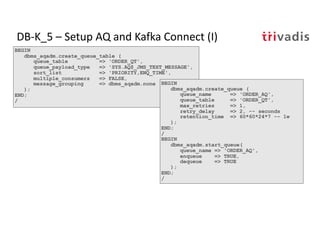

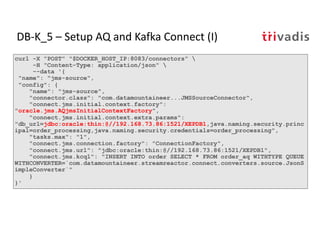

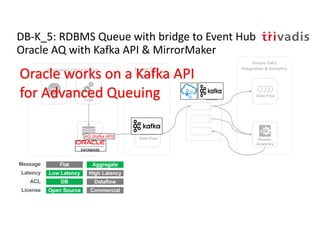

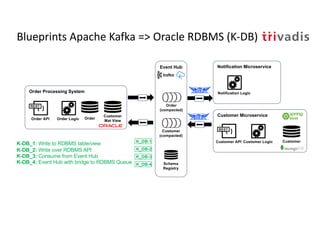

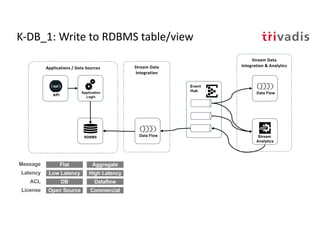

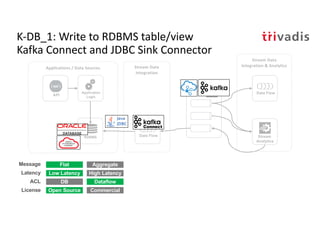

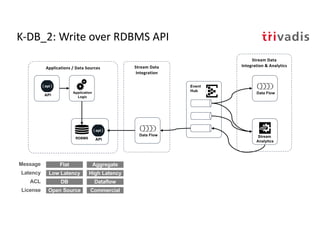

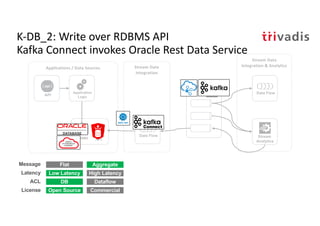

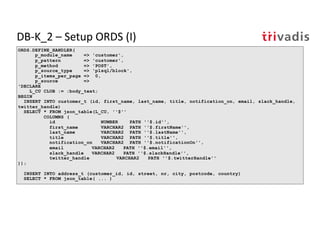

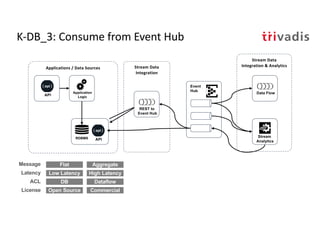

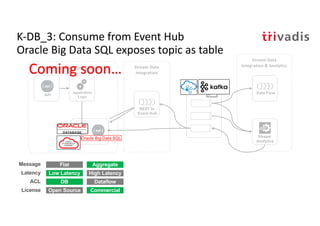

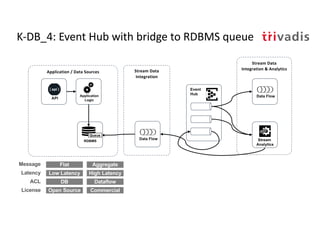

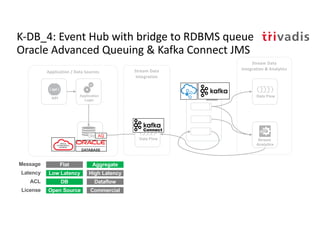

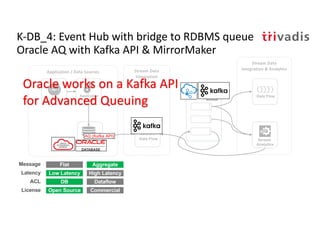

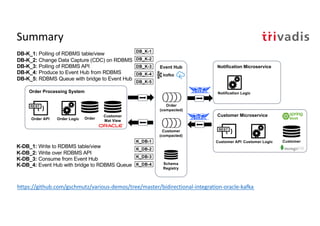

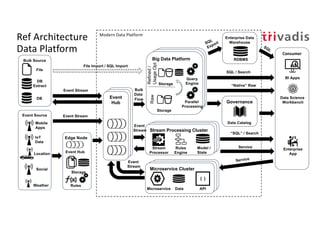

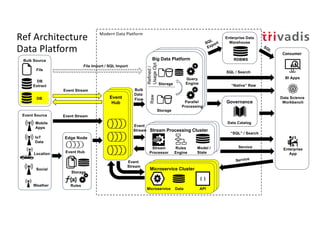

The document presents solutions for bi-directional integration between an Oracle RDBMS and Apache Kafka. It lays out several blueprints and methods for moving data between the two systems. It introduces microservices and modern application architectures, stresses asynchronous communication, and gives practical examples of event-driven architectures. It also covers specific configurations for connecting Oracle databases to Kafka, including change data capture (CDC) and REST APIs.