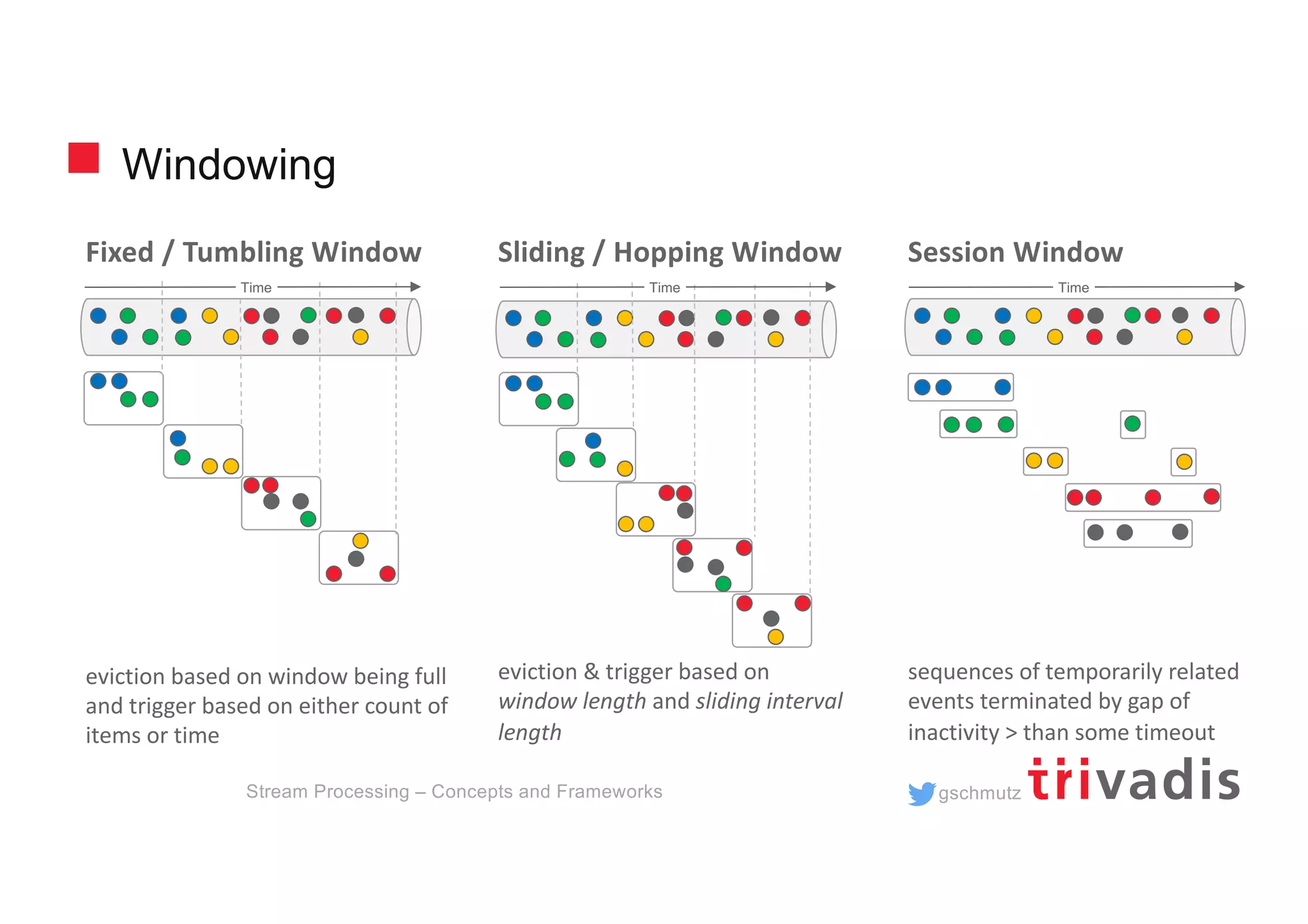

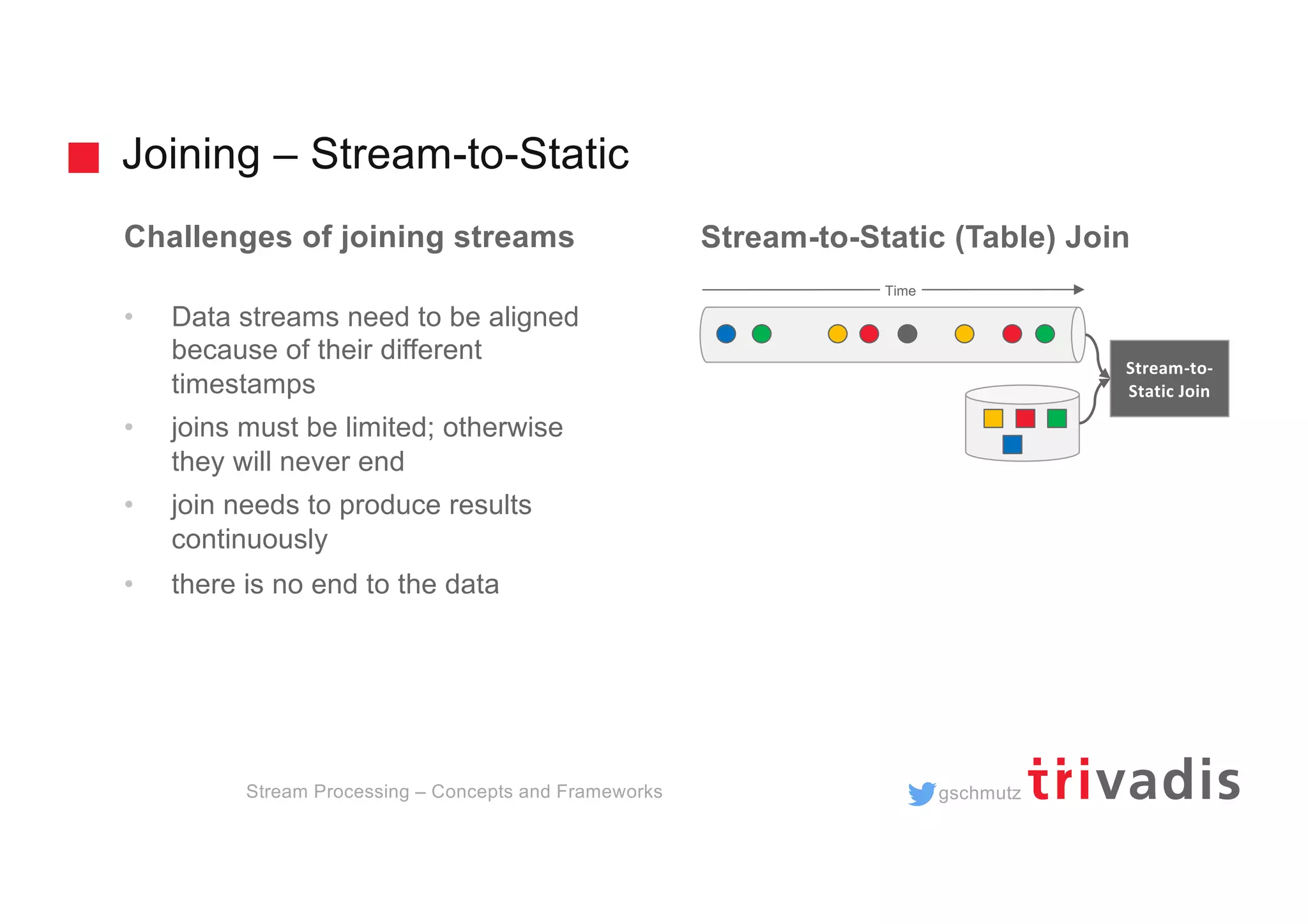

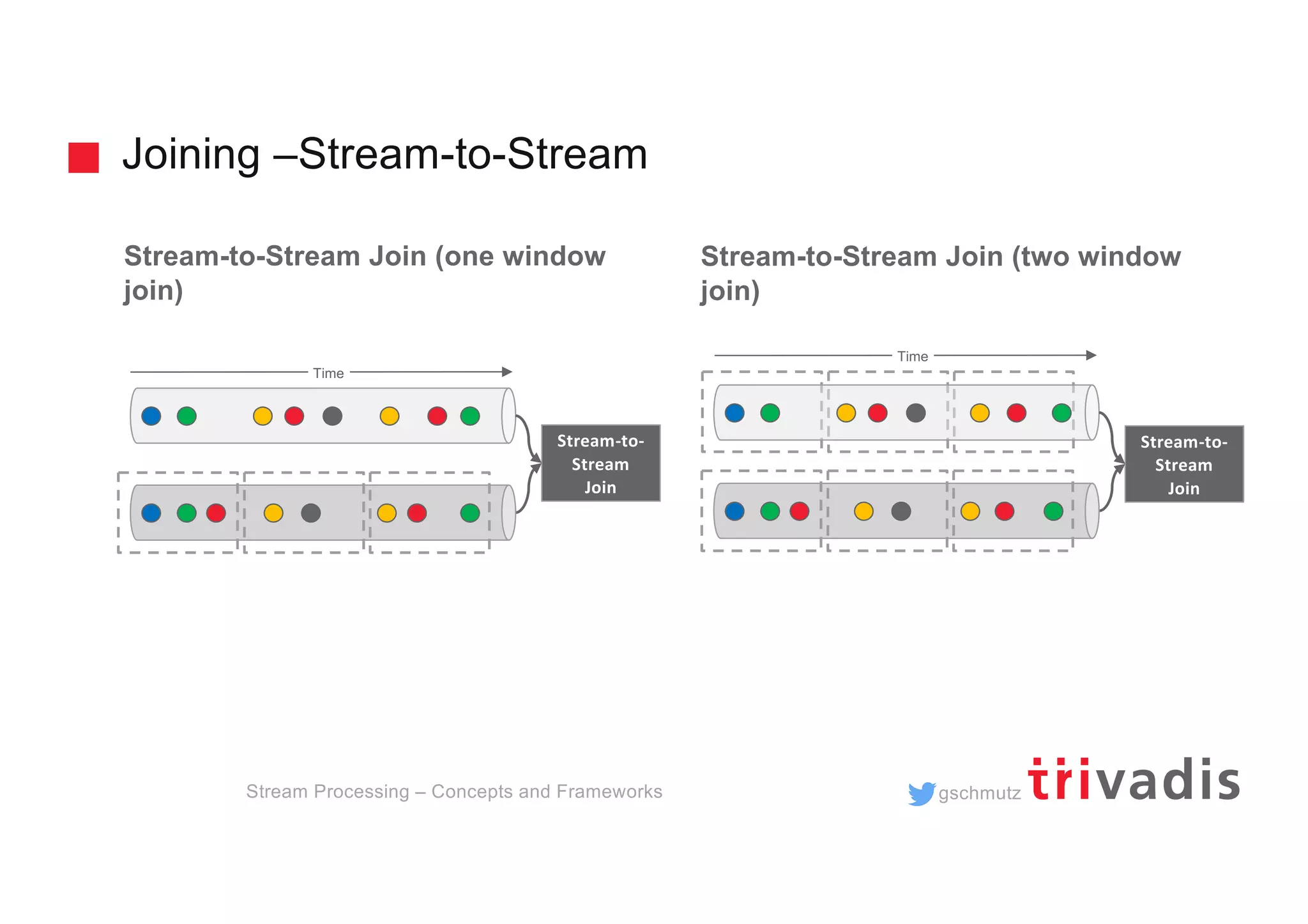

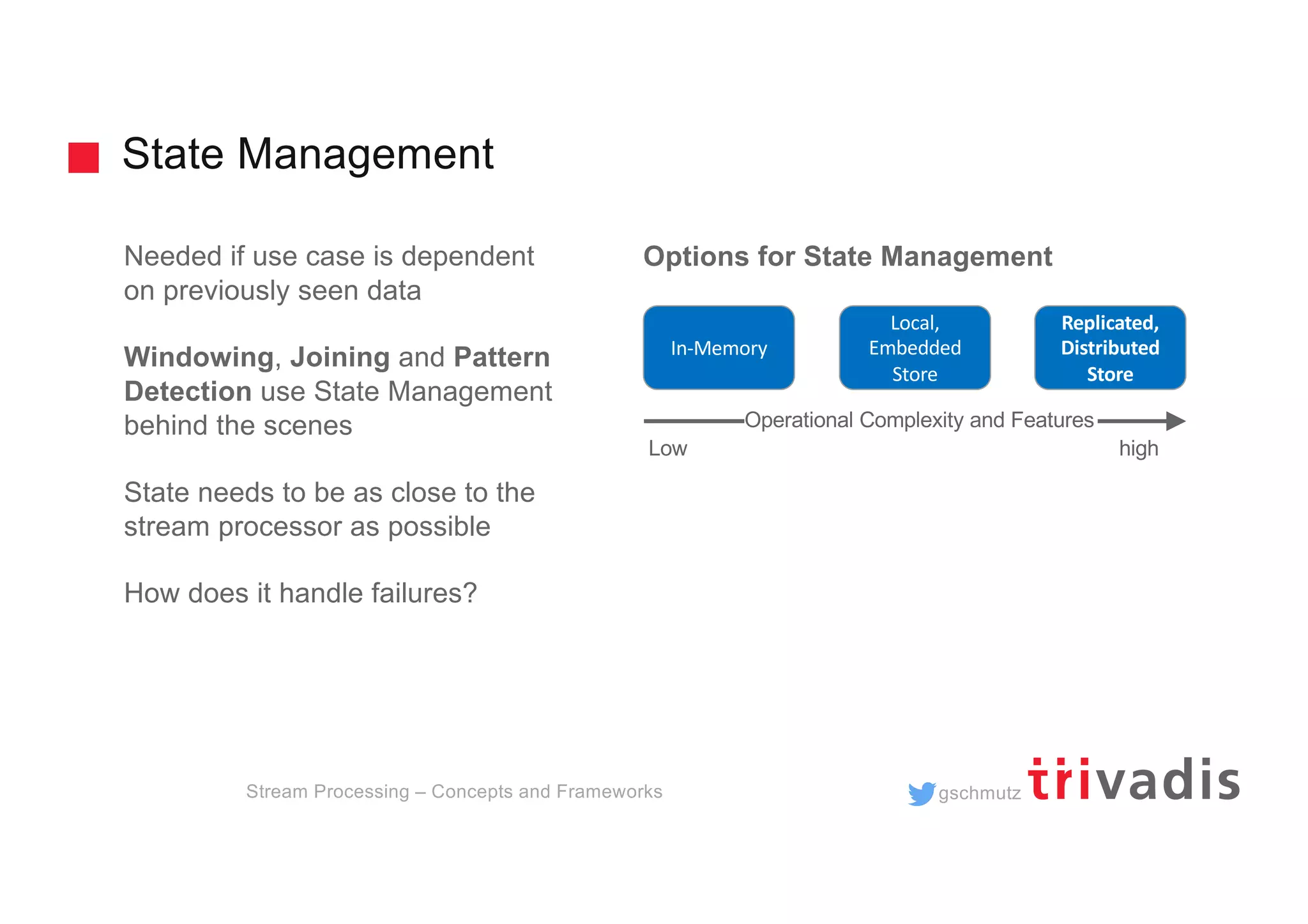

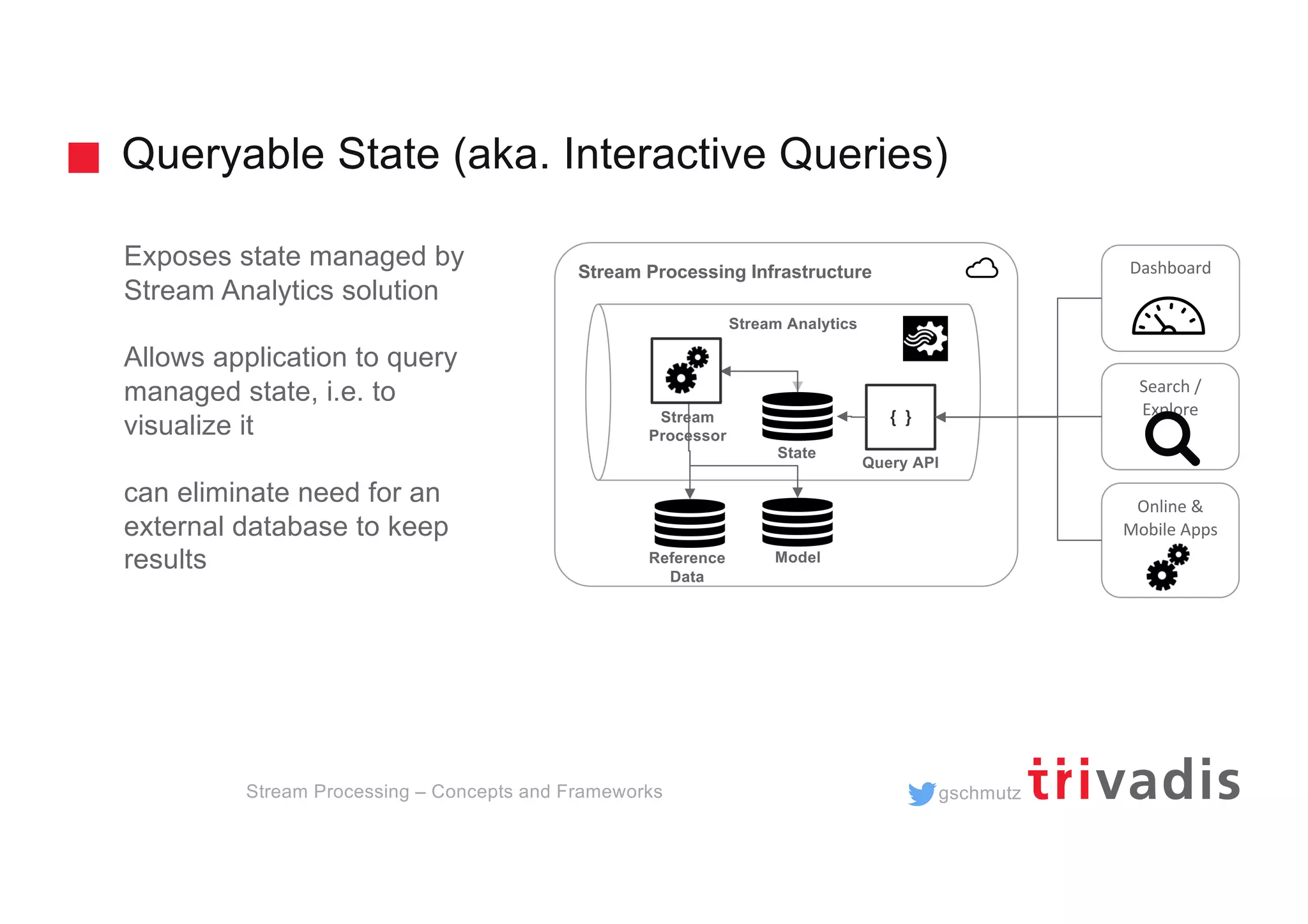

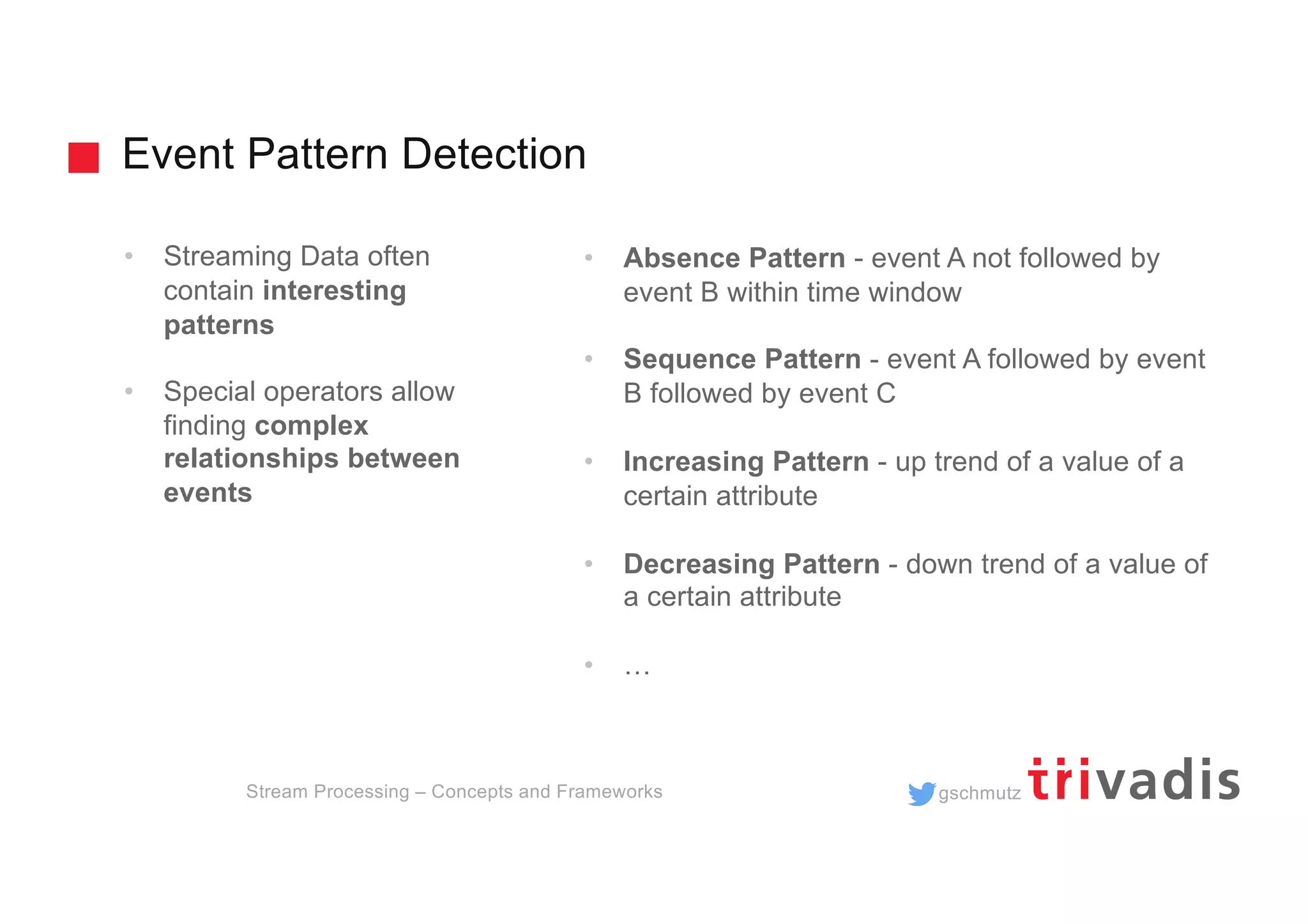

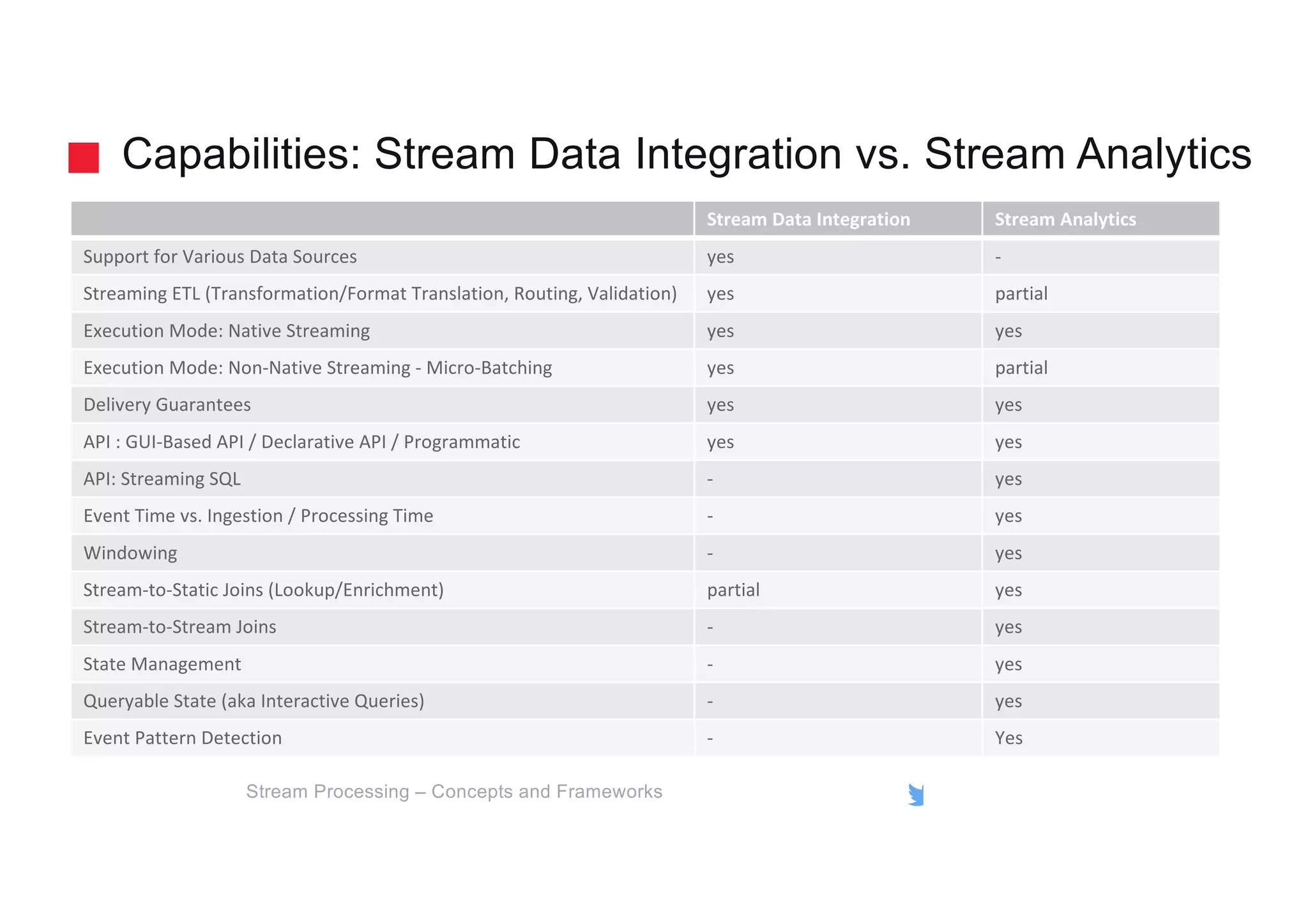

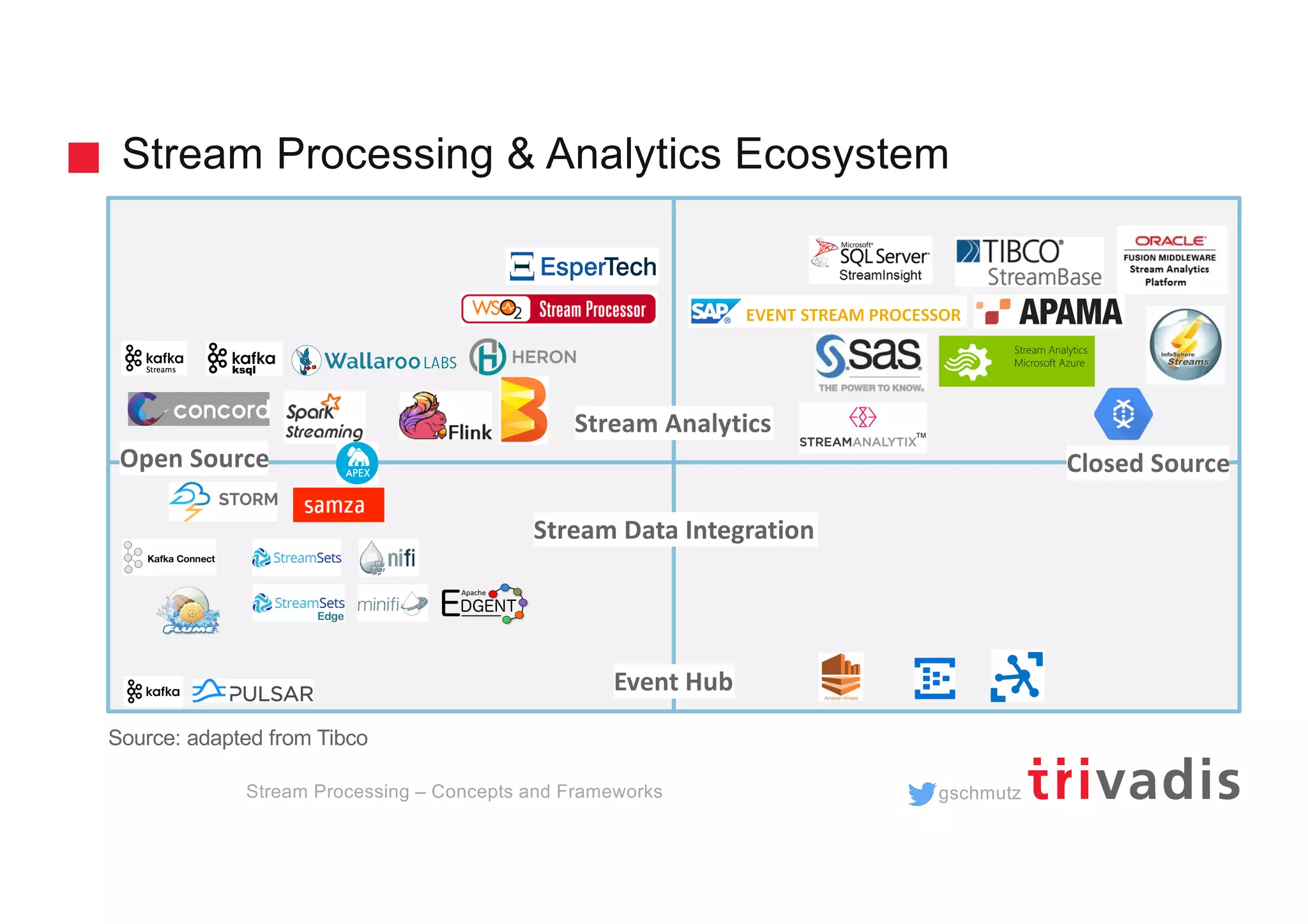

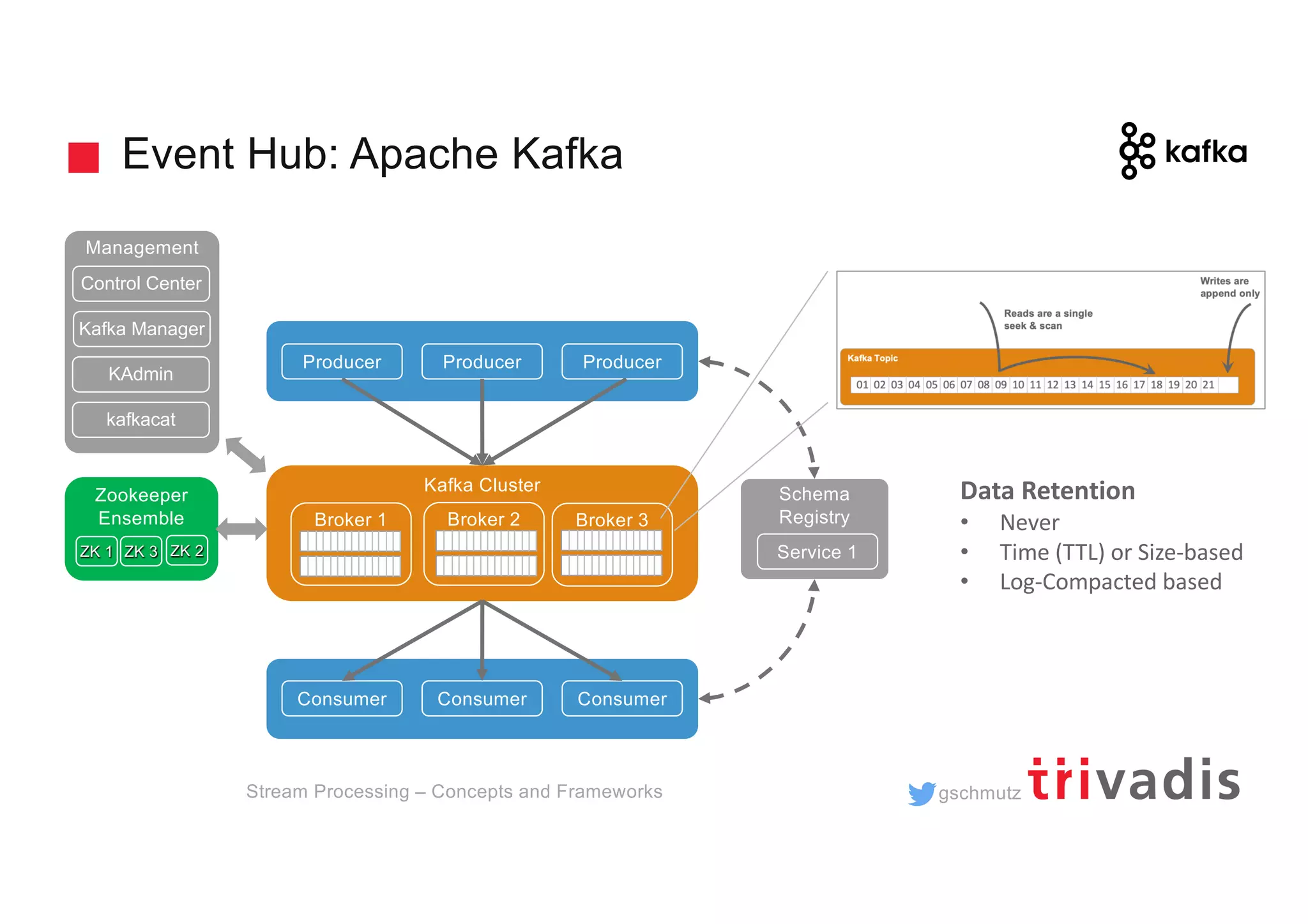

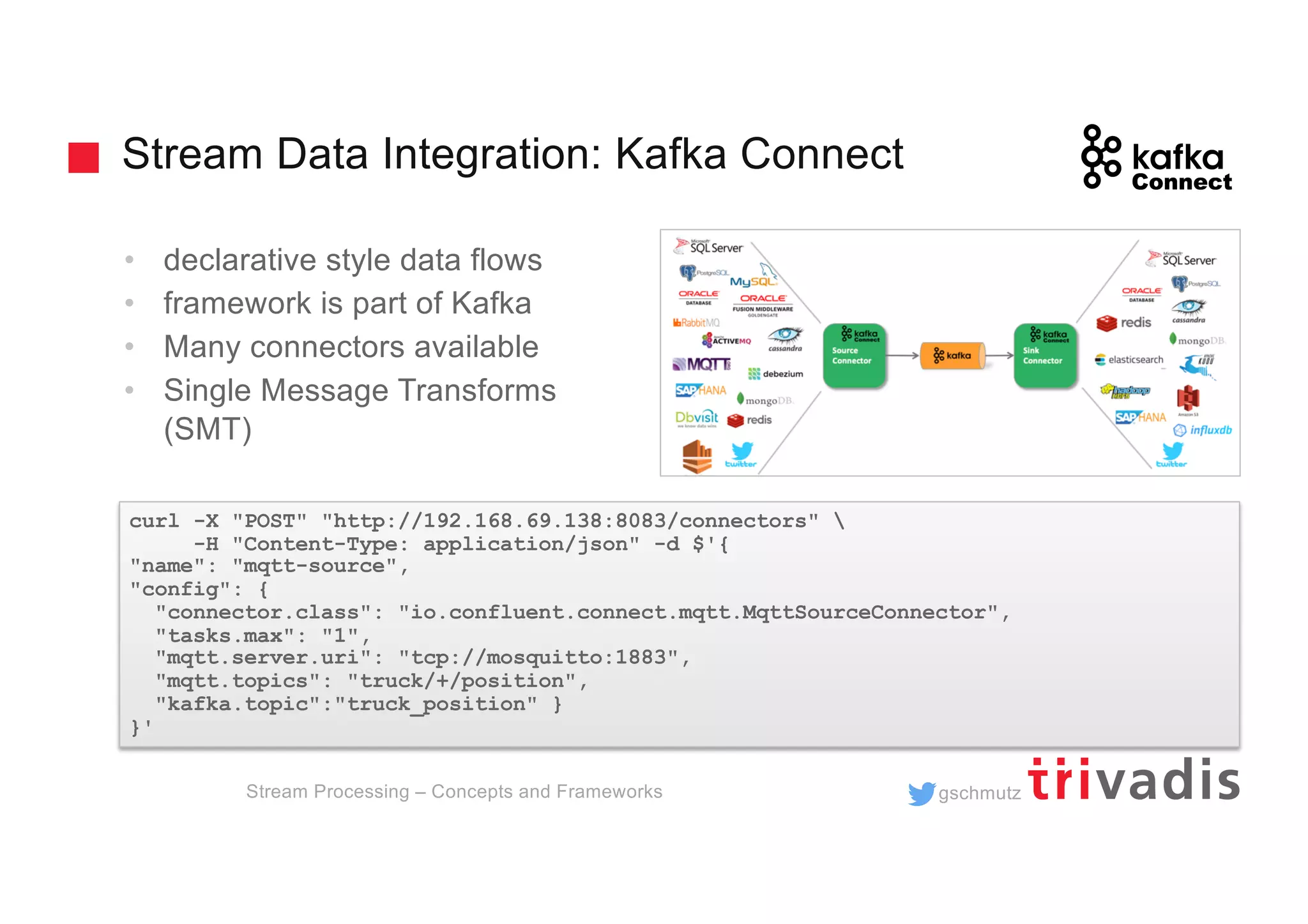

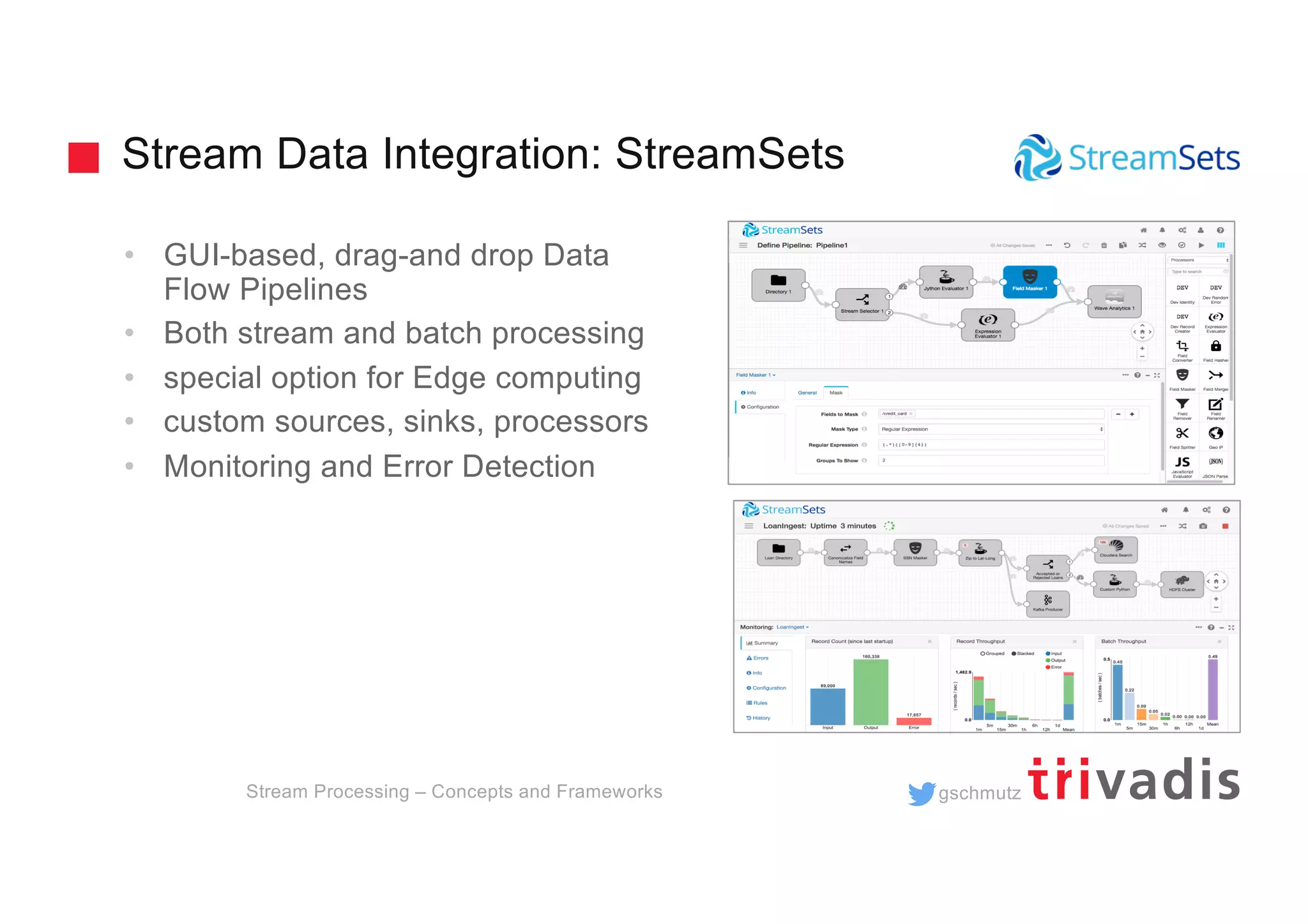

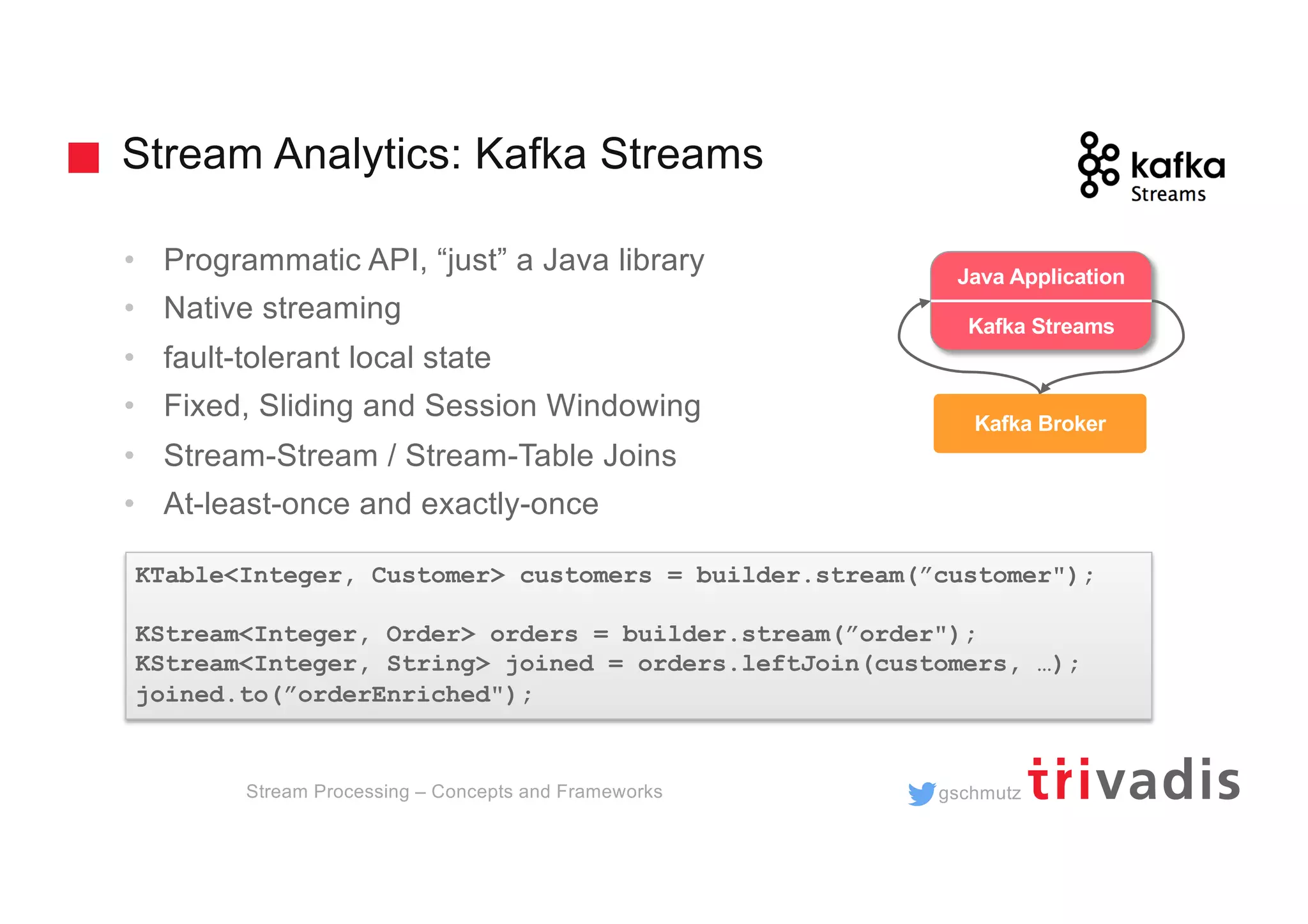

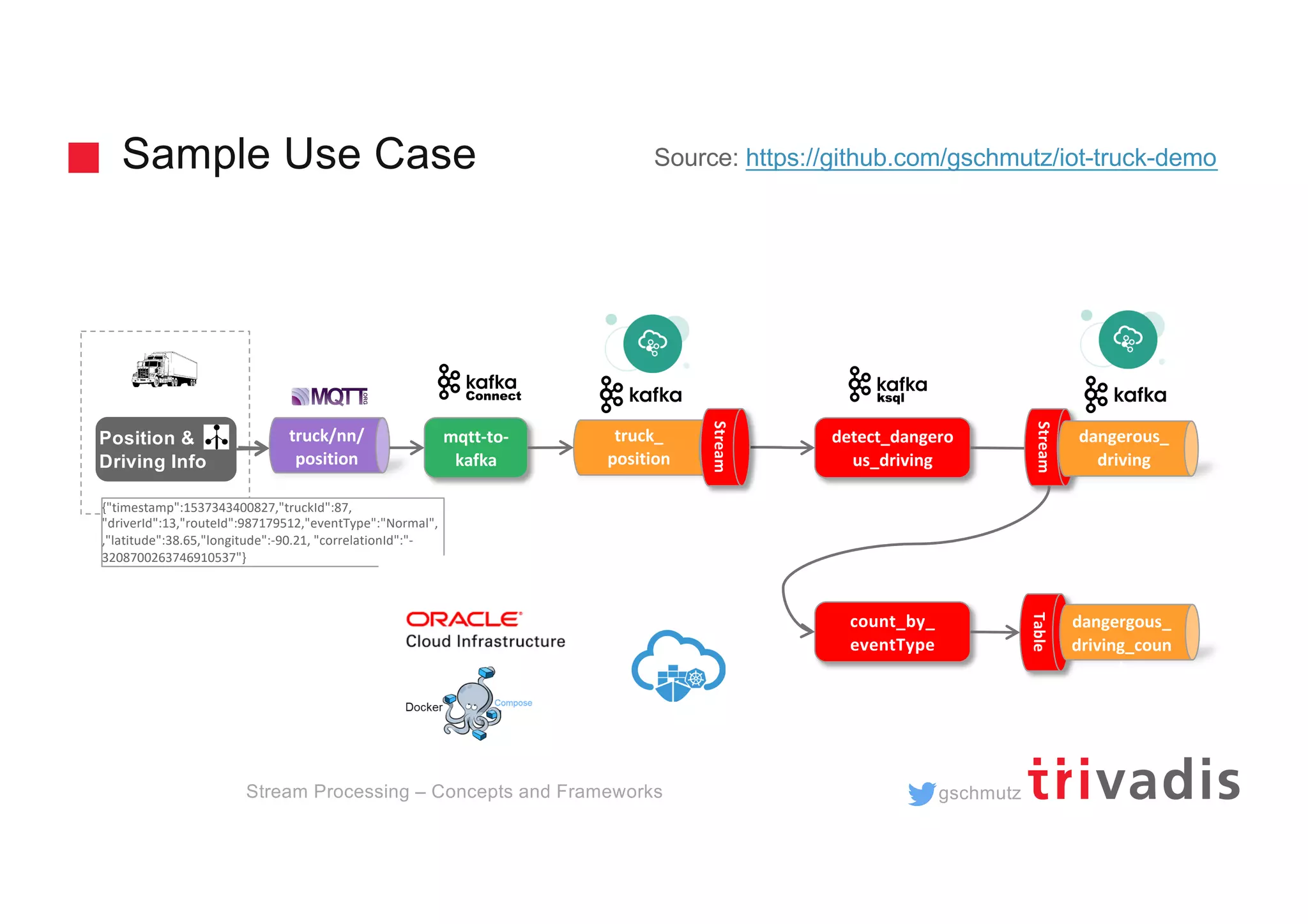

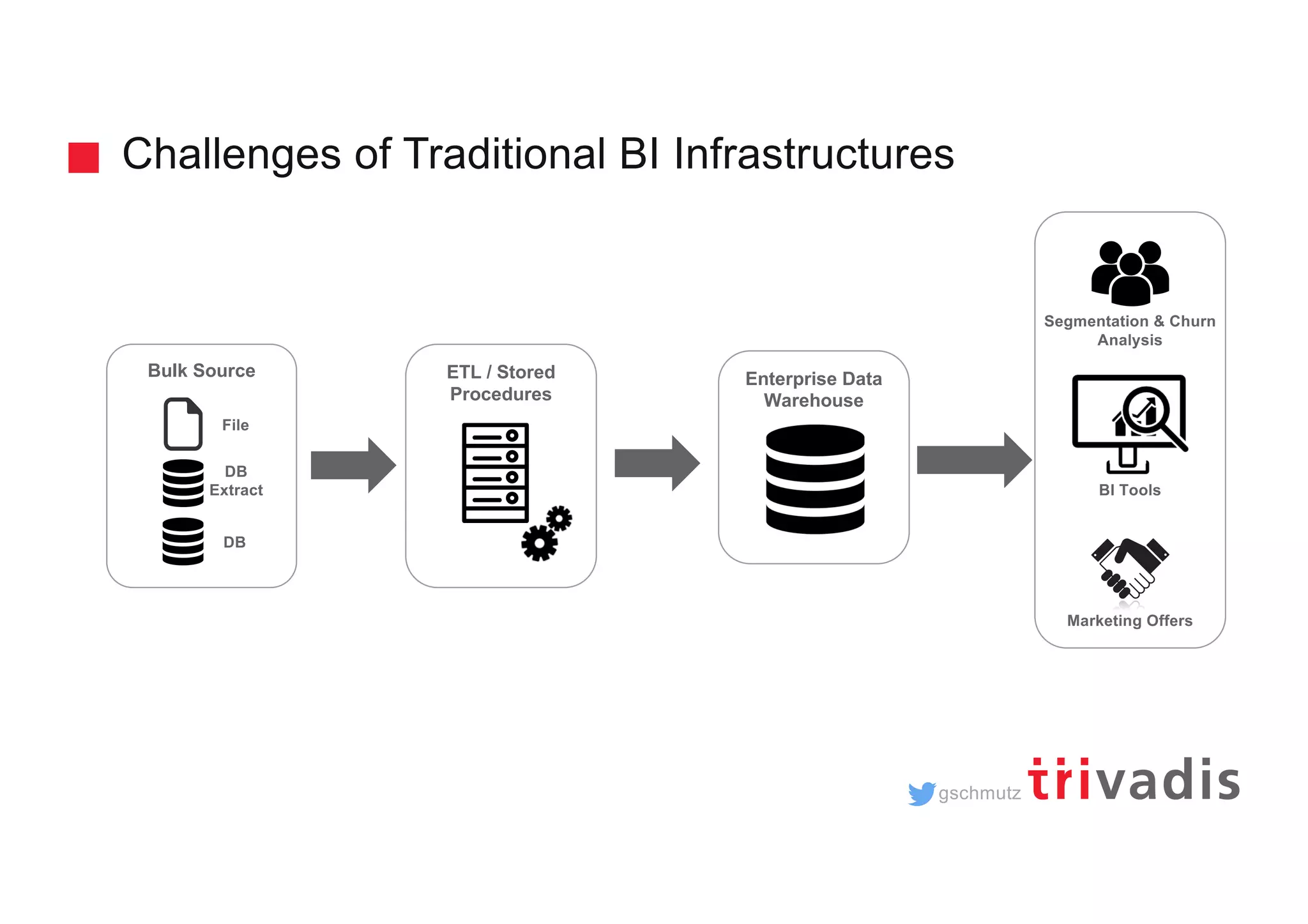

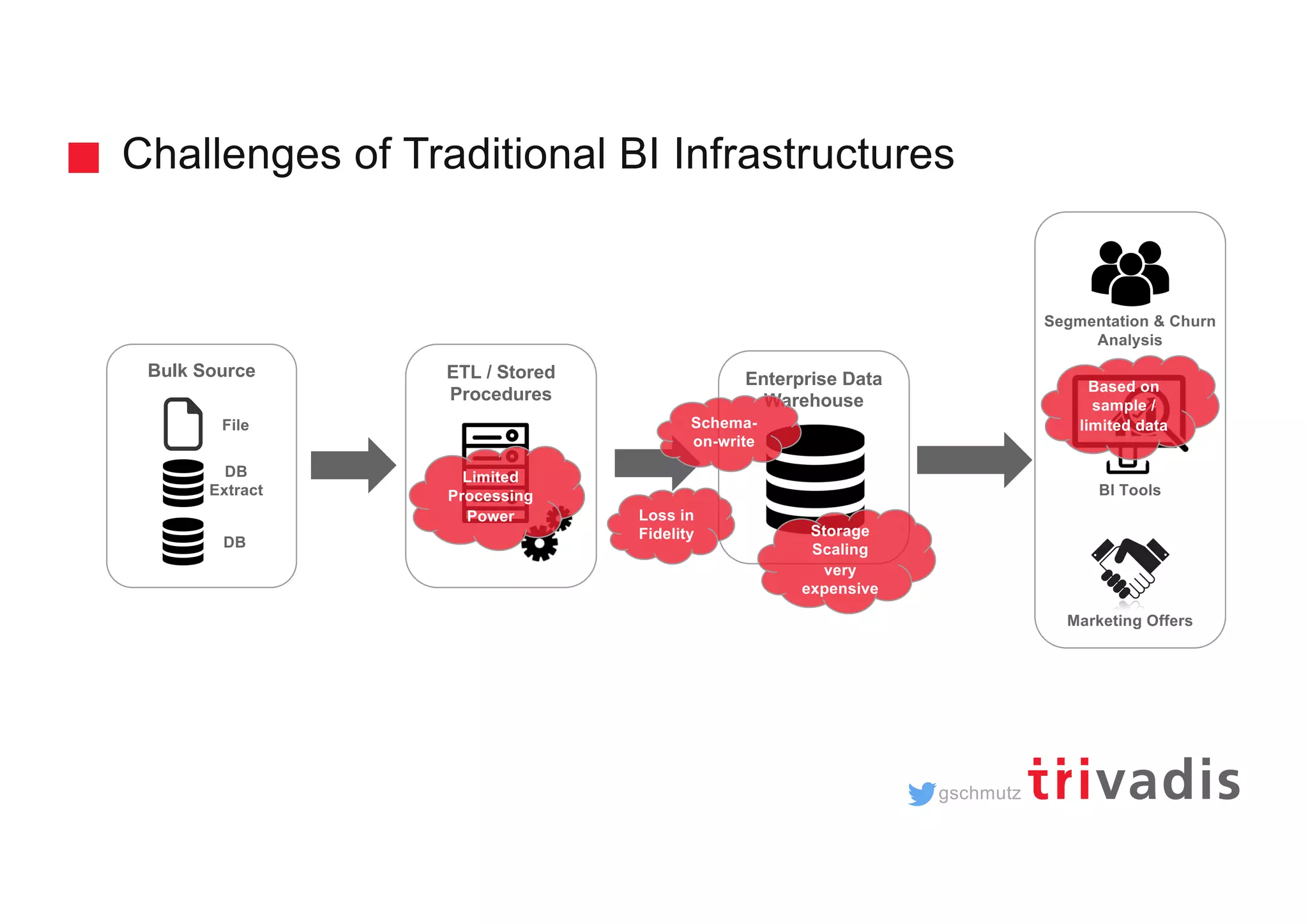

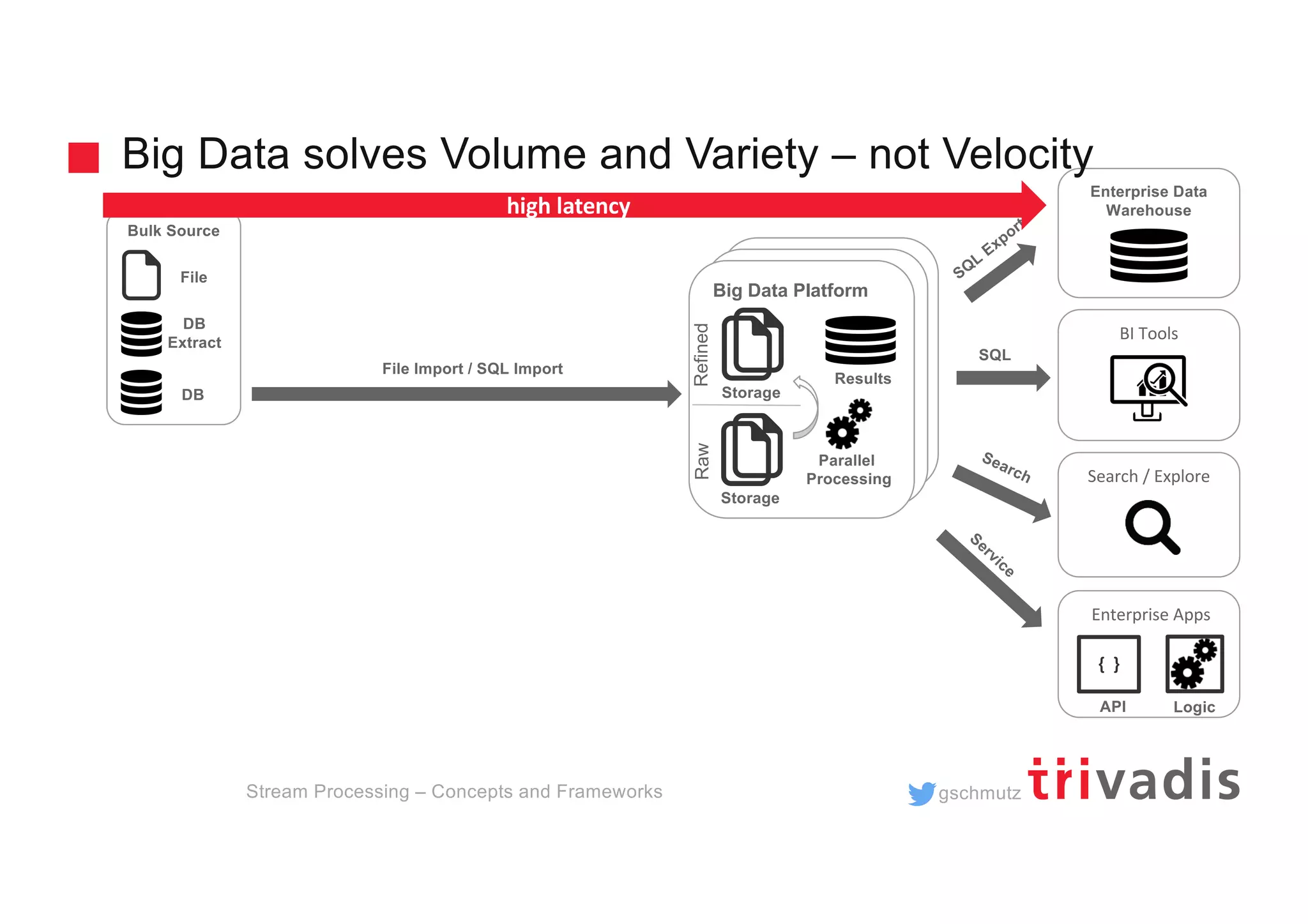

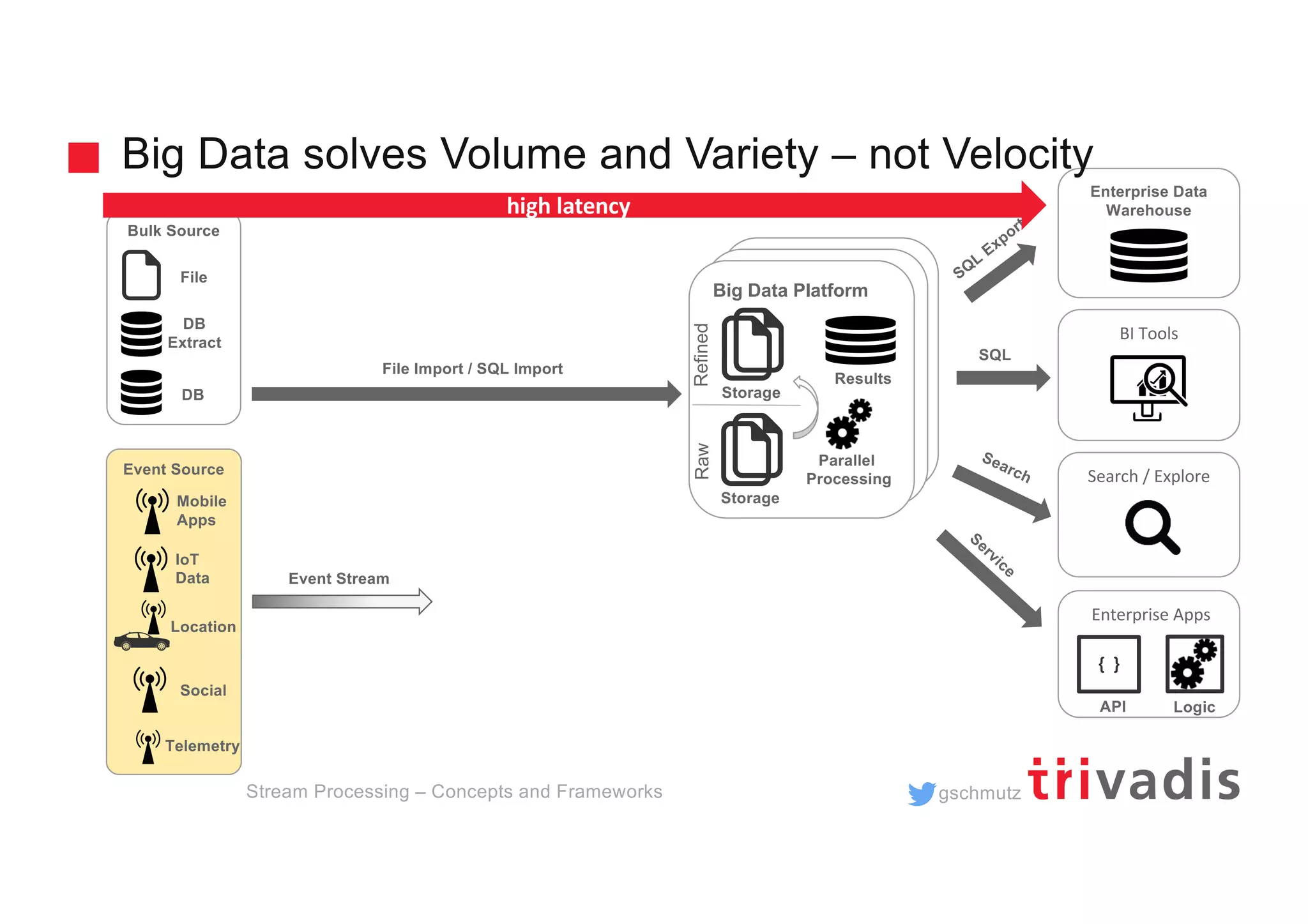

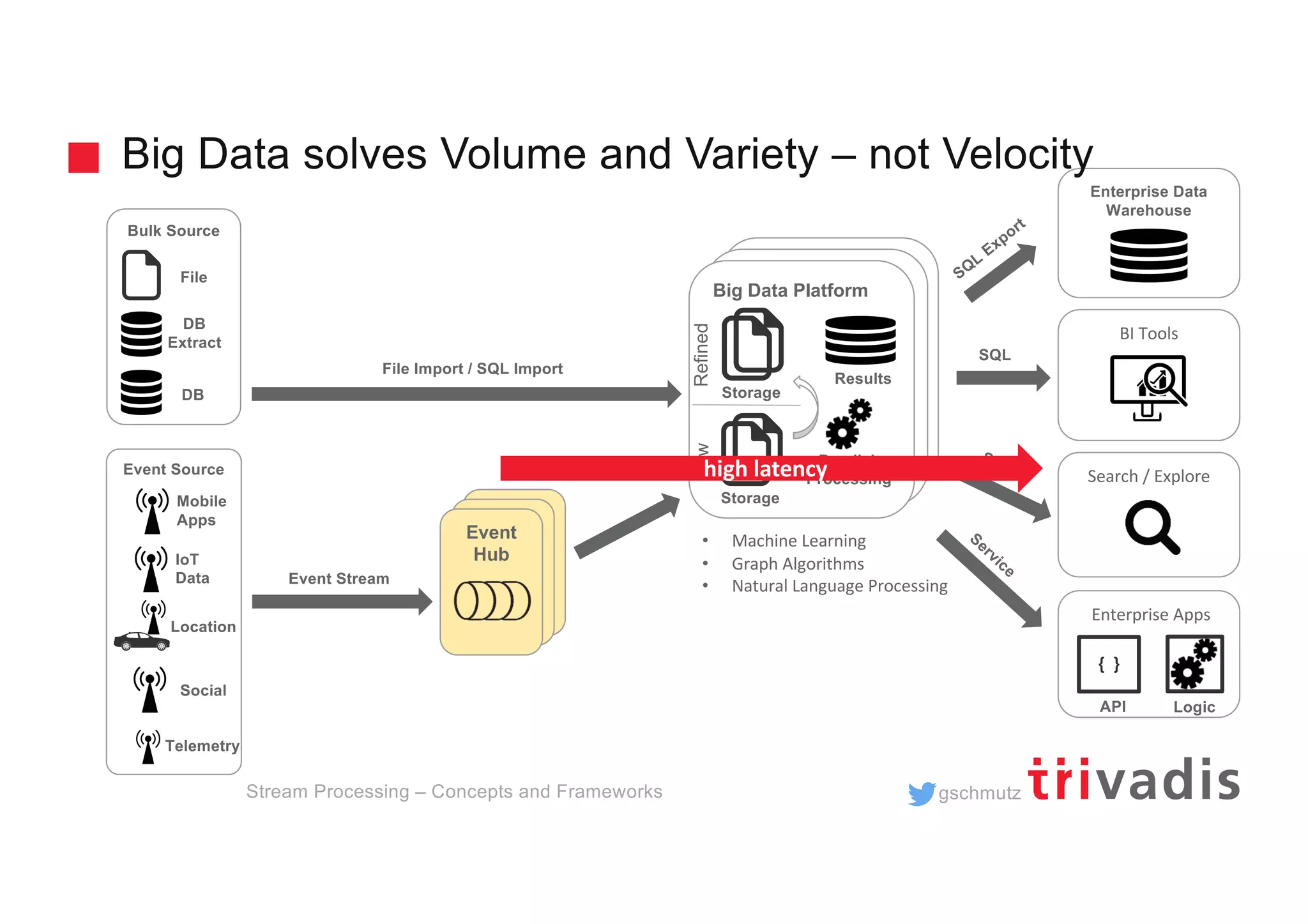

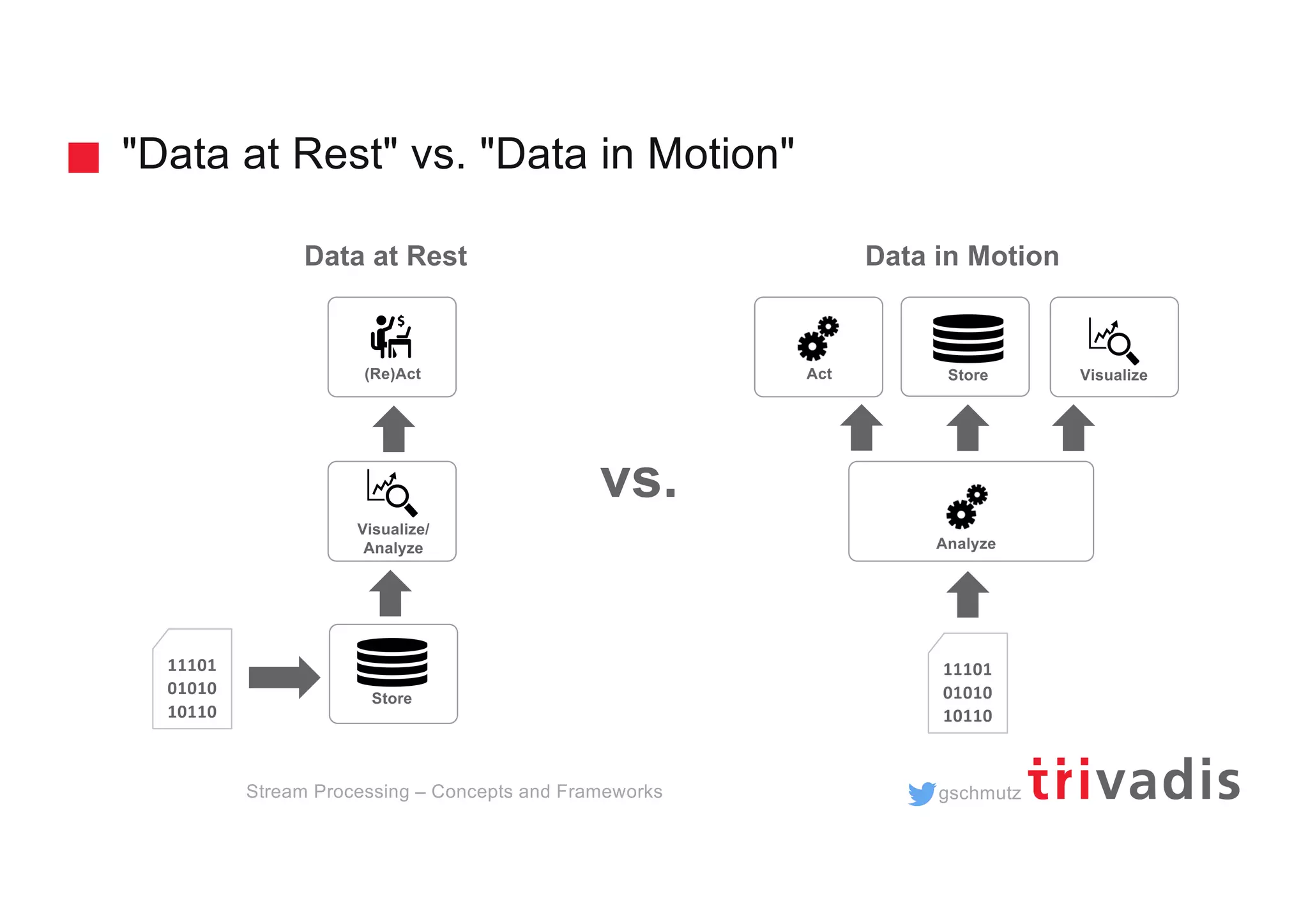

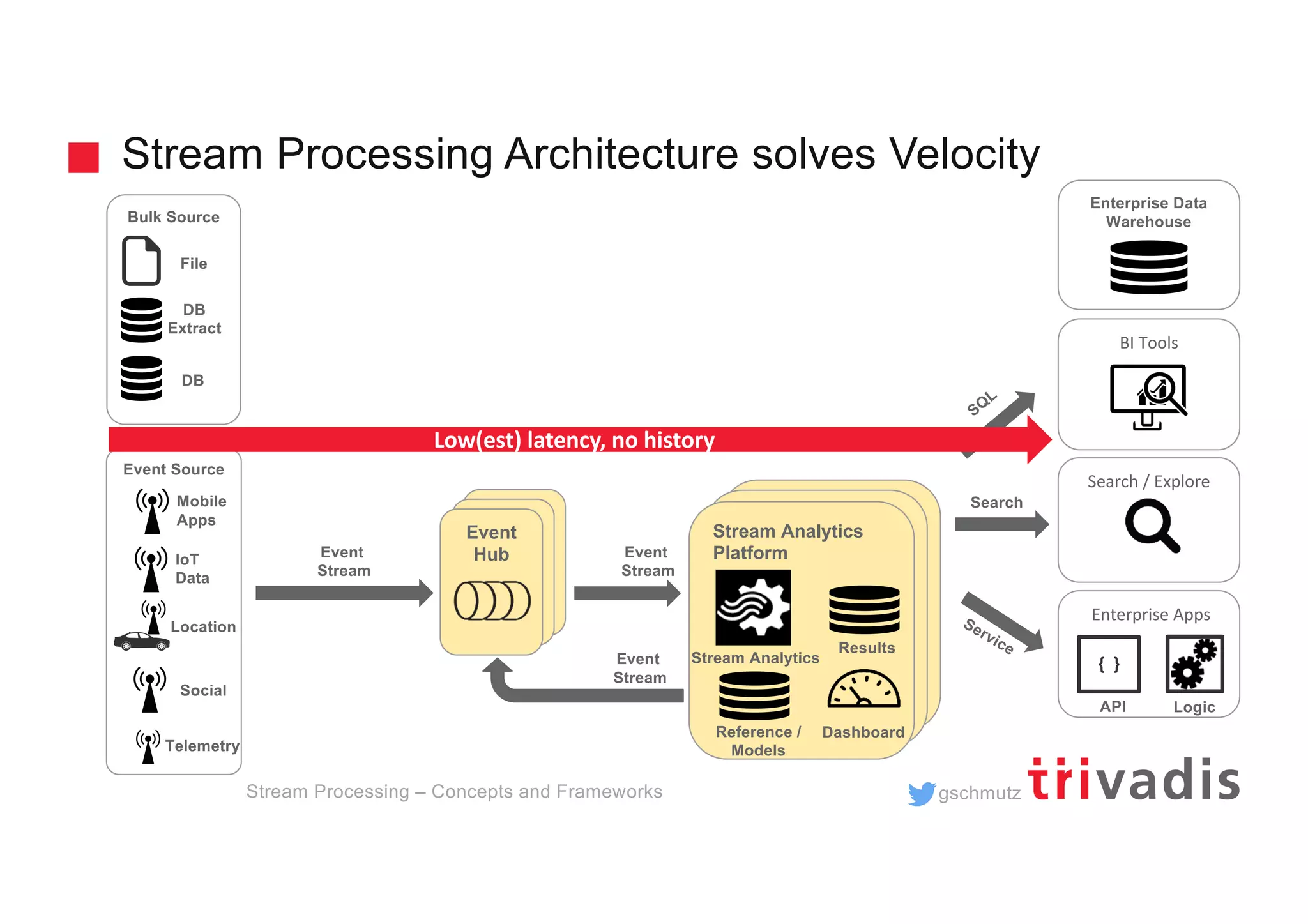

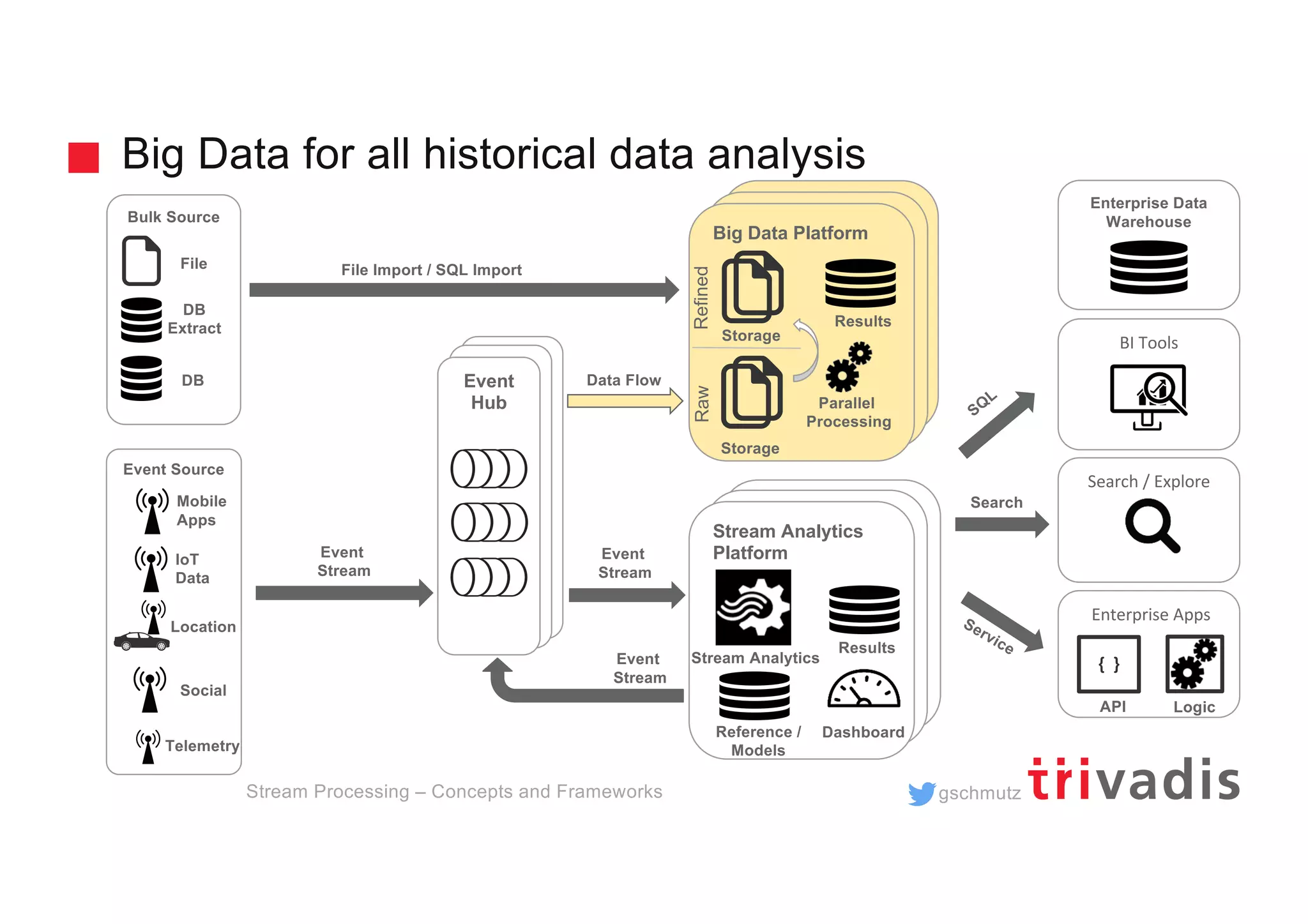

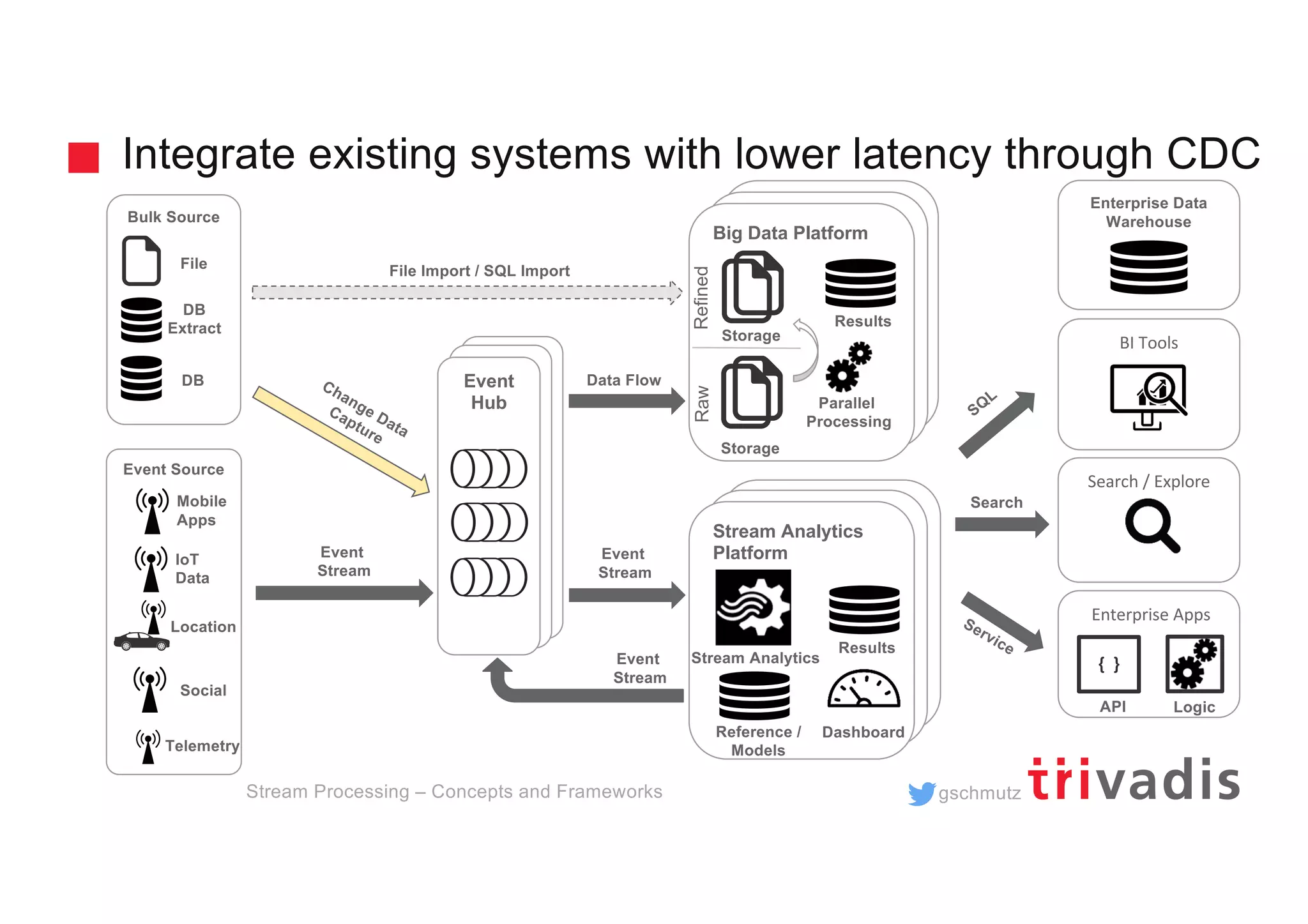

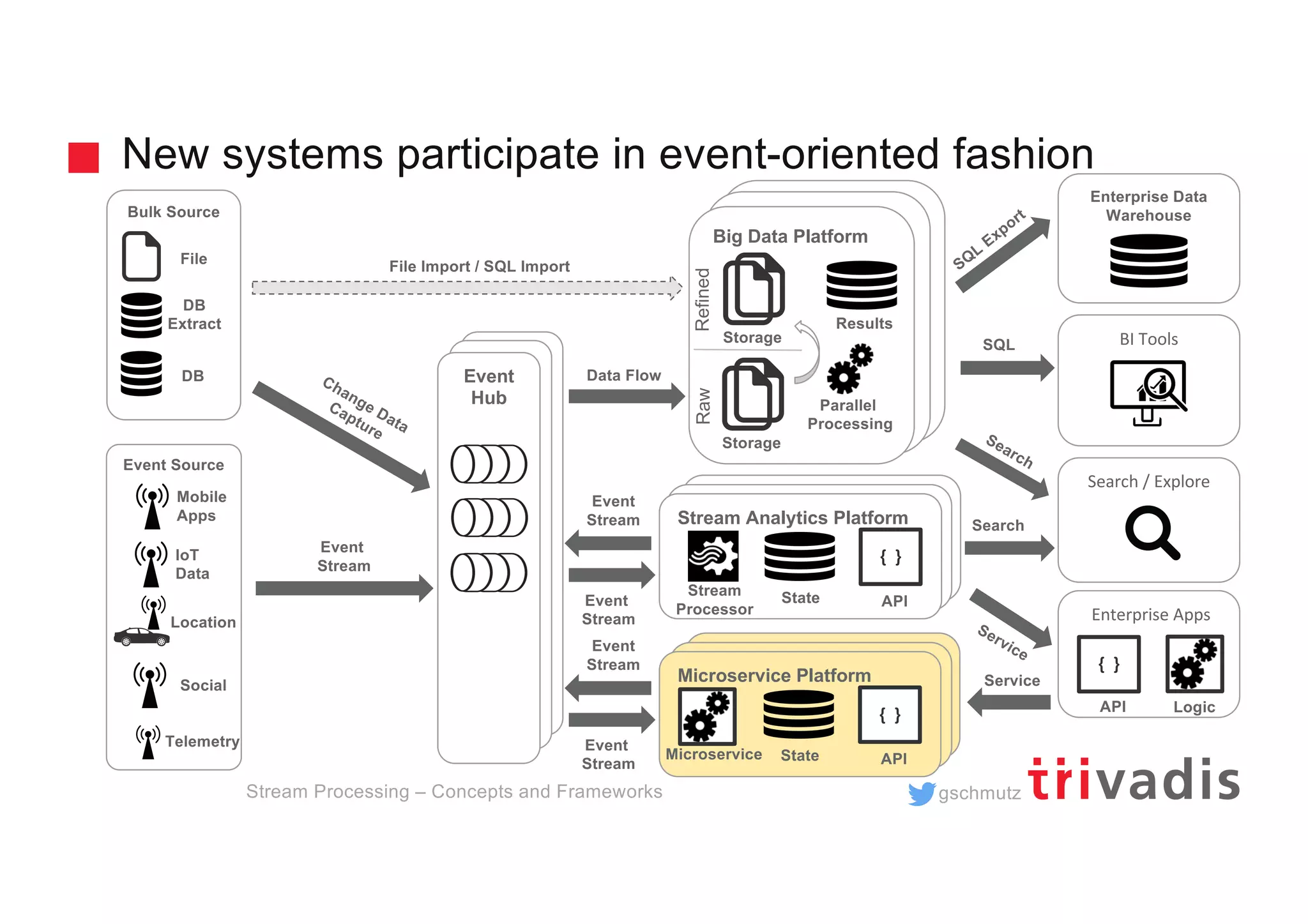

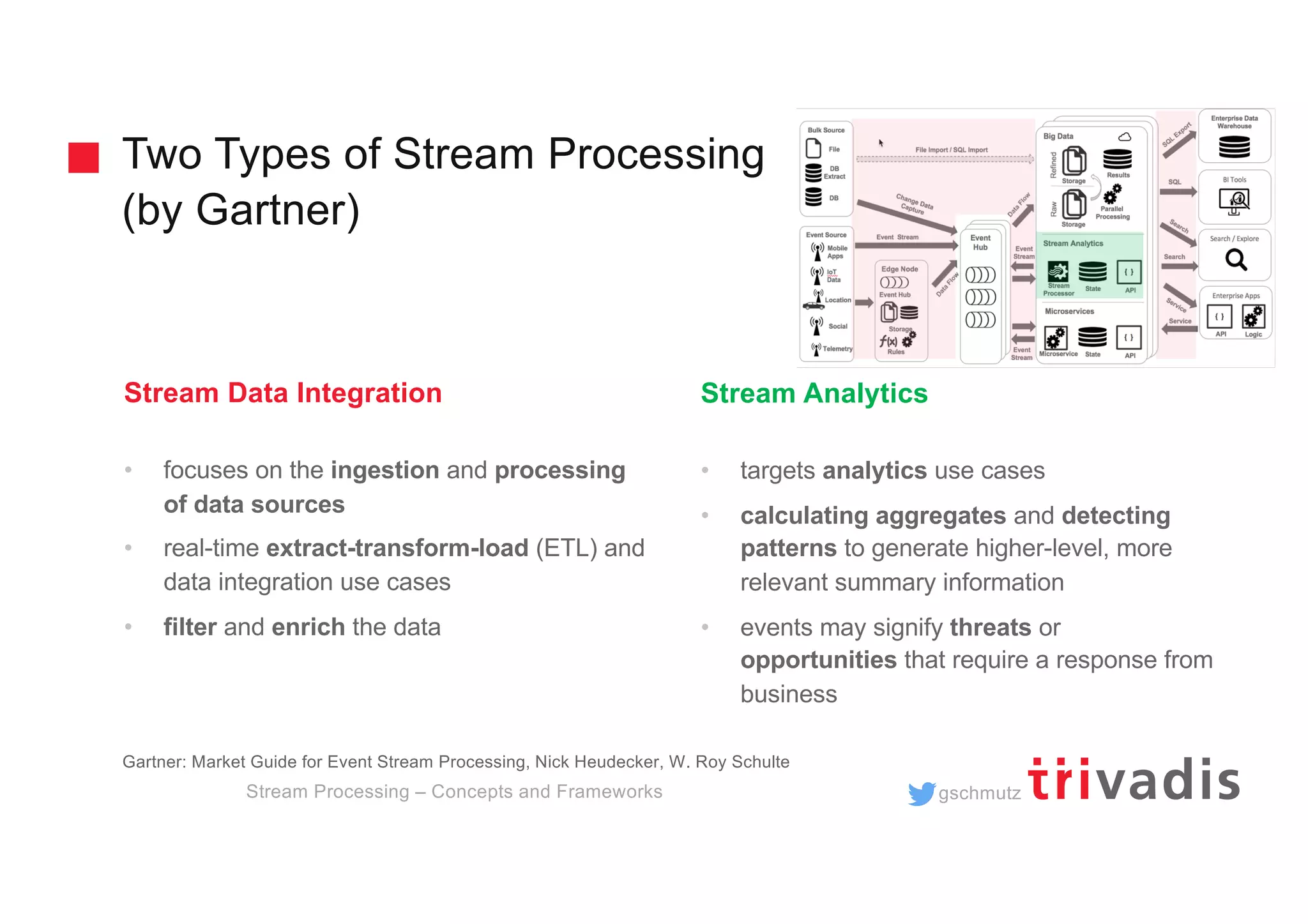

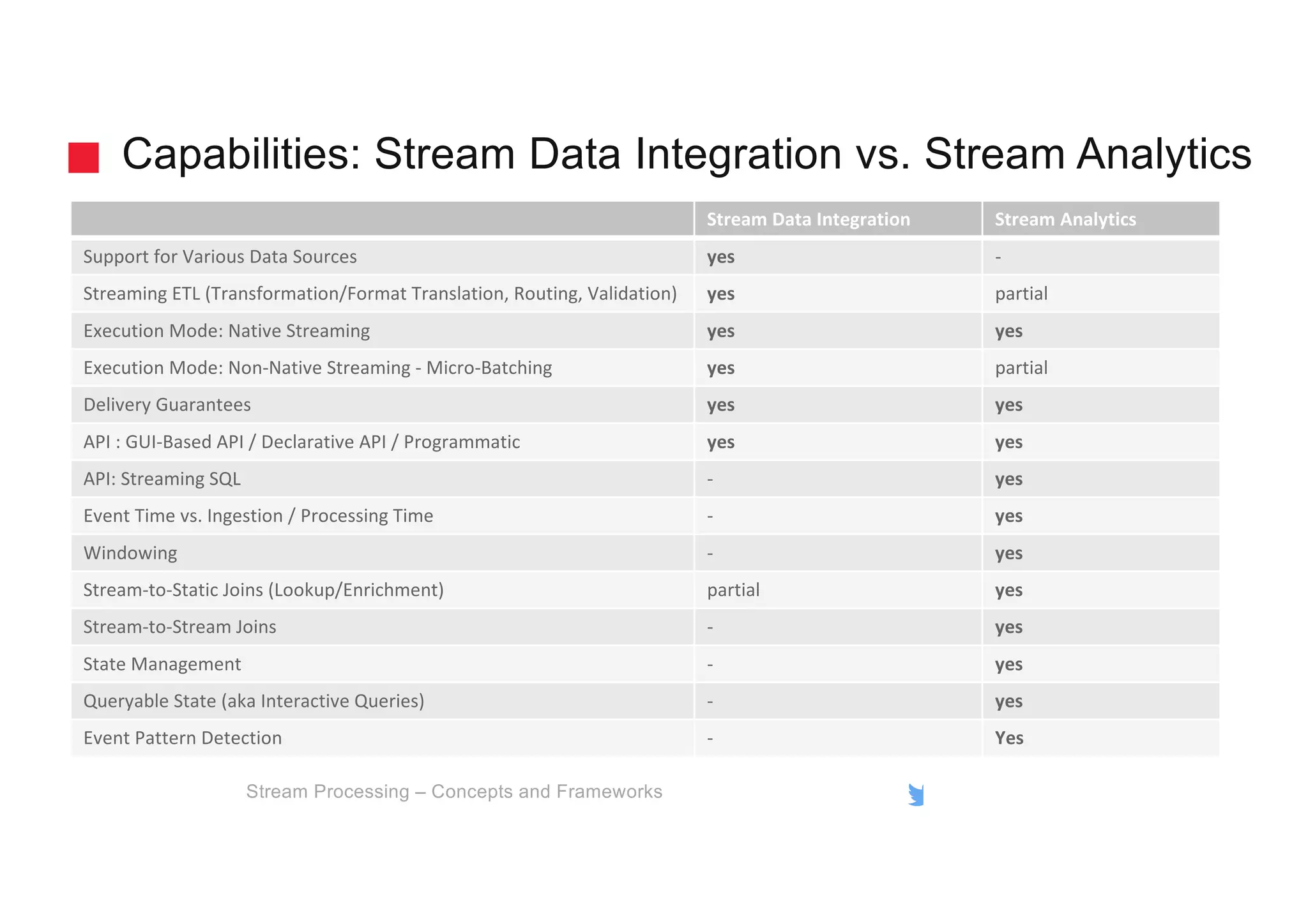

The document discusses the fundamental concepts and frameworks of stream processing, emphasizing low latency and the importance of event hubs, stream data integration, and stream analytics in modern architecture. It covers challenges with traditional BI infrastructures and outlines solutions like Apache Kafka for efficient data processing and integration. Additionally, it highlights critical capabilities of stream processing, including state management, event pattern detection, and different processing modes.

![gschmutz, Delivery Guarantees](https://image.slidesharecdn.com/introduction-to-stream-processing-v1-190522181459/75/Introduction-to-Stream-Processing-23-2048.jpg)

Stream Processing – Concepts and Frameworks

Delivery Guarantees

- At most once (fire-and-forget) [ 0 | 1 ]: the message is sent, but the sender doesn't care if it's received or lost.
- At least once [ 1+ ]: retransmission of messages can cause a message to be sent one or more times.
- Exactly once [ 1 ]: ensures that a message is received once and only once (never lost and never repeated).
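The three guarantees above can be illustrated with a small, self-contained simulation. This is only a sketch under stated assumptions: `Channel`, `Receiver`, and the `send_*` helpers are hypothetical names invented for this example and are not part of any framework mentioned in the slides. Message and acknowledgement loss are scripted by attempt number so the outcome is deterministic. "Exactly once" is approximated here by combining at-least-once retries with a deduplicating receiver, which is one common way to get the effect, not necessarily how a given system implements it.

```python
from dataclasses import dataclass, field


@dataclass
class Receiver:
    """Hypothetical receiver; optionally drops duplicates by message id."""
    deduplicate: bool = False
    seen: set = field(default_factory=set)
    processed: list = field(default_factory=list)

    def receive(self, msg_id, payload):
        if self.deduplicate and msg_id in self.seen:
            return  # duplicate: accept it, but do not process it again
        self.seen.add(msg_id)
        self.processed.append(payload)


@dataclass
class Channel:
    """Hypothetical unreliable channel with scripted failures (assumption)."""
    lose_message_on: set = field(default_factory=set)  # attempts where the message is lost
    lose_ack_on: set = field(default_factory=set)      # attempts where only the ack is lost
    attempts: int = 0

    def send(self, receiver, msg_id, payload):
        """Return True only if the sender sees an acknowledgement."""
        self.attempts += 1
        if self.attempts in self.lose_message_on:
            return False
        receiver.receive(msg_id, payload)
        return self.attempts not in self.lose_ack_on


def send_at_most_once(channel, receiver, msg_id, payload):
    # Fire-and-forget: send once and ignore whether it arrived.
    channel.send(receiver, msg_id, payload)


def send_at_least_once(channel, receiver, msg_id, payload, max_retries=5):
    # Retransmit until an acknowledgement is seen (or retries run out).
    for _ in range(max_retries):
        if channel.send(receiver, msg_id, payload):
            return True
    return False


def demo():
    results = {}

    r = Receiver()
    send_at_most_once(Channel(lose_message_on={1}), r, 1, "a")
    results["at_most_once"] = list(r.processed)    # message lost: processed 0 times

    r = Receiver()
    send_at_least_once(Channel(lose_ack_on={1}), r, 1, "a")
    results["at_least_once"] = list(r.processed)   # ack lost, resent: processed twice

    r = Receiver(deduplicate=True)
    send_at_least_once(Channel(lose_ack_on={1}), r, 1, "a")
    results["exactly_once"] = list(r.processed)    # resent, but deduplicated: processed once

    return results


if __name__ == "__main__":
    for mode, processed in demo().items():
        print(mode, processed)
```

In this sketch the only difference between the at-least-once and exactly-once runs is the `deduplicate` flag on the receiver: the sender behaves identically, and the duplicate caused by the lost acknowledgement is filtered on the receiving side.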